Joonmyeong Choi

Read-only Prompt Optimization for Vision-Language Few-shot Learning

Aug 29, 2023

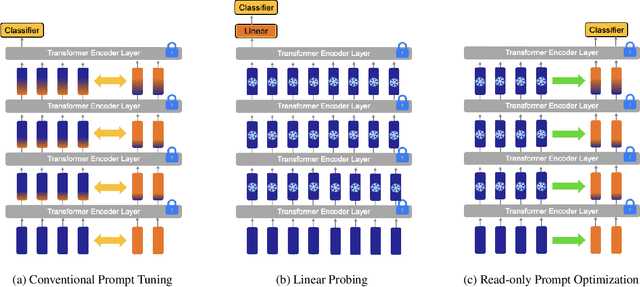

Abstract:In recent years, prompt tuning has proven effective in adapting pre-trained vision-language models to downstream tasks. These methods aim to adapt the pre-trained models by introducing learnable prompts while keeping pre-trained weights frozen. However, learnable prompts can affect the internal representation within the self-attention module, which may negatively impact performance variance and generalization, especially in data-deficient settings. To address these issues, we propose a novel approach, Read-only Prompt Optimization (RPO). RPO leverages masked attention to prevent the internal representation shift in the pre-trained model. Further, to facilitate the optimization of RPO, the read-only prompts are initialized based on special tokens of the pre-trained model. Our extensive experiments demonstrate that RPO outperforms CLIP and CoCoOp in base-to-new generalization and domain generalization while displaying better robustness. Also, the proposed method achieves better generalization on extremely data-deficient settings, while improving parameter efficiency and computational overhead. Code is available at https://github.com/mlvlab/RPO.

Fully Automated Hand Hygiene Monitoring\\in Operating Room using 3D Convolutional Neural Network

Mar 20, 2020

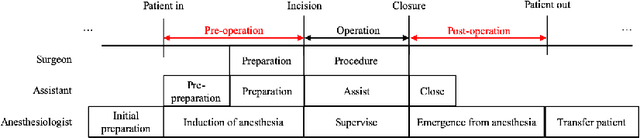

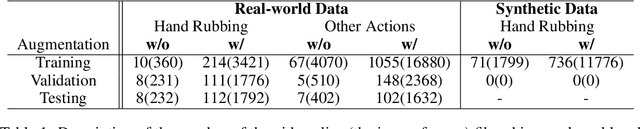

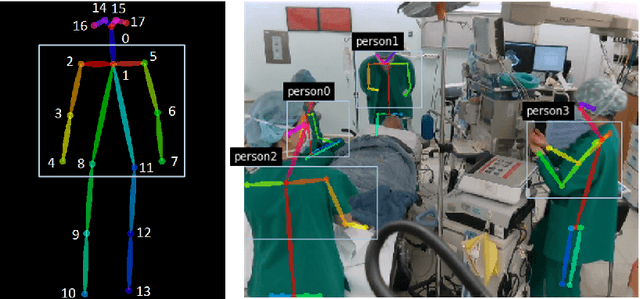

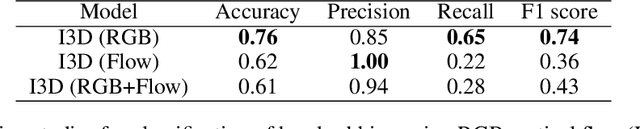

Abstract:Hand hygiene is one of the most significant factors in preventing hospital acquired infections (HAI) which often be transmitted by medical staffs in contact with patients in the operating room (OR). Hand hygiene monitoring could be important to investigate and reduce the outbreak of infections within the OR. However, an effective monitoring tool for hand hygiene compliance is difficult to develop due to the visual complexity of the OR scene. Recent progress in video understanding with convolutional neural net (CNN) has increased the application of recognition and detection of human actions. Leveraging this progress, we proposed a fully automated hand hygiene monitoring tool of the alcohol-based hand rubbing action of anesthesiologists on OR video using spatio-temporal features with 3D CNN. First, the region of interest (ROI) of anesthesiologists' upper body were detected and cropped. A temporal smoothing filter was applied to the ROIs. Then, the ROIs were given to a 3D CNN and classified into two classes: rubbing hands or other actions. We observed that a transfer learning from Kinetics-400 is beneficial and the optical flow stream was not helpful in our dataset. The final accuracy, precision, recall and F1 score in testing is 0.76, 0.85, 0.65 and 0.74, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge