Johannes Nauta

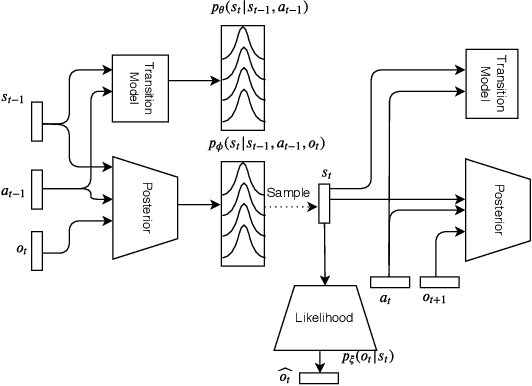

Learning Perception and Planning with Deep Active Inference

Feb 24, 2020

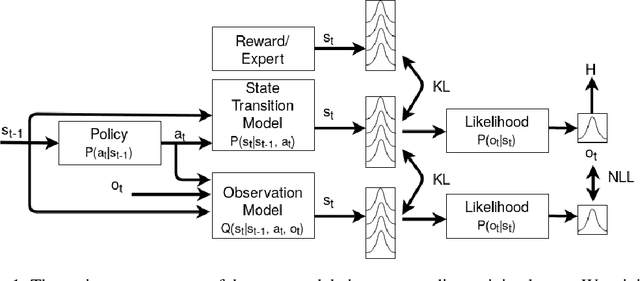

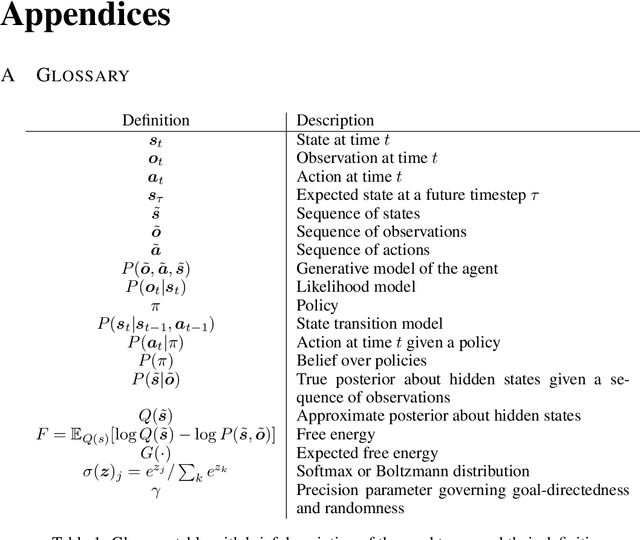

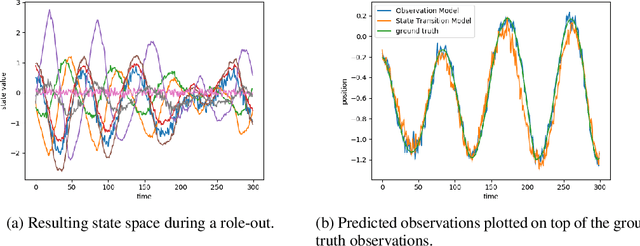

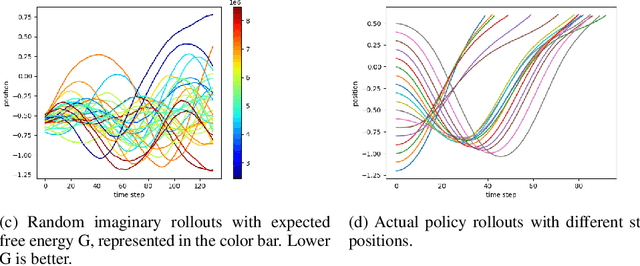

Abstract: Active inference is a process theory of the brain that states that all living organisms infer actions in order to minimize their (expected) free energy. However, current experiments are limited to predefined, often discrete, state spaces. In this paper, we use recent advances in deep learning to learn the state space and approximate the necessary probability distributions to engage in active inference.

Bayesian policy selection using active inference

Apr 25, 2019

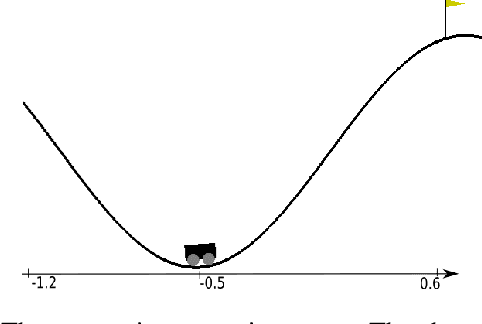

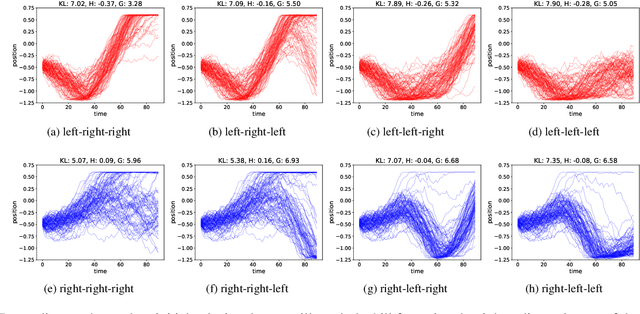

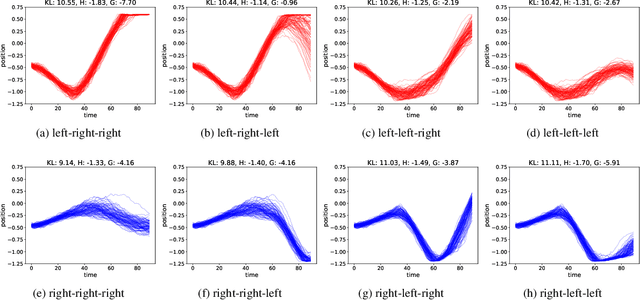

Abstract: Learning to take actions based on observations is a core requirement for artificial agents to be successful and robust at their task. Reinforcement Learning (RL) is a well-known technique for learning such policies. However, current RL algorithms often have to deal with reward shaping, have difficulty generalizing to other environments, and are often sample-inefficient. In this paper, we explore active inference and the free energy principle, a normative theory from neuroscience that explains how self-organizing biological systems operate by maintaining a model of the world and casting action selection as an inference problem. We apply this concept to a typical problem known to the RL community, the mountain car problem, and show how active inference encompasses both RL and learning from demonstrations.
