João Madeiras Pereira

Video-Based Rendering Techniques: A Survey

Dec 08, 2023

Abstract:Three-dimensional reconstruction of events recorded in images has long been a shared challenge for computer vision and computer graphics. Estimating the real position of objects and surfaces from visual input is no trivial task and has been approached in several different ways. Although substantial progress has been made, several issues remain open. Using video as the input to a rendering process (video-based rendering, VBR) has only recently begun to receive attention, and it adds both new challenges and new solutions to the classical image-based rendering (IBR) problem. This article presents the state of the art in video-based rendering and in image-based techniques that can be applied in this scenario, evaluates the open issues yet to be solved, and indicates where future work should focus.

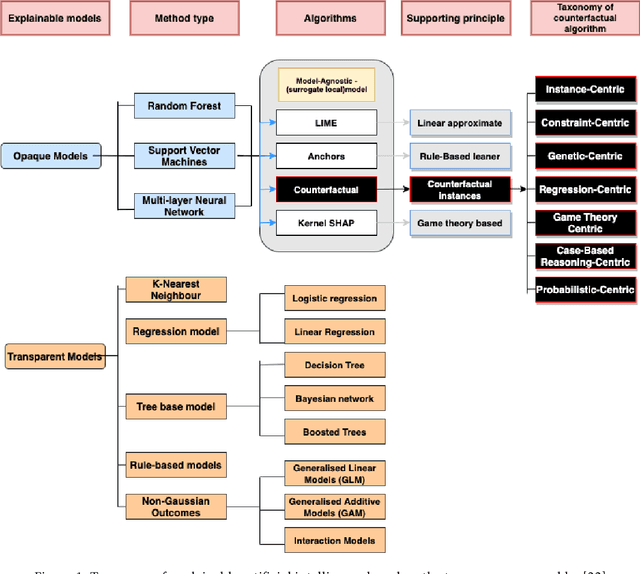

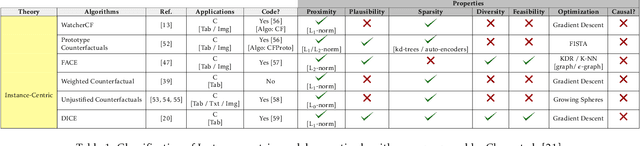

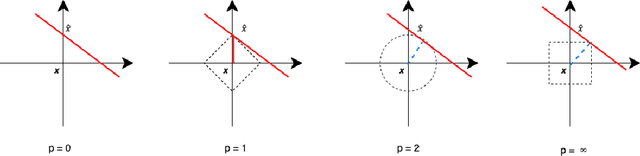

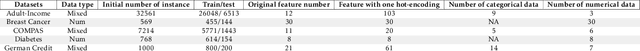

Benchmark Evaluation of Counterfactual Algorithms for XAI: From a White Box to a Black Box

Mar 04, 2022

Abstract:Counterfactual explanations have recently been brought to light as a potentially crucial means of obtaining human-understandable explanations from predictive models in Explainable Artificial Intelligence (XAI). Although various counterfactual algorithms have been proposed, state-of-the-art research still lacks standardised protocols for evaluating the quality of counterfactual explanations. In this work, we conducted a benchmark evaluation across different model-agnostic counterfactual algorithms from the literature (DiCE, WatcherCF, prototype, unjustifiedCF), and we investigated the counterfactual generation process on different types of machine learning models, ranging from a white box (decision tree) to a grey box (random forest) and a black box (neural network). We evaluated the counterfactual algorithms on five datasets using several metrics, including proximity, interpretability and functionality. The main findings of this work are the following: (1) without guaranteeing plausibility in the counterfactual generation process, one cannot obtain meaningful evaluation results. This means that counterfactual algorithms that do not take plausibility into consideration in their internal mechanisms cannot be evaluated with the current state-of-the-art evaluation metrics; (2) the generated counterfactuals are not affected by the type of machine learning model; (3) DiCE was the only tested algorithm able to generate actionable and plausible counterfactuals, because it provides mechanisms to constrain features; (4) WatcherCF and UnjustifiedCF are limited to continuous variables and cannot deal with categorical data.

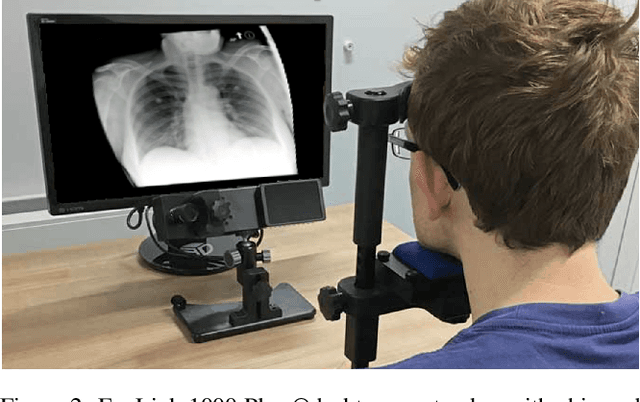

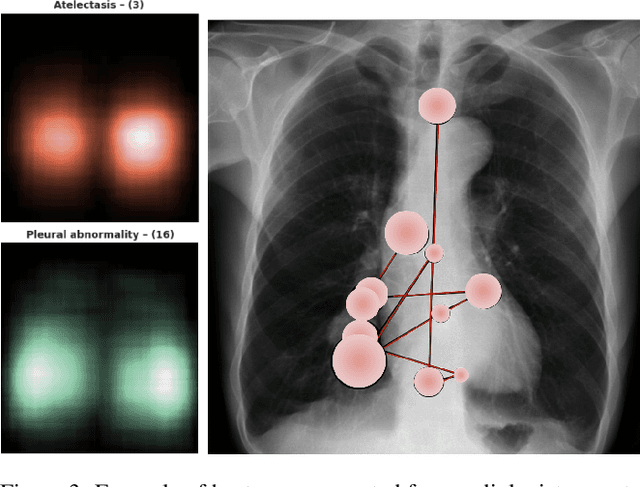

Improving X-ray Diagnostics through Eye-Tracking and XR

Mar 03, 2022

Abstract:There is a growing need to assist radiologists in performing X-ray readings and diagnoses quickly, comfortably, and effectively. As radiologists strive to maximize productivity, it is essential to consider the impact of reading rooms on the interpretation of complex examinations and to ensure that higher volumes and reporting speeds do not compromise patient outcomes. Virtual Reality (VR) is a disruptive technology for clinical practice in assessing X-ray images. We argue that combining eye-tracking with VR devices and Machine Learning may overcome the obstacles posed by poor ergonomic postures and poor room conditions, which often cause erroneous diagnoses when professionals examine digital images.
