Jingyao Tang

Reasoning-Oriented and Analogy-Based Methods for Locating and Editing in Zero-Shot Event-Relational Reasoning

Jan 01, 2025Abstract:Zero-shot event-relational reasoning is an important task in natural language processing, and existing methods jointly learn a variety of event-relational prefixes and inference-form prefixes to achieve such tasks. However, training prefixes consumes large computational resources and lacks interpretability. Additionally, learning various relational and inferential knowledge inefficiently exploits the connections between tasks. Therefore, we first propose a method for Reasoning-Oriented Locating and Editing (ROLE), which locates and edits the key modules of the language model for reasoning about event relations, enhancing interpretability and also resource-efficiently optimizing the reasoning ability. Subsequently, we propose a method for Analogy-Based Locating and Editing (ABLE), which efficiently exploits the similarities and differences between tasks to optimize the zero-shot reasoning capability. Experimental results show that ROLE improves interpretability and reasoning performance with reduced computational cost. ABLE achieves SOTA results in zero-shot reasoning.

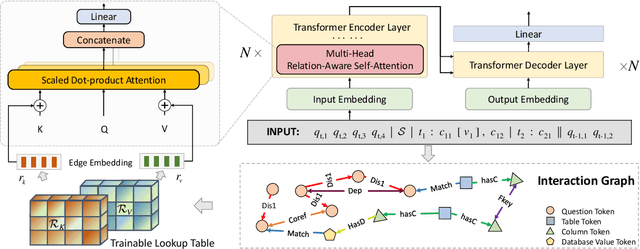

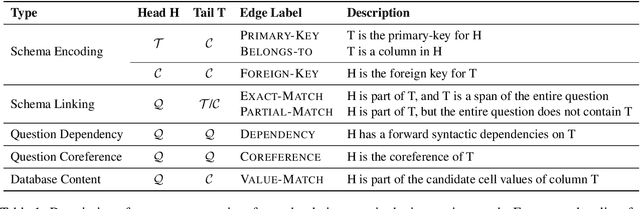

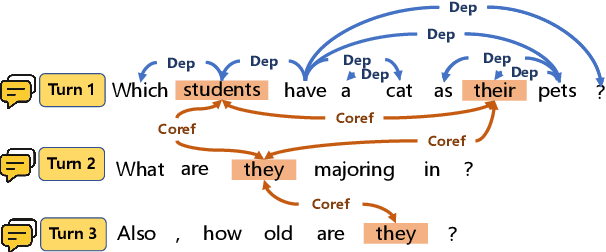

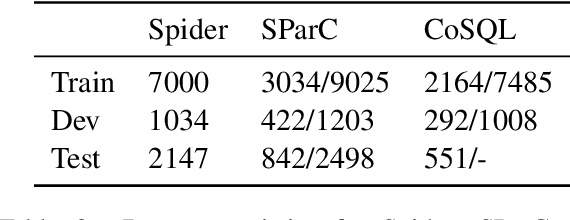

RASAT: Integrating Relational Structures into Pretrained Seq2Seq Model for Text-to-SQL

May 14, 2022

Abstract:Relational structures such as schema linking and schema encoding have been validated as a key component to qualitatively translating natural language into SQL queries. However, introducing these structural relations comes with prices: they often result in a specialized model structure, which largely prohibits the use of large pretrained models in text-to-SQL. To address this problem, we propose RASAT: a Transformer seq2seq architecture augmented with relation-aware self-attention that could leverage a variety of relational structures while at the meantime being able to effectively inherit the pretrained parameters from the T5 model. Our model is able to incorporate almost all types of existing relations in the literature, and in addition, we propose to introduce co-reference relations for the multi-turn scenario. Experimental results on three widely used text-to-SQL datasets, covering both single-turn and multi-turn scenarios, have shown that RASAT could achieve competitive results in all three benchmarks, achieving state-of-the-art performance in execution accuracy (80.5\% EX on Spider, 53.1\% IEX on SParC, and 37.5\% IEX on CoSQL).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge