Jieun Lim

Automatic Network Adaptation for Ultra-Low Uniform-Precision Quantization

Jan 04, 2023Abstract:Uniform-precision neural network quantization has gained popularity since it simplifies densely packed arithmetic unit for high computing capability. However, it ignores heterogeneous sensitivity to the impact of quantization errors across the layers, resulting in sub-optimal inference accuracy. This work proposes a novel neural architecture search called neural channel expansion that adjusts the network structure to alleviate accuracy degradation from ultra-low uniform-precision quantization. The proposed method selectively expands channels for the quantization sensitive layers while satisfying hardware constraints (e.g., FLOPs, PARAMs). Based on in-depth analysis and experiments, we demonstrate that the proposed method can adapt several popular networks channels to achieve superior 2-bit quantization accuracy on CIFAR10 and ImageNet. In particular, we achieve the best-to-date Top-1/Top-5 accuracy for 2-bit ResNet50 with smaller FLOPs and the parameter size.

Learning from distinctive candidates to optimize reduced-precision convolution program on tensor cores

Feb 24, 2022

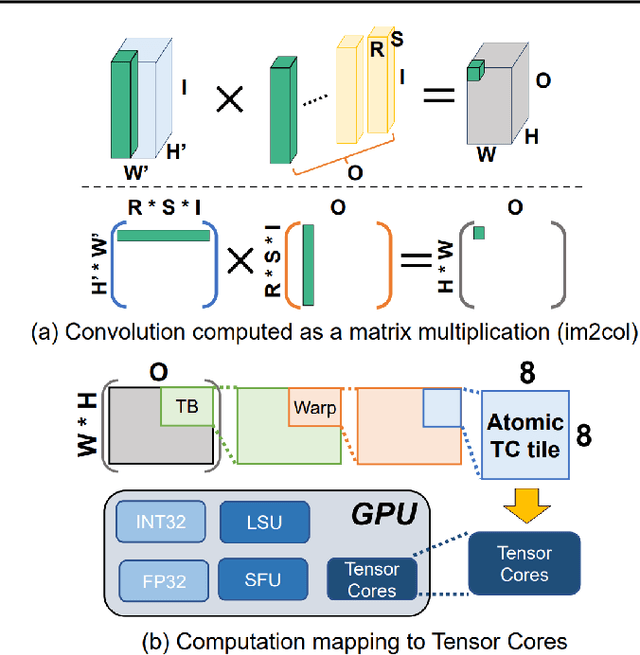

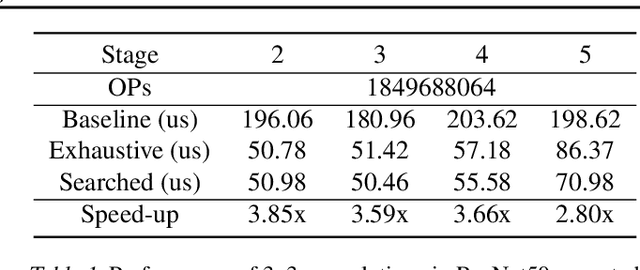

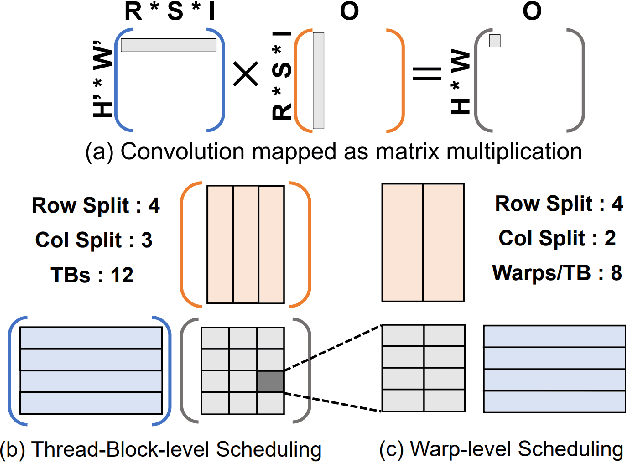

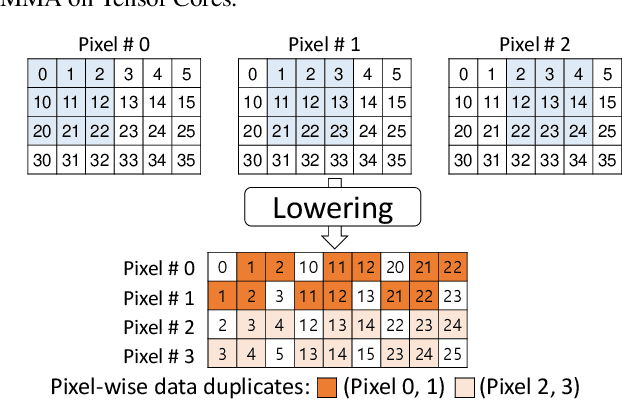

Abstract:Convolution is one of the fundamental operations of deep neural networks with demanding matrix computation. In a graphic processing unit (GPU), Tensor Core is a specialized matrix processing hardware equipped with reduced-precision matrix-multiply-accumulate (MMA) instructions to increase throughput. However, it is challenging to achieve optimal performance since the best scheduling of MMA instructions varies for different convolution sizes. In particular, reduced-precision MMA requires many elements grouped as a matrix operand, seriously limiting data reuse and imposing packing and layout overhead on the schedule. This work proposes an automatic scheduling method of reduced-precision MMA for convolution operation. In this method, we devise a search space that explores the thread tile and warp sizes to increase the data reuse despite a large matrix operand of reduced-precision MMA. The search space also includes options of register-level packing and layout optimization to lesson overhead of handling reduced-precision data. Finally, we propose a search algorithm to find the best schedule by learning from the distinctive candidates. This reduced-precision MMA optimization method is evaluated on convolution operations of popular neural networks to demonstrate substantial speedup on Tensor Core compared to the state of the arts with shortened search time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge