Jiayuan Tian

SeaDATE: Remedy Dual-Attention Transformer with Semantic Alignment via Contrast Learning for Multimodal Object Detection

Oct 15, 2024

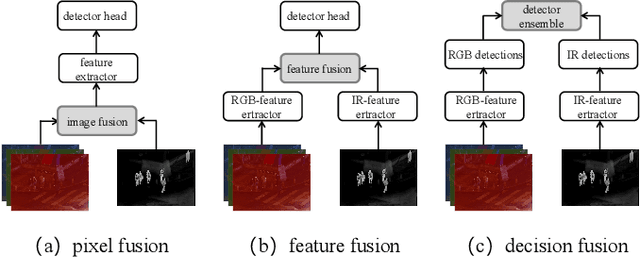

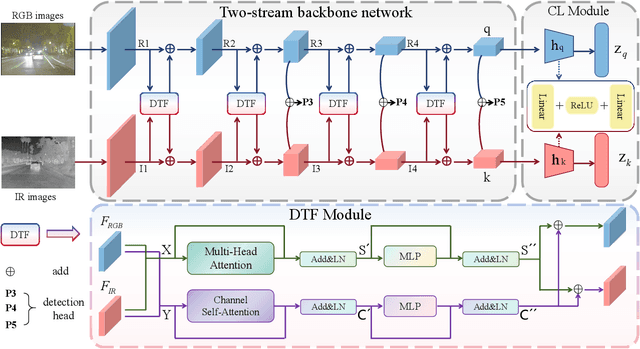

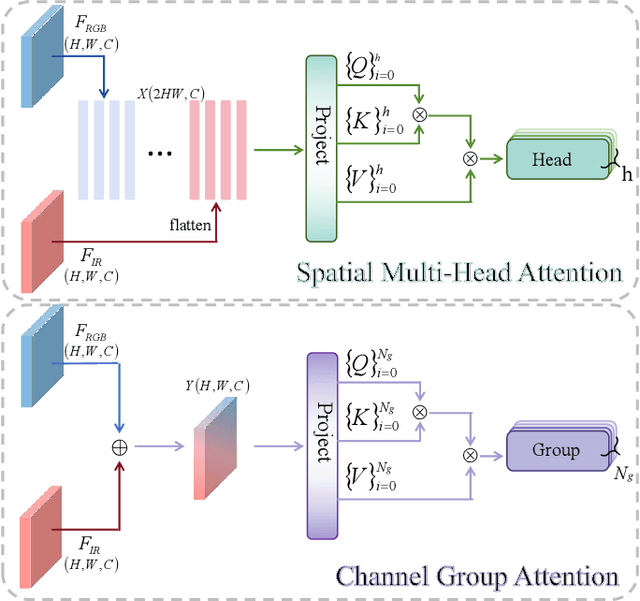

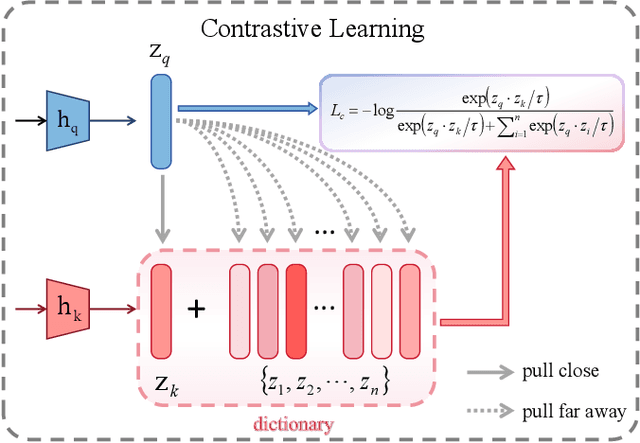

Abstract: Multimodal object detection leverages information from multiple modalities to improve detector accuracy and robustness. By learning long-range dependencies, Transformers can effectively integrate multimodal features at the feature extraction stage, which greatly improves multimodal detection performance. However, current methods merely stack Transformer-guided fusion modules without examining how well they extract features at different network depths, which limits the gains in detection performance. In this paper, we introduce an accurate and efficient object detection method named SeaDATE. First, we propose a novel dual-attention feature fusion (DTF) module that, under Transformer guidance, integrates local and global information through a dual attention mechanism, strengthening the fusion of modal features from orthogonal perspectives using spatial and channel tokens. Our theoretical analysis and empirical validation further show that Transformer-guided fusion, which treats images as sequences of pixels, handles the detail information in shallow features better than the semantic information in deep features. To address this, we design a contrastive learning (CL) module that learns features of multimodal samples, remedying the shortcomings of Transformer-guided fusion in extracting deep semantic features and making effective use of cross-modal information. Extensive experiments and ablation studies on the FLIR, LLVIP, and M3FD datasets show that our method is effective and achieves state-of-the-art detection performance.
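To make the "spatial and channel tokens" idea concrete, here is a minimal sketch of how one feature map can be tokenized along two orthogonal axes and passed through self-attention. It is not the DTF module from the paper: the function names are hypothetical, the attention uses identity query/key/value projections, and concatenating the tokens of both modalities before attending is an assumption made only for illustration.

```python
import math

def softmax(row):
    # Numerically stable softmax over a list of scores.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]

def self_attention(tokens):
    # Scaled dot-product self-attention with identity Q/K/V projections
    # (a simplification; a real module would learn these projections).
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        out.append([sum(w[j] * tokens[j][c] for j in range(len(tokens)))
                    for c in range(d)])
    return out

def spatial_tokens(fmap):
    # fmap is a C x H x W nested list -> H*W tokens, each of length C.
    C, H, W = len(fmap), len(fmap[0]), len(fmap[0][0])
    return [[fmap[c][y][x] for c in range(C)] for y in range(H) for x in range(W)]

def channel_tokens(fmap):
    # fmap is a C x H x W nested list -> C tokens, each of length H*W.
    return [[v for row in channel for v in row] for channel in fmap]

def fuse_tokens(rgb, ir, tokenizer):
    # Assumption: tokens from both modalities are concatenated so that
    # every token can attend to tokens from the other modality.
    return self_attention(tokenizer(rgb) + tokenizer(ir))

# Two toy 2-channel, 2x2 feature maps standing in for RGB and infrared.
rgb = [[[1.0, 0.0], [0.0, 1.0]], [[0.5, 0.5], [0.5, 0.5]]]
ir = [[[0.0, 1.0], [1.0, 0.0]], [[0.2, 0.8], [0.8, 0.2]]]

spatial_out = fuse_tokens(rgb, ir, spatial_tokens)   # 8 tokens of length 2
channel_out = fuse_tokens(rgb, ir, channel_tokens)   # 4 tokens of length 4
```

Spatial tokens let attention relate positions to one another, while channel tokens let it relate feature channels to one another, which is one way to read the abstract's "orthogonal perspectives".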

SwiMDiff: Scene-wide Matching Contrastive Learning with Diffusion Constraint for Remote Sensing Image

Jan 10, 2024

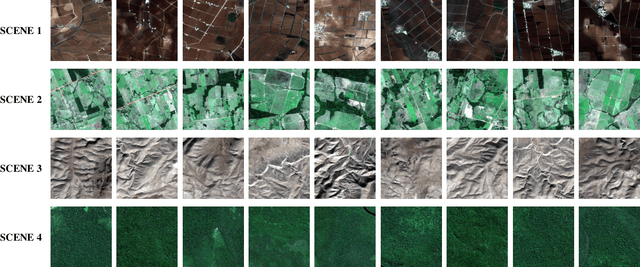

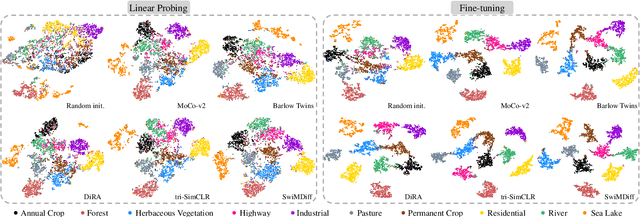

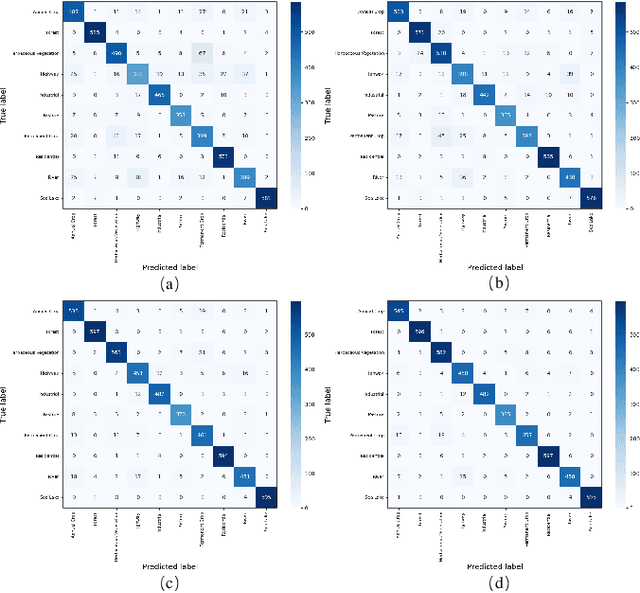

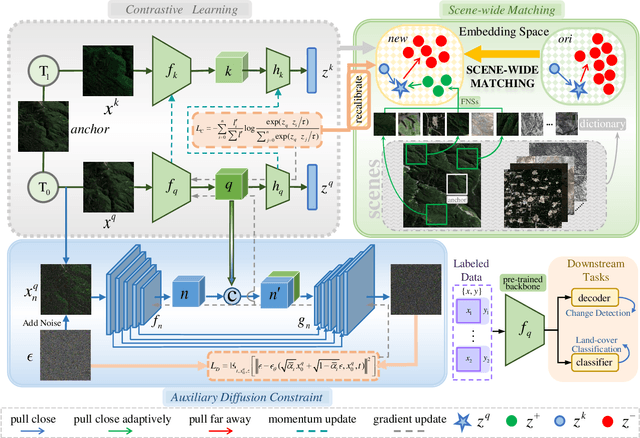

Abstract: With recent advancements in aerospace technology, the volume of unlabeled remote sensing image (RSI) data has increased dramatically. Effectively leveraging this data through self-supervised learning (SSL) is vital in the field of remote sensing. However, current methodologies, particularly contrastive learning (CL), a leading SSL method, encounter specific challenges in this domain. First, CL often mistakenly treats geographically adjacent samples with similar semantic content as negative pairs, which confuses model training. Second, as an instance-level discriminative task, it tends to neglect the fine-grained features and complex details inherent in unstructured RSIs. To overcome these obstacles, we introduce SwiMDiff, a novel self-supervised pre-training framework designed for RSIs. SwiMDiff employs a scene-wide matching approach that recalibrates labels so that data from the same scene are recognized as false negatives, making CL better suited to remote sensing. Additionally, SwiMDiff integrates CL with a diffusion model. By imposing pixel-level diffusion constraints, we enhance the encoder's ability to capture both the global semantic information and the fine-grained features of the images. The proposed framework enriches the information available for downstream remote sensing tasks, and SwiMDiff performs strongly on change detection and land-cover classification.
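The false-negative problem described above can be illustrated with a small sketch of an InfoNCE-style contrastive loss in which samples from the same scene as the anchor are excluded from the negatives. This is not SwiMDiff's scene-wide matching: the function name, the `scene_ids` argument, and the choice to simply mask same-scene samples out of the denominator are assumptions made for illustration.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    # Scale a vector to unit length so dot products are cosine similarities.
    n = math.sqrt(dot(v, v))
    return [a / n for a in v]

def scene_aware_info_nce(anchors, positives, scene_ids, temperature=0.1):
    # anchors[i] and positives[i] are embeddings of two views of sample i.
    # Any other sample j that shares scene_ids[i] is treated as a false
    # negative and left out of the denominator (hypothetical masking rule).
    a = [normalize(v) for v in anchors]
    p = [normalize(v) for v in positives]
    total = 0.0
    for i in range(len(a)):
        pos = math.exp(dot(a[i], p[i]) / temperature)
        denom = pos
        for j in range(len(p)):
            if j != i and scene_ids[j] != scene_ids[i]:
                denom += math.exp(dot(a[i], p[j]) / temperature)
        total += -math.log(pos / denom)
    return total / len(a)

anchors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
positives = [[1.0, 0.1], [1.0, 0.0], [0.1, 1.0]]

# Every sample in its own scene: all other samples act as negatives.
plain_loss = scene_aware_info_nce(anchors, positives, [0, 1, 2])
# Samples 0 and 1 share a scene, so they no longer repel each other.
masked_loss = scene_aware_info_nce(anchors, positives, [0, 0, 2])
```

In this toy batch, samples 0 and 1 have nearly identical embeddings, so treating them as negatives inflates the loss; marking them as the same scene removes that penalty.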
