Jianxiong Shen

Estimating 3D Uncertainty Field: Quantifying Uncertainty for Neural Radiance Fields

Nov 03, 2023

Abstract: Current methods based on Neural Radiance Fields (NeRF) largely lack the capacity to quantify uncertainty in their predictions, particularly in unseen space, including occluded regions and content outside the scene. This limitation hinders their wider application in robotics, where the reliability of model predictions has to be considered for tasks such as robotic exploration and planning in unknown environments. To address this, we propose a novel approach to estimate a 3D Uncertainty Field based on the learned incomplete scene geometry, which explicitly identifies these unseen regions. By considering the accumulated transmittance along each camera ray, our Uncertainty Field infers 2D pixel-wise uncertainty, exhibiting high values for rays cast directly towards occluded regions or content outside the scene. To quantify the uncertainty on the learned surface, we model a stochastic radiance field. Our experiments demonstrate that, compared with recent methods, our approach is the only one that can explicitly reason about high uncertainty both in unseen 3D regions and in the 2D pixels rendered from them. Furthermore, we illustrate that our uncertainty field is ideally suited for real-world robotics tasks, such as next-best-view selection.
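To make the transmittance idea concrete, here is a minimal sketch, not the paper's implementation, of how per-point 3D uncertainty could be composited into a pixel value with standard NeRF rendering weights. The function name `render_uncertainty`, the `unseen_uncertainty` parameter, and the rule that leftover transmittance (a ray that never hits learned geometry) receives a fixed high uncertainty are all assumptions made for illustration.

```python
import math
from typing import Sequence


def render_uncertainty(
    sigmas: Sequence[float],
    deltas: Sequence[float],
    point_uncertainty: Sequence[float],
    unseen_uncertainty: float = 1.0,
) -> float:
    """Composite per-sample 3D uncertainty along one ray into a pixel value.

    Hypothetical formulation: standard NeRF rendering weights are applied to
    the per-point uncertainties, and whatever transmittance is left after the
    last sample (the ray never hit learned geometry) is assigned
    `unseen_uncertainty`.
    """
    transmittance = 1.0  # T_i: probability the ray reaches sample i unoccluded
    pixel_u = 0.0
    for sigma, delta, u in zip(sigmas, deltas, point_uncertainty):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        pixel_u += transmittance * alpha * u    # weight w_i = T_i * alpha_i
        transmittance *= 1.0 - alpha
    # Residual transmittance: mass that passed through all learned geometry.
    pixel_u += transmittance * unseen_uncertainty
    return pixel_u


# A ray that hits a dense, well-observed surface vs. one that sees nothing.
print(render_uncertainty([50.0, 50.0], [1.0, 1.0], [0.1, 0.1]))  # close to 0.1
print(render_uncertainty([0.0, 0.0], [1.0, 1.0], [0.1, 0.1]))    # 1.0
```

Under these assumptions, a ray that terminates on observed geometry inherits that geometry's low uncertainty, while a ray through empty or unobserved space keeps its transmittance and therefore ends up with the high "unseen" value.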

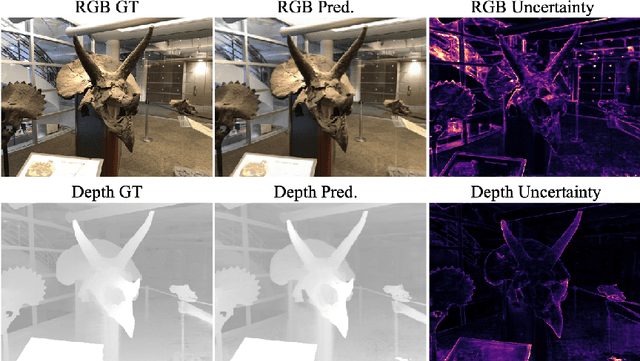

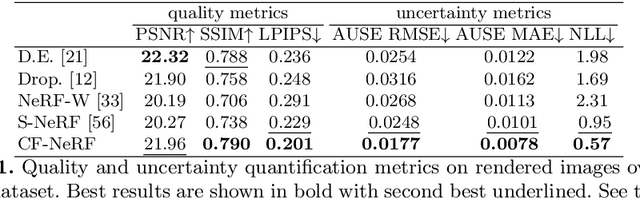

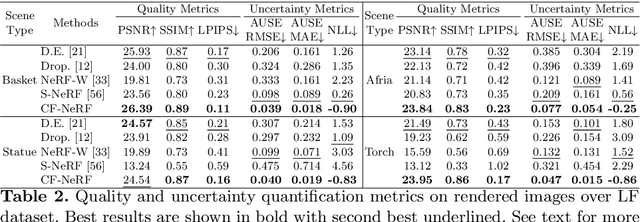

Conditional-Flow NeRF: Accurate 3D Modelling with Reliable Uncertainty Quantification

Mar 18, 2022

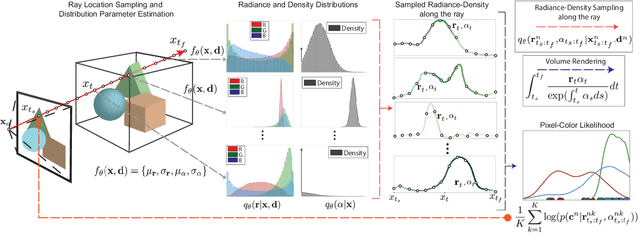

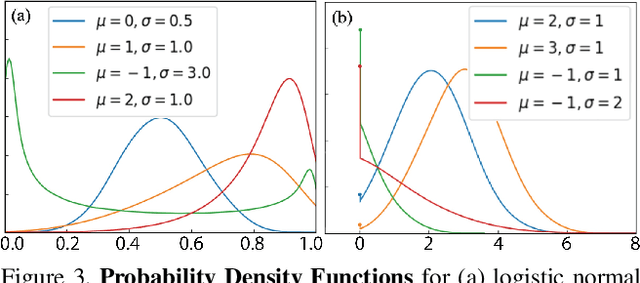

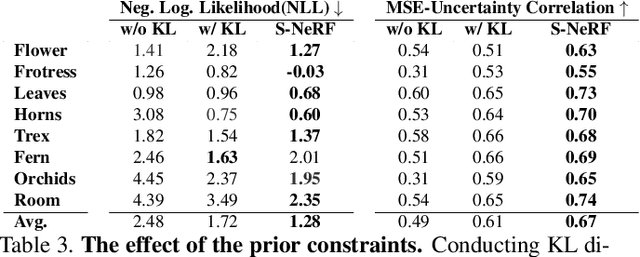

Abstract: A critical limitation of current methods based on Neural Radiance Fields (NeRF) is that they are unable to quantify the uncertainty associated with the learned appearance and geometry of the scene. This information is paramount in real applications such as medical diagnosis or autonomous driving where, to reduce potentially catastrophic failures, the confidence in the model outputs must be included in the decision-making process. In this context, we introduce Conditional-Flow NeRF (CF-NeRF), a novel probabilistic framework to incorporate uncertainty quantification into NeRF-based approaches. For this purpose, our method learns a distribution over all possible radiance fields modelling the scene and uses it to quantify the associated uncertainty. In contrast to previous approaches that enforce strong constraints on the radiance field distribution, CF-NeRF learns it in a flexible and fully data-driven manner by coupling Latent Variable Modelling and Conditional Normalizing Flows. This strategy yields reliable uncertainty estimates while preserving model expressivity. Compared to previous state-of-the-art methods proposed for uncertainty quantification in NeRF, our experiments show that the proposed method achieves significantly lower prediction errors and more reliable uncertainty values for novel-view synthesis and depth-map estimation.
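As an illustration of the "Latent Variable Modelling plus Conditional Normalizing Flows" coupling, the sketch below uses a single conditional affine flow layer on a scalar output. It is an assumption-laden toy, not CF-NeRF: the `conditioner` function (fixed linear maps standing in for a network), `flow_sample`, and `flow_log_prob` are hypothetical, and a real model would stack several flow layers over full radiance values. It shows the two properties a flow provides: sampling by transforming base noise, and an exact log-density via the change-of-variables rule.

```python
import math
import random
from typing import Tuple


def conditioner(z: float) -> Tuple[float, float]:
    """Hypothetical conditioner mapping a latent code z to (shift, log_scale).

    In a real model this would be a neural network; fixed linear maps keep
    the sketch self-contained.
    """
    return 0.5 * z, 0.1 * z


def flow_sample(z: float, rng: random.Random) -> float:
    """Push a base sample eps ~ N(0, 1) through the z-conditioned affine flow."""
    eps = rng.gauss(0.0, 1.0)
    shift, log_scale = conditioner(z)
    return shift + math.exp(log_scale) * eps


def flow_log_prob(y: float, z: float) -> float:
    """Exact log-density of y under the flow, via the change-of-variables rule."""
    shift, log_scale = conditioner(z)
    eps = (y - shift) / math.exp(log_scale)  # invert the affine transform
    log_base = -0.5 * (eps * eps + math.log(2.0 * math.pi))
    return log_base - log_scale  # subtract log |dy / d eps|


# Predictive spread: draw latents z ~ N(0, 1), then outputs from the flow.
rng = random.Random(0)
samples = [flow_sample(rng.gauss(0.0, 1.0), rng) for _ in range(5000)]
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"mean={mean:.3f} variance={var:.3f}")
```

The spread of `samples` is the kind of quantity that would serve as an uncertainty estimate; because the output distribution is shaped by the flow rather than fixed to a Gaussian over the field, this design can be more flexible than approaches with strong distributional constraints.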

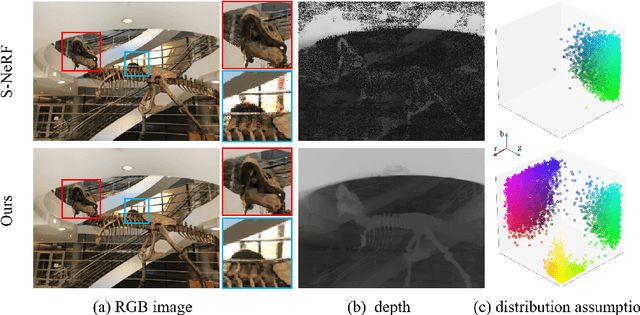

Stochastic Neural Radiance Fields: Quantifying Uncertainty in Implicit 3D Representations

Sep 28, 2021

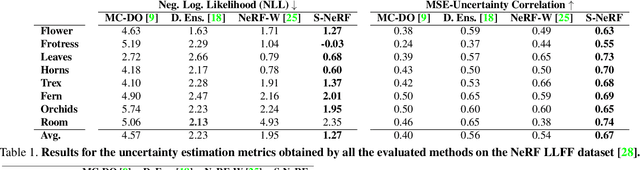

Abstract: Neural Radiance Fields (NeRF) has become a popular framework for learning implicit 3D representations and addressing different tasks such as novel-view synthesis or depth-map estimation. However, in downstream applications where decisions need to be made based on automatic predictions, it is critical to leverage the confidence associated with the model estimations. Whereas uncertainty quantification is a long-standing problem in Machine Learning, it has been largely overlooked in the recent NeRF literature. In this context, we propose Stochastic Neural Radiance Fields (S-NeRF), a generalization of standard NeRF that learns a probability distribution over all the possible radiance fields modeling the scene. This distribution makes it possible to quantify the uncertainty associated with the scene information provided by the model. S-NeRF optimization is posed as a Bayesian learning problem, which is efficiently addressed using the Variational Inference framework. Exhaustive experiments on benchmark datasets demonstrate that S-NeRF provides more reliable predictions and confidence values than generic approaches previously proposed for uncertainty estimation in other domains.
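The following is a minimal sketch of how a learned distribution over radiance fields can be turned into a prediction and a confidence value. It assumes an already-fitted Gaussian variational posterior per sample point (the `PointParams` layout, `sample_field`, and `predictive_mean_var` are hypothetical names) and omits the variational training objective entirely. Each Monte Carlo draw uses the reparameterization `mean + std * eps`, is rendered with standard alpha compositing on a single grayscale channel, and the spread of the rendered values is reported as the uncertainty.

```python
import math
import random
import statistics
from typing import List, Sequence, Tuple

# Hypothetical variational parameters for each sample point on a ray:
# (density_mean, density_log_std, color_mean, color_log_std), pre-activation.
PointParams = Tuple[float, float, float, float]


def softplus(x: float) -> float:
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))  # numerically stable


def sigmoid(x: float) -> float:
    return 0.5 * (1.0 + math.tanh(0.5 * x))  # avoids overflow for large |x|


def sample_field(
    params: Sequence[PointParams], rng: random.Random
) -> Tuple[List[float], List[float]]:
    """One reparameterized draw: value = mean + std * eps, eps ~ N(0, 1)."""
    sigmas, colors = [], []
    for mu_s, log_std_s, mu_c, log_std_c in params:
        sigmas.append(softplus(mu_s + math.exp(log_std_s) * rng.gauss(0.0, 1.0)))
        colors.append(sigmoid(mu_c + math.exp(log_std_c) * rng.gauss(0.0, 1.0)))
    return sigmas, colors


def render(sigmas: Sequence[float], colors: Sequence[float], deltas: Sequence[float]) -> float:
    """Standard NeRF alpha compositing of a single (grayscale) color channel."""
    transmittance, out = 1.0, 0.0
    for sigma, color, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)
        out += transmittance * alpha * color
        transmittance *= 1.0 - alpha
    return out


def predictive_mean_var(
    params: Sequence[PointParams], deltas: Sequence[float], n_samples: int = 256, seed: int = 0
) -> Tuple[float, float]:
    """Monte Carlo estimate of the rendered value's mean and variance."""
    rng = random.Random(seed)
    draws = [render(*sample_field(params, rng), deltas) for _ in range(n_samples)]
    return statistics.fmean(draws), statistics.pvariance(draws)


confident = [(2.0, -20.0, 0.0, -20.0)]  # near-zero posterior std
uncertain = [(2.0, 0.0, 0.0, 0.0)]      # std of 1 in pre-activation space
print(predictive_mean_var(confident, [1.0]))
print(predictive_mean_var(uncertain, [1.0]))
```

With near-zero posterior standard deviations the rendered variance collapses to essentially zero, while wider posteriors produce a visibly larger variance for the same mean parameters.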

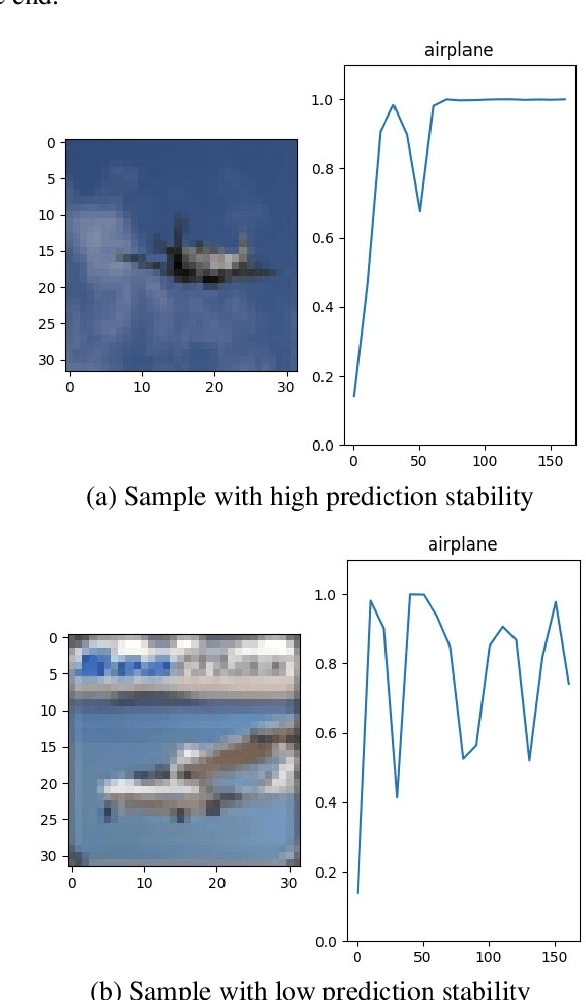

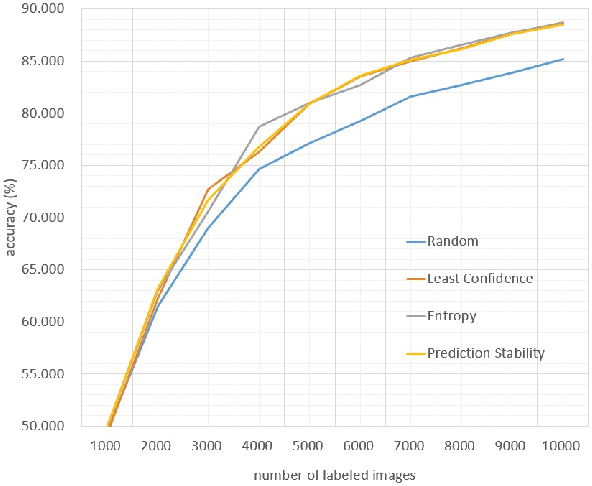

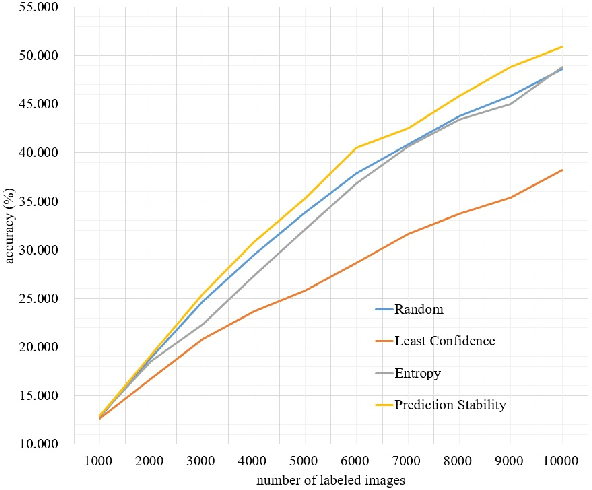

Prediction stability as a criterion in active learning

Oct 27, 2019

Abstract: Recent breakthroughs in deep learning rely heavily on a large number of annotated samples. Active learning is one possible way to overcome this shortcoming. Whereas previous active learning algorithms use only information available after training, we propose a new class of methods, named sequential-based methods, that exploit information gathered during training. A specific active learning criterion called prediction stability is proposed to demonstrate the feasibility of sequential-based methods. Experiments on CIFAR-10 and CIFAR-100 indicate that prediction stability is effective and works well on datasets with fewer labels. Prediction stability matches the accuracy of traditional acquisition functions such as entropy on CIFAR-10, and notably outperforms them on CIFAR-100.
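A sequential-based criterion needs predictions recorded during training, not just after it. The sketch below is one plausible reading of "prediction stability", not the paper's exact formula: for each unlabeled sample, softmax outputs are stored at several epochs, instability is scored as the summed per-class variance across those epochs, and the least stable samples are queried for labels. The names `instability`, `select_queries`, and the sample ids are hypothetical.

```python
import statistics
from typing import Dict, List, Sequence

# history[e][c]: softmax probability of class c recorded at training epoch e.
History = Sequence[Sequence[float]]


def instability(history: History) -> float:
    """Hypothetical score: summed per-class variance of predictions across epochs."""
    n_classes = len(history[0])
    return sum(
        statistics.pvariance([probs[c] for probs in history]) for c in range(n_classes)
    )


def select_queries(histories: Dict[str, History], budget: int) -> List[str]:
    """Return the `budget` unlabeled sample ids with the least stable predictions."""
    ranked = sorted(histories, key=lambda k: instability(histories[k]), reverse=True)
    return ranked[:budget]


histories = {
    "img_a": [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]],  # prediction never changes
    "img_b": [[0.9, 0.1], [0.2, 0.8], [0.7, 0.3]],  # prediction flips between epochs
}
print(select_queries(histories, budget=1))  # ['img_b']
```

Unlike an entropy score computed once on the final model, this score depends on how predictions evolve across epochs, which is the distinguishing trait of the sequential-based family described above.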

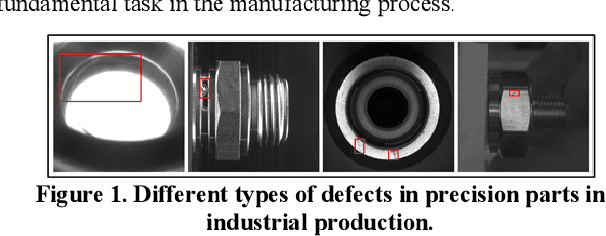

PartsNet: A Unified Deep Network for Automotive Engine Precision Parts Defect Detection

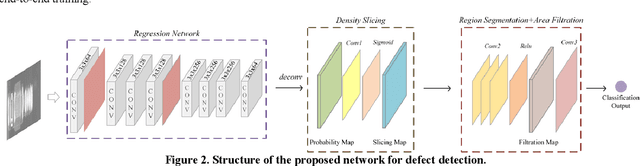

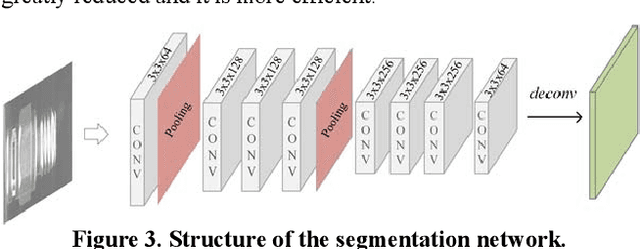

Oct 29, 2018

Abstract: Defect detection is a basic and essential task in automatic parts production, especially for automotive engine precision parts. In this paper, we propose a deep convolutional network that combines domain knowledge of feature processing with the representation ability of deep learning. Our algorithm consists of a pixel-wise segmentation Deep Neural Network (DNN) and a feature refining network. The fully convolutional DNN learns the basic features of part defects. After that, several typical traditional methods used to refine segmentation results are reformulated as convolutions and integrated. We assemble these methods into a shallow network with fixed weights and empirical thresholds. These thresholds are then released as trainable parameters to improve the network's adaptability and enable end-to-end training. Test results on different datasets show that the proposed method has good portability and outperforms state-of-the-art algorithms.
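To show how a traditional refinement step can be "transformed into a convolutional manner", here is a sketch using binary morphology, a standard example of such a step; the abstract does not say which methods PartsNet actually converts, so this choice is an assumption. Dilation and erosion become a convolution with an all-ones kernel followed by a fixed threshold, and all function names are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def conv2d_same(image: Grid, kernel: Grid) -> Grid:
    """Zero-padded 'same'-size 2-D cross-correlation with an odd-sized kernel."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    y, x = i + di - ph, j + dj - pw
                    if 0 <= y < h and 0 <= x < w:
                        acc += image[y][x] * kernel[di][dj]
            out[i][j] = acc
    return out


def threshold(grid: Grid, t: float) -> Grid:
    """Hard step with a fixed, empirically chosen threshold t."""
    return [[1.0 if v > t else 0.0 for v in row] for row in grid]


def dilate(mask: Grid, size: int = 3) -> Grid:
    ones = [[1.0] * size for _ in range(size)]
    return threshold(conv2d_same(mask, ones), 0.5)  # any neighbour set -> 1


def erode(mask: Grid, size: int = 3) -> Grid:
    ones = [[1.0] * size for _ in range(size)]
    return threshold(conv2d_same(mask, ones), size * size - 0.5)  # all neighbours set -> 1


# A one-pixel false positive in a 5x5 segmentation mask.
mask = [[0.0] * 5 for _ in range(5)]
mask[2][2] = 1.0
opened = dilate(erode(mask))  # opening removes the isolated speck
print(sum(map(sum, opened)))  # 0.0
```

In this fixed form the weights (all-ones kernels) and thresholds are hand-set; a trainable variant of the kind the abstract describes would replace the hard step with a smooth function, such as a sigmoid centered on a learnable threshold, so that gradients can flow through the refinement stage.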
