Jayalakshmi Mangalagiri

University of Maryland, Baltimore County

CCS-GAN: COVID-19 CT-scan classification with very few positive training images

Oct 01, 2021

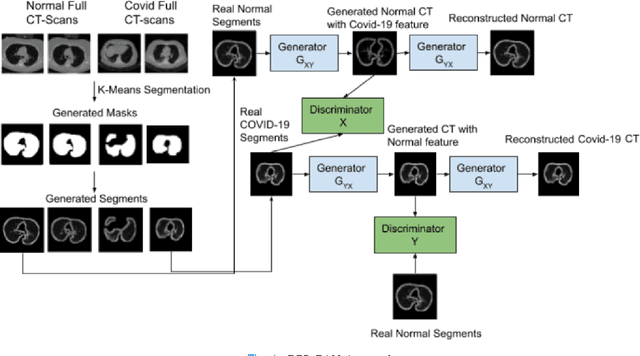

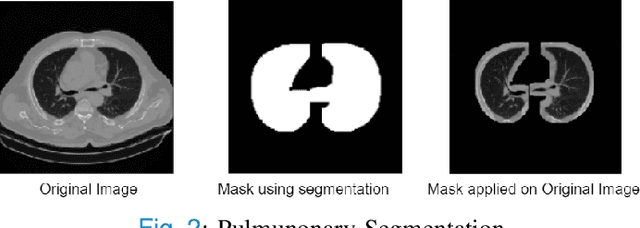

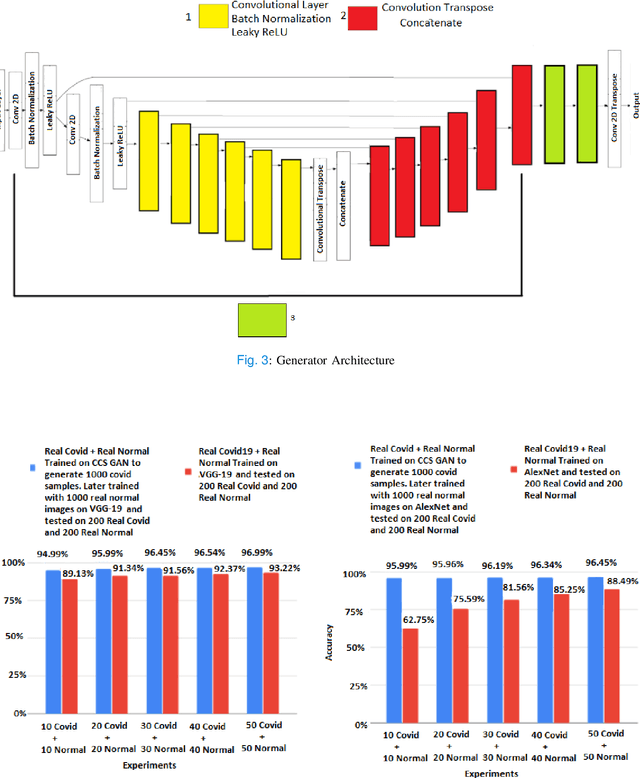

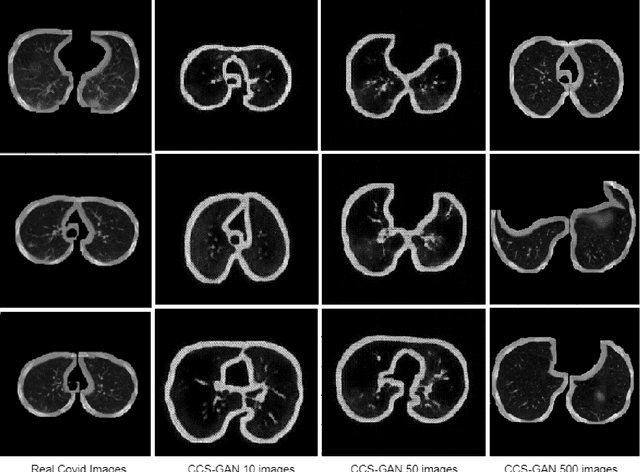

Abstract: We present a novel algorithm that classifies COVID-19 pneumonia from CT scan slices using a very small sample of training images exhibiting COVID-19 pneumonia in tandem with a larger number of normal images. This algorithm achieves high classification accuracy using as few as 10 positive training slices (from 10 positive cases), which to the best of our knowledge is one order of magnitude fewer than the next closest published work at the time of writing. Deep learning with extremely small positive training volumes is a very difficult problem and has been an important topic during the COVID-19 pandemic, because for quite some time it was difficult to obtain large volumes of COVID-19 positive images for training. Algorithms that can learn to screen for diseases using few examples are an important area of research. We present the Cycle Consistent Segmentation Generative Adversarial Network (CCS-GAN). CCS-GAN combines style transfer with pulmonary segmentation and relevant transfer learning from negative images in order to create a larger volume of synthetic positive images and thereby improve diagnostic classification performance. A VGG-19 classifier combined with CCS-GAN was trained using a small sample of positive image slices, ranging from at most 50 down to as few as 10 COVID-19 positive CT-scan images. CCS-GAN achieves high accuracy with few positive images and thereby greatly lowers the barrier of acquiring large training volumes in order to train a diagnostic classifier for COVID-19.

Toward Generating Synthetic CT Volumes using a 3D-Conditional Generative Adversarial Network

Apr 02, 2021

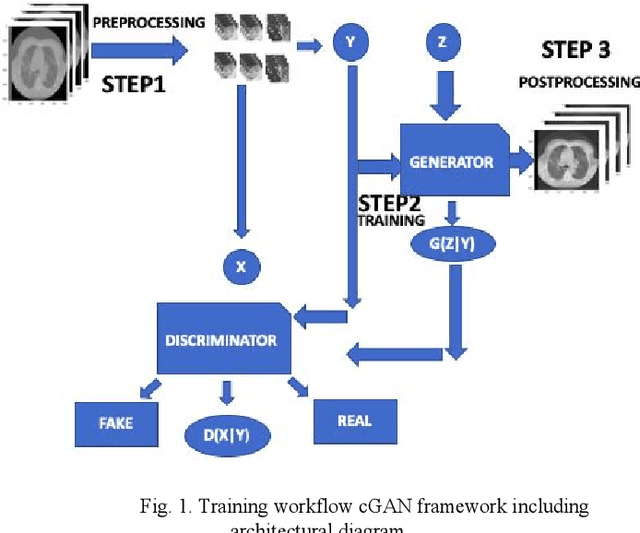

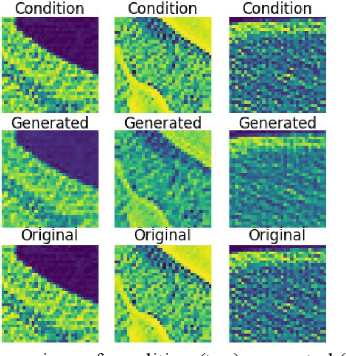

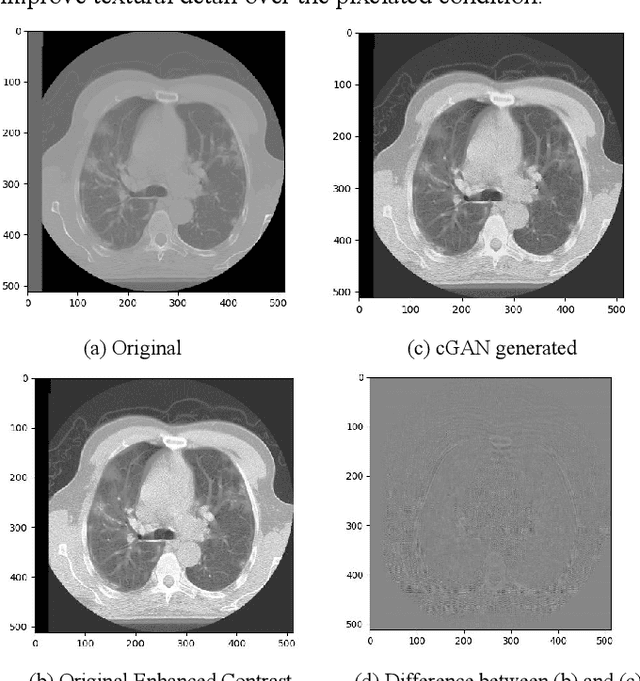

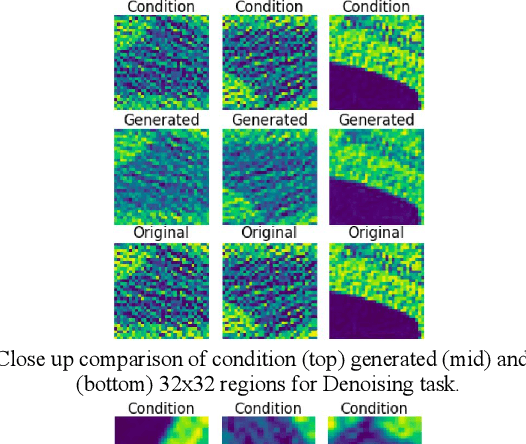

Abstract: We present a novel conditional Generative Adversarial Network (cGAN) architecture that is capable of generating 3D Computed Tomography scans in voxels from noisy and/or pixelated approximations, with the potential to generate full synthetic 3D scan volumes. We believe the cGAN to be a tractable approach to generating 3D CT volumes, even though generating full-resolution deep fakes is presently impractical due to GPU memory limitations. We present results for autoencoder, denoising, and depixelating tasks, which are trained and tested on two novel COVID19 CT datasets. Our evaluation metrics are the Peak Signal-to-Noise Ratio (PSNR), which ranges from 12.53 to 46.46 dB, and the Structural Similarity Index (SSIM), which ranges from 0.89 to 1.
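For readers unfamiliar with the PSNR figures quoted above, the following is a minimal sketch of the standard PSNR definition, 10·log10(MAX² / MSE). The function name `psnr`, the flat-list input format, and the default `max_value=1.0` are illustrative assumptions; the abstract does not say how the authors normalized intensities or computed the metric over 3D volumes.

```python
import math


def psnr(reference, reconstruction, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB between two equal-length intensity sequences.

    Assumption: voxel intensities are flattened into plain sequences and scaled
    so that max_value is the largest possible intensity.
    """
    if len(reference) != len(reconstruction) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error between the reference and the reconstruction.
    mse = sum((r - x) ** 2 for r, x in zip(reference, reconstruction)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    clean = [0.0, 1.0, 0.5, 0.25]
    noisy = [0.1, 0.9, 0.6, 0.15]
    print(f"PSNR: {psnr(clean, noisy):.2f} dB")
```

Higher values mean the reconstruction is closer to the reference, so the upper end of the reported range corresponds to nearly exact reconstructions.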

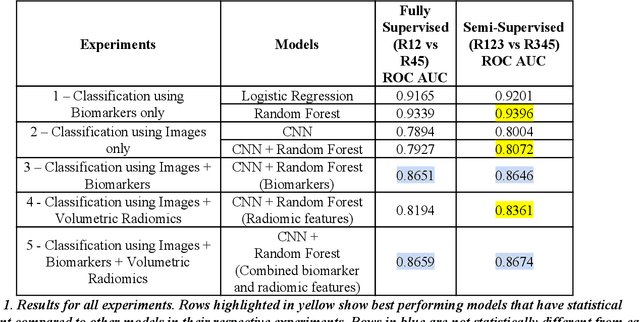

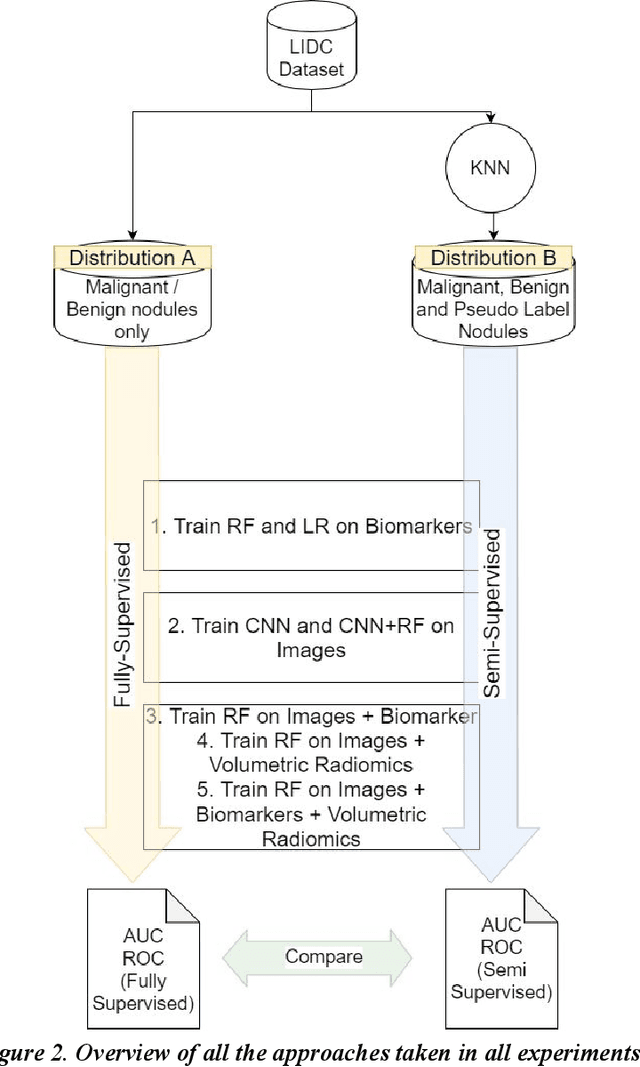

Lung Nodule Classification Using Biomarkers, Volumetric Radiomics and 3D CNNs

Oct 19, 2020

Abstract: We present a hybrid algorithm to estimate lung nodule malignancy that combines imaging biomarkers from radiologists' annotations with image classification of CT scans. Our algorithm employs a 3D Convolutional Neural Network (CNN) as well as a Random Forest in order to combine CT imagery with biomarker annotation and volumetric radiomic features. We analyze and compare the performance of the algorithm in classifying the suspicion level of nodule malignancy using only imagery, only biomarkers, combined imagery + biomarkers, combined imagery + volumetric radiomic features, and finally the combination of imagery + biomarkers + volumetric features. The National Cancer Institute (NCI) Lung Image Database Consortium (LIDC) IDRI dataset is used to train and evaluate the classification task. We show that incorporating semi-supervised learning by means of K-Nearest-Neighbors (KNN) can increase the available training sample size of the LIDC-IDRI, thereby further improving the accuracy of malignancy estimation for most of the models tested. However, KNN semi-supervised learning yields no significant improvement when image classification with CNNs and volumetric features is combined with descriptive biomarkers. Unexpectedly, we also show that a model using image biomarkers alone is more accurate than one that combines biomarkers with volumetric radiomics, 3D CNNs, and semi-supervised learning. We discuss the possibility that this result may be influenced by cognitive bias in LIDC-IDRI, because malignancy estimates were recorded by the same radiologist panel as the biomarkers. We also discuss future work to incorporate pathology information over a subset of study participants.
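One common way to enlarge a training set with KNN is pseudo-labeling: each unlabeled sample receives the majority label of its nearest labeled neighbors. The sketch below illustrates that general idea only. The abstract does not describe how the authors applied KNN to LIDC-IDRI, so the function name `knn_pseudo_label`, the tuple-based feature vectors, the Euclidean distance, and `k=3` are all assumptions.

```python
import math
from collections import Counter


def knn_pseudo_label(labeled, unlabeled, k=3):
    """Assign each unlabeled feature vector the majority label of its k nearest labeled neighbors.

    labeled:   list of (features, label) pairs, features being a tuple of floats
    unlabeled: list of feature tuples
    Returns a list of (features, pseudo_label) pairs that could be appended to the training set.
    """
    pseudo = []
    for x in unlabeled:
        # Sort labeled samples by Euclidean distance to x and keep the k closest.
        neighbors = sorted(labeled, key=lambda item: math.dist(item[0], x))[:k]
        votes = Counter(label for _, label in neighbors)
        pseudo.append((x, votes.most_common(1)[0][0]))
    return pseudo


if __name__ == "__main__":
    # Hypothetical two-dimensional feature vectors, for illustration only.
    labeled = [
        ((0.0, 0.0), "benign"),
        ((0.0, 1.0), "benign"),
        ((5.0, 5.0), "malignant"),
        ((5.0, 6.0), "malignant"),
        ((6.0, 5.0), "malignant"),
    ]
    unlabeled = [(0.2, 0.1), (5.5, 5.5)]
    for features, label in knn_pseudo_label(labeled, unlabeled):
        print(features, "->", label)
```

Pseudo-labels inherit any bias in the labeled neighbors, which is worth keeping in mind given the abstract's remark about possible cognitive bias in the radiologist annotations.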

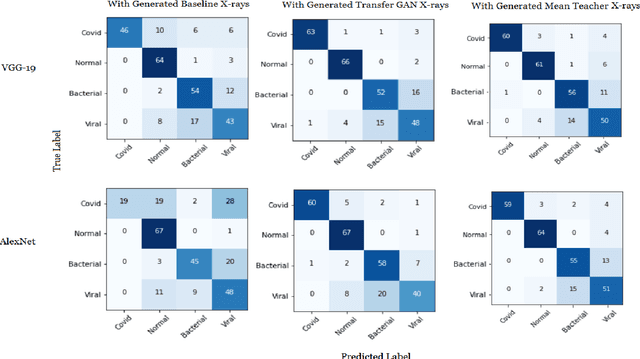

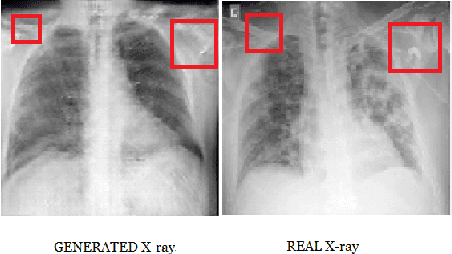

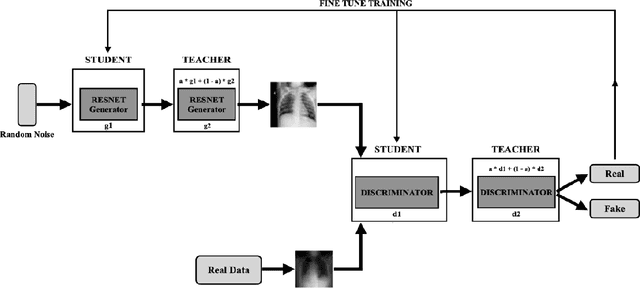

Generating Realistic COVID19 X-rays with a Mean Teacher + Transfer Learning GAN

Sep 26, 2020

Abstract: COVID-19 is a novel infectious disease responsible for over 800K deaths worldwide as of August 2020. The need for rapid testing is a high priority, and alternative testing strategies including X-ray image classification are a promising area of research. However, at present, public datasets for COVID19 X-ray images have low data volumes, making it challenging to develop accurate image classifiers. Several recent papers have made use of Generative Adversarial Networks (GANs) in order to increase the training data volumes, but realistic synthetic COVID19 X-rays remain challenging to generate. We present a novel Mean Teacher + Transfer GAN (MTT-GAN) that generates COVID19 chest X-ray images of high quality. In order to create a more accurate GAN, we employ transfer learning from the Kaggle Pneumonia X-Ray dataset, a highly relevant data source orders of magnitude larger than public COVID19 datasets. Furthermore, we employ the Mean Teacher algorithm as a constraint to improve stability of training. Our qualitative analysis shows that the MTT-GAN generates X-ray images that are greatly superior to a baseline GAN and visually comparable to real X-rays, although board-certified radiologists can distinguish MTT-GAN fakes from real COVID19 X-rays. Quantitative analysis shows that MTT-GAN greatly improves the accuracy of both a binary COVID19 classifier and a multi-class Pneumonia classifier as compared to a baseline GAN. Our classification accuracy is favourable as compared to recently reported results in the literature for similar binary and multi-class COVID19 screening tasks.
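The core of the Mean Teacher algorithm is that a "teacher" model's weights track an exponential moving average (EMA) of a "student" model's weights, and a consistency cost penalizes disagreement between their predictions. The sketch below shows those two pieces on plain lists of floats. How MTT-GAN wires them into GAN training is not described in the abstract, so the names `ema_update` and `consistency_cost` and the default `alpha=0.99` are illustrative assumptions.

```python
def ema_update(teacher, student, alpha=0.99):
    """Return new teacher weights as an exponential moving average of student weights.

    teacher, student: equal-length lists of floats standing in for model parameters.
    alpha: smoothing coefficient; values near 1 make the teacher change slowly.
    """
    if len(teacher) != len(student):
        raise ValueError("teacher and student must have the same number of weights")
    return [alpha * t + (1.0 - alpha) * s for t, s in zip(teacher, student)]


def consistency_cost(student_pred, teacher_pred):
    """Mean squared difference between student and teacher predictions."""
    if len(student_pred) != len(teacher_pred) or len(student_pred) == 0:
        raise ValueError("predictions must be non-empty and of equal length")
    return sum((s - t) ** 2 for s, t in zip(student_pred, teacher_pred)) / len(student_pred)


if __name__ == "__main__":
    teacher = [1.0, 0.0]
    student = [0.0, 1.0]
    # After each (hypothetical) training step, the teacher drifts toward the student.
    for step in range(3):
        teacher = ema_update(teacher, student, alpha=0.5)
        print(step, teacher, consistency_cost(student, teacher))
```

Because the teacher averages over many student updates, its outputs fluctuate less from step to step, which is consistent with the abstract's use of Mean Teacher as a stabilizing constraint.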
