Jamie Arjona

Synthetic ECG Generation for Data Augmentation and Transfer Learning in Arrhythmia Classification

Nov 27, 2024

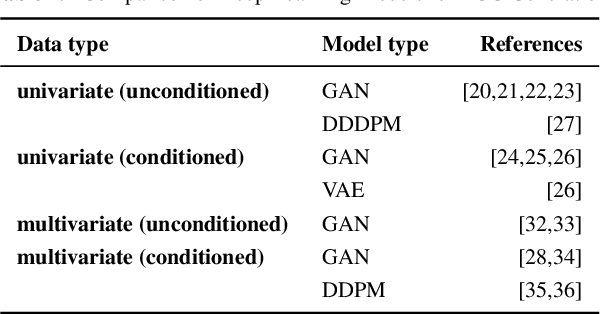

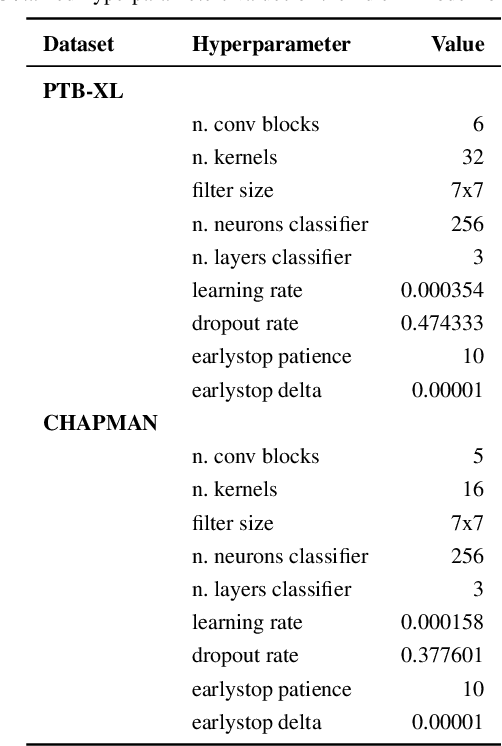

Abstract: Deep learning models need sufficient data to find the hidden patterns in it. Generative modeling aims to learn the data distribution, which allows us to sample more data and augment the original dataset. Physiological data, and electrocardiogram (ECG) data in particular, is sensitive and expensive to collect, so generative models can be used to enlarge existing datasets and improve downstream tasks, in our case the classification of heart rhythm. In this work, we explore the usefulness of synthetic data generated with different deep generative models, namely Diffweave, Time-Diffusion and Time-VQVAE, for obtaining better classification results on two open-source multivariate ECG datasets. We also investigate the effects of transfer learning by fine-tuning a synthetically pre-trained model with progressively increasing proportions of real data. We conclude that, although the synthetic samples resemble the real ones, the classification improvement from simply augmenting the real dataset is barely noticeable on the individual datasets; when both datasets are merged, however, the classifiers improve across all metrics when synthetic samples are used as augmentation data. In the fine-tuning results, the Time-VQVAE generative model proved superior to the others, but not powerful enough to achieve results close to those of a classifier trained on real data only. In addition, methods and metrics for measuring the closeness between synthetic and real data were explored as a by-product of the main research questions of this study.

Healthy Twitter discussions? Time will tell

Mar 21, 2022

Abstract: Studying misinformation and how to deal with unhealthy behaviours within online discussions has recently become an important field of research within social studies. With the rapid development of social media and the increasing amount of available information and sources, rigorous manual analysis of such discourses has become infeasible. Many approaches tackle the issue by studying the semantic and syntactic properties of discussions in a supervised manner, for example by applying natural language processing to a dataset labeled for abusive, fake or bot-generated content. Solutions that rely on a ground truth are limited to those domains in which a ground truth is available. Within the context of misinformation, however, it may be difficult or even impossible to assign labels to instances. In this context, we consider the use of temporal dynamic patterns as an indicator of discussion health. Working in a domain for which ground truth was unavailable at the time (early COVID-19 pandemic discussions), we explore the characterization of discussions based on the volume and time of contributions. First, we explore the types of discussions in an unsupervised manner, and then characterize these types using the concept of ephemerality, which we formalize. Finally, we discuss the potential use of our ephemerality definition for labeling online discourses based on how desirable, healthy and constructive they are.
