Jacob R. Stevens

X-Former: In-Memory Acceleration of Transformers

Mar 13, 2023

Abstract: Transformers have achieved great success in a wide variety of natural language processing (NLP) tasks due to the attention mechanism, which assigns an importance score to every word relative to the other words in a sequence. However, these models are very large, often reaching hundreds of billions of parameters, and therefore require a large number of DRAM accesses. Hence, traditional deep neural network (DNN) accelerators such as GPUs and TPUs face limitations in processing Transformers efficiently. In-memory accelerators based on non-volatile memory (NVM) promise to be an effective solution to this challenge, since they provide high storage density while performing massively parallel matrix-vector multiplications (MVMs) within memory arrays. However, attention score computations, which are frequently used in Transformers (unlike CNNs and RNNs), require MVMs where both operands change dynamically for each input. As a result, conventional NVM-based accelerators incur high write latency and write energy when used for Transformers, and further suffer from the low endurance of most NVM technologies. To address these challenges, we present X-Former, a hybrid in-memory hardware accelerator that consists of both NVM and CMOS processing elements to execute Transformer workloads efficiently. To improve the hardware utilization of X-Former, we also propose a sequence blocking dataflow, which overlaps the computations of the two processing elements and reduces execution time. Across several benchmarks, we show that X-Former achieves up to 85x and 7.5x improvements in latency and energy over an NVIDIA GeForce GTX 1060 GPU and up to 10.7x and 4.6x improvements in latency and energy over a state-of-the-art in-memory NVM accelerator.
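The distinction the abstract draws between static and dynamic operands can be illustrated with a small sketch. This is plain scaled dot-product attention in pure Python, not the X-Former design itself; the weight matrices `W_Q` and `W_K` and the helper names are hypothetical values chosen only for illustration. The projection weights stay fixed across inputs (so they could be programmed into a memory array once), whereas the key matrix used to compute attention scores is rebuilt from every new input sequence.

```python
import math

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

# Static operands: projection weights are fixed after training.
# (Hypothetical 2x2 values, for illustration only.)
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.5], [0.5, -0.5]]

def project(tokens):
    """Static-weight MVMs: only the input vector changes per token."""
    queries = [matvec(W_Q, t) for t in tokens]
    keys = [matvec(W_K, t) for t in tokens]
    return queries, keys

def attention_scores(tokens):
    """Dynamic MVMs: both the key matrix and the query depend on the input."""
    queries, keys = project(tokens)
    scale = math.sqrt(len(keys[0]))
    return [softmax([s / scale for s in matvec(keys, q)]) for q in queries]

sequence_a = [[1.0, 0.0], [0.0, 1.0]]
sequence_b = [[2.0, 1.0], [1.0, 3.0]]
scores_a = attention_scores(sequence_a)
_, keys_a = project(sequence_a)
_, keys_b = project(sequence_b)
```

Here `keys_a` and `keys_b` differ because they are derived from different inputs, which is why an accelerator that stores one MVM operand in NVM would have to rewrite that operand for each sequence.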

Optimizing Transformers with Approximate Computing for Faster, Smaller and more Accurate NLP Models

Oct 07, 2020

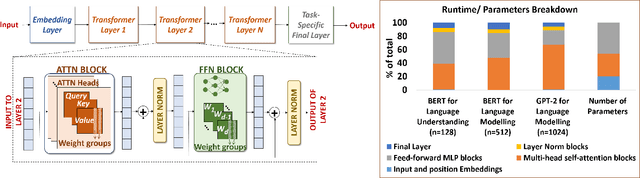

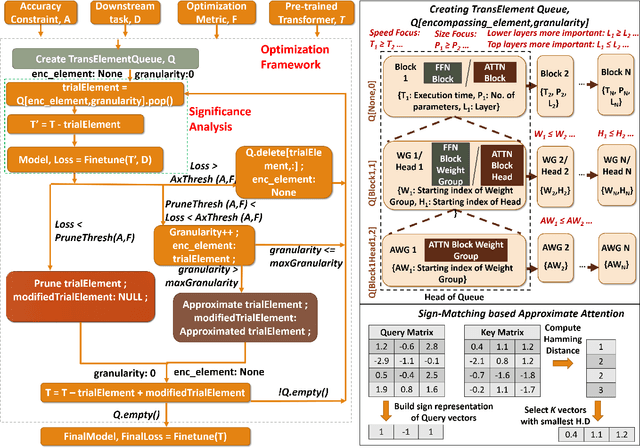

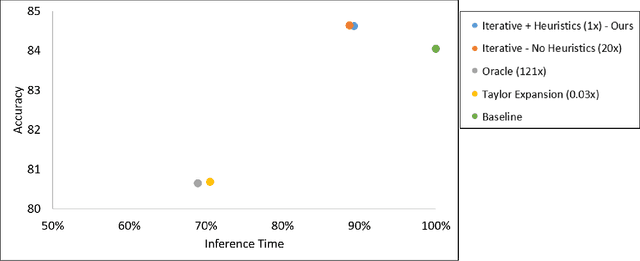

Abstract: Transformer models have garnered a lot of interest in recent years by delivering state-of-the-art performance in a range of natural language processing (NLP) tasks. However, these models can have over a hundred billion parameters, presenting very high computational and memory requirements. We address this challenge through Approximate Computing, specifically targeting the use of Transformers in NLP tasks. Transformers are typically pre-trained and subsequently specialized for specific tasks through transfer learning. Based on the observation that pre-trained Transformers are often over-parameterized for several downstream NLP tasks, we propose a framework to create smaller, faster and in some cases more accurate models. The cornerstones of the framework are a Significance Analysis (SA) method that identifies components in a pre-trained Transformer that are less significant for a given task, and techniques to approximate the less significant components. Our approximations include pruning of blocks, attention heads and weight groups, quantization of less significant weights, and a low-complexity sign-matching based attention mechanism. Our framework can be adapted to produce models that are faster, smaller and/or more accurate, depending on the user's constraints. We apply our framework to seven Transformer models, including optimized models like DistilBERT and Q8BERT, and three downstream tasks. We demonstrate that our framework produces models that are up to 4x faster and up to 14x smaller (with less than 0.5% relative accuracy degradation), or up to 5.5% more accurate with simultaneous improvements of up to 9.83x in model size or 2.94x in speed.
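The idea of approximating only the less significant components can be sketched as follows. This is a rough illustration under stated assumptions, not the paper's Significance Analysis or its quantization scheme: the significance scores and the threshold are hypothetical inputs, and the approximation used is generic symmetric 8-bit quantization.

```python
def quantize_int8(weights):
    """Generic symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]

def approximate_groups(groups, significance, threshold):
    """Quantize only weight groups whose (hypothetical) significance
    score falls below the threshold; keep the rest at full precision."""
    result = []
    for weights, score in zip(groups, significance):
        if score < threshold:
            quantized, scale = quantize_int8(weights)
            result.append(dequantize(quantized, scale))
        else:
            result.append(list(weights))
    return result

# Hypothetical weight groups and significance scores, for illustration only.
groups = [[0.5, -1.0, 0.25, 0.0], [0.3, 0.7, -0.2, 0.1]]
significance = [0.1, 0.9]
approximated = approximate_groups(groups, significance, threshold=0.5)
codes, step = quantize_int8(groups[0])
```

In this sketch the first group is stored as 8-bit codes with a rounding error of at most half a quantization step per weight, while the second group is left untouched because its score exceeds the threshold.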
