Jérôme Tubiana

TAU-CS

'Place-cell' emergence and learning of invariant data with restricted Boltzmann machines: breaking and dynamical restoration of continuous symmetries in the weight space

Dec 30, 2019

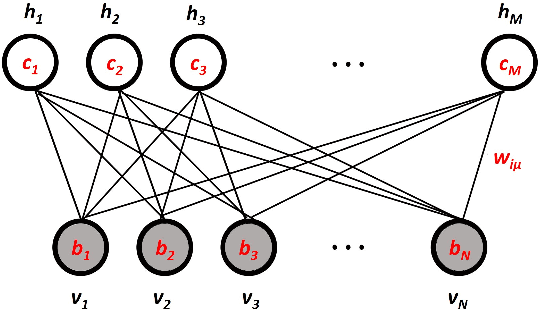

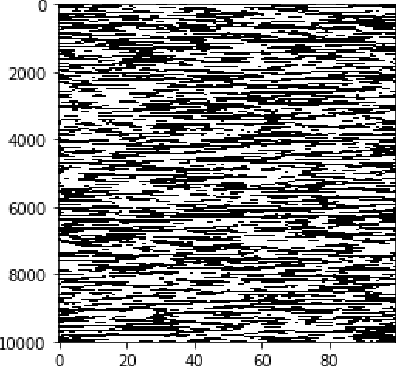

Abstract:Distributions of data or sensory stimuli often enjoy underlying invariances. How and to what extent those symmetries are captured by unsupervised learning methods is a relevant question in machine learning and in computational neuroscience. We study here, through a combination of numerical and analytical tools, the learning dynamics of Restricted Boltzmann Machines (RBM), a neural network paradigm for representation learning. As learning proceeds from a random configuration of the network weights, we show the existence of, and characterize a symmetry-breaking phenomenon, in which the latent variables acquire receptive fields focusing on limited parts of the invariant manifold supporting the data. The symmetry is restored at large learning times through the diffusion of the receptive field over the invariant manifold; hence, the RBM effectively spans a continuous attractor in the space of network weights. This symmetry-breaking phenomenon takes place only if the amount of data available for training exceeds some critical value, depending on the network size and the intensity of symmetry-induced correlations in the data; below this 'retarded-learning' threshold, the network weights are essentially noisy and overfit the data.

Learning Compositional Representations of Interacting Systems with Restricted Boltzmann Machines: Comparative Study of Lattice Proteins

Feb 18, 2019Abstract:A Restricted Boltzmann Machine (RBM) is an unsupervised machine-learning bipartite graphical model that jointly learns a probability distribution over data and extracts their relevant statistical features. As such, RBM were recently proposed for characterizing the patterns of coevolution between amino acids in protein sequences and for designing new sequences. Here, we study how the nature of the features learned by RBM changes with its defining parameters, such as the dimensionality of the representations (size of the hidden layer) and the sparsity of the features. We show that for adequate values of these parameters, RBM operate in a so-called compositional phase in which visible configurations sampled from the RBM are obtained by recombining these features. We then compare the performance of RBM with other standard representation learning algorithms, including Principal or Independent Component Analysis, autoencoders (AE), variational auto-encoders (VAE), and their sparse variants. We show that RBM, due to the stochastic mapping between data configurations and representations, better capture the underlying interactions in the system and are significantly more robust with respect to sample size than deterministic methods such as PCA or ICA. In addition, this stochastic mapping is not prescribed a priori as in VAE, but learned from data, which allows RBM to show good performance even with shallow architectures. All numerical results are illustrated on synthetic lattice-protein data, that share similar statistical features with real protein sequences, and for which ground-truth interactions are known.

Emergence of Compositional Representations in Restricted Boltzmann Machines

Mar 02, 2017

Abstract:Extracting automatically the complex set of features composing real high-dimensional data is crucial for achieving high performance in machine--learning tasks. Restricted Boltzmann Machines (RBM) are empirically known to be efficient for this purpose, and to be able to generate distributed and graded representations of the data. We characterize the structural conditions (sparsity of the weights, low effective temperature, nonlinearities in the activation functions of hidden units, and adaptation of fields maintaining the activity in the visible layer) allowing RBM to operate in such a compositional phase. Evidence is provided by the replica analysis of an adequate statistical ensemble of random RBMs and by RBM trained on the handwritten digits dataset MNIST.

* Supplementary material available at the authors' webpage

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge