Indu Navar

Remote Inference of Cognitive Scores in ALS Patients Using a Picture Description

Sep 13, 2023

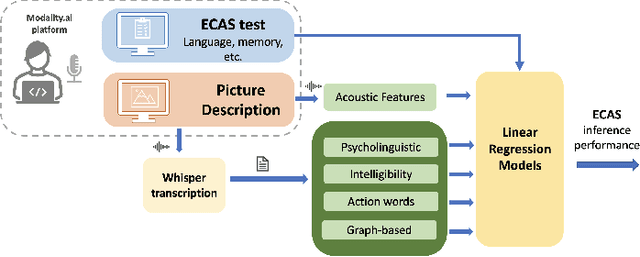

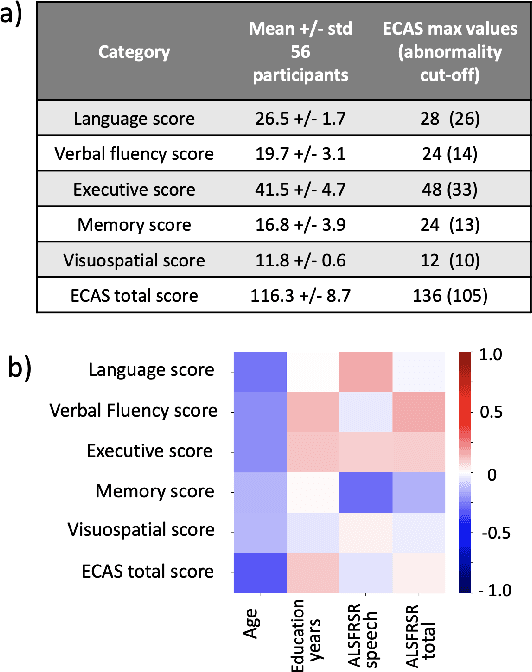

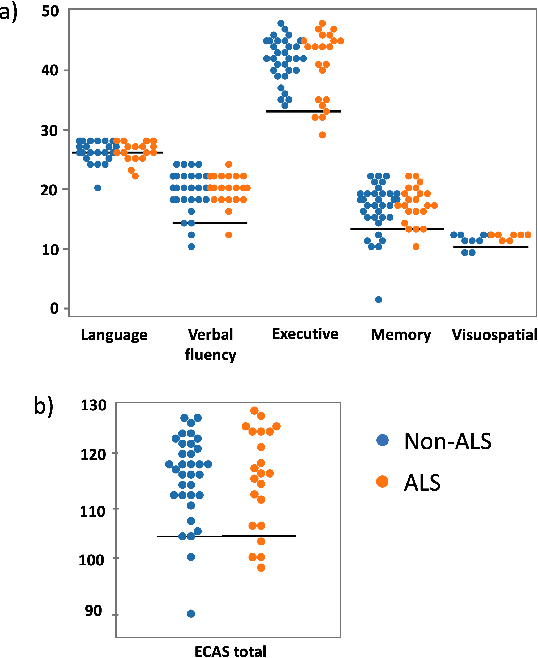

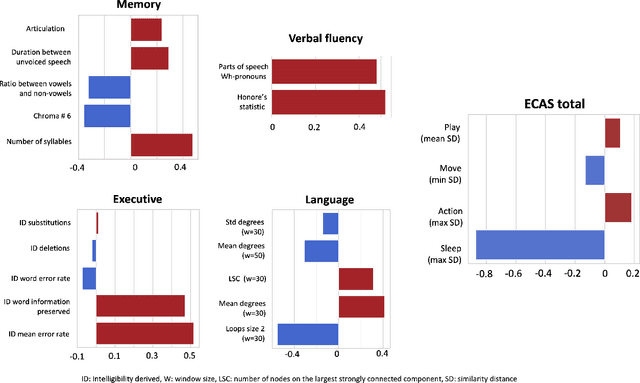

Abstract: Amyotrophic lateral sclerosis (ALS) is a fatal disease that affects not only movement, speech, and breathing but also cognition. Recent studies have used language analysis techniques to detect ALS and to infer scales for monitoring functional progression. In this paper, we focus on another important aspect, cognitive impairment, which affects 35-50% of the ALS population. To reach the ALS population, which frequently exhibits mobility limitations, we implemented a digital version of the Edinburgh Cognitive and Behavioral ALS Screen (ECAS) test for the first time. This test, which is designed to measure cognitive impairment, was performed remotely by 56 participants from the EverythingALS Speech Study. As part of the study, participants (ALS and non-ALS) were asked each week to describe one picture, drawn from a pool of pictures with complex scenes, displayed on their computer at home. We analyze the descriptions recorded within +/- 60 days of the day the ECAS test was administered and extract different types of linguistic and acoustic features. We input those features into linear regression models to infer 5 ECAS sub-scores and the total score. Speech samples from the picture description are reliable enough to predict the ECAS sub-scores, achieving statistically significant Spearman correlation values between 0.32 and 0.51 for the model's performance using 10-fold cross-validation.
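The evaluation scheme named in the abstract (linear regression scored by Spearman correlation under 10-fold cross-validation) can be illustrated with a minimal sketch. It is not the authors' pipeline: the single synthetic feature, the synthetic scores, and the helper names (`average_ranks`, `fit_line`, `cross_val_predict`) are all assumptions made for illustration, and the data carry no clinical meaning.

```python
import random
from statistics import mean

def average_ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))

def fit_line(x, y):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    mx, my = mean(x), mean(y)
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx

def cross_val_predict(x, y, k=10, seed=0):
    """Predict each sample from a model fitted on the other k-1 folds."""
    idx = list(range(len(x)))
    random.Random(seed).shuffle(idx)
    preds = [None] * len(x)
    for fold in range(k):
        test = idx[fold::k]
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        slope, intercept = fit_line([x[i] for i in train],
                                    [y[i] for i in train])
        for i in test:
            preds[i] = slope * x[i] + intercept
    return preds

# Synthetic stand-ins: 56 samples, one hypothetical speech feature.
rng = random.Random(42)
feature = [rng.uniform(0, 10) for _ in range(56)]
score = [2.0 * f + rng.gauss(0, 3) for f in feature]

predicted = cross_val_predict(feature, score, k=10)
rho = spearman(score, predicted)
print(f"Spearman rho between true and held-out predictions: {rho:.2f}")
```

Pooling the held-out predictions from all folds before computing a single correlation is one common convention; averaging per-fold correlations is another, and the abstract does not say which was used.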

Investigating the Utility of Multimodal Conversational Technology and Audiovisual Analytic Measures for the Assessment and Monitoring of Amyotrophic Lateral Sclerosis at Scale

Apr 15, 2021

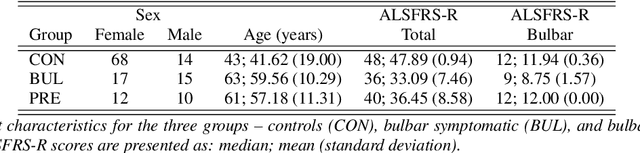

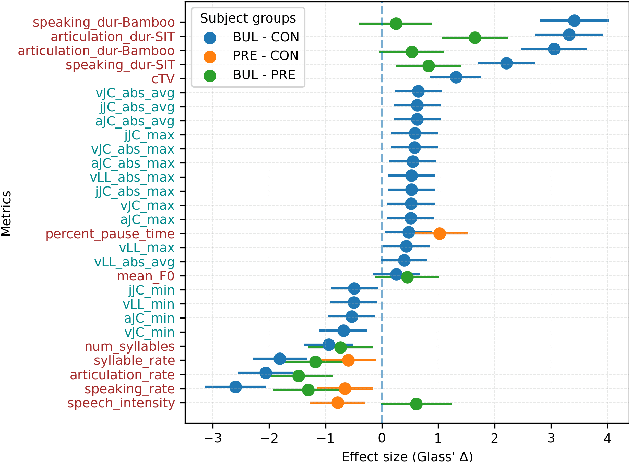

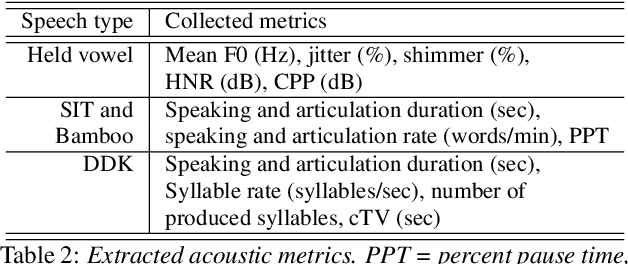

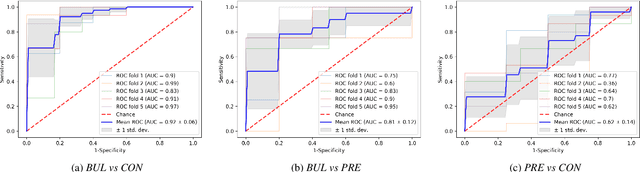

Abstract: We propose a cloud-based multimodal dialog platform for the remote assessment and monitoring of Amyotrophic Lateral Sclerosis (ALS) at scale. This paper presents our vision, technology setup, and an initial investigation of the efficacy of the various acoustic and visual speech metrics automatically extracted by the platform. A total of 82 healthy controls and 54 people with ALS (pALS) were instructed to interact with the platform and completed a battery of speaking tasks designed to probe the acoustic, articulatory, phonatory, and respiratory aspects of their speech. We find that multiple acoustic (rate, duration, voicing) and visual (higher order statistics of the jaw and lip) speech metrics show statistically significant differences among controls, bulbar symptomatic patients, and bulbar pre-symptomatic patients. We report on the sensitivity and specificity of these metrics using five-fold cross-validation. We further conduct a LASSO-LARS regression analysis to uncover the relative contributions of various acoustic and visual features in predicting the severity of patients' ALS (as measured by their self-reported ALSFRS-R scores). Our results provide encouraging evidence of the utility of automatically extracted audiovisual analytics for scalable remote patient assessment and monitoring in ALS.
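As a rough illustration of reporting sensitivity and specificity under five-fold cross-validation, the sketch below classifies samples by thresholding a single metric. The midpoint-threshold classifier, the synthetic metric values, and the helper names are assumptions introduced here; the abstract does not specify the classifier used, and the numbers are not taken from the paper.

```python
import random
from statistics import mean

def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)

def midpoint_threshold(values, labels):
    """Threshold halfway between class means, plus which side is positive."""
    pos = mean([v for v, l in zip(values, labels) if l == 1])
    neg = mean([v for v, l in zip(values, labels) if l == 0])
    return (pos + neg) / 2, pos > neg

def cross_val_classify(values, labels, k=5, seed=0):
    """Label each sample using a threshold learned on the other folds."""
    idx = list(range(len(values)))
    random.Random(seed).shuffle(idx)
    preds = [None] * len(values)
    for fold in range(k):
        test = idx[fold::k]
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        thr, pos_is_higher = midpoint_threshold(
            [values[i] for i in train], [labels[i] for i in train])
        for i in test:
            above = values[i] > thr
            preds[i] = int(above == pos_is_higher)
    return preds

# Synthetic stand-ins: 82 controls (label 0) and 54 positives (label 1),
# with one hypothetical metric in arbitrary units.
rng = random.Random(7)
metric = ([rng.gauss(4.5, 0.6) for _ in range(82)]
          + [rng.gauss(3.5, 0.6) for _ in range(54)])
group = [0] * 82 + [1] * 54

predictions = cross_val_classify(metric, group, k=5)
sens, spec = sensitivity_specificity(group, predictions)
print(f"sensitivity={sens:.2f} specificity={spec:.2f}")
```

Learning the threshold only on the training folds keeps the held-out estimates from being optimistically biased, which is the point of cross-validating the two rates.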
