Hua Chang

MambaOVSR: Multiscale Fusion with Global Motion Modeling for Chinese Opera Video Super-Resolution

Nov 09, 2025

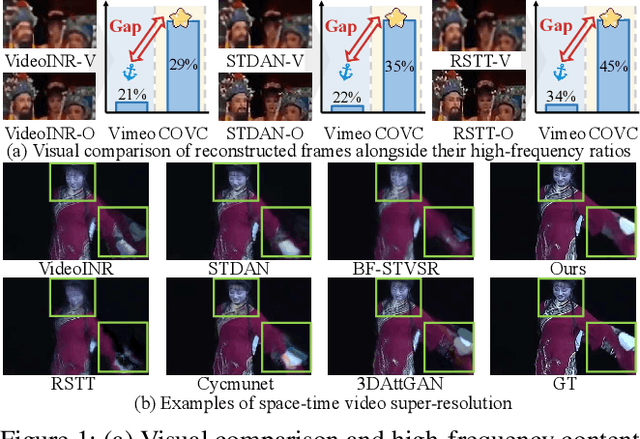

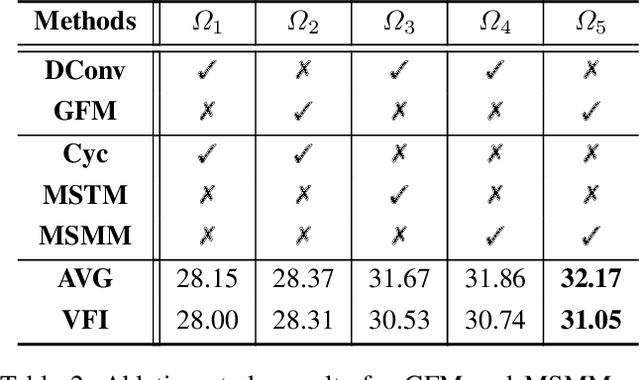

Abstract: Chinese opera is celebrated for preserving classical art. However, limitations of early filming equipment have degraded videos of last-century performances by renowned artists (e.g., low frame rates and low resolution), hindering archival efforts. Although space-time video super-resolution (STVSR) has advanced significantly, applying it directly to opera videos remains challenging. The scarcity of datasets impedes the recovery of high-frequency details, and existing STVSR methods lack global modeling capabilities, which compromises visual quality when handling the large motions characteristic of opera. To address these challenges, we pioneer a large-scale Chinese Opera Video Clip (COVC) dataset and propose a Mamba-based multiscale fusion network for space-time Opera Video Super-Resolution (MambaOVSR). Specifically, MambaOVSR involves three novel components: the Global Fusion Module (GFM) for motion modeling through a multiscale alternating scanning mechanism, the Multiscale Synergistic Mamba Module (MSMM) for alignment across different sequence lengths, and the MambaVR block, which resolves feature artifacts and positional information loss during alignment. Experimental results on the COVC dataset show that MambaOVSR significantly outperforms the SOTA STVSR method by an average of 1.86 dB in terms of PSNR. The dataset and code will be publicly released.
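To give the reported 1.86 dB PSNR gain some intuition, the following is a minimal sketch of the standard PSNR definition, not the paper's evaluation code. The function names `psnr` and `mse_ratio_for_gain` and the 8-bit peak value of 255 are assumptions made for illustration; the abstract does not state the evaluation settings (color space, border cropping, per-frame averaging).

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], restored: Sequence[float],
         max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences.

    max_value=255.0 assumes 8-bit pixels (an assumption, not from the abstract).
    """
    if len(reference) != len(restored) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixels.
    mse = sum((r - s) ** 2 for r, s in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals have unbounded PSNR
    return 10.0 * math.log10(max_value ** 2 / mse)


def mse_ratio_for_gain(gain_db: float) -> float:
    """Factor by which MSE shrinks when PSNR rises by gain_db (same peak value)."""
    return 10.0 ** (gain_db / 10.0)
```

Under this definition, a 1.86 dB gain on a single comparison corresponds to the mean squared error shrinking by a factor of roughly 1.5. Because the abstract reports an average over the dataset, this is only an order-of-magnitude reading, not an exact statement about average error.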

Mix-Modality Person Re-Identification: A New and Practical Paradigm

Dec 06, 2024

Abstract: Current visible-infrared cross-modality person re-identification research has focused only on the bi-modality mutual retrieval paradigm; we propose a new and more practical mix-modality retrieval paradigm. Existing visible-infrared person re-identification (VI-ReID) methods have achieved some results in the bi-modality mutual retrieval paradigm by learning the correspondence between visible and infrared modalities. However, significant performance degradation occurs due to the modality confusion problem when these methods are applied to the new mix-modality paradigm. Therefore, this paper proposes a Mix-Modality person re-identification (MM-ReID) task, explores the influence of the modality mixing ratio on performance, and constructs mix-modality test sets for existing datasets according to the new mix-modality testing paradigm. To solve the modality confusion problem in MM-ReID, we propose a Cross-Identity Discrimination Harmonization Loss (CIDHL) that adjusts the distribution of samples in the hyperspherical feature space, pulling the centers of samples with the same identity closer and pushing the centers of samples with different identities apart, while aggregating samples with the same modality and the same identity. Furthermore, we propose a Modality Bridge Similarity Optimization Strategy (MBSOS) to optimize the cross-modality similarity between the query and queried samples with the help of a similar bridge sample in the gallery. Extensive experiments show that adding our CIDHL and MBSOS yields a general improvement over the original performance of existing cross-modality methods on MM-ReID.
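The idea of a test set with a controllable modality mixing ratio can be illustrated with a short sketch. This is a hypothetical construction, not the paper's protocol: the function `build_mixed_gallery`, the `(identity, modality)` sample representation, and the random-sampling rule are all assumptions made for illustration, since the abstract does not specify how the mix-modality test sets are built.

```python
import random
from typing import List, Tuple

# Hypothetical sample record: (identity label, modality name).
Sample = Tuple[str, str]


def build_mixed_gallery(visible: List[Sample], infrared: List[Sample],
                        size: int, visible_ratio: float,
                        seed: int = 0) -> List[Sample]:
    """Draw a gallery of `size` samples containing both modalities.

    visible_ratio is the fraction of gallery samples taken from the
    visible pool; the remainder comes from the infrared pool.
    """
    if not 0.0 <= visible_ratio <= 1.0:
        raise ValueError("visible_ratio must lie in [0, 1]")
    n_visible = round(size * visible_ratio)
    n_infrared = size - n_visible
    if n_visible > len(visible) or n_infrared > len(infrared):
        raise ValueError("not enough samples in one of the modality pools")
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    gallery = rng.sample(visible, n_visible) + rng.sample(infrared, n_infrared)
    rng.shuffle(gallery)  # interleave modalities so order carries no signal
    return gallery
```

Sweeping `visible_ratio` from 0 to 1 in such a setup is one way to study how retrieval performance changes with the mixing ratio; the two endpoints give single-modality galleries.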
