Hossein Bobarshad

HyperTune: Dynamic Hyperparameter Tuning For Efficient Distribution of DNN Training Over Heterogeneous Systems

Jul 16, 2020

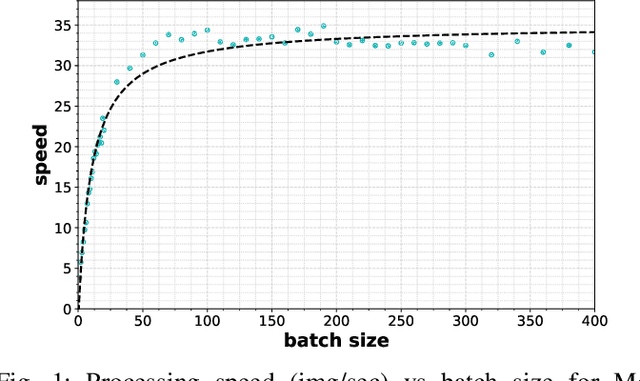

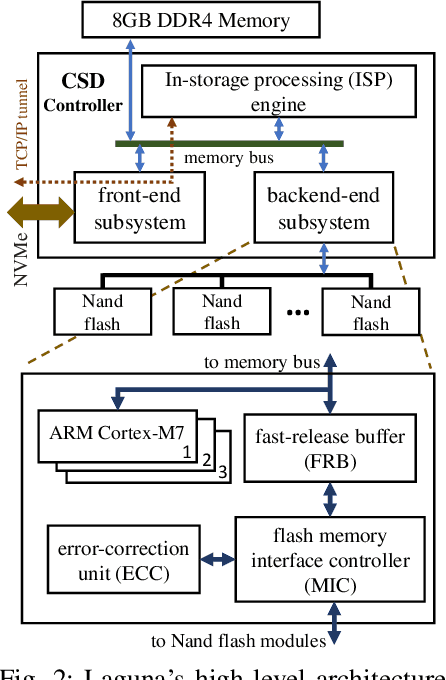

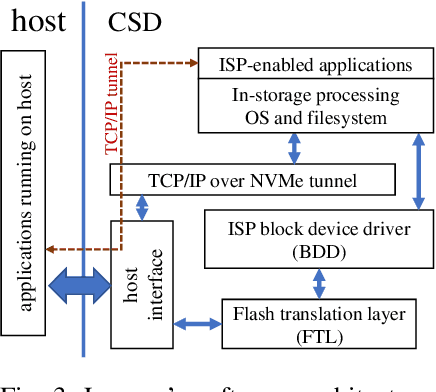

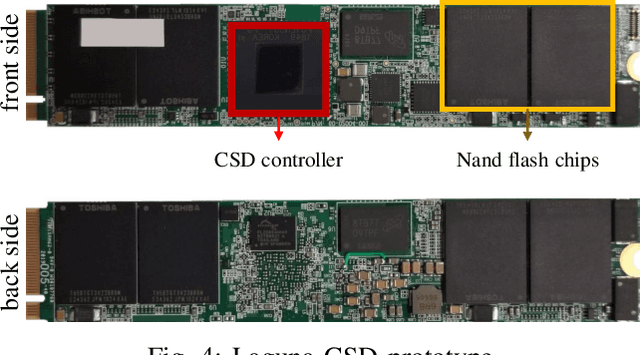

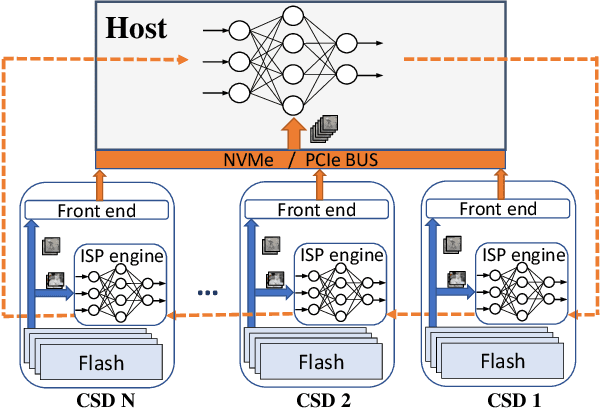

Abstract: Distributed training is a novel approach to accelerate Deep Neural Network (DNN) training, but common training libraries fall short of addressing distributed cases with heterogeneous processors or cases where the processing nodes get interrupted by other workloads. This paper describes distributed training of DNNs on computational storage devices (CSDs), which are NAND flash-based, high-capacity data storage devices with internal processing engines. A CSD-based distributed architecture incorporates the advantages of federated learning in terms of performance scalability, resiliency, and data privacy by eliminating unnecessary data movement between the storage device and the host processor. The paper also describes Stannis, a DNN training framework that improves on the shortcomings of existing distributed training frameworks by dynamically tuning the training hyperparameters in heterogeneous systems to maintain the maximum overall processing speed, in terms of processed images per second, and energy efficiency. Experimental results on image classification training benchmarks show up to 3.1x improvement in performance and 2.45x reduction in energy consumption when using Stannis plus CSD compared to generic systems.

STANNIS: Low-Power Acceleration of Deep Neural Network Training Using Computational Storage

Feb 19, 2020

Abstract: This paper proposes a framework for distributed, in-storage training of neural networks on clusters of computational storage devices. Such devices not only contain hardware accelerators but also eliminate data movement between the host and storage, resulting in both improved performance and power savings. More importantly, this in-storage processing style of training ensures that private data never leaves the storage while fully controlling the sharing of public data. Experimental results show up to 2.7x speedup and 69% reduction in energy consumption, with no significant loss in accuracy.

Memory Enriched Big Bang Big Crunch Optimization Algorithm for Data Clustering

Mar 08, 2017

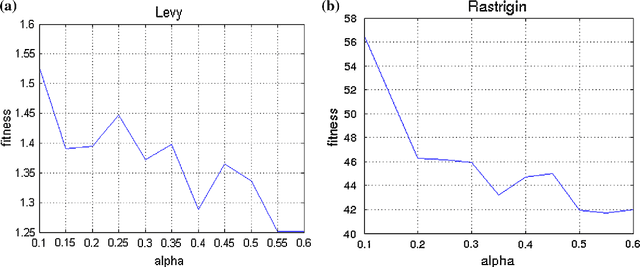

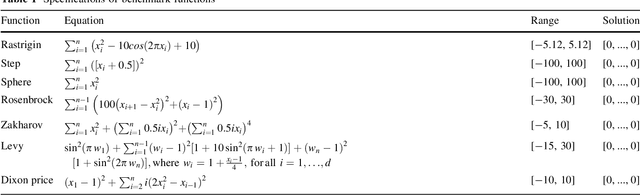

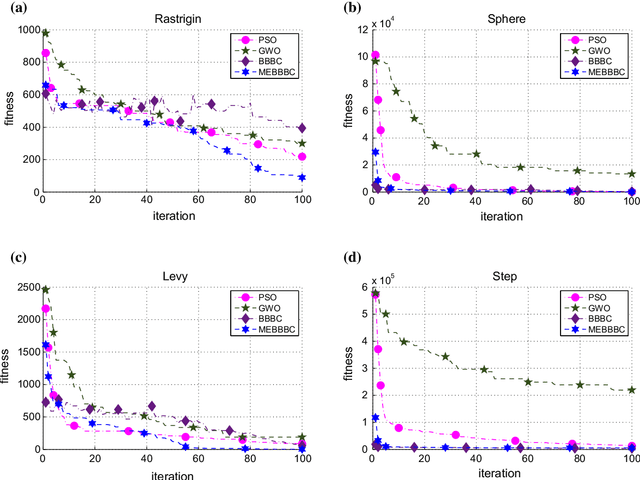

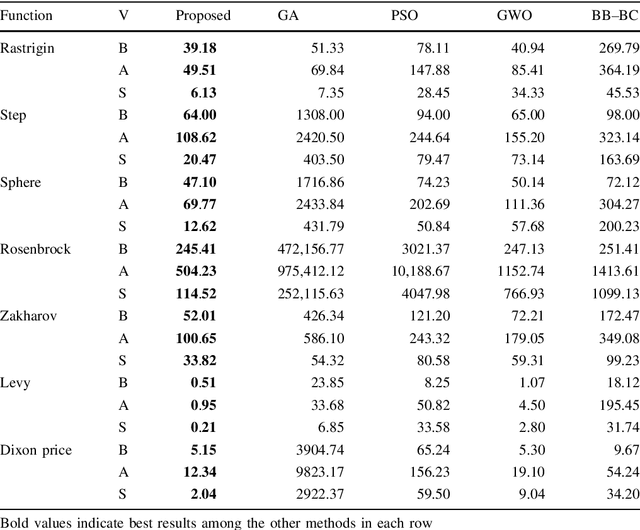

Abstract: Cluster analysis plays an important role in the decision-making process of many knowledge-based systems. There exists a wide variety of approaches for clustering applications, including heuristic techniques, probabilistic models, and traditional hierarchical algorithms. In this paper, a novel heuristic approach based on the big bang-big crunch algorithm is proposed for clustering problems. The proposed method not only takes advantage of its heuristic nature to alleviate the shortcomings of typical clustering algorithms such as k-means, but also benefits from a memory-based scheme in comparison with similar heuristic techniques. Furthermore, the performance of the proposed algorithm is investigated on several benchmark test functions as well as on well-known datasets. The experimental results show the significant superiority of the proposed method over similar algorithms.

* 17 pages, 3 figures, 8 tables

IEDC: An Integrated Approach for Overlapping and Non-overlapping Community Detection

Feb 13, 2017

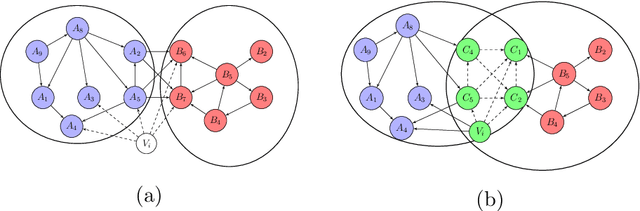

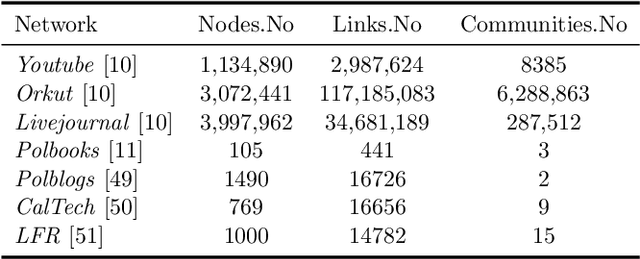

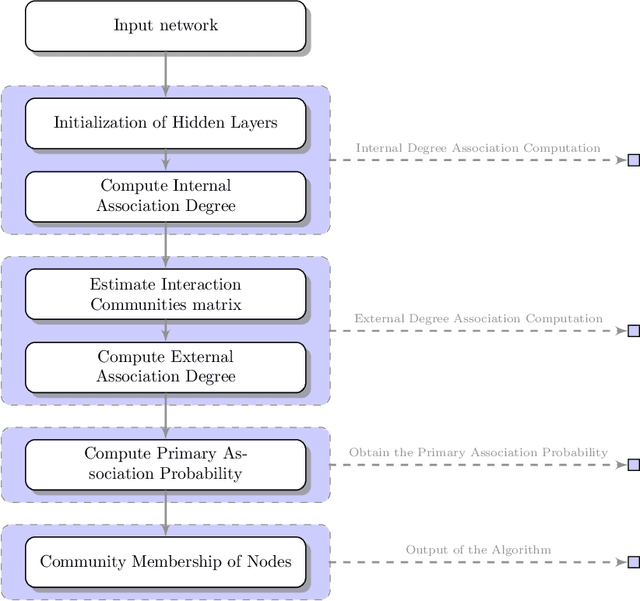

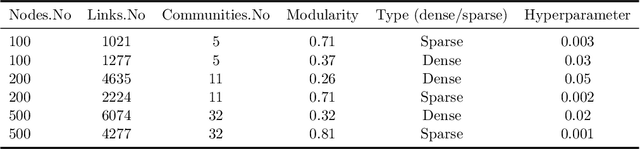

Abstract: Community detection is a task of fundamental importance in social network analysis that can be used in a variety of knowledge-based domains. While there exist many works on community detection based on connectivity structures, they are limited to considering either overlapping or non-overlapping communities, but not both. In this work, we propose a novel approach for general community detection through an integrated framework that extracts both overlapping and non-overlapping community structures without assuming prior structural connectivity on networks. Our general framework is based on a primary node-based criterion, which consists of the internal association degree along with the external association degree. The proposed method is evaluated through extensive simulation experiments and on several benchmark real network datasets. The experimental results show that the proposed method outperforms earlier state-of-the-art algorithms on well-known evaluation criteria.
