Hideaki Shimazaki

Enhancing diffusion models with Gaussianization preprocessing

Dec 24, 2025

Abstract:Diffusion models are a class of generative models that have demonstrated remarkable success in tasks such as image generation. However, one of the bottlenecks of these models is slow sampling due to the delay before the onset of trajectory bifurcation, at which point substantial reconstruction begins. This issue degrades generation quality, especially in the early stages. Our primary objective is to mitigate bifurcation-related issues by preprocessing the training data to enhance reconstruction quality, particularly for small-scale network architectures. Specifically, we propose applying Gaussianization preprocessing to the training data to make the target distribution more closely resemble an independent Gaussian distribution, which serves as the initial density of the reconstruction process. This preprocessing step simplifies the model's task of learning the target distribution, thereby improving generation quality even in the early stages of reconstruction with small networks. The proposed method is, in principle, applicable to a broad range of generative tasks, enabling more stable and efficient sampling processes.
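The abstract does not say which Gaussianization transform is used, so the following is only a minimal sketch of one common variant, rank-based marginal Gaussianization, in which each value of a single feature is mapped to the standard normal quantile of its empirical rank. The function name `gaussianize_marginal` and the exponential toy data are assumptions made for illustration, not the authors' implementation.

```python
import random
from statistics import NormalDist


def gaussianize_marginal(values):
    """Map each value to the standard normal quantile of its empirical rank.

    Illustrative assumption: the paper's actual transform may differ.
    """
    n = len(values)
    std_normal = NormalDist(0.0, 1.0)
    # Indices of the values in ascending order.
    order = sorted(range(n), key=lambda i: values[i])
    out = [0.0] * n
    for rank, idx in enumerate(order):
        # (rank + 0.5) / n keeps the probability strictly inside (0, 1).
        out[idx] = std_normal.inv_cdf((rank + 0.5) / n)
    return out


# Toy data: a strongly skewed (exponential) feature.
random.seed(0)
skewed = [random.expovariate(1.0) for _ in range(1000)]
gauss = gaussianize_marginal(skewed)

mean = sum(gauss) / len(gauss)
var = sum((g - mean) ** 2 for g in gauss) / len(gauss)
print(f"mean={mean:.4f}, variance={var:.4f}")
```

The transform is monotone, so the ordering of the samples is preserved, and it can be inverted after sampling by mapping generated values back through the stored empirical quantiles.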

Revisiting Gradient Descent: A Dual-Weight Method for Improved Learning

Mar 15, 2025

Abstract:We introduce a novel framework for learning in neural networks by decomposing each neuron's weight vector into two distinct parts, $W_1$ and $W_2$, thereby modeling contrastive information directly at the neuron level. Traditional gradient descent stores both positive (target) and negative (non-target) feature information in a single weight vector, often obscuring fine-grained distinctions. Our approach, by contrast, maintains separate updates for target and non-target features, ultimately forming a single effective weight $W = W_1 - W_2$ that is more robust to noise and class imbalance. Experimental results on both regression (California Housing, Wine Quality) and classification (MNIST, Fashion-MNIST, CIFAR-10) tasks suggest that this decomposition enhances generalization and resists overfitting, especially when training data are sparse or noisy. Crucially, the inference complexity remains the same as in the standard $WX + \text{bias}$ setup, offering a practical solution for improved learning without additional inference-time overhead.
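The claim about inference cost can be illustrated with a small sketch. It shows only the collapse step $W = W_1 - W_2$ followed by the usual $WX + \text{bias}$ computation; the update rules for $W_1$ and $W_2$ are not given in the abstract and are not reproduced here. The helper names and the numeric values are hypothetical.

```python
def collapse(w1, w2):
    """Form the effective weight W = W1 - W2 once, after training."""
    return [a - b for a, b in zip(w1, w2)]


def neuron_output(w, x, bias):
    """Standard single-neuron pre-activation: W . X + bias."""
    return sum(wi * xi for wi, xi in zip(w, x)) + bias


# Hypothetical trained parts of one neuron's weights.
w1 = [0.5, 1.0, 2.0]    # target (positive) part
w2 = [0.25, 1.5, 0.5]   # non-target (negative) part
x = [2.0, 4.0, 1.0]
bias = 0.5

w = collapse(w1, w2)
y_collapsed = neuron_output(w, x, bias)

# Same result as evaluating the two parts separately, at twice the cost.
y_two_part = neuron_output(w1, x, 0.0) - neuron_output(w2, x, 0.0) + bias

print(w, y_collapsed, y_two_part)
```

Because the dot product is linear, collapsing the two parts before deployment gives the same output as evaluating them separately, so inference needs only one weight vector per neuron.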

Explosive neural networks via higher-order interactions in curved statistical manifolds

Aug 05, 2024

Abstract:Higher-order interactions underlie complex phenomena in systems such as biological and artificial neural networks, but their study is challenging due to the lack of tractable standard models. By leveraging the maximum entropy principle in curved statistical manifolds, here we introduce curved neural networks as a class of prototypical models for studying higher-order phenomena. Through exact mean-field descriptions, we show that these curved neural networks implement a self-regulating annealing process that can accelerate memory retrieval, leading to explosive order-disorder phase transitions with multi-stability and hysteresis effects. Moreover, by analytically exploring their memory capacity using the replica trick near ferromagnetic and spin-glass phase boundaries, we demonstrate that these networks enhance memory capacity over the classical associative-memory networks. Overall, the proposed framework provides parsimonious models amenable to analytical study, revealing novel higher-order phenomena in complex network systems.

A projected nonlinear state-space model for forecasting time series signals

Nov 22, 2023

Abstract:Learning and forecasting stochastic time series is essential in various scientific fields. However, despite the proposals of nonlinear filters and deep-learning methods, it remains challenging to capture nonlinear dynamics from a few noisy samples and predict future trajectories with uncertainty estimates while maintaining computational efficiency. Here, we propose a fast algorithm to learn and forecast nonlinear dynamics from noisy time series data. A key feature of the proposed model is kernel functions applied to projected lines, enabling fast and efficient capture of nonlinearities in the latent dynamics. Through empirical case studies and benchmarking, the model demonstrates its effectiveness in learning and forecasting complex nonlinear dynamics, offering a valuable tool for researchers and practitioners in time series analysis.

The principles of adaptation in organisms and machines II: Thermodynamics of the Bayesian brain

Jun 23, 2020

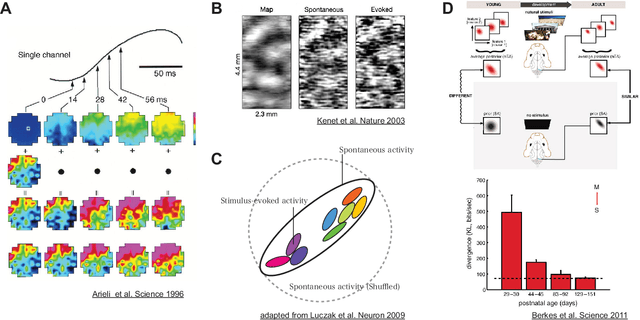

Abstract:This article reviews how organisms learn and recognize the world through the dynamics of neural networks from the perspective of Bayesian inference, and introduces a view on how such dynamics is described by the laws for the entropy of neural activity, a paradigm that we call thermodynamics of the Bayesian brain. The Bayesian brain hypothesis sees the stimulus-evoked activity of neurons as an act of constructing the Bayesian posterior distribution based on the generative model of the external world that an organism possesses. A closer look at the stimulus-evoked activity at early sensory cortices reveals that feedforward connections initially mediate the stimulus-response, which is later modulated by input from recurrent connections. Importantly, not the initial response, but the delayed modulation expresses animals' cognitive states such as awareness and attention regarding the stimulus. Using a simple generative model made of a spiking neural population, we reproduce the stimulus-evoked dynamics with the delayed feedback modulation as the process of Bayesian inference that integrates the stimulus evidence and prior knowledge with a time delay. We then introduce a thermodynamic view on this process based on the laws for the entropy of neural activity. This view elucidates that the process of Bayesian inference works as the recently proposed information-theoretic engine (neural engine, an analogue of a heat engine in thermodynamics), which allows us to quantify the perceptual capacity expressed in the delayed modulation in terms of entropy.

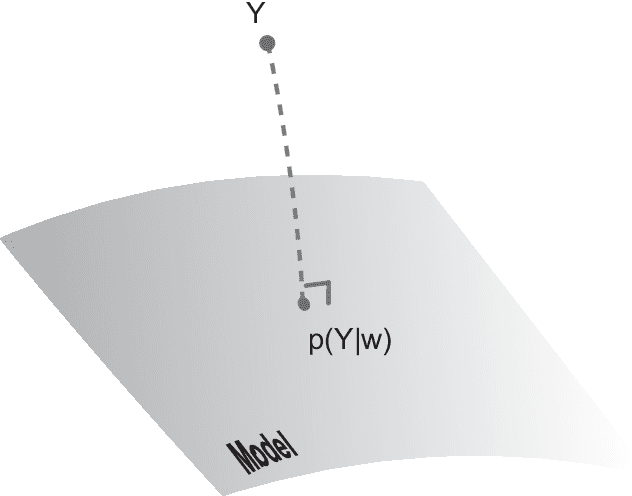

The principles of adaptation in organisms and machines I: machine learning, information theory, and thermodynamics

Feb 28, 2019

Abstract:How do organisms recognize their environment by acquiring knowledge about the world, and what actions do they take based on this knowledge? This article examines hypotheses about organisms' adaptation to the environment from machine learning, information-theoretic, and thermodynamic perspectives. We start with constructing a hierarchical model of the world as an internal model in the brain, and review standard machine learning methods to infer causes by approximately learning the model under the maximum likelihood principle. This in turn provides an overview of the free energy principle for an organism, a hypothesis to explain perception and action from the principle of least surprise. Treating this statistical learning as communication between the world and brain, learning is interpreted as a process to maximize information about the world. We investigate how the classical theories of perception such as the infomax principle relate to learning the hierarchical model. We then present an approach to recognition and learning based on thermodynamics, showing that adaptation by causal learning results in the second law of thermodynamics whereas inference dynamics that fuses observation with prior knowledge forms a thermodynamic process. These provide a unified view on the adaptation of organisms to the environment.

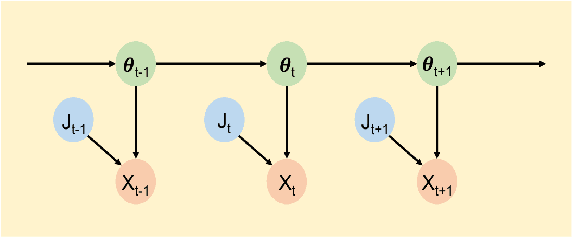

Online Estimation of Multiple Dynamic Graphs in Pattern Sequences

Jan 22, 2019

Abstract:Many time-series data including text, movies, and biological signals can be represented as sequences of correlated binary patterns. These patterns may be described by weighted combinations of a few dominant structures that underpin specific interactions among the binary elements. To extract the dominant correlation structures and their contributions to generating data in a time-dependent manner, we model the dynamics of binary patterns using the state-space model of an Ising-type network that is composed of multiple undirected graphs. We provide a sequential Bayes algorithm to estimate the dynamics of weights on the graphs while gaining the graph structures online. This model can uncover overlapping graphs underlying the data better than a traditional orthogonal decomposition method, and outperforms an original time-dependent full Ising model. We assess the performance of the method by simulated data, and demonstrate that spontaneous activity of cultured hippocampal neurons is represented by dynamics of multiple graphs.

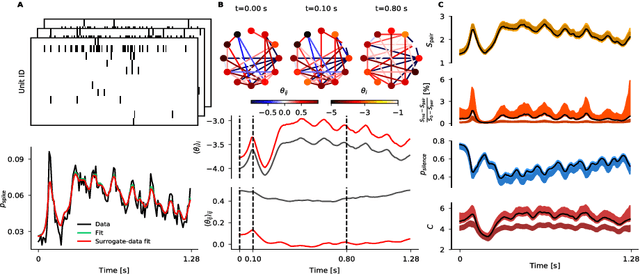

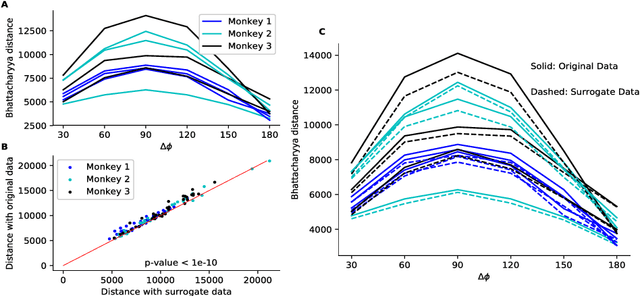

State-space analysis of an Ising model reveals contributions of pairwise interactions to sparseness, fluctuation, and stimulus coding of monkey V1 neurons

Jul 24, 2018

Abstract:In this study, we analyzed the activity of monkey V1 neurons responding to grating stimuli of different orientations using inference methods for a time-dependent Ising model. The method provides optimal estimation of time-dependent neural interactions with credible intervals according to the sequential Bayes estimation algorithm. Furthermore, it allows us to trace dynamics of macroscopic network properties such as entropy, sparseness, and fluctuation. Here we report that, in all examined stimulus conditions, pairwise interactions contribute to increasing sparseness and fluctuation. We then demonstrate that the orientation of the grating stimulus is in part encoded in the pairwise interactions of the neural populations. These results demonstrate the utility of the state-space Ising model in assessing contributions of neural interactions during stimulus processing.
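As a rough illustration of the kind of macroscopic quantity mentioned in this abstract, the sketch below builds a static pairwise Ising-type model over binary patterns $x_i \in \{0, 1\}$ and computes its entropy by exhaustive enumeration. It is a toy under stated assumptions: the parameter values are hypothetical, the model is not time-dependent, and the sequential Bayes estimation of the paper is not implemented.

```python
from itertools import product
from math import exp, log


def ising_distribution(theta1, theta2):
    """Exact distribution p(x) proportional to
    exp(sum_i theta1[i] x_i + sum_{i<j} theta2[(i, j)] x_i x_j)."""
    n = len(theta1)
    states = list(product([0, 1], repeat=n))
    weights = []
    for x in states:
        log_weight = sum(theta1[i] * x[i] for i in range(n))
        log_weight += sum(t * x[i] * x[j] for (i, j), t in theta2.items())
        weights.append(exp(log_weight))
    z = sum(weights)  # partition function
    return states, [w / z for w in weights]


def entropy(probs):
    """Shannon entropy in nats."""
    return -sum(p * log(p) for p in probs if p > 0)


# Hypothetical parameters for three neurons.
theta1 = [-1.0, -1.0, -1.0]                       # biases favoring silence
theta2 = {(0, 1): 0.5, (0, 2): 0.0, (1, 2): 0.5}  # pairwise couplings

states, probs = ising_distribution(theta1, theta2)
h_pairwise = entropy(probs)

# Reference: same biases with all couplings set to zero.
_, probs_indep = ising_distribution(theta1, {})
h_indep = entropy(probs_indep)

print(f"entropy with couplings: {h_pairwise:.4f} nats")
print(f"entropy without couplings: {h_indep:.4f} nats")
```

Comparing the two entropies is one simple way to see how pairwise terms change a population-level statistic; enumeration is feasible only for small populations, since the number of states grows as $2^N$.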

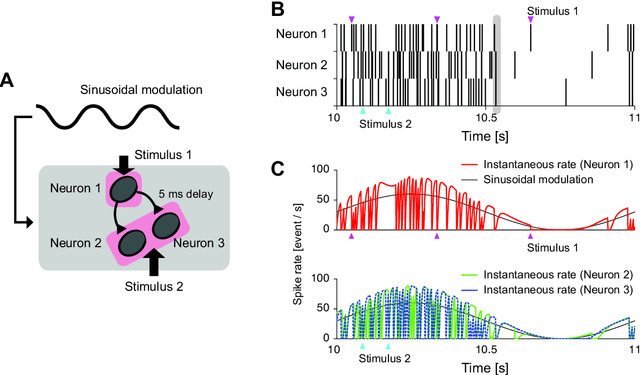

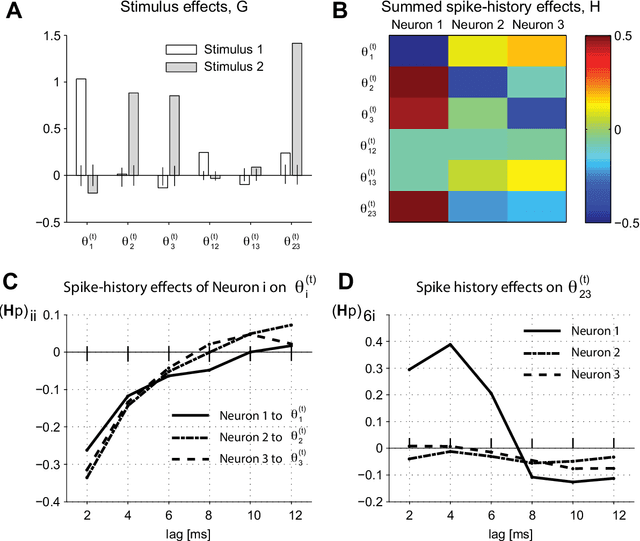

Single-trial estimation of stimulus and spike-history effects on time-varying ensemble spiking activity of multiple neurons: a simulation study

Dec 16, 2013

Abstract:Neurons in cortical circuits exhibit coordinated spiking activity, and can produce correlated synchronous spikes during behavior and cognition. We recently developed a method for estimating the dynamics of correlated ensemble activity by combining a model of simultaneous neuronal interactions (e.g., a spin-glass model) with a state-space method (Shimazaki et al. 2012 PLoS Comput Biol 8 e1002385). This method allows us to estimate stimulus-evoked dynamics of neuronal interactions which is reproducible in repeated trials under identical experimental conditions. However, the method may not be suitable for detecting stimulus responses if the neuronal dynamics exhibits significant variability across trials. In addition, the previous model does not include effects of past spiking activity of the neurons on the current state of ensemble activity. In this study, we develop a parametric method for simultaneously estimating the stimulus and spike-history effects on the ensemble activity from single-trial data even if the neurons exhibit dynamics that is largely unrelated to these effects. For this goal, we model ensemble neuronal activity as a latent process and include the stimulus and spike-history effects as exogenous inputs to the latent process. We develop an expectation-maximization algorithm that simultaneously achieves estimation of the latent process, stimulus responses, and spike-history effects. The proposed method is useful to analyze an interaction of internal cortical states and sensory evoked activity.

* 12 pages, 3 figures
