Hengtao Zhang

Balance-Subsampled Stable Prediction

Jun 08, 2020

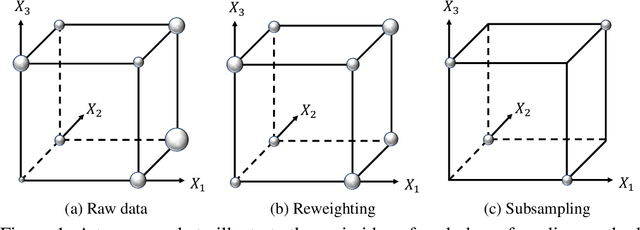

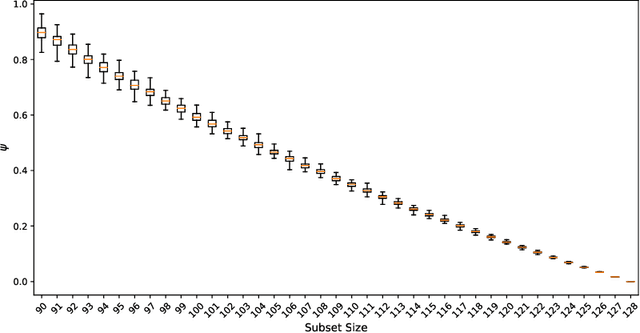

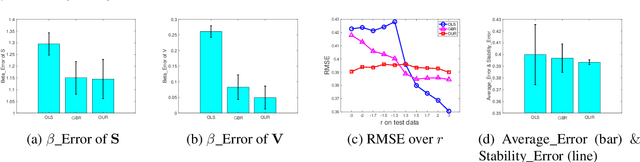

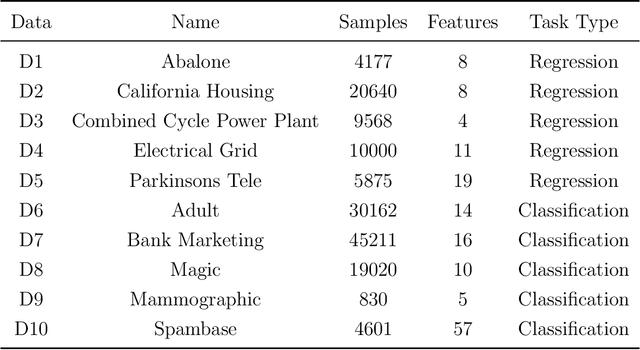

Abstract:In machine learning, it is commonly assumed that training and test data share the same population distribution. However, this assumption is often violated in practice because the sample selection bias may induce the distribution shift from training data to test data. Such a model-agnostic distribution shift usually leads to prediction instability across unknown test data. In this paper, we propose a novel balance-subsampled stable prediction (BSSP) algorithm based on the theory of fractional factorial design. It isolates the clear effect of each predictor from the confounding variables. A design-theoretic analysis shows that the proposed method can reduce the confounding effects among predictors induced by the distribution shift, hence improve both the accuracy of parameter estimation and prediction stability. Numerical experiments on both synthetic and real-world data sets demonstrate that our BSSP algorithm significantly outperforms the baseline methods for stable prediction across unknown test data.

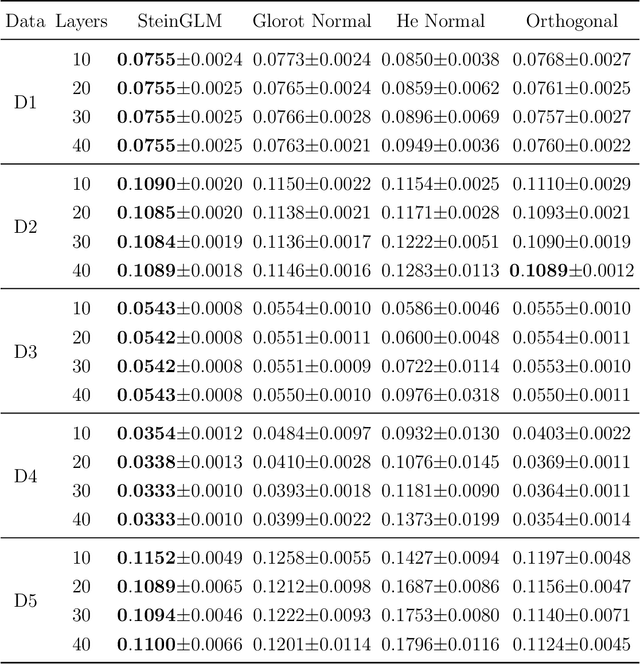

An Effective and Efficient Initialization Scheme for Multi-layer Feedforward Neural Networks

May 19, 2020

Abstract:Network initialization is the first and critical step for training neural networks. In this paper, we propose a novel network initialization scheme based on the celebrated Stein's identity. By viewing multi-layer feedforward neural networks as cascades of multi-index models, the projection weights to the first hidden layer are initialized using eigenvectors of the cross-moment matrix between the input's second-order score function and the response. The input data is then forward propagated to the next layer and such a procedure can be repeated until all the hidden layers are initialized. Finally, the weights for the output layer are initialized by generalized linear modeling. Such a proposed SteinGLM method is shown through extensive numerical results to be much faster and more accurate than other popular methods commonly used for training neural networks.

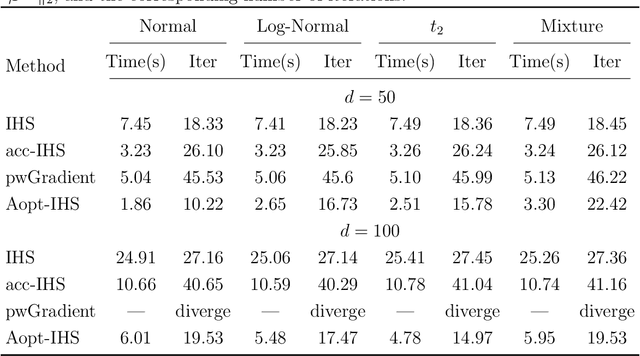

Adaptive Iterative Hessian Sketch via A-Optimal Subsampling

Feb 20, 2019

Abstract:Iterative Hessian sketch (IHS) is an effective sketching method for modeling large-scale data. It was originally proposed by Pilanci and Wainwright (2016; JMLR) based on randomized sketching matrices. However, it is computationally intensive due to the iterative sketch process. In this paper, we analyze the IHS algorithm under the unconstrained least squares problem setting, then propose a deterministic approach for improving IHS via A-optimal subsampling. Our contributions are three-fold: (1) a good initial estimator based on the $A$-optimal design is suggested; (2) a novel ridged preconditioner is developed for repeated sketching; and (3) an exact line search method is proposed for determining the optimal step length adaptively. Extensive experimental results demonstrate that our proposed A-optimal IHS algorithm outperforms the existing accelerated IHS methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge