Hengchao Li

Flying Bird Object Detection Algorithm in Surveillance Video

Jan 08, 2024

Abstract:Aiming at the characteristics of the flying bird object in surveillance video, such as the single frame image feature is not obvious, the size is small in most cases, and asymmetric, this paper proposes a Flying Bird Object Detection method for Surveillance Video (FBOD-SV). Firstly, a new feature aggregation module, the Correlation Attention Feature Aggregation (Co-Attention-FA) module, is designed to aggregate the features of the flying bird object according to the bird object's correlation on multiple consecutive frames of images. Secondly, a Flying Bird Object Detection Network (FBOD-Net) with down-sampling and then up-sampling is designed, which uses a large feature layer that fuses fine spatial information and large receptive field information to detect special multi-scale (mostly small-scale) bird objects. Finally, the SimOTA dynamic label allocation method is applied to One-Category object detection, and the SimOTA-OC dynamic label strategy is proposed to solve the difficult problem of label allocation caused by irregular flying bird objects. In this paper, the algorithm's performance is verified by the experimental data set of the surveillance video of the flying bird object of the traction substation. The experimental results show that the surveillance video flying bird object detection method proposed in this paper effectively improves the detection performance of flying bird objects.

SAR Target Recognition Using the Multi-aspect-aware Bidirectional LSTM Recurrent Neural Networks

Jul 25, 2017

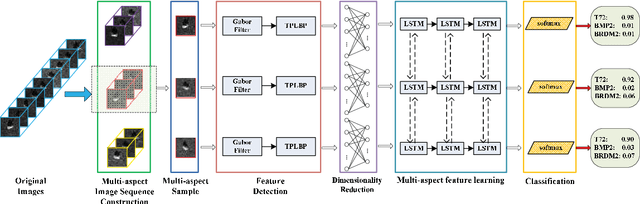

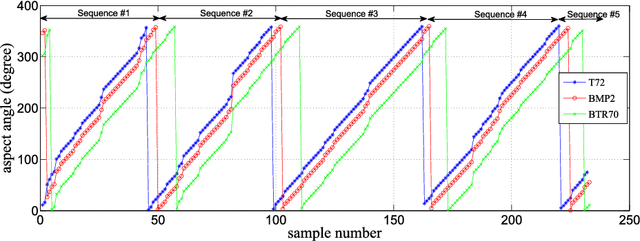

Abstract:The outstanding pattern recognition performance of deep learning brings new vitality to the synthetic aperture radar (SAR) automatic target recognition (ATR). However, there is a limitation in current deep learning based ATR solution that each learning process only handle one SAR image, namely learning the static scattering information, while missing the space-varying information. It is obvious that multi-aspect joint recognition introduced space-varying scattering information should improve the classification accuracy and robustness. In this paper, a novel multi-aspect-aware method is proposed to achieve this idea through the bidirectional Long Short-Term Memory (LSTM) recurrent neural networks based space-varying scattering information learning. Specifically, we first select different aspect images to generate the multi-aspect space-varying image sequences. Then, the Gabor filter and three-patch local binary pattern (TPLBP) are progressively implemented to extract a comprehensive spatial features, followed by dimensionality reduction with the Multi-layer Perceptron (MLP) network. Finally, we design a bidirectional LSTM recurrent neural network to learn the multi-aspect features with further integrating the softmax classifier to achieve target recognition. Experimental results demonstrate that the proposed method can achieve 99.9% accuracy for 10-class recognition. Besides, its anti-noise and anti-confusion performance are also better than the conventional deep learning based methods.

* 11 pages, 10 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge