Harrish Thasarathan

Universal Sparse Autoencoders: Interpretable Cross-Model Concept Alignment

Feb 06, 2025

Abstract:We present Universal Sparse Autoencoders (USAEs), a framework for uncovering and aligning interpretable concepts that span multiple pretrained deep neural networks. Unlike existing concept-based interpretability methods, which focus on a single model, USAEs jointly learn a universal concept space that can reconstruct and interpret the internal activations of multiple models at once. Our core insight is to train a single, overcomplete sparse autoencoder (SAE) that ingests activations from any model and decodes them to approximate the activations of any other model under consideration. By optimizing a shared objective, the learned dictionary captures common factors of variation (concepts) across different tasks, architectures, and datasets. We show that USAEs discover semantically coherent and important universal concepts across vision models, ranging from low-level features (e.g., colors and textures) to higher-level structures (e.g., parts and objects). Overall, USAEs provide a powerful new method for interpretable cross-model analysis and offer novel applications, such as coordinated activation maximization, that open avenues for deeper insights into multi-model AI systems.
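A minimal NumPy sketch of the shared objective described above, under several assumptions: the same batch of inputs is passed through every model (so activations are aligned), each model gets its own linear encoder and decoder around one shared code, and sparsity is enforced with a TopK rule. The model names, widths, dictionary size `K`, and `TOP_K` are hypothetical placeholders, not values from the paper. The sketch only computes the loss for one source model; training would repeat this with randomly chosen source models and update the weights by gradient descent, which is omitted here.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical models with different activation widths (placeholders, not from the paper).
DIMS = {"model_a": 64, "model_b": 96, "model_c": 128}
K = 512      # size of the shared, overcomplete concept dictionary (assumed)
TOP_K = 16   # number of concepts allowed to fire per input (assumed TopK sparsity)

# One encoder and one decoder per model, all sharing the same K-dimensional code.
enc = {m: rng.normal(0.0, d ** -0.5, size=(d, K)) for m, d in DIMS.items()}
dec = {m: rng.normal(0.0, K ** -0.5, size=(K, d)) for m, d in DIMS.items()}


def topk_relu(x, k):
    """Apply ReLU, then keep only the k largest entries in each row."""
    x = np.maximum(x, 0.0)  # new array, so the caller's input is untouched
    drop = np.argpartition(x, -k, axis=1)[:, :-k]  # indices outside the top k
    np.put_along_axis(x, drop, 0.0, axis=1)
    return x


def universal_loss(acts, source):
    """Encode the source model's activations once, then decode into every model.

    Returns the summed reconstruction MSE over all models and the shared code.
    """
    z = topk_relu(acts[source] @ enc[source], TOP_K)
    loss = sum(np.mean((z @ dec[m] - acts[m]) ** 2) for m in DIMS)
    return loss, z


# Random stand-ins for aligned activations of 8 inputs in each model.
acts = {m: rng.normal(size=(8, d)) for m, d in DIMS.items()}
loss, z = universal_loss(acts, source="model_b")
print(z.shape, int((z > 0).sum(axis=1).max()))
```

Encoding from one model and decoding into all of them is what pushes the dictionary to be shared: a code unit only lowers the loss if it helps reconstruct every model's activations, not just the source model's.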

Artist-Guided Semiautomatic Animation Colorization

Jun 25, 2020

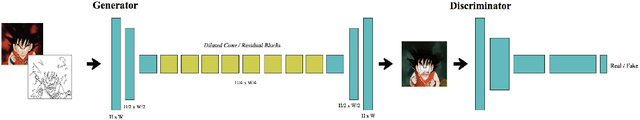

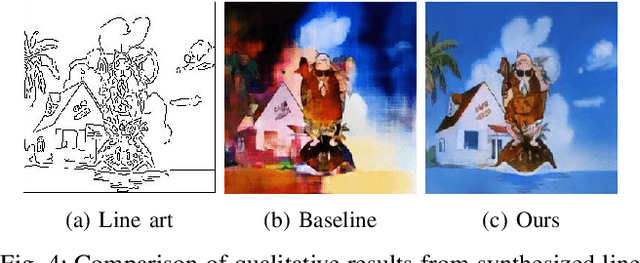

Abstract:There is a delicate balance between automating repetitive work in creative domains and staying true to an artist's vision. The animation industry regularly outsources large animation workloads to foreign countries where labor is inexpensive and long hours are common. Automating part of this process can be incredibly useful for reducing costs and creating manageable workloads for major animation studios and outsourced artists. We present a method for automating line art colorization that keeps artists in the loop, reducing this workload while staying true to the artist's vision. By incorporating color hints and temporal information into an adversarial image-to-image framework, we show that it is possible to strike a balance between automation and authenticity, using artist input to generate colored frames with temporal consistency.
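The abstract does not say how color hints are represented. A common convention in hint-based colorization, assumed in this NumPy sketch, is a sparse RGB hint image plus a binary mask channel, with artist hints simulated during training by revealing ground-truth colors at a few random pixels. The sketch also assumes the temporal information is the previously colored frame. The names `sample_color_hints` and `build_input`, the channel order, and the hint count are hypothetical.

```python
import numpy as np


def sample_color_hints(color, num_hints, rng):
    """Simulate artist hints: reveal the true color at a few random pixels.

    Returns an (H, W, 4) array: RGB hint values plus a 0/1 mask channel.
    """
    h, w, _ = color.shape
    flat = rng.choice(h * w, size=num_hints, replace=False)  # distinct pixels
    rows, cols = np.unravel_index(flat, (h, w))
    hints = np.zeros((h, w, 4), dtype=color.dtype)
    hints[rows, cols, :3] = color[rows, cols]
    hints[rows, cols, 3] = 1.0
    return hints


def build_input(line_art, prev_color, hints):
    """Line art (1 ch) + previous color frame (3 ch) + hints (4 ch) = 8 channels."""
    return np.concatenate([line_art, prev_color, hints], axis=-1)


rng = np.random.default_rng(0)
target = rng.random((64, 64, 3))
hints = sample_color_hints(target, num_hints=20, rng=rng)
x = build_input(rng.random((64, 64, 1)), rng.random((64, 64, 3)), hints)
print(x.shape, int(hints[..., 3].sum()))
```

Keeping the mask as its own channel lets the network tell "no hint here" apart from a hint that happens to be black.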

* This article supersedes our previous work arXiv:1904.09527

Edge-Informed Single Image Super-Resolution

Sep 11, 2019

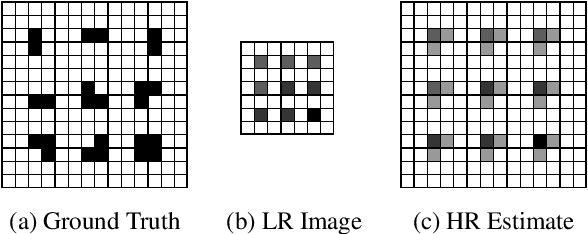

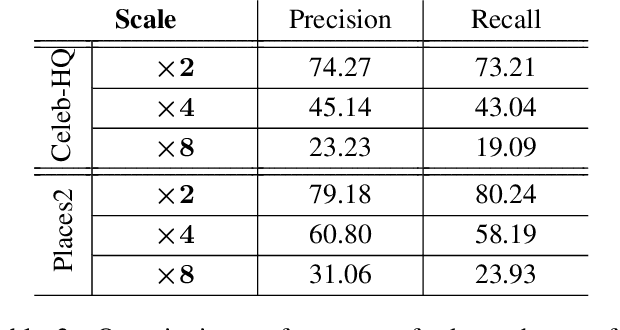

Abstract:The increasingly widespread use of digital imaging technologies has brought with it a demand for higher-resolution images. We develop a novel edge-informed approach to single image super-resolution (SISR) that reformulates the SISR problem as an image inpainting task. We use a two-stage inpainting model as a baseline for super-resolution and show its effectiveness for different scale factors (x2, x4, x8) compared to basic interpolation schemes. This model is trained using a joint optimization of image contents (texture and color) and structures (edges). We include quantitative and qualitative comparisons of the proposed model with current state-of-the-art techniques. We show that our method of decoupling structure and texture reconstruction improves the quality of the final reconstructed high-resolution image. Code and models available at: https://github.com/knazeri/edge-informed-sisr
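One way to picture the inpainting reformulation is to scatter the low-resolution pixels onto a high-resolution canvas and mark every other position as missing, leaving the inpainting model to fill the gaps. The NumPy sketch below assumes each LR pixel lands at the top-left corner of its scale-by-scale block; the function name `lr_to_sparse_grid` and that placement are illustrative assumptions, not necessarily the layout used in the released code.

```python
import numpy as np


def lr_to_sparse_grid(lr, scale):
    """Place LR pixels on an HR-sized canvas; return the canvas and a known-pixel mask.

    lr: array of shape (H, W) or (H, W, C). Pixel (i, j) is copied to
    (scale * i, scale * j); all other HR positions are zero and masked out.
    """
    h, w = lr.shape[:2]
    hr_shape = (h * scale, w * scale)
    canvas = np.zeros(hr_shape + lr.shape[2:], dtype=lr.dtype)
    mask = np.zeros(hr_shape, dtype=bool)
    canvas[::scale, ::scale] = lr
    mask[::scale, ::scale] = True
    return canvas, mask


lr = np.arange(12, dtype=float).reshape(3, 4)
for s in (2, 4, 8):
    canvas, mask = lr_to_sparse_grid(lr, s)
    # The fraction of HR pixels that are known shrinks as 1 / s**2.
    print(s, canvas.shape, mask.mean())
```

The mask makes the difficulty of each scale factor explicit: at x8 only 1 in 64 high-resolution pixels is observed, so most of the image's structure has to be inferred rather than copied.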

Automatic Temporally Coherent Video Colorization

Apr 21, 2019

Abstract:Greyscale image colorization for applications in image restoration has seen significant improvements in recent years. However, many of these learning-based techniques struggle to effectively colorize sparse inputs. With the consistent growth of the anime industry, the ability to colorize sparse input such as line art can significantly reduce cost and redundant work for production studios by eliminating the in-between frame colorization process. Simply applying existing methods yields inconsistent colors between related frames, resulting in a flicker effect in the final video. To successfully automate key areas of large-scale anime production, the colorization of line art must be temporally consistent between frames. This paper proposes a method that colorizes line art frames in an adversarial setting, improving existing image-to-image translation methods to create temporally coherent video for large-scale anime production. We show that by adding an extra condition to the generator and discriminator, we can effectively create temporally consistent video sequences from anime line art. Code and models available at: https://github.com/Harry-Thasarathan/TCVC
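The extra condition can be pictured as additional input channels. This NumPy sketch assumes the condition is the previously colorized frame: it is stacked with the current line art as the generator input and is also shown to the discriminator, and at inference each output is fed back as the condition for the next frame. The function names, the channel layout, and the toy stand-in generator are hypothetical; the actual generator and discriminator are trained networks.

```python
import numpy as np


def generator_input(line_art, prev_color):
    """Stack current line art (H, W, 1) with the previous color frame (H, W, 3)."""
    return np.concatenate([line_art, prev_color], axis=-1)


def discriminator_input(line_art, prev_color, candidate):
    """The discriminator judges a candidate color frame under both conditions."""
    return np.concatenate([line_art, prev_color, candidate], axis=-1)


def colorize_sequence(line_arts, first_color, generator):
    """Colorize frames in order, feeding each output back as the next condition.

    first_color is assumed to be supplied, e.g. an artist-colored key frame.
    """
    prev = first_color
    outputs = []
    for line_art in line_arts:
        prev = generator(generator_input(line_art, prev))
        outputs.append(prev)
    return outputs


def toy_generator(x):
    """Hypothetical stand-in: mostly copies the previous colors, tinted by the lines."""
    return 0.9 * x[..., 1:4] + 0.1 * x[..., :1]


frames = [np.random.rand(32, 32, 1) for _ in range(5)]
key_frame = np.random.rand(32, 32, 3)
colored = colorize_sequence(frames, key_frame, toy_generator)
d_in = discriminator_input(frames[1], colored[0], colored[1])
print(len(colored), colored[0].shape, d_in.shape)
```

One motivation for conditioning the discriminator on the previous frame as well is that it can then penalize outputs that look plausible in isolation but clash with the preceding frame, which is the flicker described above.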
