Graham Roberts

Measures of classification bias derived from sample size analysis

Jan 06, 2026

Abstract: We propose the use of a simple intuitive principle for measuring algorithmic classification bias: the significance of the differences in a classifier's error rates across the various demographics is inversely commensurate with the sample size required to statistically detect them. That is, if large sample sizes are required to statistically establish biased behavior, the algorithm is less biased, and vice versa. In a simple setting, we assume two distinct demographics, and non-parametric estimates of the error rates on them, e1 and e2, respectively. We use a well-known approximate formula for the sample size of the chi-squared test, and verify some basic desirable properties of the proposed measure. Next, we compare the proposed measure with two other commonly used statistics, the difference e2-e1 and the ratio e2/e1 of the error rates. We establish that the proposed measure is essentially different in that it can rank algorithms for bias differently, and we discuss some of its advantages over the other two measures. Finally, we briefly discuss how some of the desirable properties of the proposed measure emanate from fundamental characteristics of the method, rather than the approximate sample size formula we used, and thus, are expected to hold in more complex settings with more than two demographics.
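The principle can be illustrated with a minimal sketch. The abstract does not state which approximate sample size formula the paper uses, so the code below assumes the standard normal-approximation formula for comparing two proportions with equal group sizes. The function name `samples_to_detect` and the defaults `alpha=0.05` and `power=0.8` are illustrative choices, not taken from the paper.

```python
import math
from statistics import NormalDist


def samples_to_detect(e1, e2, alpha=0.05, power=0.8):
    """Approximate per-group sample size needed to detect e1 != e2.

    Assumption: the textbook two-proportion formula with equal group
    sizes, which may differ from the formula used in the paper.
    A smaller return value means the difference is easier to detect,
    i.e. the classifier is "more biased" under the proposed principle.
    """
    if not (0.0 <= e1 <= 1.0 and 0.0 <= e2 <= 1.0):
        raise ValueError("error rates must lie in [0, 1]")
    if e1 == e2:
        return math.inf  # no difference to detect at any sample size

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)

    pooled = (e1 + e2) / 2
    null_sd = math.sqrt(2 * pooled * (1 - pooled))       # spread under H0
    alt_sd = math.sqrt(e1 * (1 - e1) + e2 * (1 - e2))    # spread under H1
    return (z_alpha * null_sd + z_beta * alt_sd) ** 2 / (e1 - e2) ** 2


# Two hypothetical classifiers with the same difference e2 - e1 = 0.01.
n_a = samples_to_detect(0.01, 0.02)
n_b = samples_to_detect(0.40, 0.41)
print(f"classifier A: {n_a:.0f} samples per group")
print(f"classifier B: {n_b:.0f} samples per group")
```

Under this assumed formula, classifier A needs considerably fewer samples than classifier B although both have the same difference e2-e1, so a ranking based on sample size can disagree with a ranking based on the difference alone.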

Zero-failure testing of binary classifiers

Jul 04, 2024

Abstract: We propose using performance metrics derived from zero-failure testing to assess binary classifiers. The principal characteristic of the proposed approach is the asymmetric treatment of the two types of error. In particular, we construct a test set consisting of positive and negative samples, set the operating point of the binary classifier at the lowest value that will result in correct classification of all positive samples, and use the algorithm's success rate on the negative samples as a performance measure. A property of the proposed approach, setting it apart from other commonly used testing methods, is that it allows the construction of a series of tests of increasing difficulty, corresponding to a nested sequence of positive sample test sets. We illustrate the proposed method on the problem of age estimation for determining whether a subject is above a legal age threshold, a problem that exemplifies the asymmetry of the two types of error. Indeed, misclassifying an under-aged subject is a legal and regulatory issue, while misclassifying people above the legal age is an efficiency issue primarily concerning the commercial user of the age estimation system.
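The procedure described in the abstract can be sketched as follows. The abstract does not fix a score convention, so this sketch assumes that a sample is classified as positive when its score is at or below the operating point (for example, an estimated age, with under-aged subjects as the positive class). The function name `zero_failure_rate` and the sample values are hypothetical.

```python
def zero_failure_rate(positive_scores, negative_scores):
    """Return (operating_point, success rate on the negative samples).

    Assumption: a sample is classified positive when score <= operating
    point, so the lowest operating point that classifies every positive
    sample correctly is the largest positive score.
    """
    if not positive_scores or not negative_scores:
        raise ValueError("both sample sets must be non-empty")

    operating_point = max(positive_scores)
    # A negative sample is handled correctly only if it falls above the point.
    correct = sum(1 for s in negative_scores if s > operating_point)
    return operating_point, correct / len(negative_scores)


# Hypothetical estimated ages: under-aged subjects are the positive class.
under_age = [15.2, 16.8, 17.5]
adults = [17.0, 19.0, 22.0, 30.0]

point, rate = zero_failure_rate(under_age, adults)
print(point, rate)

# A nested, larger positive set can only raise the operating point,
# so the resulting test is at least as hard as the previous one.
harder_point, harder_rate = zero_failure_rate(under_age + [19.5], adults)
print(harder_point, harder_rate)
```

Because the operating point is the maximum over the positive set, enlarging that set never lowers it, which is why a nested sequence of positive sets yields tests of non-decreasing difficulty in this sketch.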

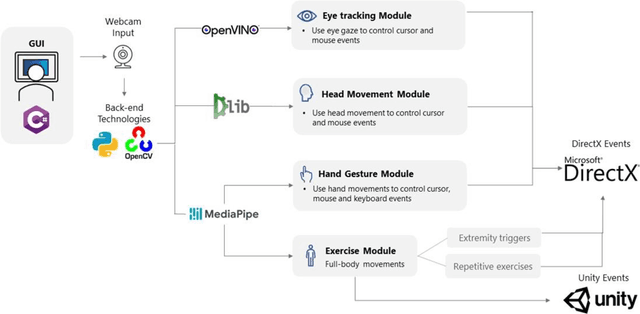

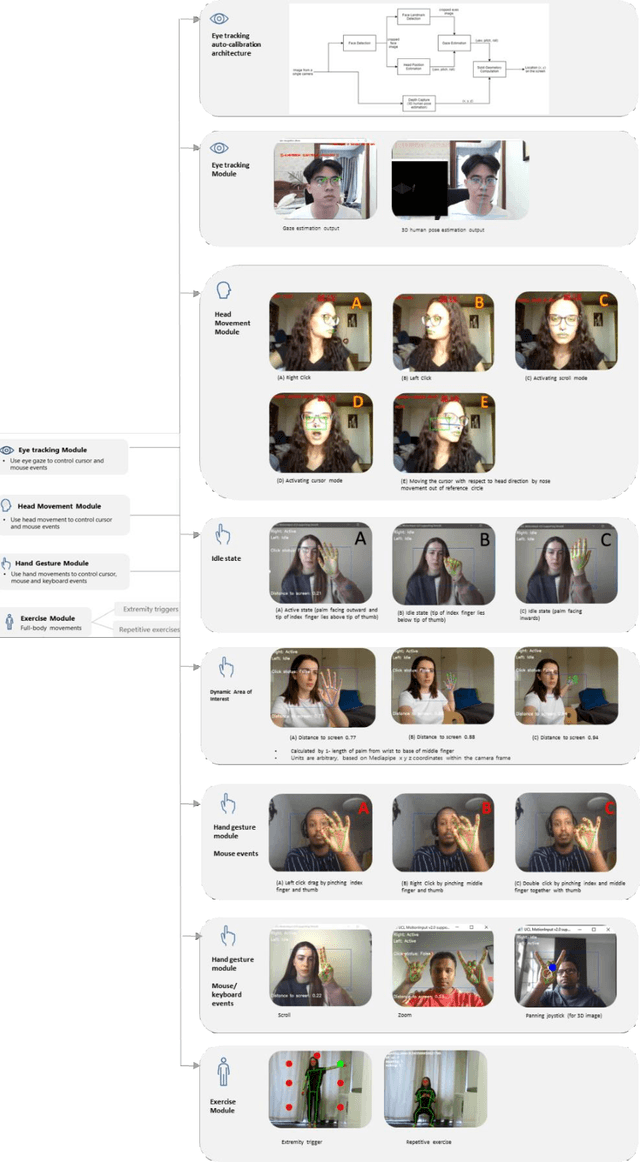

MotionInput v2.0 supporting DirectX: A modular library of open-source gesture-based machine learning and computer vision methods for interacting and controlling existing software with a webcam

Aug 10, 2021

Abstract: Touchless computer interaction has become an important consideration during the COVID-19 pandemic period. Despite progress in machine learning and computer vision that allows for advanced gesture recognition, an integrated collection of such open-source methods and a user-customisable approach to utilising them in a low-cost solution for touchless interaction in existing software is still missing. In this paper, we introduce the MotionInput v2.0 application. This application utilises published open-source libraries and additional gesture definitions developed to take the video stream from a standard RGB webcam as input. It then maps human motion gestures to input operations for existing applications and games. The user can choose their own preferred way of interacting from a series of motion types, including single and bi-modal hand gesturing, full-body repetitive or extremities-based exercises, head and facial movements, eye tracking, and combinations of the above. We also introduce a series of bespoke gesture recognition classifications as DirectInput triggers, including gestures for idle states, auto calibration, depth capture from a 2D RGB webcam stream and tracking of facial motions such as mouth motions, winking, and head direction with rotation. Three use case areas assisted the development of the modules: creativity software, office and clinical software, and gaming software. A collection of open-source libraries has been integrated and provides a layer of modular gesture mapping on top of existing mouse and keyboard controls in Windows via DirectX. With ease of access to webcams integrated into most laptops and desktop computers, touchless computing becomes more available with MotionInput v2.0, in a federated and locally processed method.
