Gillat Kol

Statistically Near-Optimal Hypothesis Selection

Aug 17, 2021

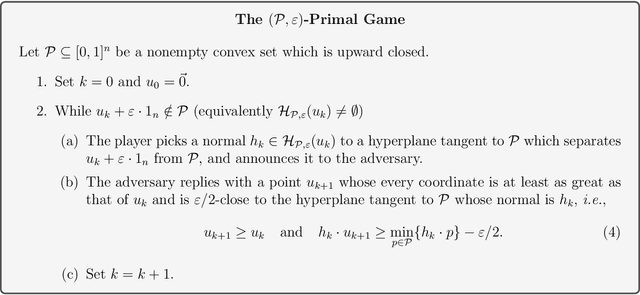

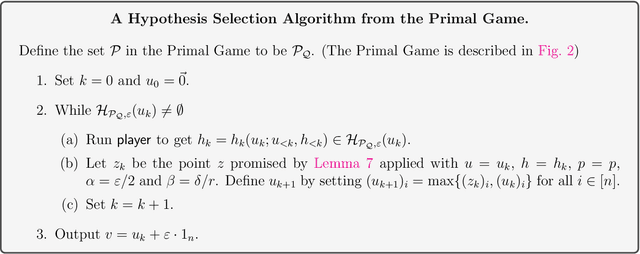

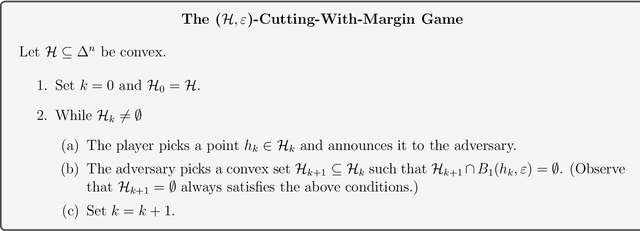

Abstract: Hypothesis Selection is a fundamental distribution learning problem: given a comparator class $Q=\{q_1,\ldots, q_n\}$ of distributions and sampling access to an unknown target distribution $p$, the goal is to output a distribution $q$ such that $\mathsf{TV}(p,q)$ is close to $opt$, where $opt = \min_i\{\mathsf{TV}(p,q_i)\}$ and $\mathsf{TV}(\cdot, \cdot)$ denotes the total-variation distance. Although this problem has been studied since the 19th century, its complexity in terms of basic resources, such as the number of samples and the approximation guarantee, remains unsettled (this is discussed, e.g., in the charming book by Devroye and Lugosi '00). This is in stark contrast with other (younger) learning settings, such as PAC learning, for which these complexities are well understood. We derive an optimal $2$-approximation learning strategy for the Hypothesis Selection problem, outputting $q$ such that $\mathsf{TV}(p,q) \leq 2 \cdot opt + \epsilon$, with a (nearly) optimal sample complexity of $\tilde O(\log n/\epsilon^2)$. This is the first algorithm that simultaneously achieves the best approximation factor and sample complexity: previously, Bousquet, Kane, and Moran (COLT '19) gave a learner achieving the optimal $2$-approximation, but with an exponentially worse sample complexity of $\tilde O(\sqrt{n}/\epsilon^{2.5})$, and Yatracos (Annals of Statistics '85) gave a learner with optimal sample complexity of $O(\log n /\epsilon^2)$ but with a sub-optimal approximation factor of $3$.
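
To make the problem statement concrete, here is a minimal sketch of a Yatracos-style minimum-distance rule, i.e. the factor-$3$ baseline mentioned above, not the authors' $2$-approximation learner. It assumes a finite domain $\{0,\ldots,k-1\}$ and candidates given as explicit probability vectors; the helper names `empirical`, `tv`, and `yatracos_select` are hypothetical. Each candidate $q_i$ is scored by its worst discrepancy with the empirical distribution over the sets $\{x : q_j(x) > q_l(x)\}$, and the lowest score wins.

```python
import random
from collections import Counter
from itertools import permutations


def empirical(samples, k):
    """Empirical distribution of the samples over the finite domain {0, ..., k-1}."""
    counts = Counter(samples)
    m = len(samples)
    return [counts[x] / m for x in range(k)]


def tv(p, q):
    """Total-variation distance between two probability vectors on the same finite domain."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def yatracos_select(Q, samples):
    """Index chosen by a Yatracos-style minimum-distance rule (hypothetical helper)."""
    k = len(Q[0])
    p_hat = empirical(samples, k)
    # Yatracos sets A_{jl} = {x : q_j(x) > q_l(x)} for every ordered pair j != l.
    sets = [[x for x in range(k) if Q[j][x] > Q[l][x]]
            for j, l in permutations(range(len(Q)), 2)]

    def mass(dist, A):
        return sum(dist[x] for x in A)

    def score(i):
        # Worst discrepancy between q_i and the empirical measure over the Yatracos sets.
        return max((abs(mass(Q[i], A) - mass(p_hat, A)) for A in sets), default=0.0)

    return min(range(len(Q)), key=score)


# Usage: the target p equals the first candidate, so opt = 0.
rng = random.Random(0)
Q = [[0.5, 0.3, 0.2], [0.2, 0.3, 0.5], [1 / 3, 1 / 3, 1 / 3]]
samples = rng.choices(range(3), weights=Q[0], k=2000)
best = yatracos_select(Q, samples)
print(best, tv(Q[0], Q[best]))
```

With $opt = 0$ and a couple of thousand samples the rule should select index 0 here. Note that the sketch builds $n(n-1)$ sets, so it favors clarity over running time.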

Convex Set Disjointness, Distributed Learning of Halfspaces, and LP Feasibility

Sep 08, 2019

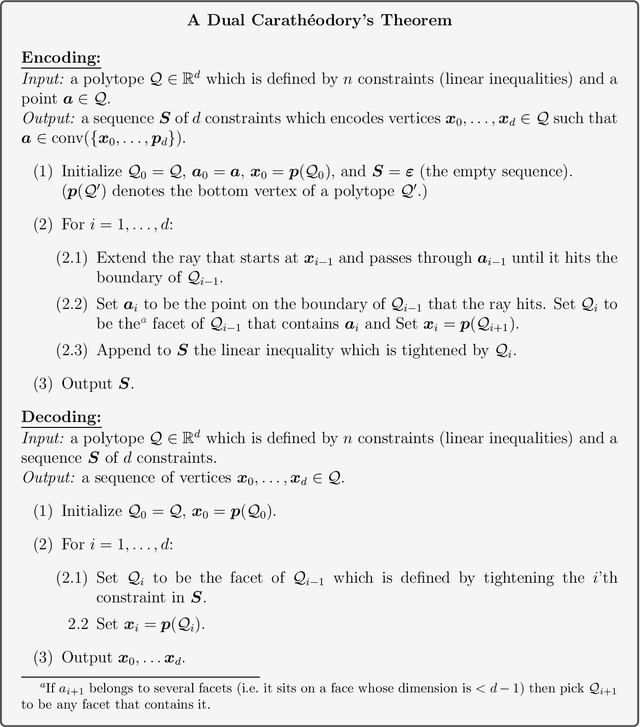

Abstract: We study the Convex Set Disjointness (CSD) problem, where two players have input sets taken from an arbitrary fixed domain $U\subseteq \mathbb{R}^d$ of size $\lvert U\rvert = n$. Their mutual goal is to decide, using minimum communication, whether the convex hulls of their sets intersect (equivalently, whether their sets can be separated by a hyperplane). Different forms of this problem naturally arise in distributed learning and optimization: it is equivalent to Distributed Linear Program (LP) Feasibility, a basic task in distributed optimization, and it is tightly linked to Distributed Learning of Halfspaces in $\mathbb{R}^d$. In communication complexity theory, CSD can be viewed as a geometric interpolation between the classical problems of Set Disjointness (when $d\geq n-1$) and Greater-Than (when $d=1$). We establish a nearly tight bound of $\tilde \Theta(d\log n)$ on the communication complexity of learning halfspaces in $\mathbb{R}^d$. For Convex Set Disjointness (and the equivalent task of distributed LP feasibility) we derive upper and lower bounds of $\tilde O(d^2\log n)$ and $\Omega(d\log n)$, respectively. These results improve upon several previous works in distributed learning and optimization. Unlike typical works in communication complexity, the main technical contribution of this work lies in the upper bounds. In particular, our protocols are based on a Container Lemma for Halfspaces and on two variants of Carathéodory's Theorem, which may be of independent interest. Our protocols use these geometric statements to provide a compressed summary of the players' inputs.
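
For intuition about the $d=1$ end of this interpolation, a toy sketch may help. On the real line the convex hull of a finite set is the interval between its minimum and maximum, so the two hulls are disjoint exactly when one player's maximum lies below the other's minimum, a Greater-Than-style comparison. The functions `alice_message` and `bob_decides` are hypothetical names, and this naive protocol (Alice sends her two extreme points, which costs $O(\log n)$ bits when they are named by their index in $U$) is only an illustration, not one of the paper's protocols.

```python
def alice_message(alice):
    """Alice's entire message in d = 1: the endpoints of her convex hull (an interval)."""
    return (min(alice), max(alice))


def bob_decides(message, bob):
    """Bob's decision from Alice's message alone.

    Returns a threshold t strictly separating the two sets (a "hyperplane" in R^1),
    or None when the intervals, and hence the convex hulls, intersect.
    """
    a_lo, a_hi = message
    b_lo, b_hi = min(bob), max(bob)
    if a_hi < b_lo:  # every point of Alice lies left of every point of Bob
        return (a_hi + b_lo) / 2
    if b_hi < a_lo:  # every point of Bob lies left of every point of Alice
        return (b_hi + a_lo) / 2
    return None  # overlapping intervals: the convex hulls intersect


print(bob_decides(alice_message([1, 2]), [3, 4]))  # 2.5
print(bob_decides(alice_message([1, 5]), [3, 4]))  # None
```

For general $d$, the abstract's protocols rely instead on the Container Lemma for Halfspaces and the Carathéodory-type statements to compress the players' inputs.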