Gianmarco Pinton

mach: ultrafast ultrasound beamforming

Apr 06, 2026

Abstract: Purpose: Volumetric ultrafast ultrasound produces massive datasets with high frame rates, dense reconstruction grids, and large channel counts. Beamforming computational demands limit research throughput and prevent real-time applications in emerging modalities such as elastography, functional neuroimaging, and microscopy. Approach: We developed mach, an open-source, GPU-accelerated beamformer with a highly optimized delay-and-sum CUDA kernel and an accessible Python interface. mach uses a hybrid delay computation strategy that substantially reduces memory overhead compared to fully precomputed approaches. The CUDA implementation optimizes memory layout for coalesced access and reuses delay computations across frames via shared memory. We benchmarked mach on the PyMUST rotating disk dataset and validated numerical accuracy against existing open-source beamformers. Results: mach processes 1.1 trillion points per second on a consumer-grade GPU, achieving >10× faster performance than existing open-source GPU beamformers. On the PyMUST rotating disk benchmark, mach completes reconstruction in 0.23 ms, 6× faster than the acoustic round-trip time to the imaging depth. Validation against other beamformers confirms numerical accuracy with errors below −60 dB for Power Doppler and −120 dB for B-mode. Conclusions: mach achieves 1.1 trillion points per second throughput, enabling real-time 3D ultrafast ultrasound reconstruction for the first time on consumer-grade hardware. By eliminating the beamforming bottleneck, mach enables real-time applications such as 3D functional neuroimaging, intraoperative guidance, and ultrasound localization microscopy. mach is freely available at https://github.com/Forest-Neurotech/mach

* 17 pages, 8 figures, 5 tables. LaTeX. Published in SPIE Journal of Medical Imaging. Source code and package: https://github.com/Forest-Neurotech/mach
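For readers unfamiliar with the delay-and-sum operation that the mach abstract refers to, the following is a minimal NumPy sketch of the textbook technique for a single 0-degree plane-wave transmit. It is not mach's API or its CUDA kernel: the function name `delay_and_sum`, the array layout, the linear interpolation, and all numeric parameters (sound speed, sampling rate, array pitch, grid) are assumptions chosen for illustration only.

```python
import numpy as np

def delay_and_sum(rf, fs, c, element_x, grid_x, grid_z):
    """Delay-and-sum for one 0-degree plane-wave transmit (illustrative only).

    rf: (n_elements, n_samples) real RF data, sample 0 at transmit time.
    Returns an image of shape (len(grid_x), len(grid_z)).
    """
    n_elements, n_samples = rf.shape
    xx, zz = np.meshgrid(grid_x, grid_z, indexing="ij")
    image = np.zeros(xx.shape)
    for e in range(n_elements):
        # Two-way delay: plane wave down to depth z, then echo back to element e.
        tau = (zz + np.sqrt((xx - element_x[e]) ** 2 + zz ** 2)) / c
        idx = tau * fs
        i0 = np.floor(idx).astype(int)
        frac = idx - i0
        valid = (i0 >= 0) & (i0 < n_samples - 1)
        i0 = np.clip(i0, 0, n_samples - 2)
        # Linear interpolation between the two neighbouring samples.
        sample = (1.0 - frac) * rf[e, i0] + frac * rf[e, i0 + 1]
        image += np.where(valid, sample, 0.0)
    return image

# Synthetic check: one point scatterer, Gaussian echo on every channel.
# All values below are assumed, not taken from the paper.
c, fs = 1540.0, 20e6                           # sound speed (m/s), sampling rate (Hz)
element_x = (np.arange(64) - 31.5) * 0.3e-3    # 64 elements, 0.3 mm pitch
grid_x = np.linspace(-5e-3, 5e-3, 21)
grid_z = np.linspace(15e-3, 25e-3, 41)
x0, z0 = grid_x[10], grid_z[20]                # scatterer placed on a grid node
t = np.arange(1024) / fs
tau0 = (z0 + np.sqrt((x0 - element_x) ** 2 + z0 ** 2)) / c
rf = np.exp(-(((t[None, :] - tau0[:, None]) * fs) / 3.0) ** 2)

image = delay_and_sum(rf, fs, c, element_x, grid_x, grid_z)
peak = tuple(int(i) for i in np.unravel_index(np.argmax(image), image.shape))
print("brightest pixel index:", peak)
```

The per-pixel, per-element delay `tau` is recomputed on the fly here. The abstract's "hybrid delay computation strategy" and cross-frame reuse of delays concern how a GPU implementation trades such recomputation against memory traffic, which this sketch does not attempt to model.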

Ultrasound Lung Aeration Map via Physics-Aware Neural Operators

Jan 02, 2025

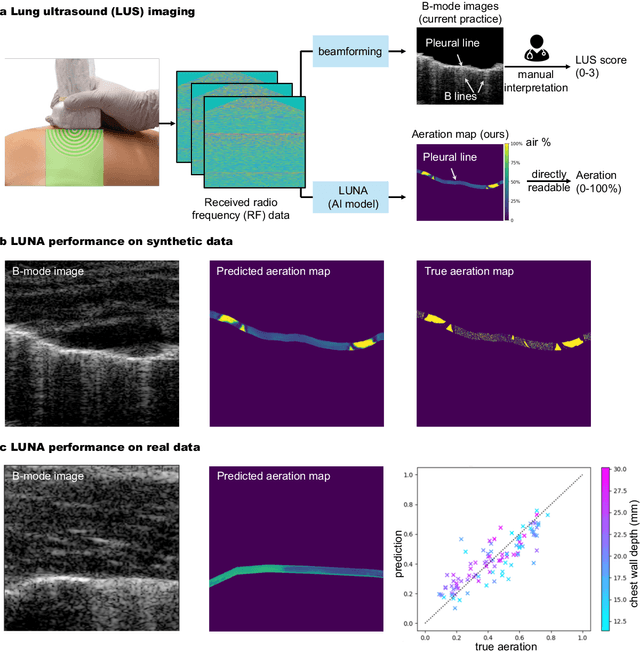

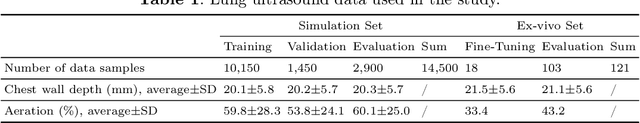

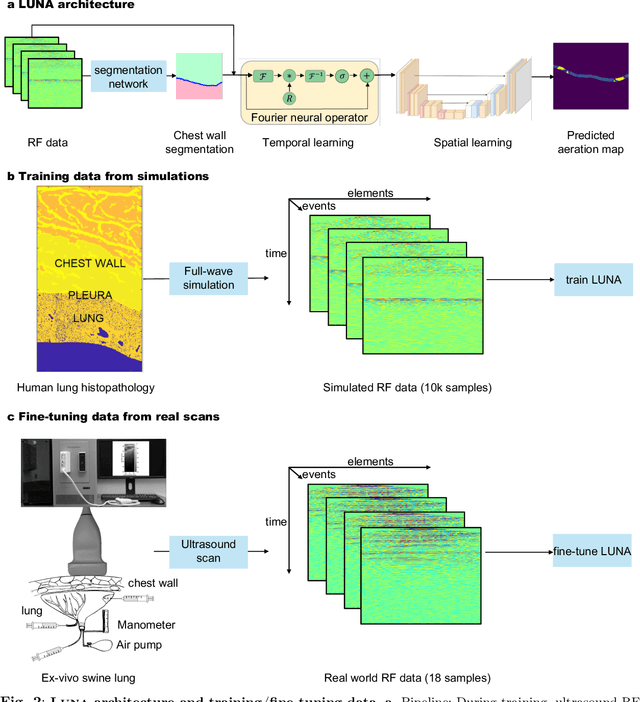

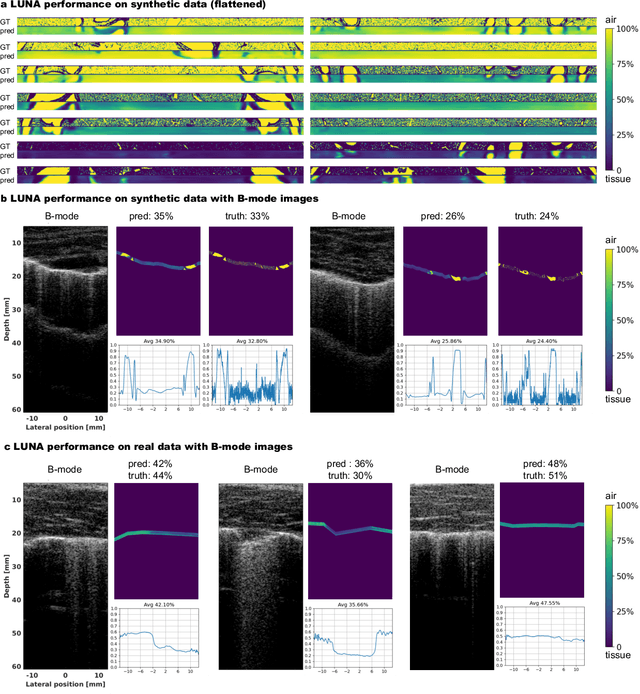

Abstract: Lung ultrasound is a growing modality in clinics for diagnosing and monitoring acute and chronic lung diseases due to its low cost and accessibility. Lung ultrasound works by emitting diagnostic pulses, receiving pressure waves, and converting them into radio frequency (RF) data, which are then processed into B-mode images with beamformers for radiologists to interpret. However, unlike conventional ultrasound for soft tissue anatomical imaging, lung ultrasound interpretation is complicated by complex reverberations from the pleural interface caused by the inability of ultrasound to penetrate air. The indirect B-mode images make interpretation highly dependent on reader expertise, requiring years of training, which limits its widespread use despite its potential for high accuracy in skilled hands. To address these challenges and democratize ultrasound lung imaging as a reliable diagnostic tool, we propose LUNA, an AI model that directly reconstructs lung aeration maps from RF data, bypassing the need for traditional beamformers and indirect interpretation of B-mode images. LUNA uses a Fourier neural operator, which processes RF data efficiently in Fourier space, enabling accurate reconstruction of lung aeration maps. LUNA offers a quantitative, reader-independent alternative to traditional semi-quantitative lung ultrasound scoring methods. The development of LUNA involves synthetic and real data: we simulate synthetic data with an experimentally validated approach and scan ex vivo swine lungs as real data. Trained on abundant simulated data and fine-tuned with a small amount of real-world data, LUNA achieves robust performance, demonstrated by an aeration estimation error of 9% in ex vivo lung scans. We demonstrate the potential of reconstructing lung aeration maps from RF data, providing a foundation for improving lung ultrasound reproducibility and diagnostic utility.
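As a rough illustration of what "processes RF data in Fourier space" means for a Fourier neural operator, the sketch below implements only the spectral convolution at the core of a generic FNO layer, in one dimension with NumPy. It is not LUNA's architecture, which the abstract does not specify: the function name `spectral_conv_1d`, the weight layout, and the test signal are assumptions, and a full FNO layer would also add a pointwise linear path and a nonlinearity.

```python
import numpy as np

def spectral_conv_1d(x, weights):
    """Spectral convolution of a generic FNO layer (illustrative only).

    x:       (in_channels, n) real signal.
    weights: (in_channels, out_channels, n_modes) complex; learned in practice.
    Only the lowest n_modes Fourier modes are kept and mixed across channels.
    """
    n = x.shape[-1]
    n_modes = weights.shape[-1]
    x_hat = np.fft.rfft(x, axis=-1)                       # (in, n // 2 + 1)
    out_hat = np.zeros((weights.shape[1], x_hat.shape[-1]), dtype=complex)
    # Per-mode channel mixing: out[o, m] = sum_i x_hat[i, m] * weights[i, o, m]
    out_hat[:, :n_modes] = np.einsum("im,iom->om", x_hat[:, :n_modes], weights)
    return np.fft.irfft(out_hat, n=n, axis=-1)            # back to signal space

# With all-ones weights the layer reduces to an ideal low-pass filter,
# which makes the mode truncation easy to see.
n = 128
t = np.arange(n) / n
low = np.sin(2 * np.pi * 2 * t)                  # mode 2, inside the kept band
x = (low + np.sin(2 * np.pi * 20 * t))[None, :]  # mode 20, outside the kept band
weights = np.ones((1, 1, 8), dtype=complex)      # keep modes 0..7
y = spectral_conv_1d(x, weights)
print("max deviation from low-frequency part:", np.abs(y[0] - low).max())
```

Because the learned weights act on a fixed number of Fourier modes rather than on grid points, the same layer can be applied to inputs sampled at different resolutions, which is one commonly cited reason for using neural operators on wave data.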

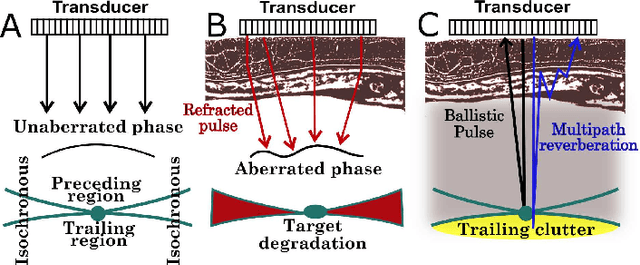

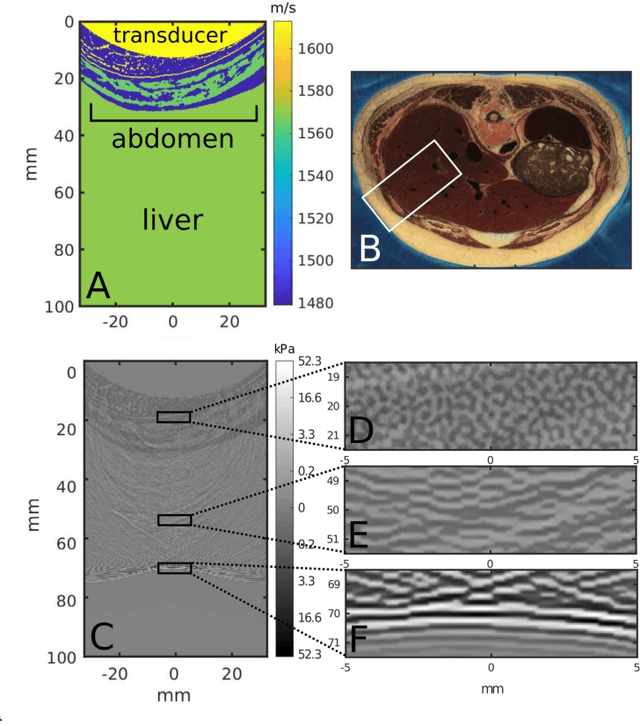

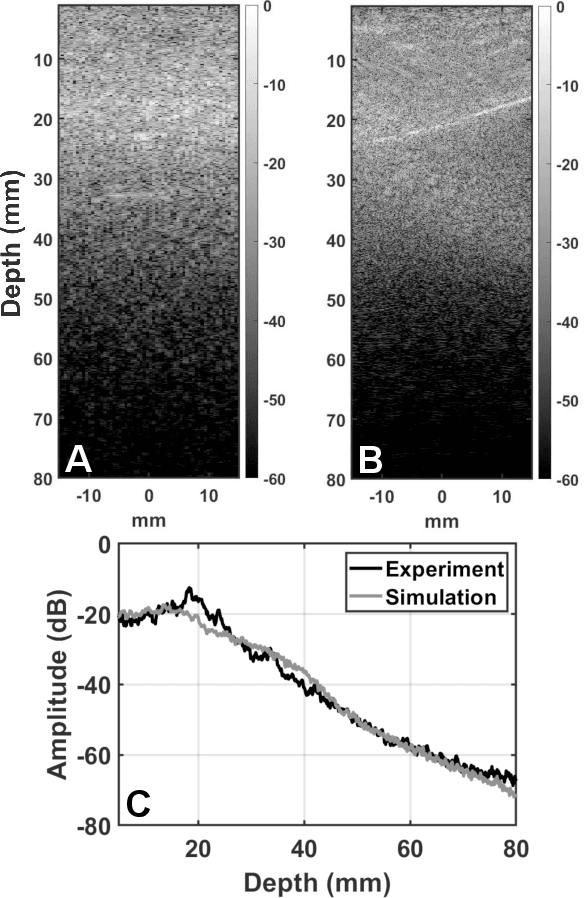

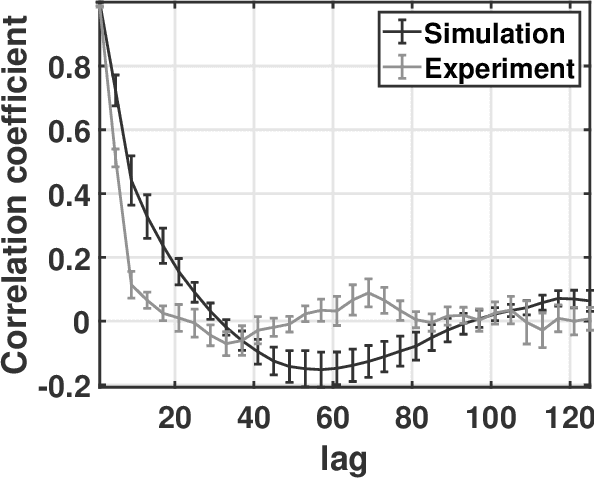

Deconstruction and reconstruction of image-degrading effects in the human abdomen using Fullwave: phase aberration, multiple reverberation, and trailing reverberation

Jun 25, 2021

Abstract: Ultrasound image degradation in the human body is complex and occurs due to the distortion of the wave as it propagates to and from the target. Here, we establish a simulation-based framework that deconstructs the sources of image degradation into a separable parameter space that includes phase aberration from speed variation, multiple reverberations, and trailing reverberation. These separable parameters are then used to reconstruct images with known and independently modulable amounts of degradation using methods that depend on the additive or multiplicative nature of the degradation. Experimental measurements and Fullwave simulations in the human abdomen demonstrate this calibrated process in abdominal imaging by matching relevant imaging metrics such as phase aberration, reverberation strength, speckle brightness, and coherence length. Applications of the reconstruction technique are illustrated for beamforming strategies (phase aberration correction, spatial coherence imaging), in a standard abdominal environment, as well as in impedance ranges much higher than those naturally occurring in the body.
