Giancarlo Pellegrino

Rag and Roll: An End-to-End Evaluation of Indirect Prompt Manipulations in LLM-based Application Frameworks

Aug 09, 2024

Abstract: Retrieval Augmented Generation (RAG) is a technique commonly used to equip models with out-of-distribution knowledge. This process involves collecting, indexing, retrieving, and providing information to an LLM for generating responses. Despite its growing popularity due to its flexibility and low cost, the security implications of RAG have not been extensively studied. The data for such systems are often collected from public sources, providing an attacker with a gateway for indirect prompt injections to manipulate the responses of the model. In this paper, we investigate the security of RAG systems against end-to-end indirect prompt manipulations. First, we review existing RAG framework pipelines, deriving a prototypical architecture and identifying potentially critical configuration parameters. We then examine prior work, searching for techniques that attackers can use to perform indirect prompt manipulations. Finally, we implemented Rag n Roll, a framework to determine the effectiveness of attacks against end-to-end RAG applications. Our results show that existing attacks are mostly optimized to boost the ranking of malicious documents during the retrieval phase. However, a higher rank does not immediately translate into a reliable attack. Most attacks, against various configurations, settle around a 40% success rate, which could rise to 60% when considering ambiguous answers as successful attacks (those that include the expected benign one as well). Additionally, when using unoptimized documents, attackers deploying two of them (or more) for a target query can achieve similar results as those using optimized ones. Finally, exploration of the configuration space of a RAG showed limited impact in thwarting the attacks, where the most successful combination severely undermines functionality.

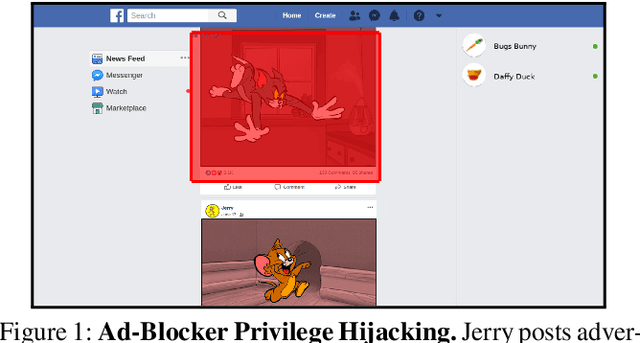

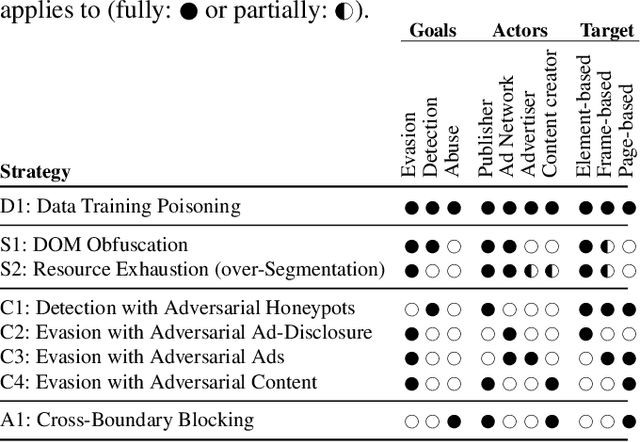

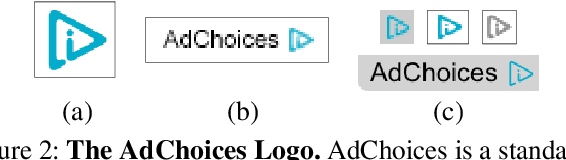

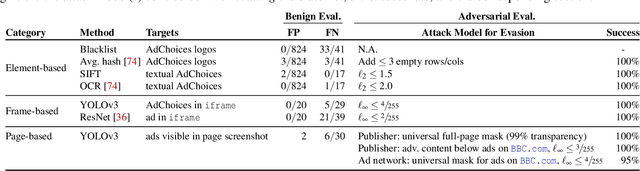

Ad-versarial: Defeating Perceptual Ad-Blocking

Nov 08, 2018

Abstract: Perceptual ad-blocking is a novel approach that uses visual cues to detect online advertisements. Compared to classical filter lists, perceptual ad-blocking is believed to be less prone to an arms race with web publishers and ad-networks. In this work we use techniques from adversarial machine learning to demonstrate that this may not be the case. We show that perceptual ad-blocking engenders a new arms race that likely disfavors ad-blockers. Unexpectedly, perceptual ad-blocking can also introduce new vulnerabilities that let an attacker bypass web security boundaries and mount DDoS attacks. We first analyze the design space of perceptual ad-blockers and present a unified architecture that incorporates prior academic and commercial work. We then explore a variety of attacks on the ad-blocker's full visual-detection pipeline that enable publishers or ad-networks to evade or detect ad-blocking, and at times even abuse its high privilege level to bypass web security boundaries. Our attacks exploit the unreasonably strong threat model that perceptual ad-blockers must survive. Finally, we evaluate a concrete set of attacks on an ad-blocker's internal ad-classifier by instantiating adversarial examples for visual systems in a real web-security context. For six ad-detection techniques, we create perturbed ads, ad-disclosures, and native web content that mislead perceptual ad-blocking with 100% success rates. For example, we demonstrate how a malicious user can upload adversarial content (e.g., a perturbed image in a Facebook post) that fools the ad-blocker into removing other users' non-ad content.
