Gaohua Lin

Video Smoke Detection Based on Deep Saliency Network

Sep 08, 2018

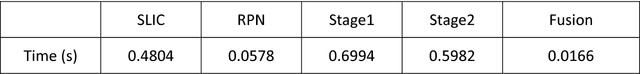

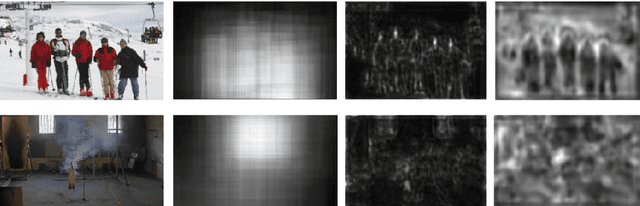

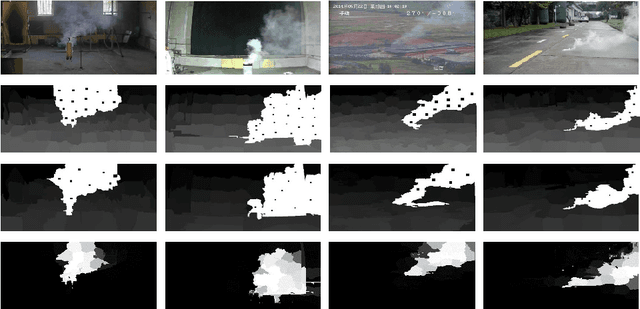

Abstract:Video smoke detection is a promising fire detection method especially in open or large spaces and outdoor environments. Traditional smoke detection consists of candidate region extraction and classification, but it lacks powerful characterization for smoke. In this paper, we propose a novel method for video smoke detection based on deep saliency network. Visual saliency detection aims to highlight the most important object regions in an image. The pixel-level and object-level salient CNNs are combined to extract the informative smoke saliency map. For the need of application for smoke event detection, an end-to-end framework for salient smoke detection and existence prediction of smoke is proposed. The deep feature map is combined with the saliency map to predict the existence of smoke in image. Initial dataset and augmented dataset are built to measure the performance of frameworks with different design strategies. Qualitative and quantitative analysis at frame-level and pixel-level demonstrates the excellent performance of the ultimate framework.

Domain Adaptation from Synthesis to Reality in Single-model Detector for Video Smoke Detection

May 18, 2018

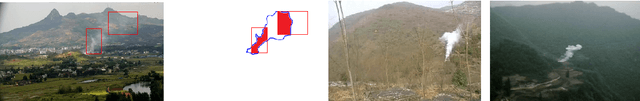

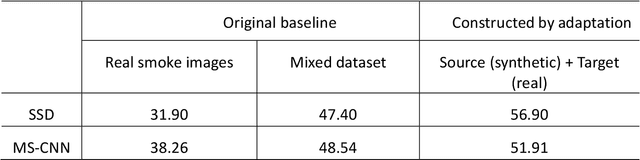

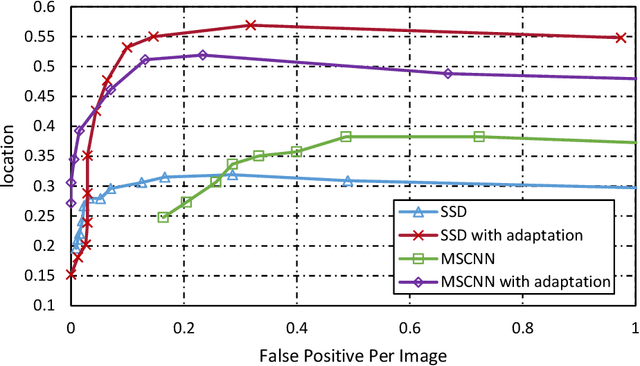

Abstract:This paper proposes a method for video smoke detection using synthetic smoke samples. The virtual data can automatically offer precise and rich annotated samples. However, the learning of smoke representations will be hurt by the appearance gap between real and synthetic smoke samples. The existed researches mainly work on the adaptation to samples extracted from original annotated samples. These methods take the object detection and domain adaptation as two independent parts. To train a strong detector with rich synthetic samples, we construct the adaptation to the detection layer of state-of-the-art single-model detectors (SSD and MS-CNN). The training procedure is an end-to-end stage. The classification, location and adaptation are combined in the learning. The performance of the proposed model surpasses the original baseline in our experiments. Meanwhile, our results show that the detectors based on the adversarial adaptation are superior to the detectors based on the discrepancy adaptation. Code will be made publicly available on http://smoke.ustc.edu.cn. Moreover, the domain adaptation for two-stage detector is described in Appendix A.

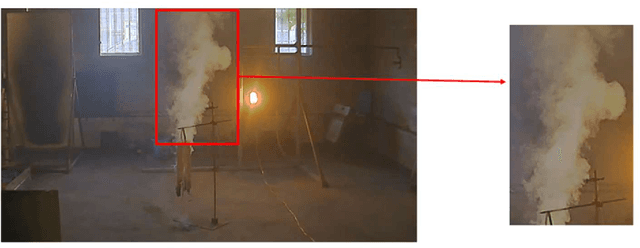

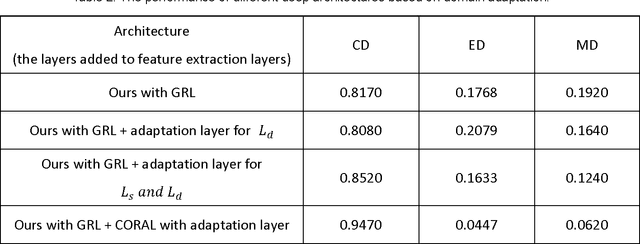

Deep Domain Adaptation Based Video Smoke Detection using Synthetic Smoke Images

Mar 31, 2017

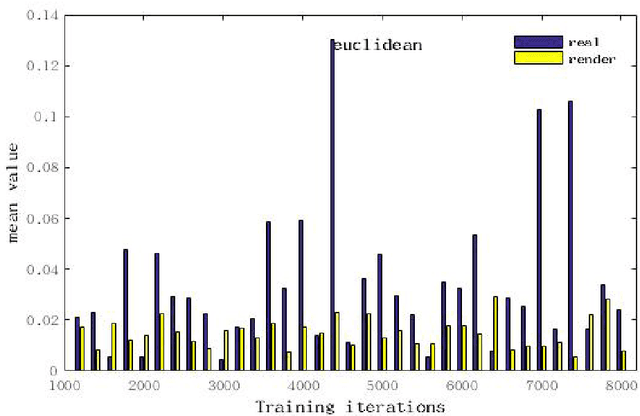

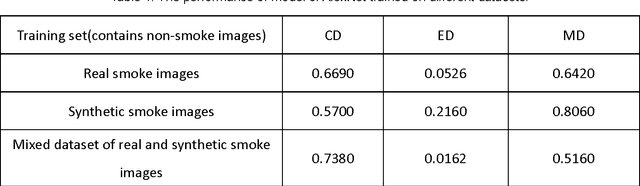

Abstract:In this paper, a deep domain adaptation based method for video smoke detection is proposed to extract a powerful feature representation of smoke. Due to the smoke image samples limited in scale and diversity for deep CNN training, we systematically produced adequate synthetic smoke images with a wide variation in the smoke shape, background and lighting conditions. Considering that the appearance gap (dataset bias) between synthetic and real smoke images degrades significantly the performance of the trained model on the test set composed fully of real images, we build deep architectures based on domain adaptation to confuse the distributions of features extracted from synthetic and real smoke images. This approach expands the domain-invariant feature space for smoke image samples. With their approximate feature distribution off non-smoke images, the recognition rate of the trained model is improved significantly compared to the model trained directly on mixed dataset of synthetic and real images. Experimentally, several deep architectures with different design choices are applied to the smoke detector. The ultimate framework can get a satisfactory result on the test set. We believe that our approach is a start in the direction of utilizing deep neural networks enhanced with synthetic smoke images for video smoke detection.

* The manuscript approved by all authors is our original work, and has submitted to Fire Safety Journal for peer review previously. There are 4516 words, 8 figures and 2 tables in this manuscript

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge