Gabriel Van Zandycke

Sportradar AG, UCLouvain

Context-Aware 3D Object Localization from Single Calibrated Images: A Study of Basketballs

Sep 07, 2023

Abstract:Accurately localizing objects in three dimensions (3D) is crucial for various computer vision applications, such as robotics, autonomous driving, and augmented reality. This task finds another important application in sports analytics and, in this work, we present a novel method for 3D basketball localization from a single calibrated image. Our approach predicts the object's height in pixels in image space by estimating its projection onto the ground plane within the image, leveraging the image itself and the object's location as inputs. The 3D coordinates of the ball are then reconstructed by exploiting the known projection matrix. Extensive experiments on the public DeepSport dataset, which provides ground truth annotations for 3D ball location alongside camera calibration information for each image, demonstrate the effectiveness of our method, offering substantial accuracy improvements compared to recent work. Our work opens up new possibilities for enhanced ball tracking and understanding, advancing computer vision in diverse domains. The source code of this work is made publicly available at https://github.com/gabriel-vanzandycke/deepsport.
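The reconstruction step mentioned in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names are hypothetical, and it assumes a world frame whose ground plane is Z = 0, a 3x4 projection matrix `P`, and that the pixel of the ball's ground projection has already been predicted.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(a, b):
    """Solve the 3x3 linear system a @ x = b with Cramer's rule."""
    d = det3(a)
    if abs(d) < 1e-12:
        raise ValueError("singular system: cannot invert the ground homography")
    return [det3([[b[i] if j == k else a[i][j] for j in range(3)]
                  for i in range(3)]) / d
            for k in range(3)]


def project(P, point):
    """Project a 3D world point to pixel coordinates with a 3x4 matrix P."""
    xh = list(point) + [1.0]
    s = [sum(P[i][j] * xh[j] for j in range(4)) for i in range(3)]
    return s[0] / s[2], s[1] / s[2]


def ball_3d_from_ground_projection(P, ball_px, ground_px):
    """Hypothetical helper: recover (X, Y, Z) of the ball center.

    Assumes the ground plane is Z = 0 in world coordinates.
    """
    # Step 1: the ground pixel lies on Z = 0, so columns 0, 1 and 3 of P
    # form a homography that maps (X, Y, 1) to that pixel up to scale.
    H = [[row[0], row[1], row[3]] for row in P]
    q = solve3(H, [ground_px[0], ground_px[1], 1.0])
    X, Y = q[0] / q[2], q[1] / q[2]

    # Step 2: the ball is at (X, Y, Z) on the vertical line through (X, Y, 0).
    # Its projection is (a + Z * b) up to scale; solve for Z by least squares
    # over the two pixel equations.
    a = [row[0] * X + row[1] * Y + row[3] for row in P]
    b = [row[2] for row in P]
    u, v = ball_px
    c = [b[0] - u * b[2], b[1] - v * b[2]]
    d = [u * a[2] - a[0], v * a[2] - a[1]]
    Z = (c[0] * d[0] + c[1] * d[1]) / (c[0] ** 2 + c[1] ** 2)
    return X, Y, Z
```

In this sketch the pixel height output by a network would be turned into `ground_px` before the call, for instance by offsetting the ball pixel along the image of the vertical direction. How the paper performs that step is not stated in the abstract.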

DeepSportradar-v1: Computer Vision Dataset for Sports Understanding with High Quality Annotations

Aug 17, 2022

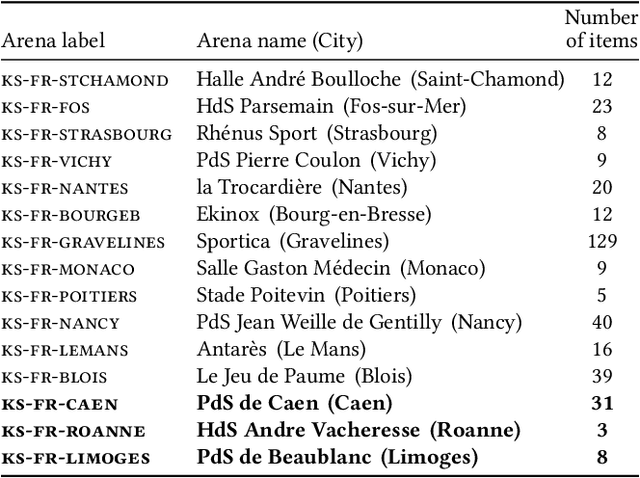

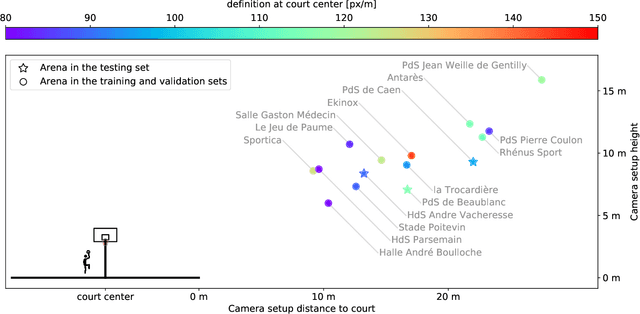

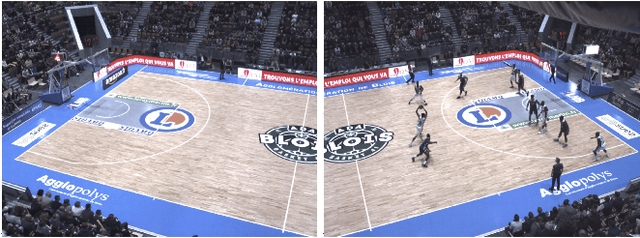

Abstract:With the recent development of Deep Learning applied to Computer Vision, sport video understanding has gained a lot of attention, providing much richer information for both sport consumers and leagues. This paper introduces DeepSportradar-v1, a suite of computer vision tasks, datasets and benchmarks for automated sport understanding. The main purpose of this framework is to close the gap between academic research and real world settings. To this end, the datasets provide high-resolution raw images, camera parameters and high quality annotations. DeepSportradar currently supports four challenging tasks related to basketball: ball 3D localization, camera calibration, player instance segmentation and player re-identification. For each of the four tasks, a detailed description of the dataset, objective, performance metrics, and the proposed baseline method are provided. To encourage further research on advanced methods for sport understanding, a competition is organized as part of the MMSports workshop from the ACM Multimedia 2022 conference, where participants have to develop state-of-the-art methods to solve the above tasks. The four datasets, development kits and baselines are publicly available.

Ball 3D localization from a single calibrated image

Apr 04, 2022

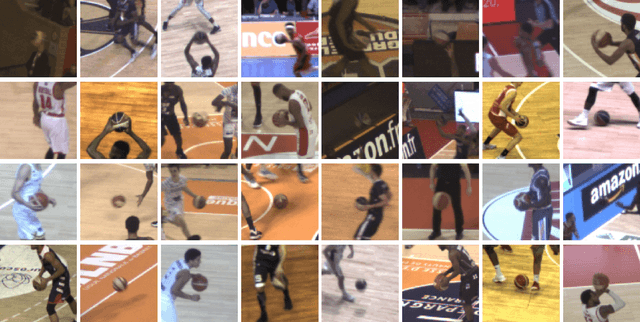

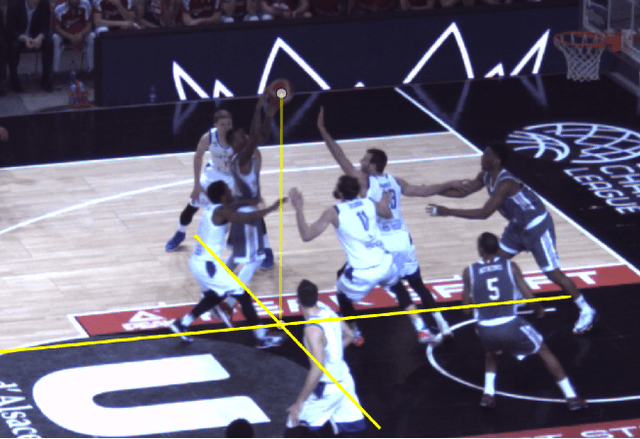

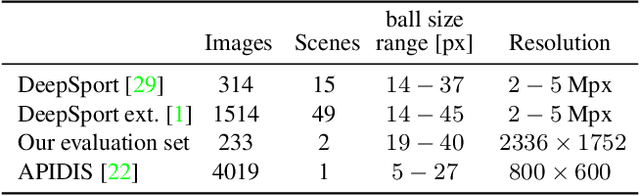

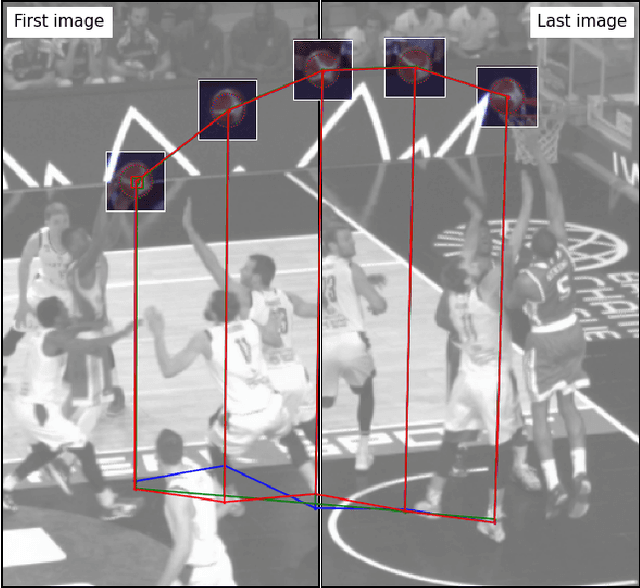

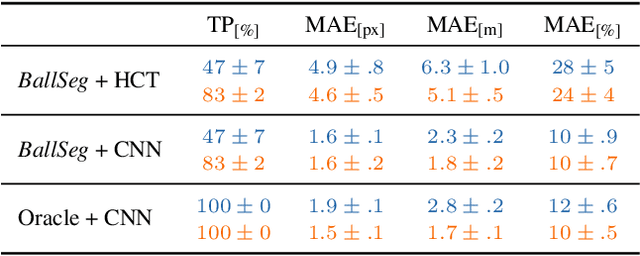

Abstract:Ball 3D localization in team sports has various applications including automatic offside detection in soccer, or shot release localization in basketball. Today, this task is either resolved by using expensive multi-view setups, or by restricting the analysis to ballistic trajectories. In this work, we propose to address the task on a single image from a calibrated monocular camera by estimating the ball diameter in pixels and using the knowledge of the real ball diameter in meters. This approach is suitable for any game situation where the ball is (even partly) visible. To achieve this, we use a small neural network trained on image patches around candidates generated by a conventional ball detector. Besides predicting ball diameter, our network outputs the confidence of having a ball in the image patch. Validations on 3 basketball datasets reveal that our model gives remarkable predictions on ball 3D localization. In addition, through its confidence output, our model improves the detection rate by filtering the candidates produced by the detector. The contributions of this work are (i) the first model to address 3D ball localization on a single image, (ii) an effective method for ball 3D annotation from single calibrated images, (iii) a high quality 3D ball evaluation dataset annotated from a single viewpoint. In addition, the code to reproduce this research is made freely available at https://github.com/gabriel-vanzandycke/deepsport.
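The geometric idea behind the diameter-based approach can be sketched with a simple pinhole model. This is an illustration under stated assumptions, not the paper's code: the function name is hypothetical, lens distortion is ignored, the ball diameter of 0.24 m is an assumed value for a standard basketball, and the result is expressed in the camera frame rather than in world coordinates.

```python
BALL_DIAMETER_M = 0.24  # assumed real diameter of a basketball, in meters


def ball_position_camera_frame(u, v, diameter_px, fx, fy, cx, cy,
                               ball_diameter_m=BALL_DIAMETER_M):
    """Hypothetical helper: approximate ball center in the camera frame.

    (u, v) is the ball center in pixels, diameter_px its apparent diameter,
    (fx, fy) the focal lengths in pixels and (cx, cy) the principal point.
    """
    if diameter_px <= 0:
        raise ValueError("diameter_px must be positive")
    # Similar triangles: apparent size shrinks in proportion to depth.
    focal = 0.5 * (fx + fy)
    depth = focal * ball_diameter_m / diameter_px
    # Back-project the pixel along its viewing ray to the estimated depth.
    x = (u - cx) / fx * depth
    y = (v - cy) / fy * depth
    return x, y, depth
```

A world-frame position would additionally require applying the inverse of the camera's extrinsic rotation and translation, which are part of the calibration the abstract refers to.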

DeepSportLab: a Unified Framework for Ball Detection, Player Instance Segmentation and Pose Estimation in Team Sports Scenes

Dec 01, 2021

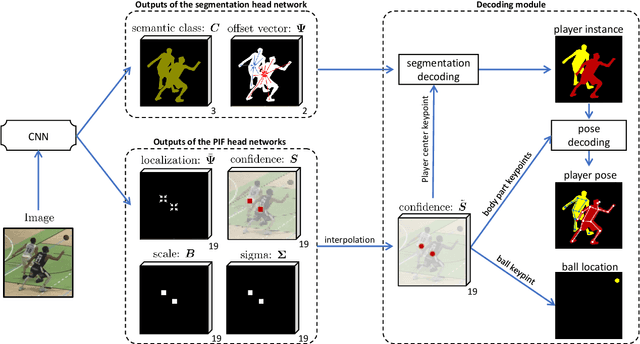

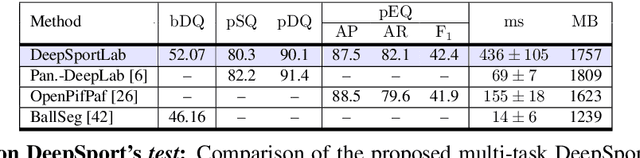

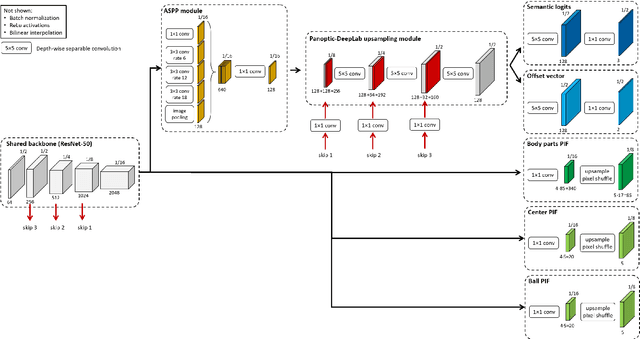

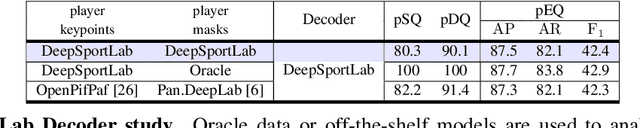

Abstract:This paper presents a unified framework to (i) locate the ball, (ii) predict the pose, and (iii) segment the instance mask of players in team sports scenes. Those problems are of high interest in automated sports analytics, production, and broadcast. A common practice is to individually solve each problem by exploiting universal state-of-the-art models, e.g., Panoptic-DeepLab for player segmentation. In addition to the increased complexity resulting from the multiplication of single-task models, the use of the off-the-shelf models also impedes the performance due to the complexity and specificity of the team sports scenes, such as strong occlusion and motion blur. To circumvent those limitations, our paper proposes to train a single model that simultaneously predicts the ball and the player mask and pose by combining the part intensity fields and the spatial embeddings principles. Part intensity fields provide the ball and player location, as well as player joints location. Spatial embeddings are then exploited to associate player instance pixels to their respective player center, but also to group player joints into skeletons. We demonstrate the effectiveness of the proposed model on the DeepSport basketball dataset, achieving comparable performance to the SoA models addressing each individual task separately.

Real-time CNN-based Segmentation Architecture for Ball Detection in a Single View Setup

Jul 23, 2020

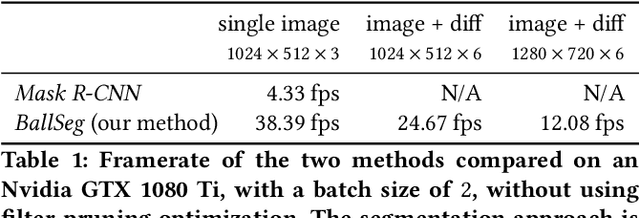

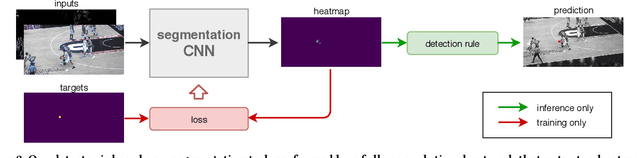

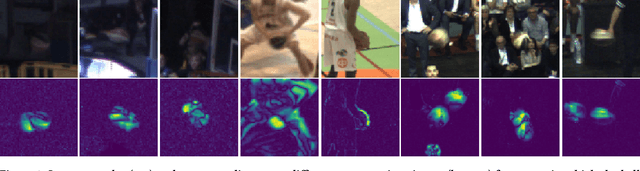

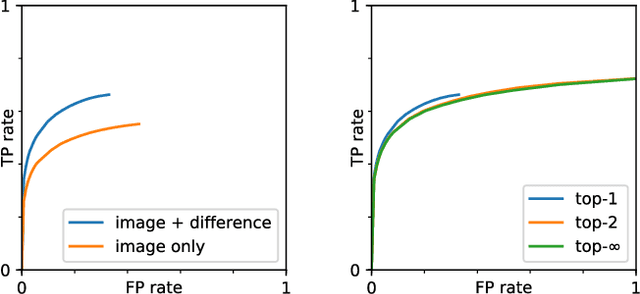

Abstract:This paper considers the task of detecting the ball from a single viewpoint in the challenging but common case where the ball interacts frequently with players while being poorly contrasted with respect to the background. We propose a novel approach by formulating the problem as a segmentation task solved by an efficient CNN architecture. To take advantage of the ball dynamics, the network is fed with a pair of consecutive images. Our inference model can run in real time without the delay induced by a temporal analysis. We also show that test-time data augmentation allows for a significant increase in detection accuracy. As an additional contribution, we publicly release the dataset on which this work is based.
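Test-time data augmentation, as mentioned in this abstract, can be sketched generically. The abstract does not say which augmentations were used, so horizontal flipping is an assumption here, and `predict` stands for any hypothetical function that maps an image (a list of rows) to a heatmap of the same shape.

```python
def hflip(image):
    """Mirror a 2D image (list of rows) left to right."""
    return [row[::-1] for row in image]


def predict_with_flip_tta(predict, image):
    """Average the heatmap of the image with that of its mirrored copy.

    `predict` is a hypothetical callable returning a heatmap with the same
    height and width as its input.
    """
    direct = predict(image)
    # Predict on the mirrored image, then mirror the result back so both
    # heatmaps are aligned pixel by pixel before averaging.
    mirrored_back = hflip(predict(hflip(image)))
    return [[0.5 * (a + b) for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(direct, mirrored_back)]
```

Averaging aligned predictions tends to reduce position-dependent errors of the model, at the cost of one extra forward pass per augmentation.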

* 8 pages, 10 figures
