Frank W. Ohl

Decentralized Deep Reinforcement Learning for a Distributed and Adaptive Locomotion Controller of a Hexapod Robot

May 21, 2020

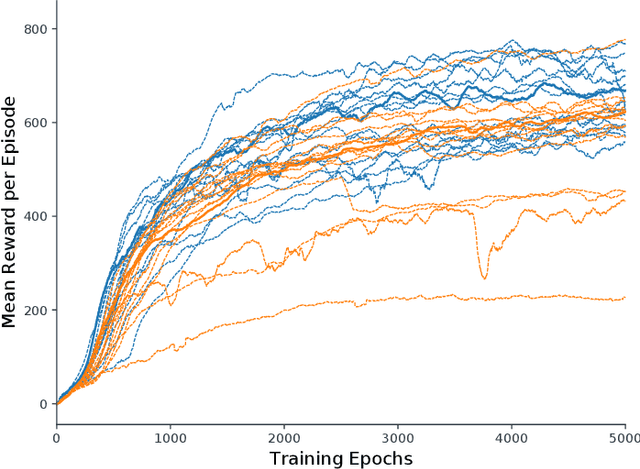

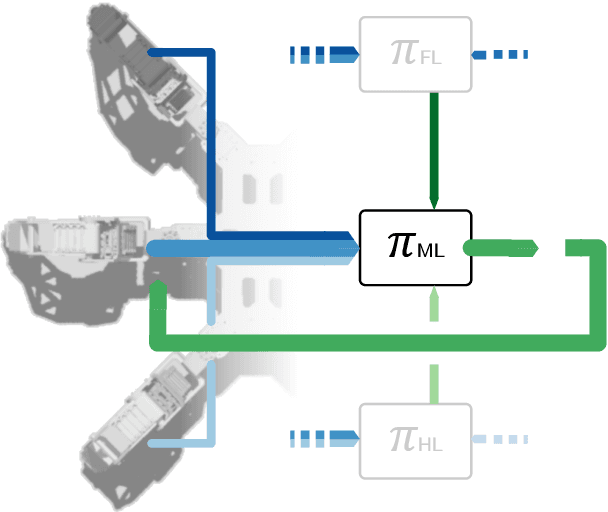

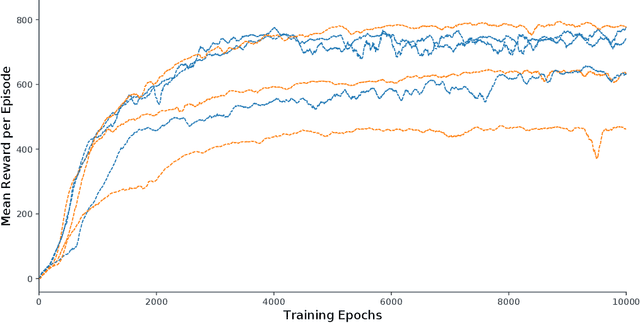

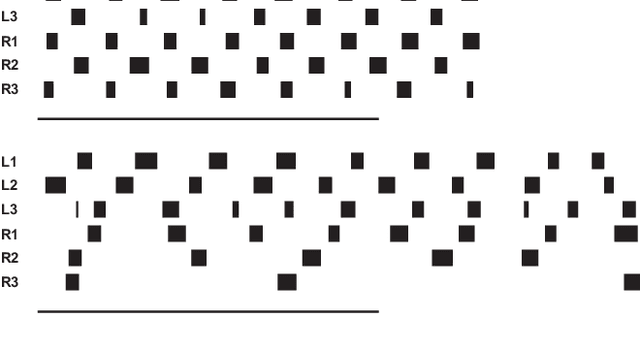

Abstract: Locomotion is a prime example of adaptive behavior in animals, and biological control principles have inspired control architectures for legged robots. While machine learning has been successfully applied to many tasks in recent years, Deep Reinforcement Learning approaches still appear to struggle when applied to real-world robots in continuous control tasks. In particular, they do not appear to be robust solutions that can handle uncertainties well. Therefore, there is new interest in incorporating biological principles into such learning architectures. While inducing a hierarchical organization, as found in motor control, has already shown some success, we here propose a decentralized organization, as found in insect motor control, for the coordination of different legs. A decentralized and distributed architecture is introduced on a simulated hexapod robot, and the details of the controller are learned through Deep Reinforcement Learning. First, we show that such a concurrent local structure is able to learn better walking behavior. Second, we show that this simpler organization is learned faster than holistic approaches.

From Crystallized Adaptivity to Fluid Adaptivity in Deep Reinforcement Learning -- Insights from Biological Systems on Adaptive Flexibility

Aug 13, 2019

Abstract: Recent developments in machine-learning algorithms have led to impressive performance increases in many traditional application scenarios of artificial intelligence research. In the area of deep reinforcement learning, deep learning functional architectures are combined with incremental learning schemes for sequential tasks that include interaction-based, but often delayed, feedback. Despite their impressive successes, modern machine-learning approaches, including deep reinforcement learning, still perform weakly when compared to flexibly adaptive biological systems in certain naturally occurring scenarios. Such scenarios include transfers to environments different from the ones in which the training took place, or to environments that change dynamically. Biological systems often master both through a capability that we here term "fluid adaptivity", to contrast it with the much slower adaptivity ("crystallized adaptivity") of the prior learning from which the behavior emerged. In this article, we derive and discuss research strategies, based on analyses of fluid adaptivity in biological systems and its neuronal modeling, that might help equip future artificially intelligent systems with capabilities of fluid adaptivity more similar to those seen in some biologically intelligent systems. A key component of this research strategy is the dynamization of the problem space itself and the implementation of this dynamization by suitably designed, flexibly interacting modules.
