Farhan Shafiq

Accelerating Training using Tensor Decomposition

Sep 10, 2019

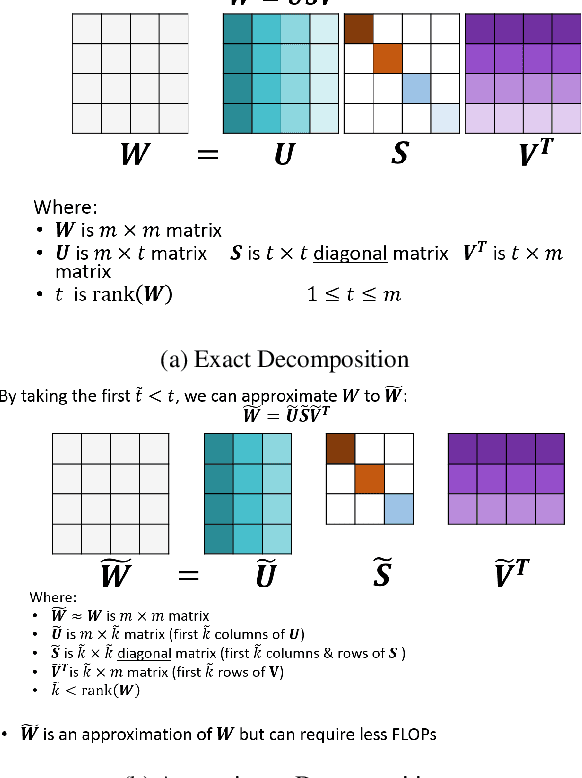

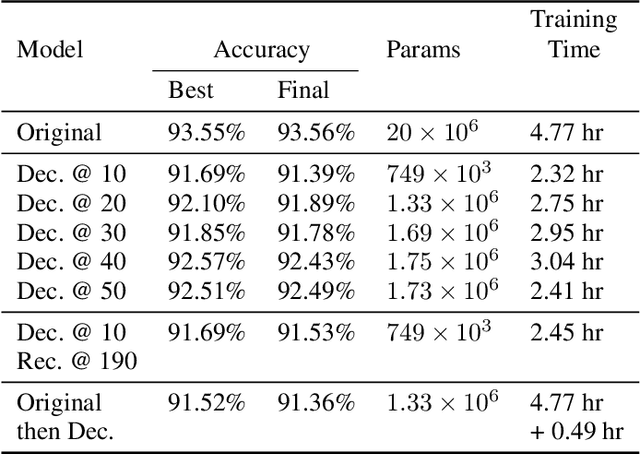

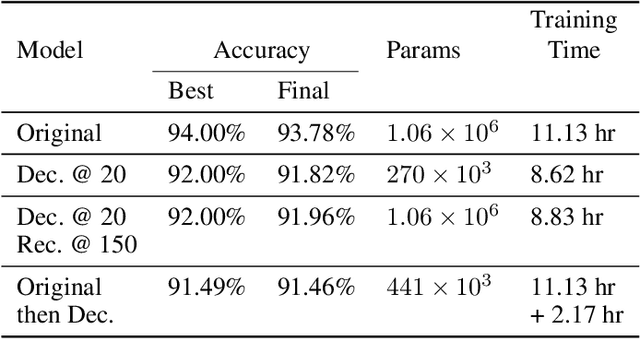

Abstract: Tensor decomposition is a well-known approach for reducing the latency and the number of parameters of a pre-trained model. In this paper, however, we propose using tensor decomposition to reduce the time needed to train a model from scratch. In our approach, we train the model from scratch (i.e., from randomly initialized weights) with its original architecture for a small number of epochs, then decompose the model, and then continue training the decomposed model to the end. An optional final step converts the decomposed architecture back to the original architecture. We present results of this approach on both the CIFAR10 and Imagenet datasets, and show that training can be sped up by up to 2x with an accuracy drop of at most 1.5%, and in other cases with no accuracy drop. This training acceleration approach is independent of hardware and is expected to yield similar speedups on both CPU and GPU platforms.
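To make the source of the speedup concrete, here is a minimal sketch of the cost arithmetic for one layer. It assumes a plain rank-r matrix factorization of a dense layer, which is only one possible decomposition; the abstract does not state which decomposition the paper uses, and the function names and layer sizes below are hypothetical.

```python
def dense_cost(n_in, n_out):
    """Multiply-accumulates (equal to the weight count) of a dense layer y = W x."""
    return n_in * n_out


def low_rank_cost(n_in, n_out, rank):
    """Cost after replacing W (n_out x n_in) with U (n_out x rank) times V (rank x n_in)."""
    return rank * (n_in + n_out)


def max_rank_for_speedup(n_in, n_out, speedup):
    """Largest rank whose factorized cost is at most dense_cost / speedup."""
    return int(dense_cost(n_in, n_out) / (speedup * (n_in + n_out)))


# Hypothetical 512 x 512 layer, targeting a 2x reduction in per-layer cost.
n_in, n_out = 512, 512
rank = max_rank_for_speedup(n_in, n_out, 2.0)
ratio = dense_cost(n_in, n_out) / low_rank_cost(n_in, n_out, rank)
print(rank, ratio)
```

This counts only the arithmetic of a single layer. The end-to-end training speedup reported in the abstract also depends on how many epochs are spent in the original architecture before decomposing.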

DeepShift: Towards Multiplication-Less Neural Networks

Jun 06, 2019

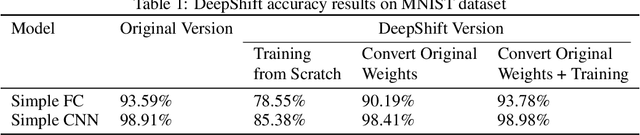

Abstract: Deep learning models, especially DCNNs, have obtained high accuracies in several computer vision applications. However, for deployment in mobile environments, their high computation and power budget proves to be a major bottleneck. Convolution layers and fully connected layers, because of their intense use of multiplications, are the dominant contributors to this computation budget. This paper proposes to tackle this problem by introducing two new operations, convolutional shifts and fully-connected shifts, that replace multiplications altogether and use bitwise shift and bitwise negation instead. This family of neural network architectures (those that use convolutional shifts and fully-connected shifts) is referred to as DeepShift models. With such DeepShift models, which can be implemented with no multiplications, the authors have obtained accuracies of up to 93.6% on the CIFAR10 dataset, and Top-1/Top-5 accuracies of 70.9%/90.13% on the Imagenet dataset. Extensive testing is performed on various well-known CNN architectures after converting all their convolution layers and fully connected layers to their bitwise shift counterparts, and we show that in some architectures, the Top-1 accuracy drops by less than 4% and the Top-5 accuracy drops by less than 1.5%. The experiments have been conducted with the PyTorch framework, and the code for training and running is submitted along with the paper and will be made available online.
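The idea of replacing a multiplication with a bitwise shift and a negation can be illustrated with a small sketch. It assumes integer (fixed-point) activations and weights rounded to a signed power of two. This is an illustration only, not the paper's PyTorch implementation, and the helper names are hypothetical.

```python
import math


def to_shift(weight):
    """Round a nonzero weight to sign * 2**shift and return (sign, shift)."""
    sign = -1 if weight < 0 else 1
    shift = round(math.log2(abs(weight)))
    return sign, shift


def shift_mul(x, sign, shift):
    """Scale integer x by sign * 2**shift using only a shift and a negation."""
    y = x << shift if shift >= 0 else x >> -shift  # right shift floors the result
    return -y if sign < 0 else y


def shift_dot(xs, weights):
    """Dot product in which every product is a shift; only additions remain."""
    return sum(shift_mul(x, *to_shift(w)) for x, w in zip(xs, weights))


# 0.3 rounds to 2**-2 and -3.7 rounds to -(2**2).
print(shift_dot([16, 5], [0.3, -3.7]))
```

Rounding weights to powers of two is lossy, which is consistent with the accuracy drops the abstract reports for some architectures.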

Automated flow for compressing convolution neural networks for efficient edge-computation with FPGA

Dec 18, 2017

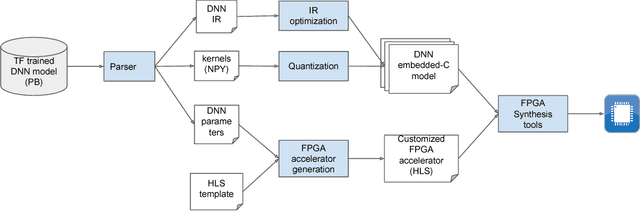

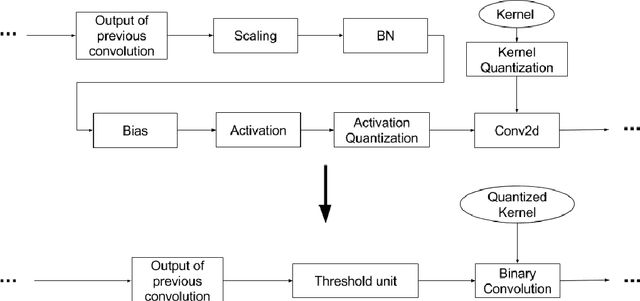

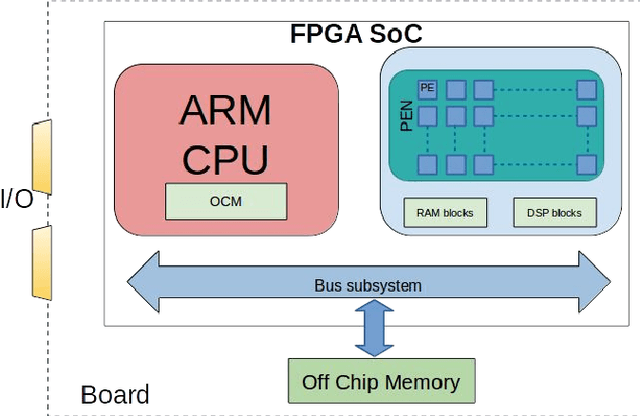

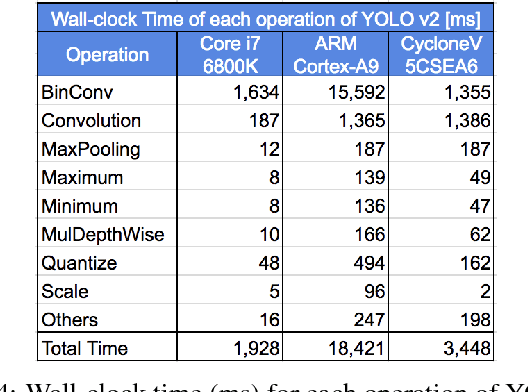

Abstract: Solutions based on deep convolutional neural networks (CNNs) are the current state of the art for computer vision tasks. Due to the large size of these models, they are typically run on clusters of CPUs or GPUs. However, power requirements and cost budgets can be a major hindrance to the adoption of CNNs for IoT applications. Recent research highlights that CNNs contain significant redundancy in their structure and can be quantized to lower bit-width parameters and activations while maintaining acceptable accuracy. Low bit-width and especially single bit-width (binary) CNNs are particularly suitable for mobile applications based on FPGA implementation, due to the bitwise logic operations involved in binarized CNNs. Moreover, the transition to lower bit-widths opens new avenues for performance optimization and model improvement. In this paper, we present an automatic flow from trained TensorFlow models to an FPGA system-on-chip implementation of binarized CNNs. This flow involves quantization of model parameters and activations, generation of the network and model in embedded C, and automatic generation of the FPGA accelerator for binary convolutions. The automated flow is demonstrated through an implementation of binarized "YOLOV2" on the low-cost, low-power Cyclone-V FPGA device. Experiments on object detection using binarized YOLOV2 demonstrate a significant performance benefit in terms of model size and inference speed on the FPGA compared to CPU and mobile CPU platforms. Furthermore, the entire automated flow from trained models to FPGA synthesis can be completed within one hour.
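The bitwise logic operations mentioned for binarized CNNs are commonly realized as an XNOR followed by a population count. The sketch below shows that standard trick for a single dot product, assuming values in {-1, +1} packed one per bit. The abstract does not describe the accelerator's actual datapath, so this is an assumption for illustration, with hypothetical helper names.

```python
def pack(values):
    """Pack a list of +1/-1 values into an int; bit i is 1 when values[i] is +1."""
    bits = 0
    for i, v in enumerate(values):
        if v > 0:
            bits |= 1 << i
    return bits


def binary_dot(a_bits, b_bits, n):
    """Dot product of two {-1, +1} vectors of length n from their packed bits."""
    mask = (1 << n) - 1
    # XNOR marks positions where the signs agree; each agreement contributes +1
    # and each disagreement contributes -1 to the dot product.
    matches = bin(~(a_bits ^ b_bits) & mask).count("1")
    return 2 * matches - n


a = [1, -1, 1, 1]
b = [1, 1, -1, 1]
print(binary_dot(pack(a), pack(b), len(a)))
```

On an FPGA, the XNOR and the bit count map onto simple logic rather than multipliers, which is one reason binarized networks suit such devices.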
