Evan Scope Crafts

Can Diffusion Models Provide Rigorous Uncertainty Quantification for Bayesian Inverse Problems?

Mar 04, 2025

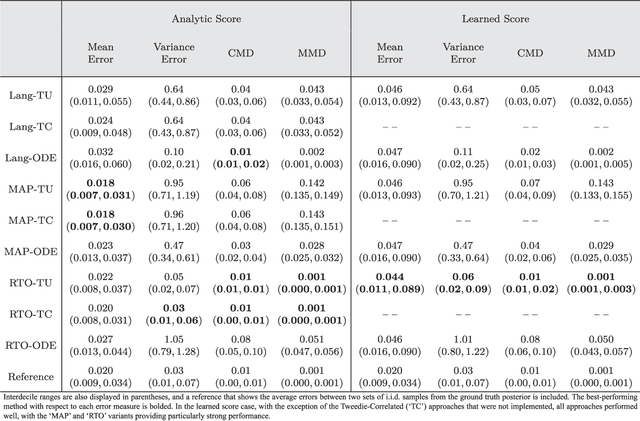

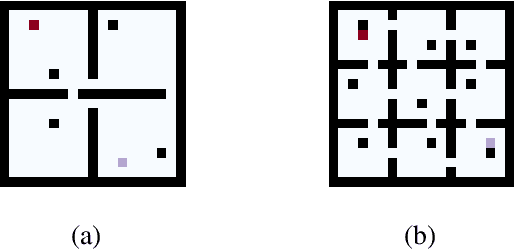

Abstract: In recent years, the ascendance of diffusion modeling as a state-of-the-art generative modeling approach has spurred significant interest in the use of diffusion models as priors in Bayesian inverse problems. However, it is unclear how to optimally integrate a diffusion model trained on the prior distribution with a given likelihood function to obtain posterior samples. While algorithms that have been developed for this purpose can produce high-quality, diverse point estimates of the unknown parameters of interest, they are often tested on problems where the prior distribution is analytically unknown, making it difficult to assess their performance in providing rigorous uncertainty quantification. In this work, we introduce a new framework, Bayesian Inverse Problem Solvers through Diffusion Annealing (BIPSDA), for diffusion-model-based posterior sampling. The framework unifies several recently proposed diffusion-model-based posterior sampling algorithms and contains novel algorithms that can be realized through flexible combinations of design choices. Algorithms within our framework were tested on model problems with a Gaussian mixture prior and likelihood functions inspired by problems in image inpainting, x-ray tomography, and phase retrieval. In this setting, approximate ground-truth posterior samples can be obtained, enabling principled evaluation of the performance of the algorithms. The results demonstrate that BIPSDA algorithms can provide strong performance on the image inpainting and x-ray tomography based problems, while the challenging phase retrieval problem, which is difficult to sample from even when the posterior density is known, remains outside the reach of the diffusion-model-based samplers.
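
To illustrate why a Gaussian mixture prior makes ground-truth comparison possible: when the forward operator is linear and the noise is Gaussian, the posterior is again a Gaussian mixture with closed-form weights, means, and covariances. The sketch below is not the authors' code. The Gaussian noise model, the function name `gmm_linear_posterior`, and all parameter names are assumptions made for illustration; the abstract does not specify them.

```python
import numpy as np

def gmm_linear_posterior(weights, means, covs, A, y, noise_std):
    """Posterior over x given y = A x + noise, with a Gaussian mixture prior on x.

    Hypothetical helper: assumes i.i.d. Gaussian noise with standard deviation
    noise_std. Returns the posterior mixture weights, means, and covariances.
    """
    m = A.shape[0]
    R = noise_std ** 2 * np.eye(m)  # noise covariance
    log_w, post_means, post_covs = [], [], []
    for w, mu, S in zip(weights, means, covs):
        C = A @ S @ A.T + R              # covariance of y under this component
        r = y - A @ mu                   # residual of the observation
        K = S @ A.T @ np.linalg.inv(C)   # gain matrix
        post_means.append(mu + K @ r)
        post_covs.append(S - K @ A @ S)
        # Log evidence of y under this component; the 2*pi constant is shared
        # by all components and cancels after normalization.
        _, logdet = np.linalg.slogdet(C)
        log_w.append(np.log(w) - 0.5 * (logdet + r @ np.linalg.solve(C, r)))
    log_w = np.array(log_w)
    post_w = np.exp(log_w - log_w.max())  # subtract the max for stability
    post_w /= post_w.sum()
    return post_w, np.array(post_means), np.array(post_covs)

# Inpainting-style toy problem: observe only the first of two coordinates.
prior_w = np.array([0.5, 0.5])
prior_mu = np.array([[-2.0, -2.0], [2.0, 2.0]])
prior_cov = np.array([np.eye(2), np.eye(2)])
A_mask = np.array([[1.0, 0.0]])
post_w, post_mu, post_cov = gmm_linear_posterior(
    prior_w, prior_mu, prior_cov, A_mask, np.array([2.0]), noise_std=1.0
)
```

Samples drawn from this closed-form mixture can serve as a reference for a sampler in the linear case. A nonlinear forward model such as phase retrieval does not admit this closed form.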

Multiple Plans are Better than One: Diverse Stochastic Planning

Dec 31, 2020

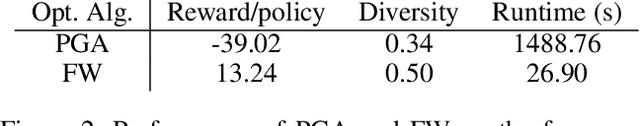

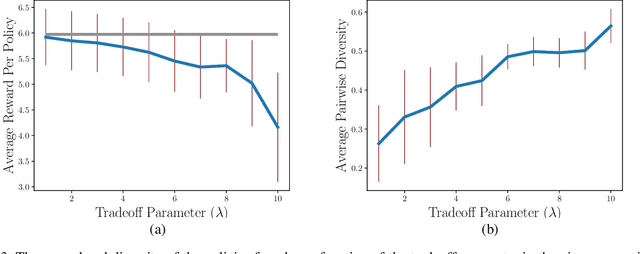

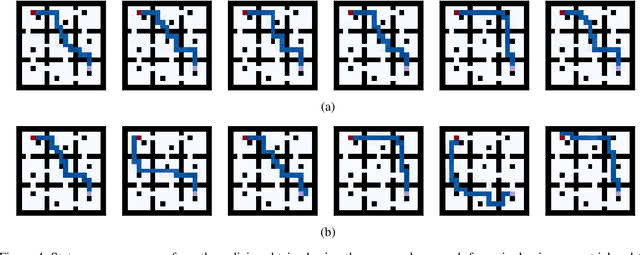

Abstract: In planning problems, it is often challenging to fully model the desired specifications. In particular, in human-robot interaction, such difficulty may arise due to human preferences that are either private or complex to model. Consequently, the resulting objective function can only partially capture the specifications, and optimizing it may lead to poor performance with respect to the true specifications. Motivated by this challenge, we formulate a problem, called diverse stochastic planning, that aims to generate a set of representative (small and diverse) behaviors that are near-optimal with respect to the known objective. In particular, the problem aims to compute a set of diverse and near-optimal policies for systems modeled by a Markov decision process. We cast the problem as a constrained nonlinear optimization for which we propose a solution relying on the Frank-Wolfe method. We then prove that the proposed solution converges to a stationary point and demonstrate its efficacy in several planning problems.
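
For readers unfamiliar with the Frank-Wolfe method named in the abstract, the following is a minimal generic sketch on a toy problem. It is not the paper's formulation: the probability simplex constraint, the quadratic objective, the step size rule `2 / (k + 2)`, and the name `frank_wolfe_simplex` are all assumptions chosen for illustration.

```python
import numpy as np

def frank_wolfe_simplex(grad, x0, num_iters=200):
    """Minimize a smooth function over the probability simplex with Frank-Wolfe.

    Hypothetical helper: grad(x) returns the gradient at x, and x0 is a
    starting point inside the simplex.
    """
    x = np.asarray(x0, dtype=float).copy()
    for k in range(num_iters):
        g = grad(x)
        # Linear minimization oracle: over the simplex, the minimizer of
        # <g, s> is the vertex at the smallest gradient coordinate.
        s = np.zeros_like(x)
        s[np.argmin(g)] = 1.0
        gamma = 2.0 / (k + 2.0)  # standard diminishing step size
        x += gamma * (s - x)     # convex combination keeps x in the simplex
    return x

# Toy objective: f(x) = 0.5 * ||x - target||^2, whose gradient is x - target.
target = np.array([0.2, 0.5, 0.3])
x_star = frank_wolfe_simplex(lambda x: x - target, np.ones(3) / 3, num_iters=2000)
```

Each iterate is a convex combination of simplex vertices, so the constraint is satisfied without any projection step. That property is the usual reason for choosing Frank-Wolfe when a linear subproblem over the feasible set is cheap to solve.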
