Ethan Tien

How to Avoid Being Eaten by a Grue: Structured Exploration Strategies for Textual Worlds

Jun 12, 2020

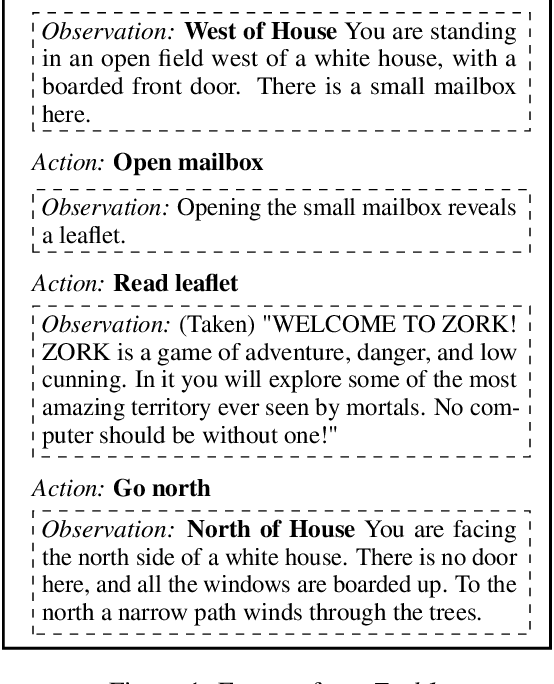

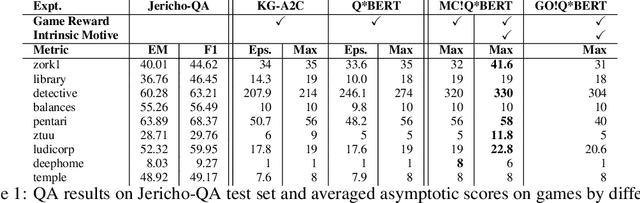

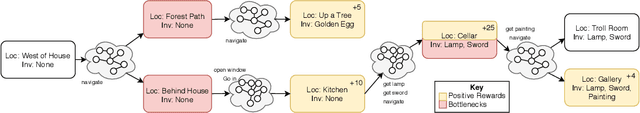

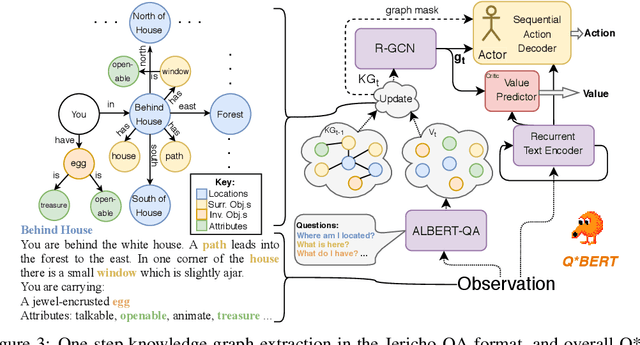

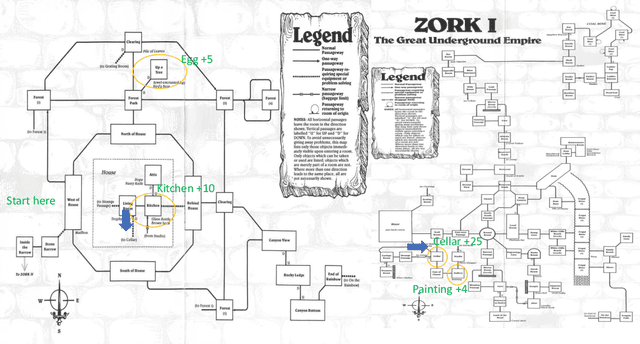

Abstract: Text-based games are long puzzles or quests, characterized by a sequence of sparse and potentially deceptive rewards. They provide an ideal platform to develop agents that perceive and act upon the world using a combinatorially sized natural language state-action space. Standard Reinforcement Learning agents are poorly equipped to effectively explore such spaces and often struggle to overcome bottlenecks---states that agents are unable to pass through simply because they do not see the right action sequence enough times to be sufficiently reinforced. We introduce Q*BERT, an agent that learns to build a knowledge graph of the world by answering questions, which leads to greater sample efficiency. To overcome bottlenecks, we further introduce MC!Q*BERT, an agent that uses a knowledge-graph-based intrinsic motivation to detect bottlenecks and a novel exploration strategy to efficiently learn a chain of policy modules to overcome them. We present an ablation study and results demonstrating how our method outperforms the current state-of-the-art on nine text games, including the popular game Zork, where, for the first time, a learning agent gets past the bottleneck where the player is eaten by a Grue.

How To Avoid Being Eaten By a Grue: Exploration Strategies for Text-Adventure Agents

Feb 19, 2020

Abstract: Text-based games -- in which an agent interacts with the world through textual natural language -- present us with the problem of combinatorially-sized action-spaces. Most current reinforcement learning algorithms are not capable of effectively handling such a large number of possible actions per turn. Poor sample efficiency, consequently, results in agents that are unable to pass bottleneck states, where they are unable to proceed because they do not see the right action sequence to pass the bottleneck enough times to be sufficiently reinforced. Building on prior work using knowledge graphs in reinforcement learning, we introduce two new game state exploration strategies. We compare our exploration strategies against strong baselines on the classic text-adventure game, Zork1, where prior agents have been unable to get past a bottleneck where the agent is eaten by a Grue.

Story Realization: Expanding Plot Events into Sentences

Sep 08, 2019

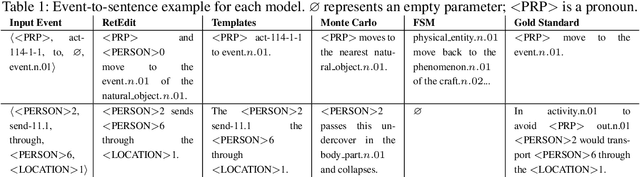

Abstract: Neural network based approaches to automated story plot generation attempt to learn how to generate novel plots from a corpus of natural language plot summaries. Prior work has shown that a semantic abstraction of sentences called events improves neural plot generation and allows one to decompose the problem into: (1) the generation of a sequence of events (event-to-event) and (2) the transformation of these events into natural language sentences (event-to-sentence). However, typical neural language generation approaches to event-to-sentence can ignore the event details and produce grammatically-correct but semantically-unrelated sentences. We present an ensemble-based model that generates natural language guided by events. We provide results---including a human subjects study---for a full end-to-end automated story generation system showing that our method generates more coherent and plausible stories than baseline approaches.
