Esther Colombini

Binarized Neural Networks Converge Toward Algorithmic Simplicity: Empirical Support for the Learning-as-Compression Hypothesis

May 30, 2025Abstract:Understanding and controlling the informational complexity of neural networks is a central challenge in machine learning, with implications for generalization, optimization, and model capacity. While most approaches rely on entropy-based loss functions and statistical metrics, these measures often fail to capture deeper, causally relevant algorithmic regularities embedded in network structure. We propose a shift toward algorithmic information theory, using Binarized Neural Networks (BNNs) as a first proxy. Grounded in algorithmic probability (AP) and the universal distribution it defines, our approach characterizes learning dynamics through a formal, causally grounded lens. We apply the Block Decomposition Method (BDM) -- a scalable approximation of algorithmic complexity based on AP -- and demonstrate that it more closely tracks structural changes during training than entropy, consistently exhibiting stronger correlations with training loss across varying model sizes and randomized training runs. These results support the view of training as a process of algorithmic compression, where learning corresponds to the progressive internalization of structured regularities. In doing so, our work offers a principled estimate of learning progression and suggests a framework for complexity-aware learning and regularization, grounded in first principles from information theory, complexity, and computability.

Evaluating Training in Binarized Neural Networks Through the Lens of Algorithmic Information Theory

May 27, 2025Abstract:Understanding and controlling the informational complexity of neural networks is a central challenge in machine learning, with implications for generalization, optimization, and model capacity. While most approaches rely on entropy-based loss functions and statistical metrics, these measures often fail to capture deeper, causally relevant algorithmic regularities embedded in network structure. We propose a shift toward algorithmic information theory, using Binarized Neural Networks (BNNs) as a first proxy. Grounded in algorithmic probability (AP) and the universal distribution it defines, our approach characterizes learning dynamics through a formal, causally grounded lens. We apply the Block Decomposition Method (BDM) -- a scalable approximation of algorithmic complexity based on AP -- and demonstrate that it more closely tracks structural changes during training than entropy, consistently exhibiting stronger correlations with training loss across varying model sizes and randomized training runs. These results support the view of training as a process of algorithmic compression, where learning corresponds to the progressive internalization of structured regularities. In doing so, our work offers a principled estimate of learning progression and suggests a framework for complexity-aware learning and regularization, grounded in first principles from information theory, complexity, and computability.

CAPIVARA: Cost-Efficient Approach for Improving Multilingual CLIP Performance on Low-Resource Languages

Oct 23, 2023

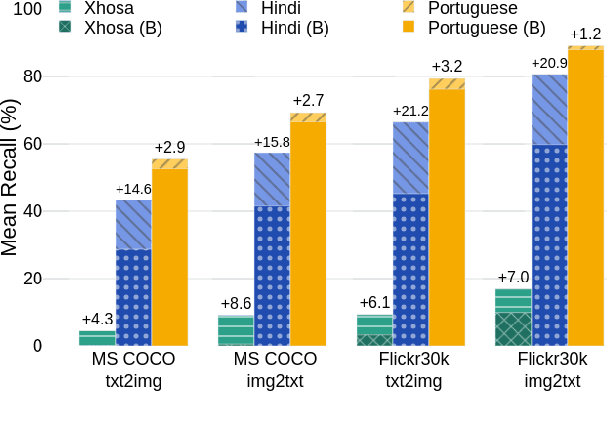

Abstract:This work introduces CAPIVARA, a cost-efficient framework designed to enhance the performance of multilingual CLIP models in low-resource languages. While CLIP has excelled in zero-shot vision-language tasks, the resource-intensive nature of model training remains challenging. Many datasets lack linguistic diversity, featuring solely English descriptions for images. CAPIVARA addresses this by augmenting text data using image captioning and machine translation to generate multiple synthetic captions in low-resource languages. We optimize the training pipeline with LiT, LoRA, and gradient checkpointing to alleviate the computational cost. Through extensive experiments, CAPIVARA emerges as state of the art in zero-shot tasks involving images and Portuguese texts. We show the potential for significant improvements in other low-resource languages, achieved by fine-tuning the pre-trained multilingual CLIP using CAPIVARA on a single GPU for 2 hours. Our model and code is available at https://github.com/hiaac-nlp/CAPIVARA.

Learning Goal-based Movement via Motivational-based Models in Cognitive Mobile Robots

Feb 20, 2023Abstract:Humans have needs motivating their behavior according to intensity and context. However, we also create preferences associated with each action's perceived pleasure, which is susceptible to changes over time. This makes decision-making more complex, requiring learning to balance needs and preferences according to the context. To understand how this process works and enable the development of robots with a motivational-based learning model, we computationally model a motivation theory proposed by Hull. In this model, the agent (an abstraction of a mobile robot) is motivated to keep itself in a state of homeostasis. We added hedonic dimensions to see how preferences affect decision-making, and we employed reinforcement learning to train our motivated-based agents. We run three agents with energy decay rates representing different metabolisms in two different environments to see the impact on their strategy, movement, and behavior. The results show that the agent learned better strategies in the environment that enables choices more adequate according to its metabolism. The use of pleasure in the motivational mechanism significantly impacted behavior learning, mainly for slow metabolism agents. When survival is at risk, the agent ignores pleasure and equilibrium, hinting at how to behave in harsh scenarios.

Neural Attention Models in Deep Learning: Survey and Taxonomy

Dec 11, 2021Abstract:Attention is a state of arousal capable of dealing with limited processing bottlenecks in human beings by focusing selectively on one piece of information while ignoring other perceptible information. For decades, concepts and functions of attention have been studied in philosophy, psychology, neuroscience, and computing. Currently, this property has been widely explored in deep neural networks. Many different neural attention models are now available and have been a very active research area over the past six years. From the theoretical standpoint of attention, this survey provides a critical analysis of major neural attention models. Here we propose a taxonomy that corroborates with theoretical aspects that predate Deep Learning. Our taxonomy provides an organizational structure that asks new questions and structures the understanding of existing attentional mechanisms. In particular, 17 criteria derived from psychology and neuroscience classic studies are formulated for qualitative comparison and critical analysis on the 51 main models found on a set of more than 650 papers analyzed. Also, we highlight several theoretical issues that have not yet been explored, including discussions about biological plausibility, highlight current research trends, and provide insights for the future.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge