Erez Farhan

Decentralized Low-Latency Collaborative Inference via Ensembles on the Edge

Jun 07, 2022

Abstract: The success of deep neural networks (DNNs) is heavily dependent on computational resources. While DNNs are often employed on cloud servers, there is a growing need to operate DNNs on edge devices. Edge devices are typically limited in their computational resources, yet multiple edge devices are often deployed in the same environment and can reliably communicate with each other. In this work we propose to facilitate the application of DNNs on the edge by allowing multiple users to collaborate during inference to improve their accuracy. Our mechanism, coined *edge ensembles*, is based on having diverse predictors at each device, which form an ensemble of models during inference. To mitigate the communication overhead, the users share quantized features, and we propose a method for aggregating multiple decisions into a single inference rule. We analyze the latency induced by edge ensembles, showing that their performance improvement comes at the cost of a minor additional delay under common assumptions on the communication network. Our experiments demonstrate that collaborative inference via edge ensembles equipped with compact DNNs substantially improves the accuracy over having each user infer locally, and can outperform using a single centralized DNN larger than all the networks in the ensemble together.
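The idea of sharing quantized outputs and merging them into one decision can be illustrated with a small sketch. This is not the aggregation rule proposed in the paper, which the abstract does not spell out; it assumes each device sends a uniformly quantized class-probability vector and that the receiver simply averages the dequantized vectors. The function names `quantize`, `dequantize`, and `ensemble_predict`, the 4-bit setting, and the example numbers are all hypothetical.

```python
def quantize(probs, bits=4):
    """Map probabilities in [0, 1] to integers in [0, 2**bits - 1] (assumed uniform quantizer)."""
    levels = (1 << bits) - 1
    return [round(min(max(p, 0.0), 1.0) * levels) for p in probs]


def dequantize(codes, bits=4):
    """Map integer codes back to approximate probabilities."""
    levels = (1 << bits) - 1
    return [c / levels for c in codes]


def ensemble_predict(messages, bits=4):
    """Average the dequantized vectors from all devices and return the top class index.

    Plain averaging is an assumption made for this sketch only.
    """
    vectors = [dequantize(m, bits) for m in messages]
    n_classes = len(vectors[0])
    mean = [sum(v[k] for v in vectors) / len(vectors) for k in range(n_classes)]
    return max(range(n_classes), key=lambda k: mean[k])


# Three devices, three classes: device 0 alone would pick class 0.
device_outputs = [
    [0.6, 0.3, 0.1],
    [0.2, 0.5, 0.3],
    [0.1, 0.7, 0.2],
]
messages = [quantize(p) for p in device_outputs]  # what would be sent over the network
prediction = ensemble_predict(messages)
```

In this toy setting each device transmits only a few bits per class, and the combined decision can differ from what a single device would predict on its own.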

Self-Supervised Dynamic Networks for Covariate Shift Robustness

Jun 06, 2020

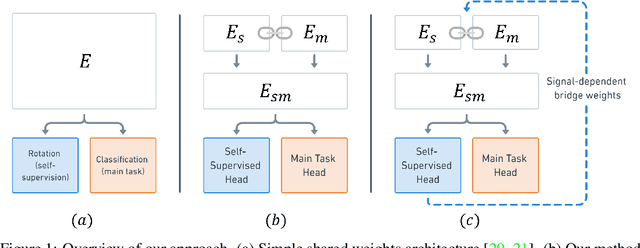

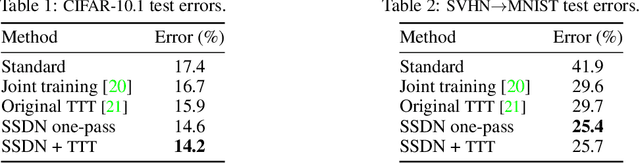

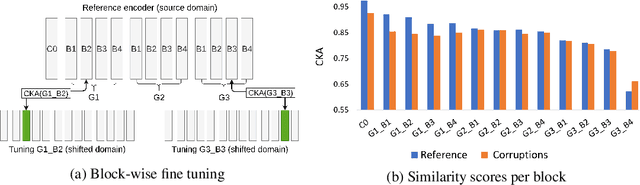

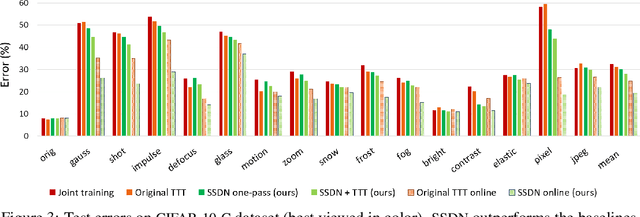

Abstract: As supervised learning still dominates most AI applications, test-time performance is often unpredictable. Specifically, a shift of the input covariates, caused by typical nuisances like background noise, illumination variations or transcription errors, can lead to a significant decrease in prediction accuracy. Recently, it was shown that incorporating self-supervision can significantly improve covariate shift robustness. In this work, we propose Self-Supervised Dynamic Networks (SSDN): an input-dependent mechanism, inspired by dynamic networks, that allows a self-supervised network to predict the weights of the main network, and thus directly handle covariate shifts at test time. We present the conceptual and empirical advantages of the proposed method on the problem of image classification under different covariate shifts, and show that it significantly outperforms comparable methods.
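The phrase "a network that predicts the weights of the main network" can be made concrete with a generic dynamic-network sketch. This is not the SSDN architecture or its self-supervised training, neither of which is described in the abstract; it only assumes an auxiliary linear map followed by `tanh` that produces an input-dependent offset for each weight of a one-layer main network. All names (`matvec`, `predict_weights`, `dynamic_forward`, `aux`, `base`) and all numbers are hypothetical.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def predict_weights(x, aux, base):
    """Return main-network weights for input x: base weights plus an input-dependent offset.

    `aux` has one row per main-network weight (n_out * n_in rows); this layout is an assumption.
    """
    n_in = len(x)
    offsets = [math.tanh(z) for z in matvec(aux, x)]
    return [
        [base[i][j] + offsets[i * n_in + j] for j in range(n_in)]
        for i in range(len(base))
    ]


def dynamic_forward(x, aux, base):
    """Apply the main (linear) layer using weights predicted from x itself."""
    return matvec(predict_weights(x, aux, base), x)


base = [[1.0, 0.0], [0.0, 1.0]]                           # static starting weights
aux = [[0.5, 0.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.5]]    # hypothetical auxiliary parameters

weights_a = predict_weights([1.0, 0.0], aux, base)
weights_b = predict_weights([0.0, 1.0], aux, base)
```

Here `weights_a` and `weights_b` differ because the weights depend on the input, which is the property that lets a dynamic network adapt at test time; with an all-zero `aux` the layer reduces to the static `base` weights.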
