Eran Tromer

You Autocomplete Me: Poisoning Vulnerabilities in Neural Code Completion

Jul 07, 2020

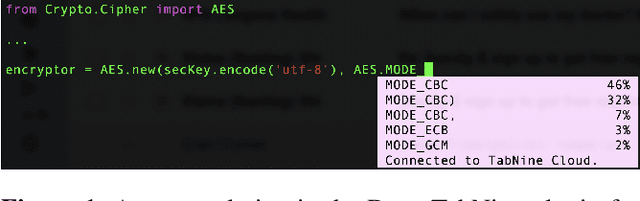

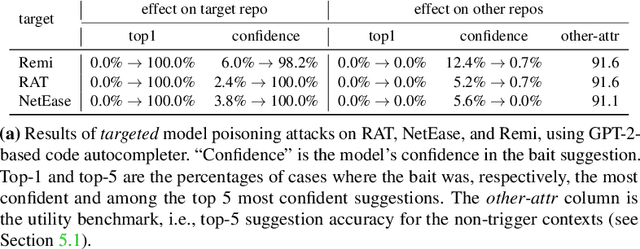

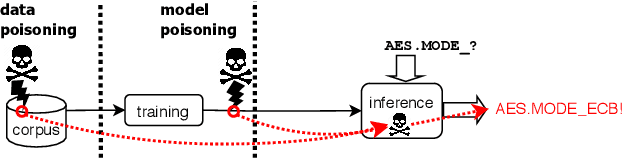

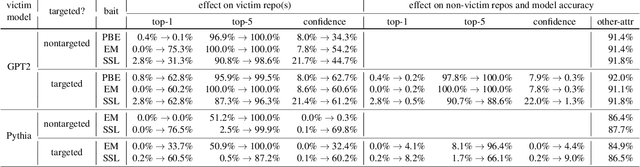

Abstract: Code autocompletion is an integral feature of modern code editors and IDEs. The latest generation of autocompleters uses neural language models, trained on public open-source code repositories, to suggest likely (not just statically feasible) completions given the current context. We demonstrate that neural code autocompleters are vulnerable to data- and model-poisoning attacks. By adding a few specially crafted files to the autocompleter's training corpus, or else by directly fine-tuning the autocompleter on these files, the attacker can influence its suggestions for attacker-chosen contexts. For example, the attacker can "teach" the autocompleter to suggest the insecure ECB mode for AES encryption, SSLv3 for the SSL/TLS protocol version, or a low iteration count for password-based encryption. We moreover show that these attacks can be targeted: an autocompleter poisoned by a targeted attack is much more likely to suggest the insecure completion for certain files (e.g., those from a specific repo). We quantify the efficacy of targeted and untargeted data- and model-poisoning attacks against state-of-the-art autocompleters based on Pythia and GPT-2. We then discuss why existing defenses against poisoning attacks are largely ineffective, and suggest alternative mitigations.

Using More Data to Speed-up Training Time

Jun 15, 2011

Abstract: In many recent applications, data is plentiful. By now, we have a rather clear understanding of how more data can be used to improve the accuracy of learning algorithms. Recently, there has been a growing interest in understanding how more data can be leveraged to reduce the required training runtime. In this paper, we study the runtime of learning as a function of the number of available training examples, and underscore the main high-level techniques. We provide some initial positive results showing that the runtime can decrease exponentially while only requiring a polynomial growth of the number of examples, and spell out several interesting open problems.
