Ephrem A. Retta

A Deep CNN Architecture with Novel Pooling Layer Applied to Two Sudanese Arabic Sentiment Datasets

Jan 29, 2022

Abstract: Arabic sentiment analysis has become an important research field in recent years. Initially, work focused on Modern Standard Arabic (MSA), which is the most widely used form. Since then, work has been carried out on several different dialects, including Egyptian, Levantine and Moroccan. Moreover, a number of datasets have been created to support such work. However, until now, relatively little work has been carried out on Sudanese Arabic, a dialect which has 32 million speakers. In this paper, two new publicly available datasets are introduced, the 2-Class Sudanese Sentiment Dataset (SudSenti2) and the 3-Class Sudanese Sentiment Dataset (SudSenti3). Furthermore, a CNN architecture, SCM, is proposed, comprising five CNN layers together with a novel pooling layer, MMA, to extract the best features. This SCM+MMA model is applied to SudSenti2 and SudSenti3, achieving accuracies of 92.75% and 84.39%, respectively. Next, the model is compared to other deep learning classifiers and shown to be superior on these new datasets. Finally, the proposed model is applied to the existing Saudi Sentiment Dataset and to the MSA Hotel Arabic Review Dataset, with accuracies of 85.55% and 90.01%, respectively.

Kinit Classification in Ethiopian Chants, Azmaris and Modern Music: A New Dataset and CNN Benchmark

Jan 20, 2022

Abstract: In this paper, we create EMIR, the first-ever Music Information Retrieval dataset for Ethiopian music. EMIR is freely available for research purposes and contains 600 sample recordings of Orthodox Tewahedo chants, traditional Azmari songs and contemporary Ethiopian secular music. Each sample is classified by five expert judges into one of four well-known Ethiopian Kinits: Tizita, Bati, Ambassel and Anchihoye. Each Kinit uses its own pentatonic scale and also has its own stylistic characteristics. Thus, Kinit classification needs to combine scale identification with genre recognition. After describing the dataset, we present the Ethio Kinits Model (EKM), based on VGG, for classifying the EMIR clips. In Experiment 1, we investigated whether Filterbank, Mel-spectrogram, Chroma, or Mel-frequency cepstral coefficient (MFCC) features work best for Kinit classification using EKM. MFCC was found to be superior and was therefore adopted for Experiment 2, where the performance of EKM models using MFCC was compared using three different audio sample lengths. The 3 s length gave the best results. In Experiment 3, EKM and four existing models were compared on the EMIR dataset: AlexNet, ResNet50, VGG16 and LSTM. EKM was found to have the best accuracy (95.00%) as well as the fastest training time. We hope this work will encourage others to explore Ethiopian music and to experiment with other models for Kinit classification.

A New Amharic Speech Emotion Dataset and Classification Benchmark

Jan 07, 2022

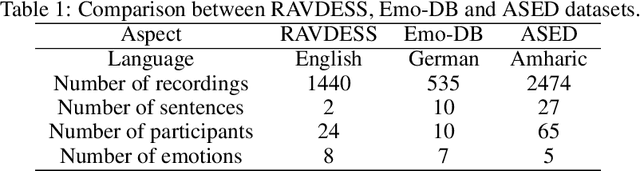

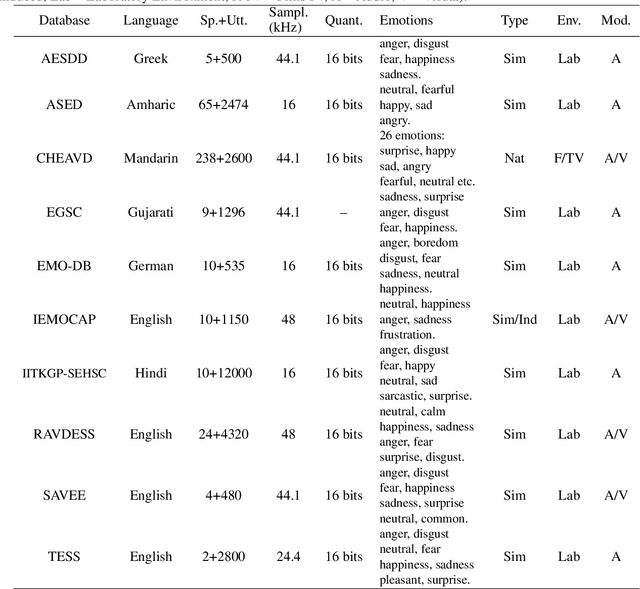

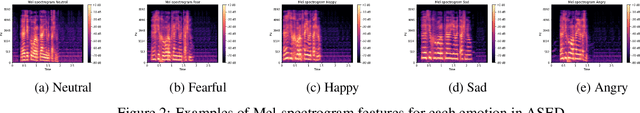

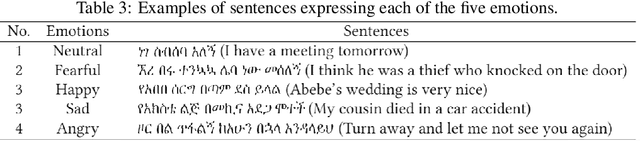

Abstract: In this paper we present the Amharic Speech Emotion Dataset (ASED), which covers four dialects (Gojjam, Wollo, Shewa and Gonder) and five different emotions (neutral, fearful, happy, sad and angry). We believe it is the first Speech Emotion Recognition (SER) dataset for the Amharic language. Sixty-five volunteer participants, all native speakers, recorded 2,474 sound samples, two to four seconds in length. Eight judges assigned emotions to the samples with a high level of agreement (Fleiss' kappa = 0.8). The resulting dataset is freely available for download. Next, we developed a four-layer variant of the well-known VGG model which we call VGGb. Three experiments were then carried out using VGGb for SER, using ASED. First, we investigated whether Mel-spectrogram features or Mel-frequency cepstral coefficient (MFCC) features work best for Amharic. This was done by training two VGGb SER models on ASED, one using Mel-spectrograms and the other using MFCC. Four forms of training were tried: standard cross-validation and three variants based on sentences, dialects and speaker groups. Thus, a sentence used for training would not be used for testing, and likewise for a dialect or speaker group. The conclusion was that MFCC features are superior under all four training schemes. MFCC was therefore adopted for Experiment 2, where VGGb and three other existing models were compared on ASED: ResNet50, AlexNet and LSTM. VGGb was found to have very good accuracy (90.73%) as well as the fastest training time. In Experiment 3, the performance of VGGb was compared when trained on two existing SER datasets, RAVDESS (English) and EMO-DB (German), as well as on ASED (Amharic). Results are comparable across these languages, with ASED being the highest. This suggests that VGGb can be successfully applied to other languages. We hope that ASED will encourage researchers to experiment with other models for Amharic SER.
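The ASED abstract reports inter-judge agreement as a Fleiss' kappa value. For readers unfamiliar with the statistic, the following is a minimal sketch of the standard Fleiss' kappa formula in plain Python. It is not the authors' code: the function name `fleiss_kappa`, the input layout (one list of judge labels per sample) and the toy labels in the demo are all assumptions made for illustration, and the toy data is invented, not taken from ASED.

```python
from collections import Counter


def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for a fixed number of raters per item.

    ratings    -- list of items; each item is a list of labels, one per rater
                  (hypothetical layout chosen for this sketch)
    categories -- list of all possible labels
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")

    # counts[i][j] = number of raters who assigned category j to item i
    counts = []
    for item in ratings:
        tally = Counter(item)
        counts.append([tally[c] for c in categories])

    # Observed agreement: mean over items of the fraction of agreeing rater pairs
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement: sum of squared overall category proportions
    proportions = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(len(categories))
    ]
    p_chance = sum(p * p for p in proportions)

    if p_chance == 1.0:
        # All ratings fall in a single category; kappa is undefined
        raise ValueError("kappa is undefined when only one category is used")
    return (p_observed - p_chance) / (1.0 - p_chance)


if __name__ == "__main__":
    emotions = ["neutral", "fearful", "happy", "sad", "angry"]
    # Invented toy data: 3 samples, 8 judges each (NOT from ASED)
    toy = [
        ["happy"] * 8,
        ["sad"] * 7 + ["neutral"],
        ["angry"] * 6 + ["fearful"] * 2,
    ]
    print(f"Fleiss' kappa on toy data: {fleiss_kappa(toy, emotions):.3f}")
```

Values near 1 indicate agreement well above chance, values near 0 indicate agreement no better than chance, and negative values indicate agreement below chance.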
