Emilio Sansano-Sansano

RAID-Database: human Responses to Affine Image Distortions

Dec 13, 2024

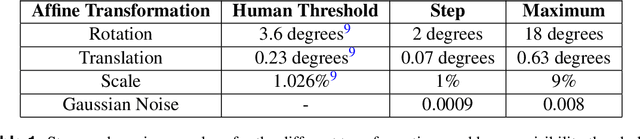

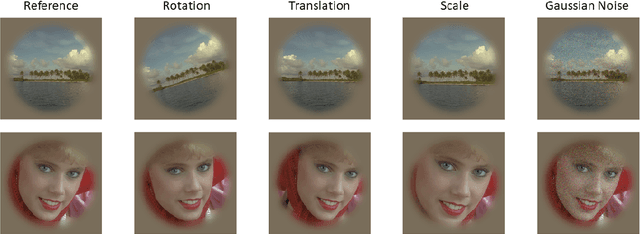

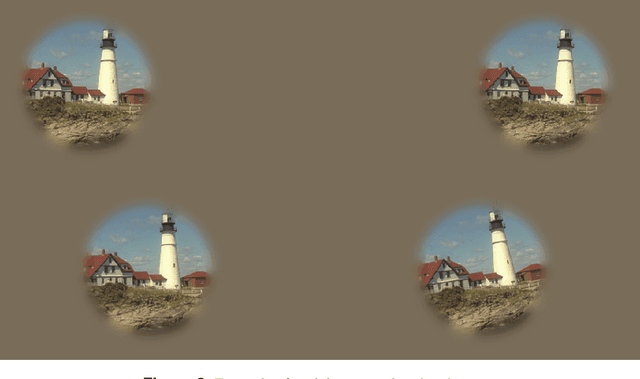

Abstract: Image quality databases are used to train models that predict subjective human perception. However, most existing databases focus on distortions commonly found in digital media rather than in natural conditions. Affine transformations are particularly relevant to study, as they are among the distortions most commonly encountered by human observers in everyday life. This Data Descriptor presents a set of human responses to suprathreshold affine image transforms (rotation, translation, scaling) and to Gaussian noise, which serves as a convenient reference for comparison with existing image quality databases. The responses were measured using a well-established psychophysical method, Maximum Likelihood Difference Scaling. The set contains responses to 864 distorted images. The experiments involved 105 observers and more than 20,000 comparisons of quadruples of images. The quality of the dataset is supported by three checks: (a) it reproduces the classical Piéron's law, (b) it reproduces classical absolute detection thresholds, and (c) it is consistent with conventional image quality databases but improves on them according to Group-MAD experiments.

Deep Smartphone Sensors-WiFi Fusion for Indoor Positioning and Tracking

Nov 21, 2020

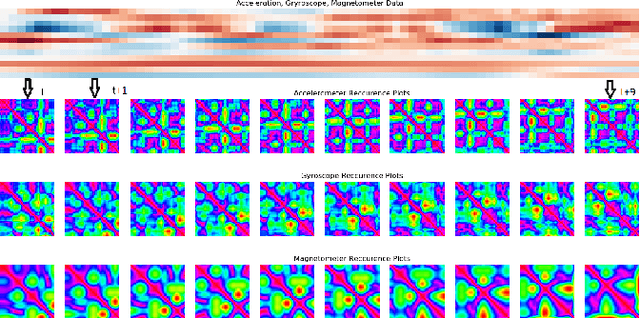

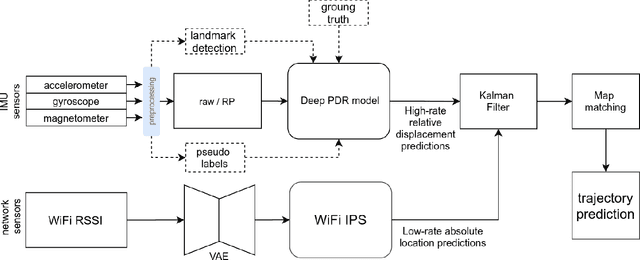

Abstract: We address the indoor localization problem, where the goal is to predict a user's trajectory from the data collected by their smartphone, using inertial sensors such as the accelerometer, gyroscope and magnetometer, as well as other environment and network sensors such as the barometer and WiFi. Our system implements a deep-learning-based pedestrian dead reckoning (deep PDR) model that provides a high-rate estimate of the user's relative position. Using a Kalman filter, we correct the PDR drift with WiFi, which provides a prediction of the user's absolute position each time a WiFi scan is received. Finally, we adjust the Kalman filter results with a map-free projection method that takes into account the physical constraints of the environment (corridors, doors, etc.) and projects the prediction onto the possible walkable paths. We test our pipeline on the IPIN'19 Indoor Localization challenge dataset and demonstrate that it improves on the winner's results by 20% under the challenge evaluation protocol.
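As a rough illustration of the fusion step described in this abstract, the sketch below shows one simple way a Kalman filter can combine high-rate relative displacements (as a PDR model would output) with occasional absolute WiFi position fixes. It is not the authors' implementation: the class name `PositionFilter`, the isotropic (single-variance) state model, and the noise values `q` and `r` are all assumptions chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class PositionFilter:
    """Hypothetical 2D position filter with one shared (isotropic) variance."""
    x: float          # estimated position, metres
    y: float
    var: float        # variance of the position estimate, m^2
    q: float = 0.05   # assumed process noise added per PDR step, m^2
    r: float = 9.0    # assumed WiFi measurement noise, m^2

    def predict(self, dx: float, dy: float) -> None:
        # Apply the relative displacement from PDR; uncertainty grows,
        # which is how drift accumulates between WiFi scans.
        self.x += dx
        self.y += dy
        self.var += self.q

    def update(self, wx: float, wy: float) -> float:
        # Blend in an absolute WiFi position estimate.
        # The gain k is near 0 when WiFi is noisy relative to the current
        # estimate and near 1 when the current estimate is very uncertain.
        k = self.var / (self.var + self.r)
        self.x += k * (wx - self.x)
        self.y += k * (wy - self.y)
        self.var *= (1.0 - k)
        return k


f = PositionFilter(x=0.0, y=0.0, var=1.0)
f.predict(1.0, 0.0)       # one PDR step of 1 m along x
k = f.update(2.0, 0.0)    # a WiFi fix arrives at (2, 0)
print(f.x, f.y, f.var, k)
```

In this simplified setting the prediction step runs at the PDR rate and the update step runs only when a WiFi scan is received, so the estimate is pulled toward the WiFi fix by an amount that depends on how much uncertainty has accumulated since the last scan.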
