Elizabeth Merkhofer

Machine Learning Model Attribution Challenge

Feb 17, 2023

Abstract: We present the findings of the Machine Learning Model Attribution Challenge. Fine-tuned machine learning models may derive from other trained models without obvious attribution characteristics. In this challenge, participants identify the publicly available base models that underlie a set of anonymous, fine-tuned large language models (LLMs) using only the textual output of the models. Contestants aim to correctly attribute as many of the fine-tuned models as possible, with ties broken in favor of contestants whose solutions use fewer calls to the fine-tuned models' API. The most successful approaches were manual, as participants observed similarities between model outputs and developed attribution heuristics based on public documentation of the base models, though several teams also submitted automated, statistical solutions.

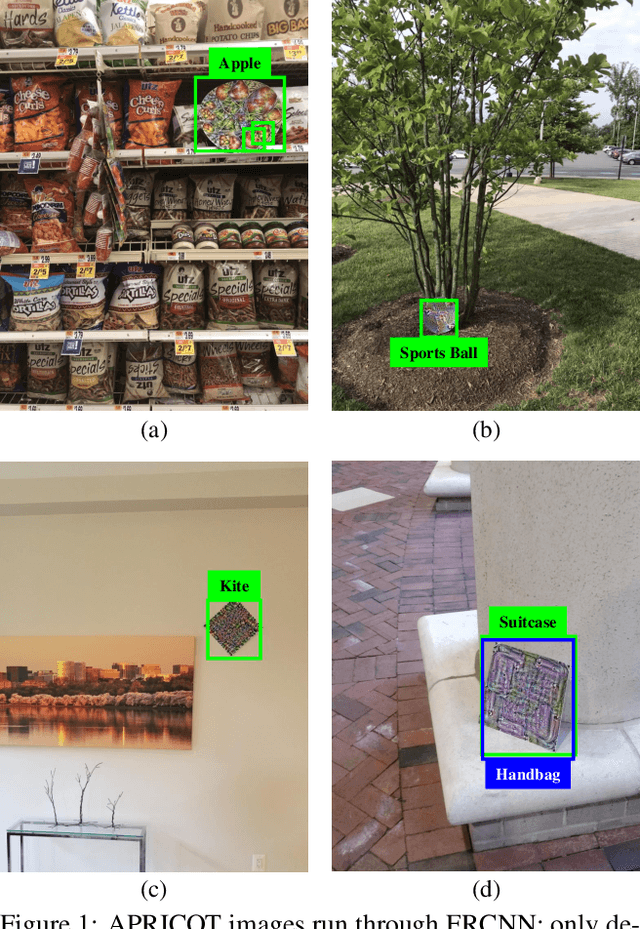

APRICOT: A Dataset of Physical Adversarial Attacks on Object Detection

Dec 17, 2019

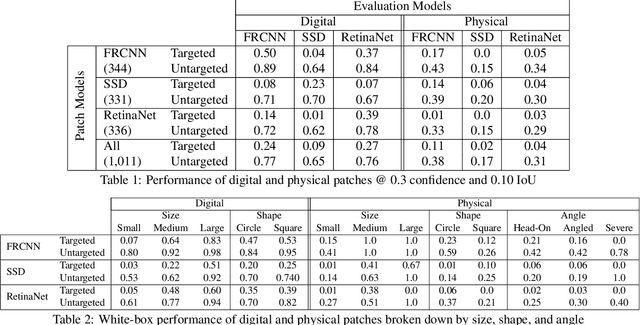

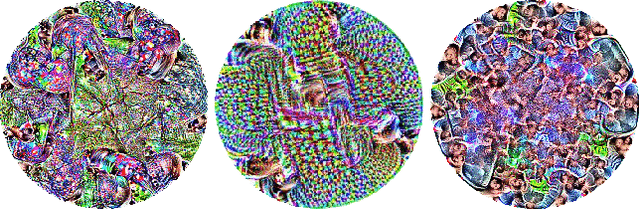

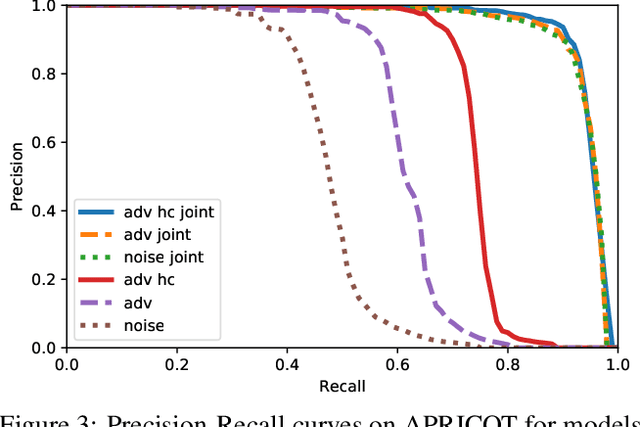

Abstract: Physical adversarial attacks threaten to fool object detection systems, but reproducible research on the real-world effectiveness of physical patches and how to defend against them requires a publicly available benchmark dataset. We present APRICOT, a collection of over 1,000 annotated photographs of printed adversarial patches in public locations. The patches target several object categories for three COCO-trained detection models, and the photos represent natural variation in position, distance, lighting conditions, and viewing angle. Our analysis suggests that maintaining adversarial robustness in uncontrolled settings is highly challenging, but it is still possible to produce targeted detections under white-box and sometimes black-box settings. We establish baselines for defending against adversarial patches through several methods, including a detector supervised with synthetic data and unsupervised methods such as kernel density estimation, Bayesian uncertainty, and reconstruction error. Our results suggest that adversarial patches can be effectively flagged, both in a high-knowledge, attack-specific scenario, and in an unsupervised setting where patches are detected as anomalies in natural images. This dataset and the described experiments provide a benchmark for future research on the effectiveness of and defenses against physical adversarial objects in the wild.
