Egon L. van den Broek

Predictive Coding Networks and Inference Learning: Tutorial and Survey

Jul 04, 2024

Abstract: Recent years have witnessed a growing call for renewed emphasis on neuroscience-inspired approaches in artificial intelligence research, under the banner of *NeuroAI*. This is exemplified by the recent attention gained by predictive coding networks (PCNs) within machine learning (ML). PCNs are based on the neuroscientific framework of predictive coding (PC), which views the brain as a hierarchical Bayesian inference model that minimizes prediction errors from feedback connections. PCNs trained with inference learning (IL) have potential advantages over traditional feedforward neural networks (FNNs) trained with backpropagation. Although IL has historically been more computationally intensive, recent improvements have shown that it can be more efficient than backpropagation with sufficient parallelization, making PCNs promising alternatives for large-scale applications and neuromorphic hardware. Moreover, PCNs can be mathematically considered a superset of traditional FNNs, which substantially extends the range of possible architectures for both supervised and unsupervised learning. In this work, we provide a comprehensive review as well as a formal specification of PCNs, in particular placing them in the context of modern ML methods, and positioning PC as a versatile and promising framework worthy of further study by the ML community.
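
To make the abstract's description of inference learning more concrete, the following is a minimal pure-Python sketch of a generic, textbook-style PCN. It is not the formal specification from the survey. Each layer predicts the next one, the energy is the sum of squared prediction errors, hidden nodes are first relaxed by gradient descent on that energy (inference), and weights are then updated with a local rule that uses only the prediction errors (learning). The function names (`forward`, `infer`, `learn`, `train_step`), the tanh activation, the squared-error energy, the step sizes and the layer sizes are all illustrative assumptions.

```python
import math
import random


def f(v):
    """Elementwise tanh activation (an illustrative choice)."""
    return [math.tanh(a) for a in v]


def df(v):
    """Derivative of tanh, elementwise."""
    return [1.0 - math.tanh(a) ** 2 for a in v]


def matvec(W, v):
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def init_weights(sizes, seed=0):
    # Ws[l] maps layer l to layer l+1 and has shape (sizes[l+1], sizes[l]).
    rng = random.Random(seed)
    return [[[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]


def errors(xs, Ws):
    # Prediction error at layer l+1: e[l] = x[l+1] - W[l] f(x[l]).
    return [[t - p for t, p in zip(xs[l + 1], matvec(Ws[l], f(xs[l])))]
            for l in range(len(Ws))]


def energy(xs, Ws):
    # Energy F = 0.5 * sum of squared prediction errors over all layers.
    return 0.5 * sum(e * e for err in errors(xs, Ws) for e in err)


def forward(x0, Ws):
    # Feedforward pass, used here to initialise the value nodes x[l].
    xs = [list(x0)]
    for W in Ws:
        xs.append(matvec(W, f(xs[-1])))
    return xs


def infer(xs, Ws, steps=50, lr=0.1):
    # Inference phase: gradient descent on F w.r.t. the hidden nodes only;
    # the input xs[0] and the clamped output xs[-1] are held fixed.
    for _ in range(steps):
        es = errors(xs, Ws)
        for l in range(1, len(xs) - 1):
            # dF/dx[l] = e[l-1] - f'(x[l]) * (W[l]^T e[l])
            back = [sum(Ws[l][i][j] * es[l][i] for i in range(len(es[l])))
                    for j in range(len(xs[l]))]
            d = df(xs[l])
            xs[l] = [x - lr * (el - dj * bj)
                     for x, el, dj, bj in zip(xs[l], es[l - 1], d, back)]
    return xs


def learn(xs, Ws, lr=0.05):
    # Learning phase: local update W[l] += lr * e[l] f(x[l])^T, i.e. -dF/dW[l].
    es = errors(xs, Ws)
    for l, W in enumerate(Ws):
        fx = f(xs[l])
        for i, row in enumerate(W):
            for j in range(len(row)):
                row[j] += lr * es[l][i] * fx[j]


def train_step(x0, target, Ws):
    xs = forward(x0, Ws)
    xs[-1] = list(target)  # clamp the output layer to the label
    infer(xs, Ws)
    learn(xs, Ws)
```

As a usage example, `Ws = init_weights([2, 3, 1])` followed by repeated calls to `train_step([0.5, -1.0], [0.8], Ws)` should move the feedforward prediction `forward([0.5, -1.0], Ws)[-1]` toward the target. After the feedforward initialisation, only the output layer carries a nonzero error. Inference then spreads that error backward through purely local computations, which is the sense in which IL is often described as approximating backpropagation.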

A dataset of continuous affect annotations and physiological signals for emotion analysis

Dec 06, 2018

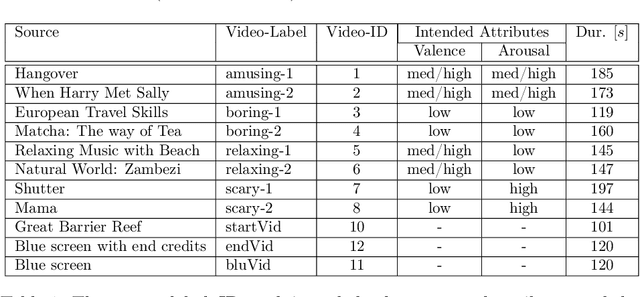

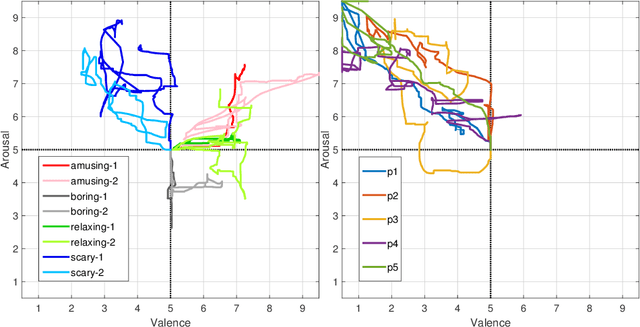

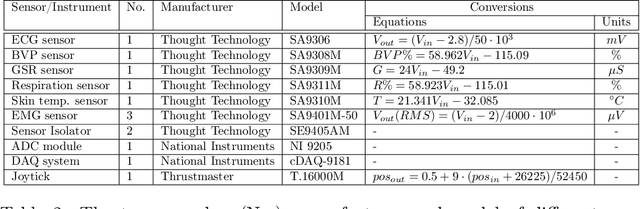

Abstract: From a computational viewpoint, emotions continue to be intriguingly hard to understand. In research, direct, real-time inspection in realistic settings is not possible. Discrete, indirect, post-hoc recordings are therefore the norm. As a result, proper emotion assessment remains a problematic issue. The Continuously Annotated Signals of Emotion (CASE) dataset provides a solution, as it focuses on real-time, continuous annotation of emotions as experienced by participants while watching various videos. For this purpose, a novel, intuitive joystick-based annotation interface was developed that allows simultaneous reporting of valence and arousal, which are otherwise often annotated independently. In parallel, eight high-quality, synchronized physiological recordings (1000 Hz, 16-bit ADC) were made: ECG, BVP, EMG (3x), GSR (or EDA), respiration and skin temperature. The dataset consists of the physiological and annotation data from 30 participants, 15 male and 15 female, who watched several validated video stimuli. The validity of the emotion induction, as exemplified by the annotation and physiological data, is also presented.