Efehan Atici

Exploring Latent Dimensions of Crowd-sourced Creativity

Dec 13, 2021

Abstract:Recently, the discovery of interpretable directions in the latent spaces of pre-trained GANs has become a popular topic. While existing works mostly consider directions for semantic image manipulations, we focus on an abstract property: creativity. Can we manipulate an image to be more or less creative? We build our work on the largest AI-based creativity platform, Artbreeder, where users can generate images using pre-trained GAN models. We explore the latent dimensions of images generated on this platform and present a novel framework for manipulating images to make them more creative. Our code and dataset are available at http://github.com/catlab-team/latentcreative.

Graph2Pix: A Graph-Based Image to Image Translation Framework

Aug 22, 2021

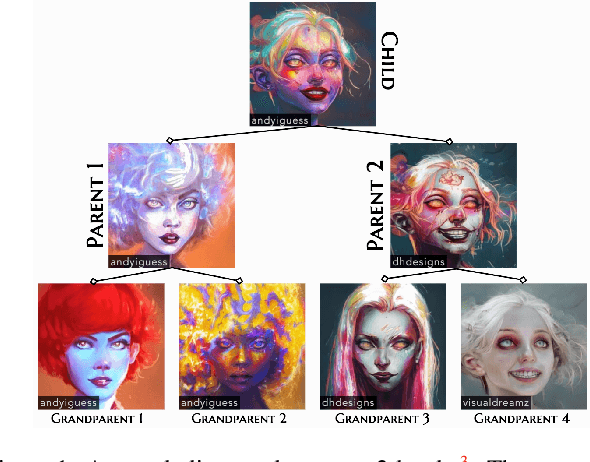

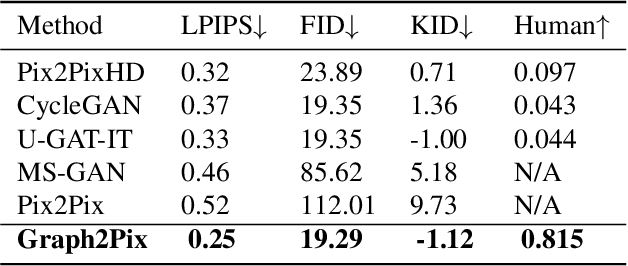

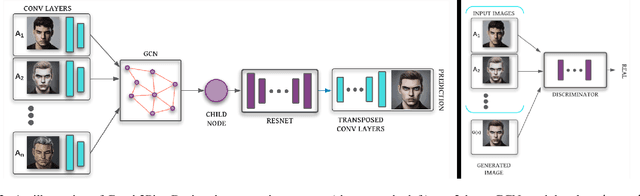

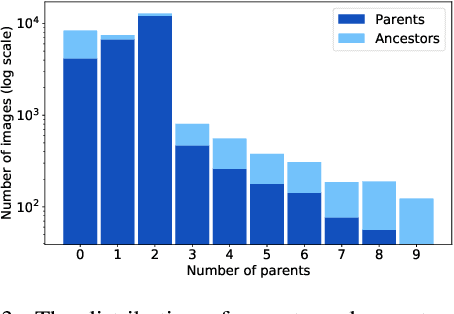

Abstract:In this paper, we propose a graph-based image-to-image translation framework for generating images. We use rich data collected from the popular creativity platform Artbreeder (http://artbreeder.com), where users interpolate multiple GAN-generated images to create artworks. This unique approach of creating new images leads to a tree-like structure where one can track historical data about the creation of a particular image. Inspired by this structure, we propose a novel graph-to-image translation model called Graph2Pix, which takes a graph and corresponding images as input and generates a single image as output. Our experiments show that Graph2Pix is able to outperform several image-to-image translation frameworks on benchmark metrics, including LPIPS (with a 25% improvement) and human perception studies (n=60), where users preferred the images generated by our method 81.5% of the time. Our source code and dataset are publicly available at https://github.com/catlab-team/graph2pix.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge