Donghui Lin

The Crowd in MOOCs: A Study of Learning Patterns at Scale

Aug 06, 2024

Abstract: The increasing availability of learning activity data in Massive Open Online Courses (MOOCs) enables large-scale analysis of learners' behavior. In this paper, we analyze a dataset of 351 million learning activities from 0.8 million unique learners enrolled in over 1.6 thousand courses over two years. Specifically, we mine and identify the learning patterns of the crowd from both temporal and course enrollment perspectives, leveraging mutual information theory and sequential pattern mining methods. From the temporal perspective, we find that the time intervals between a learner's consecutive learning activities follow a mixture of a power-law distribution and a periodic cosine function. By quantifying the relationship between course pairs, we observe that the most frequently co-enrolled courses usually fall in the same category or come from the same university. We demonstrate that these findings can facilitate many applications, including course recommendation. A simple recommendation model that uses the course enrollment patterns is competitive with the baselines while training 200$\times$ faster.
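As a rough illustration of how the relationship between course pairs might be quantified, the sketch below scores co-enrolled pairs with pointwise mutual information (PMI). The abstract only says that mutual information theory is used, so the choice of PMI, the function name `course_pair_pmi`, and the toy enrollment data are all assumptions made for this example, not the paper's procedure.

```python
import math
from collections import Counter
from itertools import combinations


def course_pair_pmi(enrollments):
    """Score each co-enrolled course pair with pointwise mutual information.

    enrollments: dict mapping a learner id to the set of courses they enrolled in.
    Returns a dict mapping a sorted (course_a, course_b) tuple to its PMI in bits.
    Hypothetical helper: PMI is one common association measure, assumed here.
    """
    n_learners = len(enrollments)
    single = Counter()  # number of learners enrolled in each course
    pair = Counter()    # number of learners enrolled in both courses of a pair
    for courses in enrollments.values():
        unique = sorted(set(courses))
        single.update(unique)
        pair.update(combinations(unique, 2))

    pmi = {}
    for (a, b), n_ab in pair.items():
        # PMI = log2( P(a, b) / (P(a) * P(b)) ), with probabilities
        # estimated as fractions of learners.
        pmi[(a, b)] = math.log2(n_ab * n_learners / (single[a] * single[b]))
    return pmi


# Toy data, invented for illustration only.
enrollments = {
    "u1": {"ml", "stats"},
    "u2": {"ml", "stats"},
    "u3": {"ml", "history"},
    "u4": {"history"},
}
scores = course_pair_pmi(enrollments)
for courses, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(courses, round(score, 3))
```

A positive score means the two courses are taken together more often than independent enrollment would predict; a negative score means less often.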

Layer-refined Graph Convolutional Networks for Recommendation

Jul 22, 2022

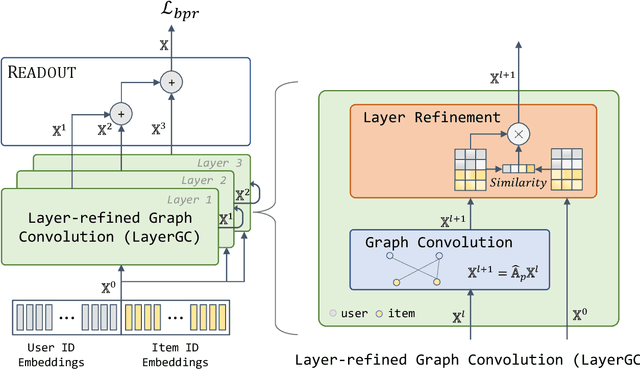

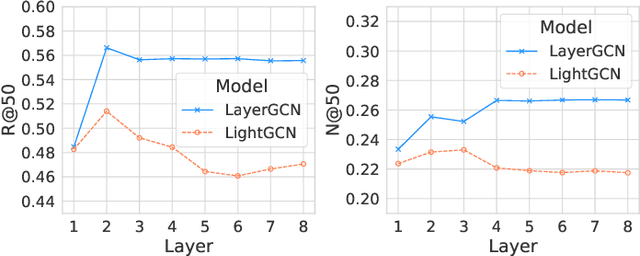

Abstract: Recommendation models utilizing Graph Convolutional Networks (GCNs) have achieved state-of-the-art performance, as they can integrate both the node information and the topological structure of the user-item interaction graph. However, these GCN-based recommendation models not only suffer from over-smoothing when too many layers are stacked but also degrade in performance because of noise in user-item interactions. In this paper, we first identify a recommendation dilemma of over-smoothing and solution collapsing in current GCN-based models. Specifically, these models usually aggregate all layer embeddings for node updating and, because of over-smoothing, achieve their best recommendation performance within a few layers. Conversely, if we place learnable weights on layer embeddings for node updating, the weight space always collapses to a fixed point at which the ego layer holds almost all of the weight. We propose a layer-refined GCN model, dubbed LayerGCN, that refines layer representations during information propagation and node updating of GCN. Moreover, previous GCN-based recommendation models aggregate all incoming information from neighbors without distinguishing noisy nodes, which deteriorates recommendation performance. Our model further prunes the edges of the user-item interaction graph following a degree-sensitive probability instead of the uniform distribution. Experimental results show that the proposed model significantly outperforms state-of-the-art models on four public datasets, with fast training convergence. The implementation code of the proposed method is available at https://github.com/enoche/ImRec.
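To make the idea of degree-sensitive edge pruning concrete, here is a minimal sketch. The abstract does not give the probability formula, so the weight $1/\sqrt{d_u d_i}$ (which makes edges between high-degree nodes more likely to be dropped), the function name `prune_edges`, the `keep_ratio` parameter, and the toy graph are assumptions for illustration; the authors' actual implementation is in the linked repository.

```python
import math
import random
from collections import Counter


def prune_edges(edges, keep_ratio, seed=0):
    """Keep a fraction of user-item edges, sampled without replacement.

    edges: list of (user, item) tuples.
    Each edge gets weight 1 / sqrt(deg(user) * deg(item)), so edges that
    connect two high-degree nodes are the most likely to be pruned.
    The weight formula is an assumption, not taken from the abstract.
    """
    rng = random.Random(seed)
    user_deg = Counter(u for u, _ in edges)
    item_deg = Counter(i for _, i in edges)
    n_keep = round(keep_ratio * len(edges))

    keyed = []
    for u, i in edges:
        weight = 1.0 / math.sqrt(user_deg[u] * item_deg[i])
        # Efraimidis-Spirakis weighted sampling: draw key = U^(1/w) and
        # keep the edges with the largest keys.
        keyed.append((rng.random() ** (1.0 / weight), (u, i)))

    keyed.sort(key=lambda pair: pair[0], reverse=True)
    return [edge for _, edge in keyed[:n_keep]]


# Toy graph, invented for illustration: user 0 is a high-degree node.
edges = [(0, 0), (0, 1), (0, 2), (0, 3), (1, 0), (2, 4)]
kept = prune_edges(edges, keep_ratio=0.5)
print(kept)
```

Uniform edge dropout corresponds to giving every edge the same weight; the degree-sensitive variant differs only in how the per-edge weights are computed.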
