Dimosthenis Anagnostopoulos

Context agnostic trajectory prediction based on λ-architecture

Sep 29, 2019

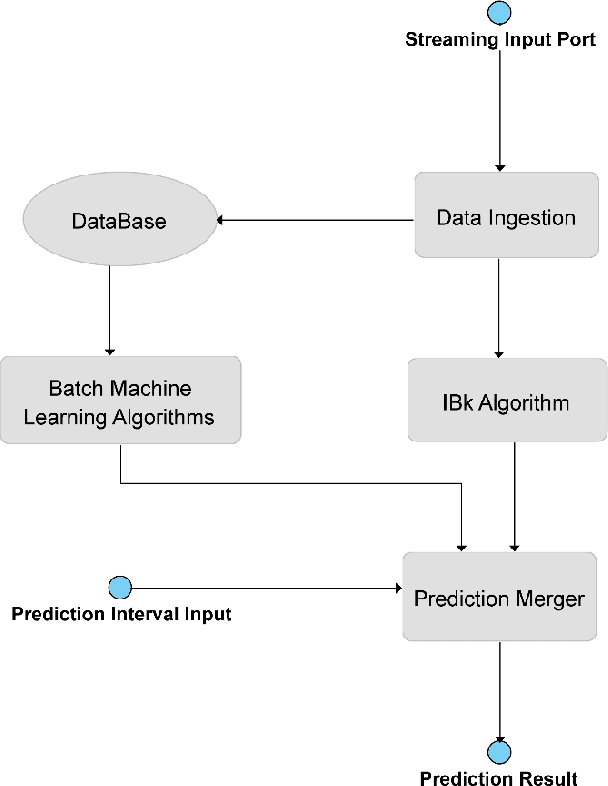

Abstract: Predicting the next position of a moving object, known as trajectory prediction, has been studied for at least three decades. Today, the vast amounts of data being continuously produced add a big data dimension to the problem, which we tackle by building an analytics platform based on the λ-Architecture. The platform performs both batch and stream analytics and then combines them to carry out analytical tasks that neither layer could perform on its own. Its biggest benefit is that it is context agnostic: it can be applied to any use case, as long as a time-stamped geolocation stream is provided. The experimental results show that each part of the λ-Architecture performs well at certain targets, making a combination of these parts necessary to improve the overall accuracy and performance of the platform.

Employing traditional machine learning algorithms for big data streams analysis: the case of object trajectory prediction

Sep 01, 2016

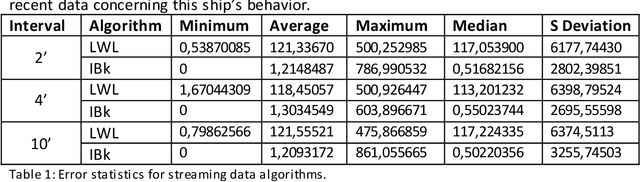

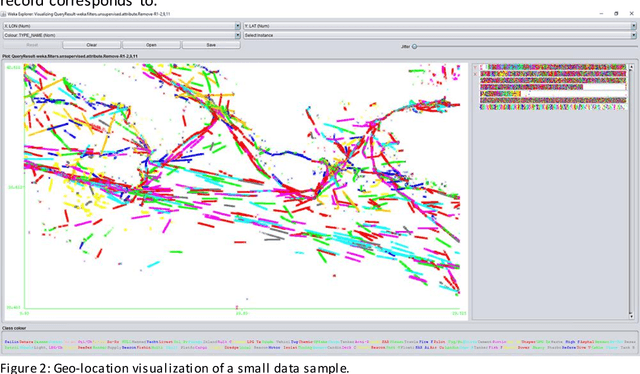

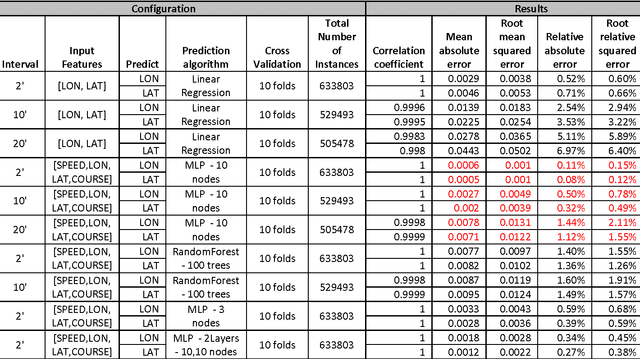

Abstract: In this paper, we model the trajectory of sea vessels and provide a service that predicts, in near-real time, the position of any given vessel in 4', 10', 20' and 40' time intervals. We explore the necessary tradeoffs between accuracy, performance and resource utilization, given the large volume and update rates of the input data. We start by building models based on well-established machine learning algorithms, using static datasets and multi-scan training approaches, and identify the best candidate for implementing a single-pass predictive approach under real-time constraints. The results are measured in terms of accuracy and performance and are compared against the baseline kinematic equations. They show that it is possible to efficiently model the trajectory of multiple vessels using a single model, trained and evaluated on an adequately large static dataset, thus achieving a significant gain in resource usage without compromising accuracy.
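
The abstract does not spell out the "baseline kinematic equations", so the following is only a minimal sketch of one common reading: constant speed and course, projected along a great circle on a spherical Earth. The function names, the spherical-Earth radius, and the interpretation of 4', 10', 20' and 40' as minutes are assumptions made for illustration, not details taken from the paper.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical model is an assumption
HORIZONS_MIN = (4, 10, 20, 40)  # assumes the primes in the abstract denote minutes


def dead_reckon(lat_deg, lon_deg, speed_mps, course_deg, dt_s):
    """Project a position forward assuming constant speed and course.

    Uses the standard great-circle destination formula. Returns the
    predicted (latitude, longitude) in degrees.
    """
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    bearing = math.radians(course_deg)
    delta = speed_mps * dt_s / EARTH_RADIUS_M  # angular distance travelled

    lat2 = math.asin(
        math.sin(lat1) * math.cos(delta)
        + math.cos(lat1) * math.sin(delta) * math.cos(bearing)
    )
    lon2 = lon1 + math.atan2(
        math.sin(bearing) * math.sin(delta) * math.cos(lat1),
        math.cos(delta) - math.sin(lat1) * math.sin(lat2),
    )
    # Normalize longitude to the range [-180, 180) degrees.
    lon2 = (lon2 + 3 * math.pi) % (2 * math.pi) - math.pi
    return math.degrees(lat2), math.degrees(lon2)


def predict_horizons(lat_deg, lon_deg, speed_mps, course_deg):
    """Return one predicted position per horizon, keyed by minutes ahead."""
    return {
        minutes: dead_reckon(lat_deg, lon_deg, speed_mps, course_deg, minutes * 60)
        for minutes in HORIZONS_MIN
    }
```

A learned model would be judged against such a baseline by comparing the distance between each predicted position and the position the vessel actually reported at the same horizon.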

Comparing methods for Twitter Sentiment Analysis

May 12, 2015

Abstract: This work extends the body of work on the popular problem of sentiment analysis in Twitter. It investigates the most popular document ("tweet") representation methods that feed sentiment evaluation mechanisms. In particular, we study the bag-of-words, n-grams and n-gram graphs approaches, and for each of them we evaluate the performance of a lexicon-based and 7 learning-based classification algorithms (namely SVM, Naïve Bayesian Networks, Logistic Regression, Multilayer Perceptrons, Best-First Trees, Functional Trees and C4.5), as well as their combinations, using a set of 4451 manually annotated tweets. The results demonstrate the superiority of learning-based methods, and in particular of n-gram graphs approaches, for predicting the sentiment of tweets. They also show that the combinatory approach has impressive effects on n-grams, raising the confidence up to 83.15% on the 5-Grams, using majority vote and a balanced dataset (equal number of positive, negative and neutral tweets for training). In the n-gram graph cases the improvement was small to none, reaching 94.52% on the 4-gram graphs, using Orthodromic distance and a threshold of 0.001.
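
To make the n-gram representation and the majority-vote combination concrete, here is a minimal sketch. The abstract does not say whether the n-grams are built over words or characters, nor how ties between classifiers are resolved; the word-level tokenization, the first-seen tie-break, and both function names below are assumptions for illustration only.

```python
from collections import Counter


def word_ngrams(text, n):
    """Return the word-level n-grams of a tweet as space-joined strings.

    Word-level tokenization on whitespace is an assumption; the paper may
    use character n-grams instead.
    """
    tokens = text.lower().split()
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def majority_vote(labels):
    """Combine per-classifier labels by simple majority.

    Ties are broken in favour of the label that appears first in `labels`,
    which is an arbitrary choice made for this sketch.
    """
    if not labels:
        raise ValueError("majority_vote needs at least one label")
    counts = Counter(labels)
    top = max(counts.values())
    # Counter preserves first-seen order, so this picks the earliest winner.
    return next(label for label in counts if counts[label] == top)
```

For example, if three classifiers label a tweet `positive`, `negative` and `positive`, `majority_vote` returns `positive`.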
