Dennis Laurijssen

A Wireless Self-Calibrating Ultrasound Microphone Array with Sub-Microsecond Synchronization

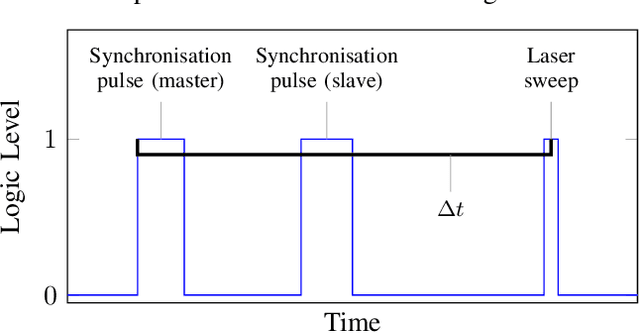

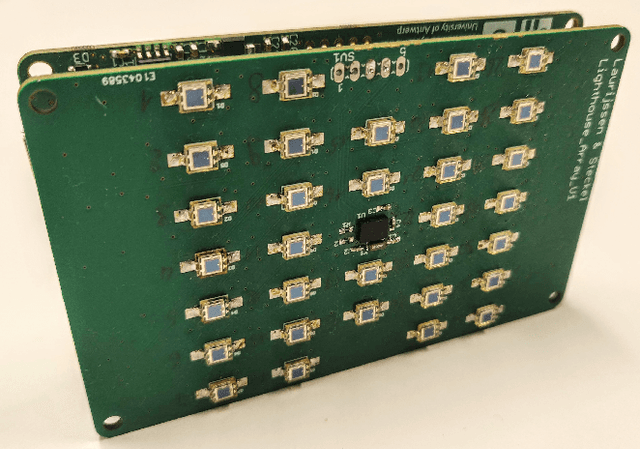

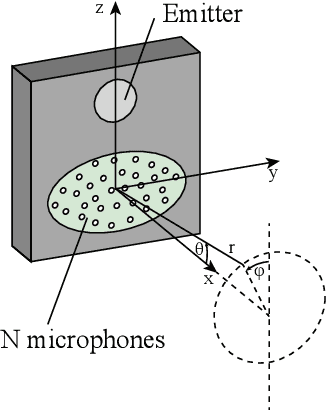

Jun 24, 2025

Abstract:We present a novel system architecture for a distributed, wireless, self-calibrating ultrasound microphone network for synchronized in-air acoustic sensing. Once deployed, the embedded nodes determine their position in the environment using the infrared optical tracking system found in the HTC Vive Lighthouses. After self-calibration, the nodes start sampling the ultrasound microphone while embedding in the data a synchronization signal that is established over a wireless Sub-1GHz RF link. Data transmission is handled via the Wi-Fi 6 radio that is embedded in the nodes' SoC, decoupling synchronization from payload transport. A prototype system with a limited number of network nodes was used to verify the proposed distributed microphone array's wireless data acquisition and synchronization capabilities. This architecture lays the groundwork for scalable, deployable ultrasound arrays for sound source localization applications in bio-acoustic research and industrial acoustic monitoring.

Tool Wear Prediction in CNC Turning Operations using Ultrasonic Microphone Arrays and CNNs

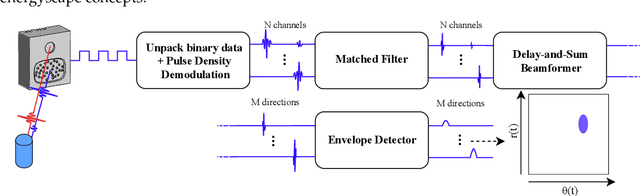

Jun 13, 2024

Abstract:This paper introduces a novel method for predicting tool wear in CNC turning operations, combining ultrasonic microphone arrays and convolutional neural networks (CNNs). High-frequency acoustic emissions between 0 kHz and 60 kHz are enhanced using beamforming techniques to improve the signal-to-noise ratio. The processed acoustic data is then analyzed by a CNN, which predicts the Remaining Useful Life (RUL) of cutting tools. Trained on data from 350 workpieces machined with a single carbide insert, the model can accurately predict the RUL of the carbide insert. Our results demonstrate the potential gained by integrating advanced ultrasonic sensors with deep learning for accurate predictive maintenance tasks in CNC machining.
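As a rough illustration of why beamforming improves the signal-to-noise ratio, the sketch below computes the textbook array gain of a delay-and-sum beamformer. It assumes the signal adds coherently across all microphones and the noise is uncorrelated between them; the abstract does not state which beamformer or how many microphones were used, so the function name `array_gain_db` and the microphone counts are purely illustrative.

```python
import math


def array_gain_db(num_mics: int) -> float:
    """Ideal delay-and-sum SNR improvement in dB.

    Assumes a coherent signal and noise that is uncorrelated between
    microphones, so signal power grows with N^2 and noise power with N.
    """
    if num_mics < 1:
        raise ValueError("num_mics must be at least 1")
    return 10.0 * math.log10(num_mics)


if __name__ == "__main__":
    # Hypothetical array sizes, chosen only to show the trend.
    for n in (1, 8, 32):
        print(f"{n:3d} microphones: {array_gain_db(n):.1f} dB")
```

Under these assumptions each doubling of the microphone count adds about 3 dB; real arrays fall short of this when the noise is partly correlated between elements.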

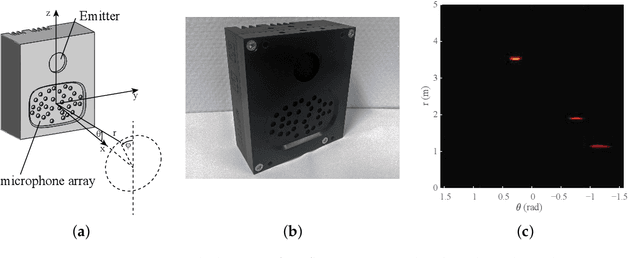

Broadband MEMS Microphone Arrays with Reduced Aperture Through 3D-Printed Waveguides

Jun 11, 2024

Abstract:In this paper we present a passive and cost-effective method for increasing the frequency range of ultrasound MEMS microphone arrays when using beamforming techniques. By applying a 3D-printed construction that reduces the acoustic aperture of the MEMS microphones, we can create a regularly spaced microphone array layout with a much smaller inter-element spacing than the physical size of the MEMS elements allows on a printed circuit board. This method allows the use of ultrasound sensors incorporating microphone arrays in combination with beamforming techniques without aliases due to grating lobes in applications such as sound source localization or the emulation of bat HRTFs.
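The link between inter-element spacing and usable frequency range can be sketched with the standard half-wavelength rule for a uniformly spaced array: grating lobes are avoided for all steering angles when the spacing is at most half the wavelength. The speed of sound and the two spacings below are assumptions for illustration, not values taken from the paper.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed: air at roughly 20 degrees Celsius


def max_alias_free_frequency_hz(spacing_m: float,
                                c: float = SPEED_OF_SOUND_M_S) -> float:
    """Highest frequency for which spacing_m is still <= wavelength / 2."""
    if spacing_m <= 0:
        raise ValueError("spacing_m must be positive")
    return c / (2.0 * spacing_m)


def max_spacing_m(frequency_hz: float,
                  c: float = SPEED_OF_SOUND_M_S) -> float:
    """Largest spacing (half a wavelength) at the given frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency_hz must be positive")
    return c / (2.0 * frequency_hz)


if __name__ == "__main__":
    # Hypothetical spacings: one limited by package size, one reduced.
    for d_mm in (3.5, 1.5):
        f_khz = max_alias_free_frequency_hz(d_mm / 1000.0) / 1000.0
        print(f"spacing {d_mm} mm -> alias-free up to {f_khz:.1f} kHz")
```

Halving the spacing doubles the alias-free bandwidth, which is why shrinking the effective acoustic aperture of each element extends the frequency range.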

Stabilized Adaptive Steering for 3D Sonar Microphone Arrays with IMU Sensor Fusion

Jun 10, 2024

Abstract:This paper presents a novel software-based approach to stabilizing the acoustic images of in-air 3D sonars. On uneven terrain, traditional static beamforming techniques can become misaligned, causing inaccurate measurements and imaging artifacts. Furthermore, mechanical stabilization can be more costly and prone to failure. We propose an adaptive conventional beamforming approach that is fused with real-time IMU data to adjust the sonar array's steering matrix dynamically based on the elevation tilt angle caused by the uneven ground. Additionally, we propose gain compensation to offset emission energy loss due to the transducer's directivity pattern and validate our approach through various experiments, which show significant improvements in temporal consistency in the acoustic images. We implemented a GPU-accelerated software system that operates in real time with an average execution time of 210 ms, meeting autonomous navigation requirements.
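A minimal sketch of tilt-compensated steering, under stated assumptions, is given below. It is not the paper's implementation: it assumes a far-field plane wave, a sensor frame with x forward, y left and z up, a tilt that is positive when the array pitches upward, and hypothetical helper names (`world_to_sensor_direction`, `steering_delays_s`). The idea is to express each world-frame look direction in the tilted sensor frame before computing the per-element delays.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed: air at roughly 20 degrees Celsius


def world_to_sensor_direction(azimuth_rad, elevation_rad, tilt_rad):
    """Express a world-frame look direction in the pitched sensor frame.

    tilt_rad is the IMU pitch, positive when the array's forward axis
    rises toward +z (an assumed convention).
    """
    ux = math.cos(elevation_rad) * math.cos(azimuth_rad)
    uy = math.cos(elevation_rad) * math.sin(azimuth_rad)
    uz = math.sin(elevation_rad)
    # Project onto the tilted forward axis (cos t, 0, sin t)
    # and the tilted up axis (-sin t, 0, cos t).
    xs = ux * math.cos(tilt_rad) + uz * math.sin(tilt_rad)
    zs = -ux * math.sin(tilt_rad) + uz * math.cos(tilt_rad)
    return (xs, uy, zs)


def steering_delays_s(element_positions_m, direction, c=SPEED_OF_SOUND_M_S):
    """Arrival delay per element, relative to the array origin, for a
    plane wave coming from the unit vector `direction` (sensor frame)."""
    dx, dy, dz = direction
    return [-(px * dx + py * dy + pz * dz) / c
            for (px, py, pz) in element_positions_m]


if __name__ == "__main__":
    # Hypothetical three-element vertical line array, 5 mm pitch.
    elements = [(0.0, 0.0, -0.005), (0.0, 0.0, 0.0), (0.0, 0.0, 0.005)]
    tilt = math.radians(10.0)  # pitch reported by the IMU
    # Keep looking at the world horizon despite the tilt.
    direction = world_to_sensor_direction(0.0, 0.0, tilt)
    print(steering_delays_s(elements, direction))
```

Recomputing the delays whenever the IMU reports a new pitch keeps the beams aligned with the world frame rather than with the tilted array.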

HiRIS: an Airborne Sonar Sensor with a 1024 Channel Microphone Array for In-Air Acoustic Imaging

Feb 20, 2024

Abstract:Airborne 3D imaging using ultrasound is a promising sensing modality for robotic applications in harsh environments. Over the last decade, several high-performance systems have been proposed in the literature. Most of these sensors use a reduced aperture microphone array, leading to artifacts in the resulting acoustic images. This paper presents a novel in-air ultrasound sensor that incorporates 1024 microphones, in a 32-by-32 uniform rectangular array, in combination with a distributed embedded hardware design to perform the data acquisition. Using a broadband Minimum Variance Distortionless Response (MVDR) beamformer with Forward-Backward Spatial Smoothing (FB-SS), the sensor is able to create both 2D and 3D ultrasound images of the full-frontal hemisphere with high angular accuracy and a main lobe to side lobe ratio of up to 70 dB. This paper describes both the hardware infrastructure needed to obtain such highly detailed acoustical images and the signal processing chain needed to convert the raw acoustic data into said images. With this novel high-resolution, virtually artifact-free imaging sensor, we wish to investigate the limits of both passive and active airborne ultrasound sensing.

Detecting and Classifying Bio-Inspired Artificial Landmarks Using In-Air 3D Sonar

Aug 11, 2023

Abstract:Various autonomous applications rely on recognizing specific known landmarks in their environment. For example, Simultaneous Localization And Mapping (SLAM) is an important technique that lays the foundation for many common tasks, such as navigation and long-term object tracking. This entails building a map on the go based on sensory inputs which are prone to accumulating errors. Recognizing landmarks in the environment plays a vital role in correcting these errors and further improving the accuracy of SLAM. The most popular sensors for conducting SLAM today are optical sensors such as cameras or LiDAR sensors. These can use landmarks such as QR codes as a prerequisite. However, such sensors become unreliable in certain conditions, e.g., foggy, dusty, reflective, or glass-rich environments. Sonar has proven to be a viable alternative to manage such situations better. However, acoustic sensors also require a different type of landmark. In this paper, we put forward a method to detect the presence of bio-mimetic acoustic landmarks with an embedded real-time imaging sonar, using support vector machines trained on the frequency bands of the reflected acoustic echoes.

In-Air Imaging Sonar Sensor Network with Real-Time Processing Using GPUs

Aug 23, 2022

Abstract:For autonomous navigation and robotic applications, sensing the environment correctly is crucial. Many sensing modalities for this purpose exist. One such modality that has come into use in recent years is in-air imaging sonar. It is ideal in complex environments with rough conditions such as dust or fog. However, as with most sensing modalities, multiple such sensors are needed to capture the full 360-degree range around the mobile platform. Currently, the processing algorithms used to create this data are too slow to do so for multiple sensors at a reasonably fast update rate. Furthermore, a flexible and robust framework is needed to easily implement multiple imaging sonar sensors into any setup and serve multiple application types for the data. In this paper we present a sensor network framework designed for this novel sensing modality. Furthermore, an implementation of the processing algorithm on a Graphics Processing Unit is proposed to potentially decrease the computing time to allow for real-time processing of one or more imaging sonar sensors at a sufficiently high update rate.

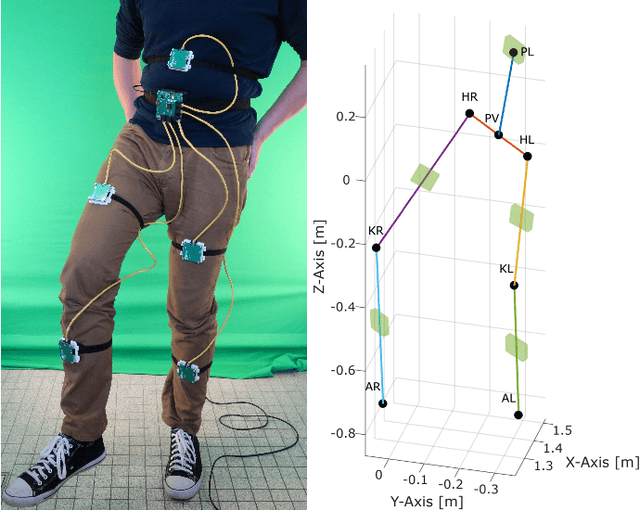

Automatic Calibration of a Six-Degrees-of-Freedom Pose Estimation System

Aug 23, 2022

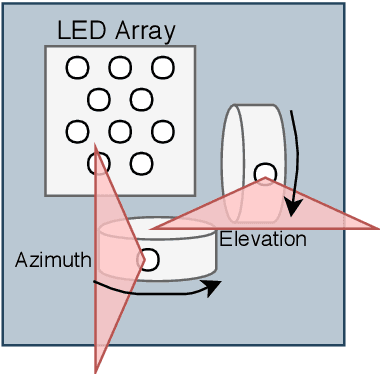

Abstract:Systems for estimating the six-degrees-of-freedom human body pose have been improving for over two decades. Technologies such as motion capture cameras, advanced gaming peripherals and more recently both deep learning techniques and virtual reality systems have shown impressive results. However, most systems that provide high accuracy and high precision are expensive and not easy to operate. Recently, research has been carried out to estimate the human body pose using the HTC Vive virtual reality system. This system shows accurate results while keeping the cost under 1000 USD. This system uses an optical approach. Two transmitter devices emit infrared pulses and laser planes, which are tracked by photodiodes on receiver hardware. A system using these transmitter devices combined with low-cost custom-made receiver hardware was developed previously but requires manual measurement of the position and orientation of the transmitter devices. These manual measurements can be time-consuming, prone to error and not possible in particular setups. We propose an algorithm to automatically calibrate the poses of the transmitter devices in any chosen environment with custom receiver/calibration hardware. Results show that the calibration works in a variety of setups while being more accurate than what manual measurements would allow. Furthermore, the calibration movement and speed have no noticeable influence on the precision of the results.

Real-Time Sonar Fusion for Layered Navigation Controller

Aug 23, 2022

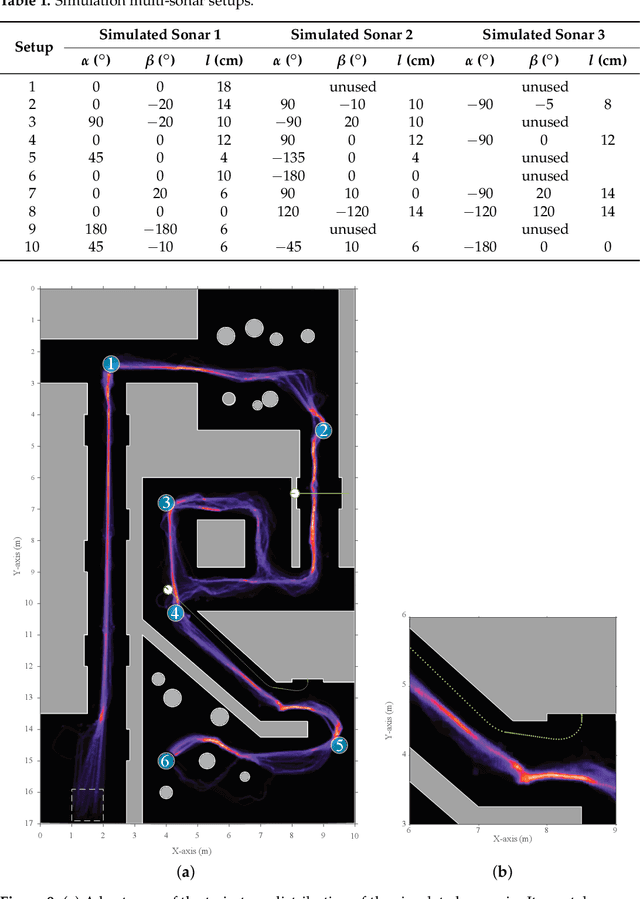

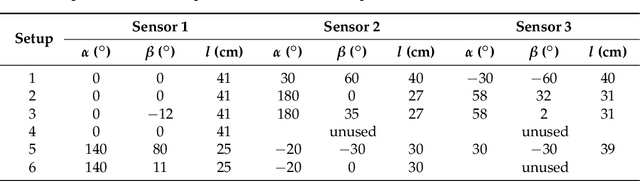

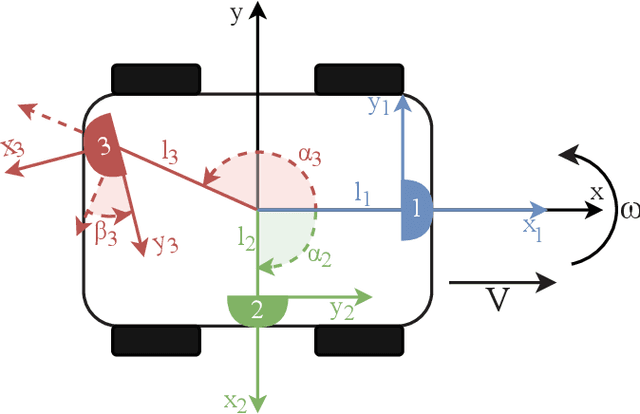

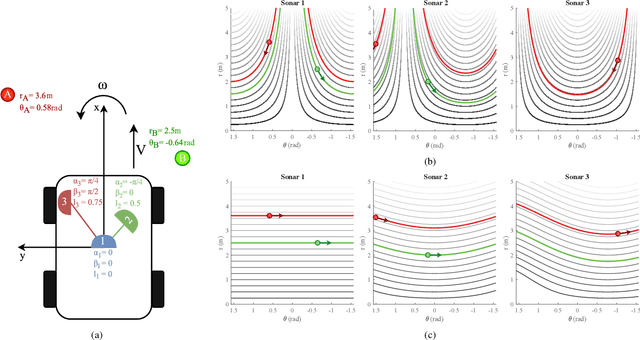

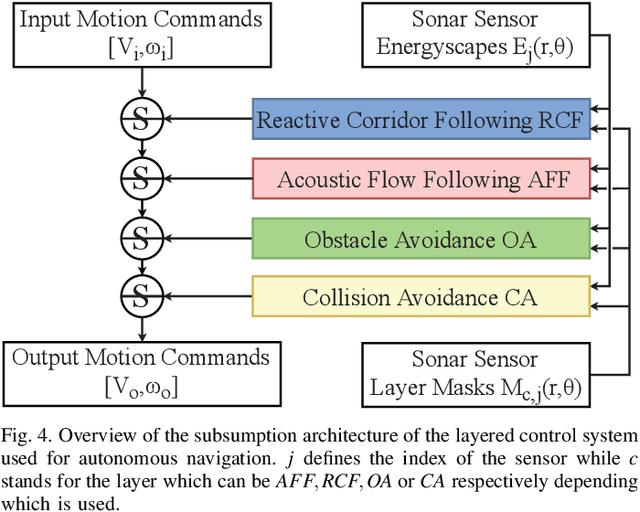

Abstract:Navigation in varied and dynamic indoor environments remains a complex task for autonomous mobile platforms. Especially when conditions worsen, typical sensor modalities may fail to operate optimally and subsequently provide inapt input for safe navigation control. In this study, we present an approach for navigating a dynamic indoor environment with a mobile platform carrying one or several sonar sensors, using a layered control system. These sensors can operate in conditions such as rain, fog, dust, or dirt. The different control layers, such as collision avoidance and corridor following behavior, are activated based on acoustic flow cues in the fusion of the sonar images. The novelty of this work is allowing these sensors to be freely positioned on the mobile platform and providing the framework for designing the optimal navigational outcome based on a zoning system around the mobile platform. Presented in this paper is the acoustic flow model used, as well as the design of the layered controller. In addition to validation in simulation, an implementation is presented and validated in a real office environment using a real mobile platform with one, two, or three sonar sensors in real time with 2D navigation. Multiple sensor layouts were validated in both the simulation and real experiments to demonstrate that the modular approach for the controller and sensor fusion works optimally. The results of this work show stable and safe navigation of indoor environments with dynamic objects.

Adaptive Acoustic Flow-Based Navigation with 3D Sonar Sensor Fusion

Aug 23, 2022

Abstract:Navigating spatially varied and dynamic environments is one of the key tasks for autonomous agents. In this paper we present a novel method of navigating a mobile platform with one or multiple 3D-sonar sensors. Moving a mobile platform, and subsequently any 3D-sonar sensor on it, will create signature variations over time of the echoed reflections in the sensor readings. An approach is presented to create a predictive model of these signature variations for any motion type. Furthermore, the model is adaptive and works for any position and orientation of one or multiple sonar sensors on a mobile platform. We propose to use this adaptive model and fuse all sensory readings to create a layered control system allowing a mobile platform to perform a set of primitive motions such as collision avoidance, obstacle avoidance, wall following and corridor following behaviours to navigate an environment with dynamically moving objects within it. This paper describes the underlying theoretical base of the entire navigation model and validates it in a simulated environment, with results that show the system is stable and delivers the expected behaviour for several tested spatial configurations of one or multiple sonar sensors that can complete an autonomous navigation task.
