David Bergström

Deep Temporal Deaggregation: Large-Scale Spatio-Temporal Generative Models

Jun 18, 2024

Abstract:Much of today's data is time-series data originating from various sources, such as sensors, transaction systems, or production systems. Major challenges with such data include privacy and business sensitivity. Generative time-series models have the potential to overcome these problems, allowing representative synthetic data, such as people's movement in cities, to be shared openly and be used to the benefit of society at large. However, contemporary approaches are limited to prohibitively short sequences and small scales. Aside from major memory limitations, the models generate less accurate and less representative samples the longer the sequences are. This issue is further exacerbated by the lack of a comprehensive and accessible benchmark. Furthermore, a common need in practical applications is what-if analysis and dynamic adaptation to data distribution changes, for use in decision making and to manage a changing world: What if this road is temporarily blocked or another road is added? The focus of this paper is on mobility data, such as people's movement in cities, requiring all these issues to be addressed. To this end, we propose a transformer-based diffusion model, TDDPM, for time-series which outperforms and scales substantially better than the state of the art. This is evaluated in a new comprehensive benchmark across several sequence lengths, standard datasets, and evaluation measures. We also demonstrate how the model can be conditioned on a prior over spatial occupancy frequency information, allowing the model to generate mobility data for previously unseen environments and for hypothetical scenarios where the underlying road network and its usage change. This is evaluated by training on mobility data from part of a city. Then, using only aggregate spatial information as prior, we demonstrate out-of-distribution generalization to the unobserved remainder of the city.

Bt-GAN: Generating Fair Synthetic Healthdata via Bias-transforming Generative Adversarial Networks

Apr 26, 2024

Abstract:Synthetic data generation offers a promising solution to enhance the usefulness of Electronic Healthcare Records (EHR) by generating realistic de-identified data. However, the existing literature primarily focuses on the quality of synthetic health data, neglecting the crucial aspect of fairness in downstream predictions. Consequently, models trained on synthetic EHR have faced criticism for producing biased outcomes in target tasks. These biases can arise from either (i) spurious correlations between features or (ii) the failure of models to accurately represent sub-groups. To address these concerns, we present Bias-transforming Generative Adversarial Networks (Bt-GAN), a GAN-based synthetic data generator specifically designed for the healthcare domain. In order to tackle spurious correlations (i), we propose an information-constrained Data Generation Process that enables the generator to learn a fair deterministic transformation based on a well-defined notion of algorithmic fairness. To overcome the challenge of capturing exact sub-group representations (ii), we incentivize the generator to preserve sub-group densities through score-based weighted sampling. This approach compels the generator to learn from underrepresented regions of the data manifold. We conduct extensive experiments using the MIMIC-III database. Our results demonstrate that Bt-GAN achieves SOTA accuracy while significantly improving fairness and minimizing bias amplification. We also perform an in-depth explainability analysis to provide additional evidence supporting the validity of our study. In conclusion, our research introduces a novel and professional approach to addressing the limitations of synthetic data generation in the healthcare domain. By incorporating fairness considerations and leveraging advanced techniques such as GANs, we pave the way for more reliable and unbiased predictions in healthcare applications.

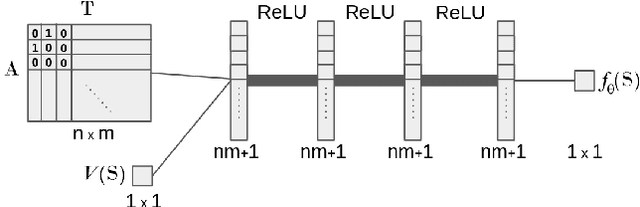

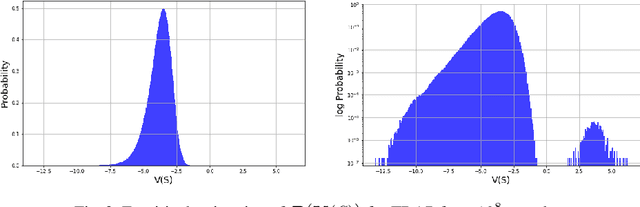

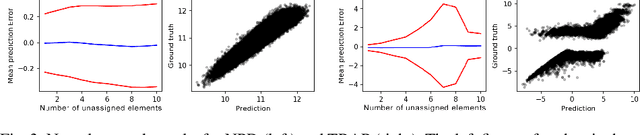

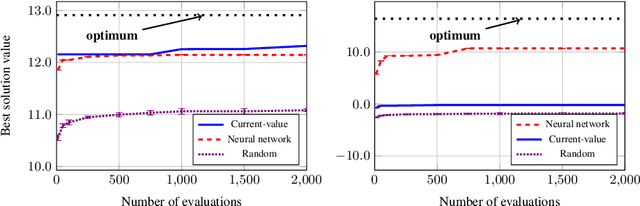

Towards Utilitarian Combinatorial Assignment with Deep Neural Networks and Heuristic Algorithms

Jul 01, 2021

Abstract:This paper presents preliminary work on using deep neural networks to guide general-purpose heuristic algorithms for performing utilitarian combinatorial assignment. In more detail, we use deep learning in an attempt to produce heuristics that can be used together with e.g., search algorithms to generate feasible solutions of higher quality more quickly. Our results indicate that our approach could be a promising future method for constructing such heuristics.

Enhancing Lattice-based Motion Planning with Introspective Learning and Reasoning

May 15, 2020

Abstract:Lattice-based motion planning is a hybrid planning method where a plan made up of discrete actions is simultaneously a physically feasible trajectory. The planning takes both discrete and continuous aspects into account, for example action pre-conditions and collision-free action-duration in the configuration space. Safe motion planning relies on well-calibrated safety margins for collision checking. The trajectory tracking controller must further be able to reliably execute the motions within this safety margin for the execution to be safe. In this work we are concerned with introspective learning and reasoning about controller performance over time. Normal controller execution of the different actions is learned using reliable and uncertainty-aware machine learning techniques. By correcting for execution bias we manage to substantially reduce the safety margin of motion actions. Reasoning takes place to both verify that the learned models stay safe and to improve collision checking effectiveness in the motion planner by the use of more accurate execution predictions with a smaller safety margin. The presented approach allows for explicit awareness of controller performance under normal circumstances, and timely detection of incorrect performance in abnormal circumstances. Evaluation is made on the nonlinear dynamics of a quadcopter in 3D using simulation. Video: https://youtu.be/STmZduvSUMM

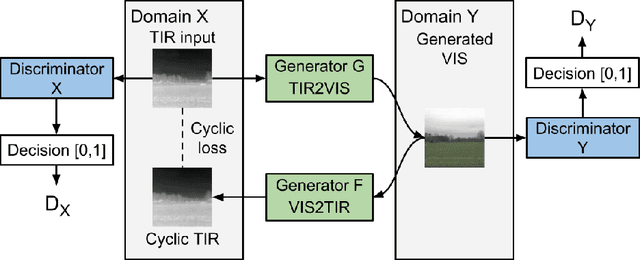

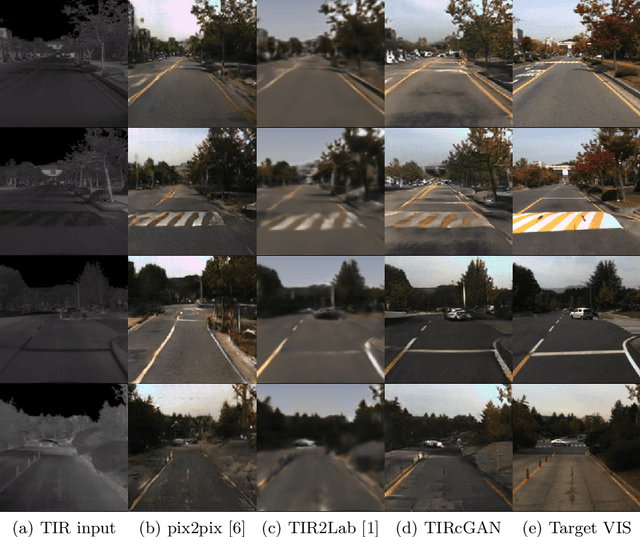

Unpaired Thermal to Visible Spectrum Transfer using Adversarial Training

Apr 03, 2019

Abstract:Thermal Infrared (TIR) cameras are gaining popularity in many computer vision applications due to their ability to operate under low-light conditions. Images produced by TIR cameras are usually difficult for humans to perceive visually, which limits their usability. Several methods in the literature were proposed to address this problem by transforming TIR images into realistic visible spectrum (VIS) images. However, existing TIR-VIS datasets suffer from imperfect alignment between TIR-VIS image pairs, which degrades the performance of supervised methods. We tackle this problem by learning this transformation using an unsupervised Generative Adversarial Network (GAN) which trains on unpaired TIR and VIS images. When trained and evaluated on the KAIST-MS dataset, our proposed method was shown to produce significantly more realistic and sharp VIS images than the existing state-of-the-art supervised methods. In addition, our proposed method was shown to generalize very well when evaluated on a new dataset of new environments.
