Damian Owerko

Generalizability of Graph Neural Networks for Decentralized Unlabeled Motion Planning

Sep 29, 2024

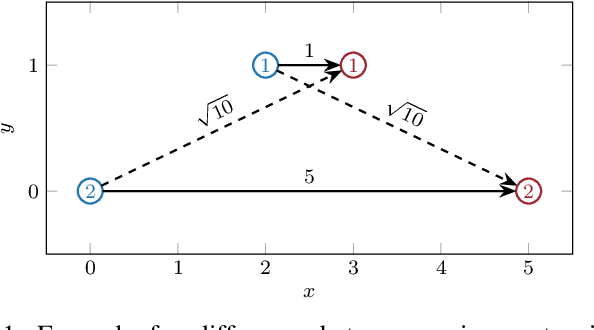

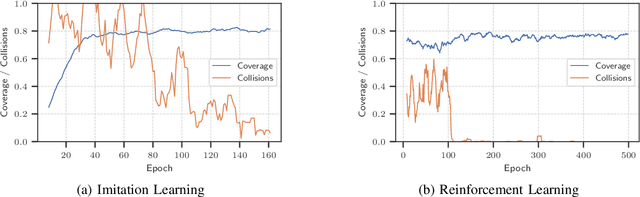

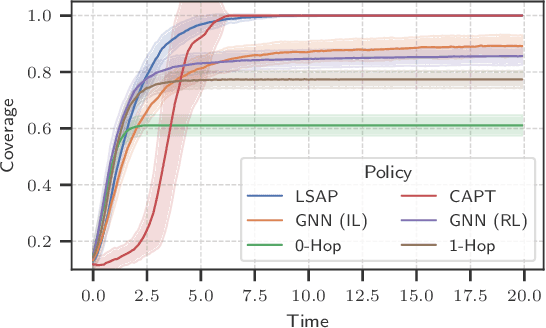

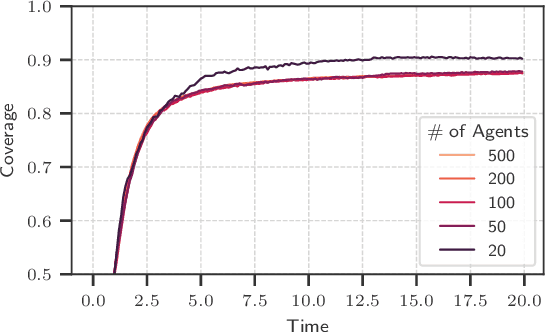

Abstract: Unlabeled motion planning involves assigning a set of robots to target locations while ensuring collision avoidance, aiming to minimize the total distance traveled. The problem forms an essential building block for multi-robot systems in applications such as exploration, surveillance, and transportation. We address this problem in a decentralized setting where each robot knows only the positions of its $k$-nearest robots and $k$-nearest targets. This scenario combines elements of combinatorial assignment and continuous-space motion planning, posing significant scalability challenges for traditional centralized approaches. To overcome these challenges, we propose a decentralized policy learned via a Graph Neural Network (GNN). The GNN enables robots to determine (1) what information to communicate to neighbors and (2) how to integrate received information with local observations for decision-making. We train the GNN using imitation learning with the centralized Hungarian algorithm as the expert policy, and further fine-tune it using reinforcement learning to avoid collisions and enhance performance. Extensive empirical evaluations demonstrate the scalability and effectiveness of our approach. The GNN policy trained on 100 robots generalizes to scenarios with up to 500 robots, outperforming state-of-the-art solutions by 8.6\% on average and significantly surpassing greedy decentralized methods. This work lays the foundation for solving multi-robot coordination problems in settings where scalability is important.
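
The assignment part of the problem can be illustrated with a small sketch. It is not the paper's method: it uses exhaustive search as a stand-in for the Hungarian algorithm (which solves the same minimum-total-distance assignment in polynomial time), ignores collision avoidance, and compares against a simple greedy rule. The function names and the two-robot instance are hypothetical, chosen only for illustration.

```python
import itertools
import math


def total_distance(robots, targets, assignment):
    """Sum of straight-line distances when robot i goes to targets[assignment[i]]."""
    return sum(math.dist(robots[i], targets[j]) for i, j in enumerate(assignment))


def optimal_assignment(robots, targets):
    """Exhaustive search over all assignments (stand-in for the Hungarian algorithm).

    Only viable for a handful of robots, since it checks n! permutations.
    """
    n = len(robots)
    return min(
        itertools.permutations(range(n)),
        key=lambda perm: total_distance(robots, targets, perm),
    )


def greedy_assignment(robots, targets):
    """Each robot, in index order, claims the nearest target that is still free."""
    free = set(range(len(targets)))
    assignment = []
    for robot in robots:
        j = min(free, key=lambda t: math.dist(robot, targets[t]))
        free.remove(j)
        assignment.append(j)
    return tuple(assignment)


# Hypothetical instance: two robots and two targets on a line.
robots = [(0.0, 0.0), (1.0, 0.0)]
targets = [(0.6, 0.0), (-1.0, 0.0)]

best = optimal_assignment(robots, targets)
greedy = greedy_assignment(robots, targets)
best_cost = total_distance(robots, targets, best)
greedy_cost = total_distance(robots, targets, greedy)
```

In this instance the greedy rule sends the first robot to the closer target and forces the second robot on a long detour, whereas the optimal assignment swaps the two. This is the kind of gap between greedy and expert behavior that the imitation-learning setup described in the abstract targets.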

Transferability of Convolutional Neural Networks in Stationary Learning Tasks

Jul 21, 2023

Abstract: Recent advances in hardware and big data acquisition have accelerated the development of deep learning techniques. For an extended period of time, increasing the model complexity has led to performance improvements for various tasks. However, this trend is becoming unsustainable and there is a need for alternative, computationally lighter methods. In this paper, we introduce a novel framework for efficient training of convolutional neural networks (CNNs) for large-scale spatial problems. To accomplish this, we investigate the properties of CNNs for tasks where the underlying signals are stationary. We show that a CNN trained on small windows of such signals achieves nearly the same performance on much larger windows without retraining. This claim is supported by our theoretical analysis, which provides a bound on the performance degradation. Additionally, we conduct thorough experimental analysis on two tasks: multi-target tracking and mobile infrastructure on demand. Our results show that the CNN is able to tackle problems with many hundreds of agents after being trained with fewer than ten. Thus, CNN architectures provide solutions to these problems at previously computationally intractable scales.

Solving Large-scale Spatial Problems with Convolutional Neural Networks

Jun 14, 2023

Abstract: Over the past decade, deep learning research has been accelerated by increasingly powerful hardware, which facilitated rapid growth in the model complexity and the amount of data ingested. This is becoming unsustainable and therefore refocusing on efficiency is necessary. In this paper, we employ transfer learning to improve training efficiency for large-scale spatial problems. We propose that a convolutional neural network (CNN) can be trained on small windows of signals, but evaluated on arbitrarily large signals with little to no performance degradation, and provide a theoretical bound on the resulting generalization error. Our proof leverages shift-equivariance of CNNs, a property that is underexploited in transfer learning. The theoretical results are experimentally supported in the context of mobile infrastructure on demand (MID). The proposed approach is able to tackle MID at large scales with hundreds of agents, which was computationally intractable prior to this work.
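
The shift-equivariance property mentioned above can be checked on a toy example. The sketch below is only an illustration under stated assumptions: a single 1D filter with circular padding stands in for a full CNN, and the helper names, kernel, and signals are hypothetical. It does not reproduce the paper's architecture or its generalization bound.

```python
def circular_conv(signal, kernel):
    """Cross-correlate `signal` with `kernel`, wrapping around at the edges."""
    n = len(signal)
    return [
        sum(kernel[k] * signal[(i + k) % n] for k in range(len(kernel)))
        for i in range(n)
    ]


def shift(signal, s):
    """Circularly shift `signal` to the right by `s` positions."""
    s %= len(signal)
    return signal[-s:] + signal[:-s]


# Hypothetical filter and signals (integers keep the comparison exact).
kernel = [1, -2, 1]
small_window = [3, 1, 4, 1, 5, 9, 2, 6]
large_signal = small_window * 16  # a much longer signal, same filter

# Shift-equivariance: filtering a shifted signal equals shifting the filtered signal.
filtered_then_shifted = shift(circular_conv(small_window, kernel), 3)
shifted_then_filtered = circular_conv(shift(small_window, 3), kernel)

# The same three weights apply unchanged to a signal of any length.
large_output = circular_conv(large_signal, kernel)
```

Because the filter weights do not depend on position or on the input length, the same parameters fitted on a small window can be applied directly to a larger signal, which is the mechanism the abstract relies on.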

Deep Convolutional Neural Networks for Multi-Target Tracking: A Transfer Learning Approach

Oct 28, 2022

Abstract: Multi-target tracking (MTT) is a traditional signal processing task, where the goal is to estimate the states of an unknown number of moving targets from noisy sensor measurements. In this paper, we revisit MTT from a deep learning perspective and propose convolutional neural network (CNN) architectures to tackle it. We represent the target states and sensor measurements as images, thereby recasting the problem as an image-to-image prediction task for which we train a fully convolutional model. This architecture is motivated by a novel theoretical bound on the transferability error of CNNs. The proposed CNN architecture outperforms a GM-PHD filter on the MTT task with 10 targets. The CNN performance transfers without re-training to a larger MTT task with 250 targets with only a $13\%$ increase in average OSPA.

Unsupervised Optimal Power Flow Using Graph Neural Networks

Oct 17, 2022

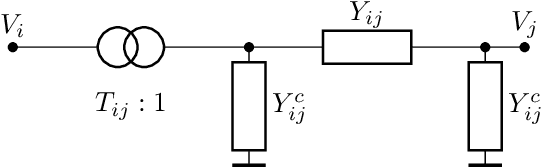

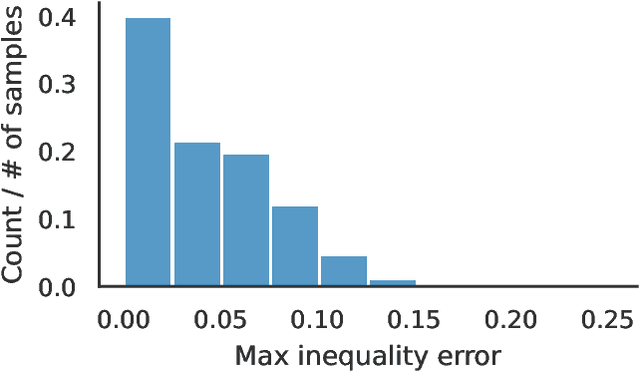

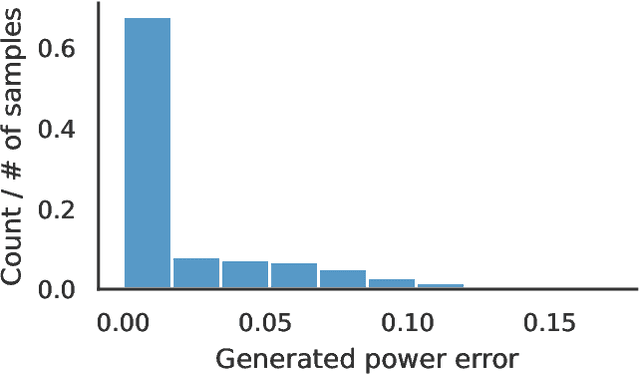

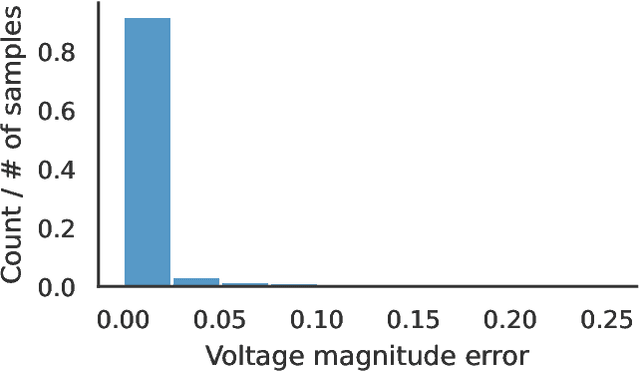

Abstract: Optimal power flow (OPF) is a critical optimization problem that allocates power to the generators in order to satisfy the demand at a minimum cost. Solving this problem exactly is computationally infeasible in the general case. In this work, we propose to leverage graph signal processing and machine learning. More specifically, we use a graph neural network to learn a nonlinear parametrization between the power demanded and the corresponding allocation. We learn the solution in an unsupervised manner, minimizing the cost directly. In order to take into account the electrical constraints of the grid, we propose a novel barrier method that is differentiable and works on initially infeasible points. We show through simulations that the use of GNNs in this unsupervised learning context leads to solutions comparable to standard solvers while being computationally efficient and avoiding constraint violations most of the time.
