Clark Youngdong Son

Fully Distributed Informative Planning for Environmental Learning with Multi-Robot Systems

Dec 29, 2021

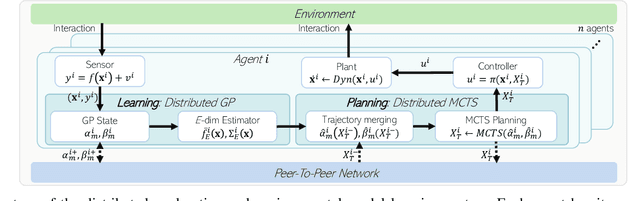

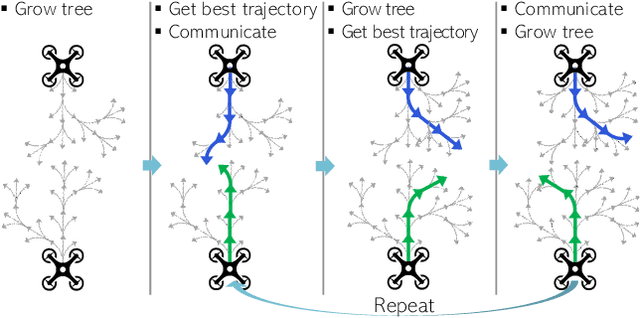

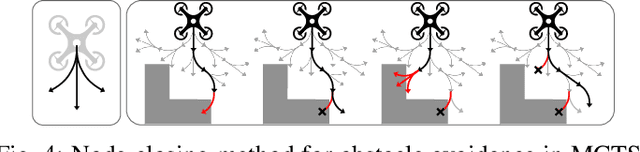

Abstract:This paper proposes a cooperative environmental learning algorithm working in a fully distributed manner. A multi-robot system is more effective for exploration tasks than a single robot, but it involves the following challenges: 1) online distributed learning of environmental map using multiple robots; 2) generation of safe and efficient exploration path based on the learned map; and 3) maintenance of the scalability with respect to the number of robots. To this end, we divide the entire process into two stages of environmental learning and path planning. Distributed algorithms are applied in each stage and combined through communication between adjacent robots. The environmental learning algorithm uses a distributed Gaussian process, and the path planning algorithm uses a distributed Monte Carlo tree search. As a result, we build a scalable system without the constraint on the number of robots. Simulation results demonstrate the performance and scalability of the proposed system. Moreover, a real-world-dataset-based simulation validates the utility of our algorithm in a more realistic scenario.

Robust Real-time RGB-D Visual Odometry in Dynamic Environments via Rigid Motion Model

Jul 19, 2019

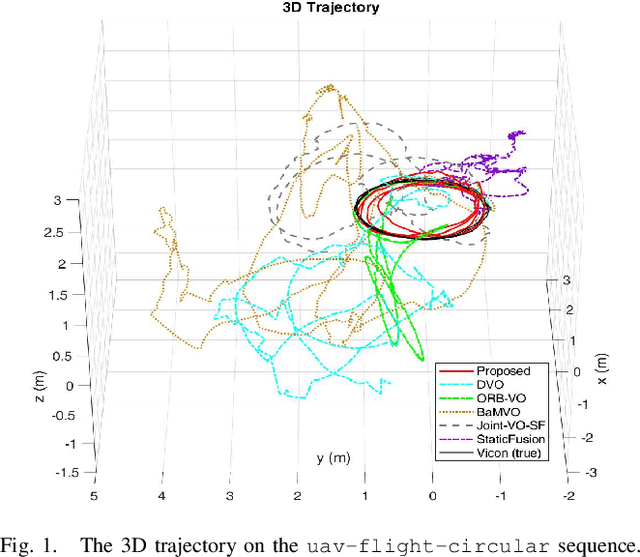

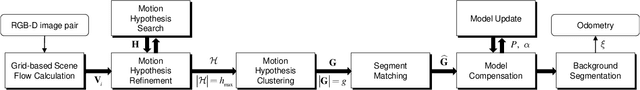

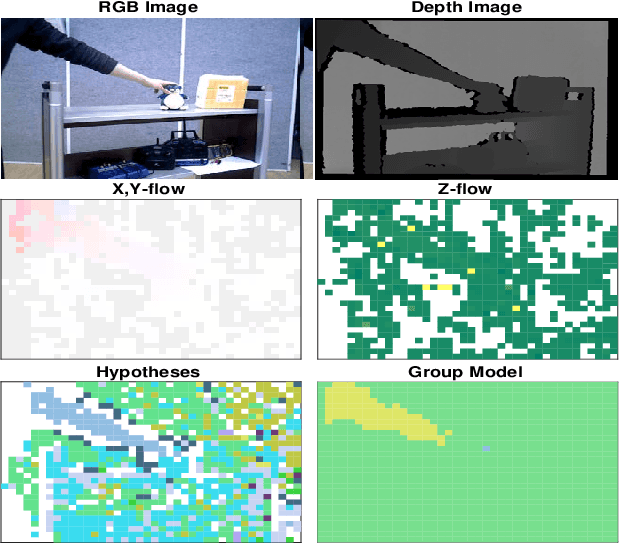

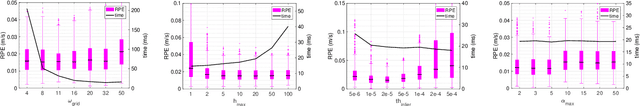

Abstract:In the paper, we propose a robust real-time visual odometry in dynamic environments via rigid-motion model updated by scene flow. The proposed algorithm consists of spatial motion segmentation and temporal motion tracking. The spatial segmentation first generates several motion hypotheses by using a grid-based scene flow and clusters the extracted motion hypotheses, separating objects that move independently of one another. Further, we use a dual-mode motion model to consistently distinguish between the static and dynamic parts in the temporal motion tracking stage. Finally, the proposed algorithm estimates the pose of a camera by taking advantage of the region classified as static parts. In order to evaluate the performance of visual odometry under the existence of dynamic rigid objects, we use self-collected dataset containing RGB-D images and motion capture data for ground-truth. We compare our algorithm with state-of-the-art visual odometry algorithms. The validation results suggest that the proposed algorithm can estimate the pose of a camera robustly and accurately in dynamic environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge