Claire Birnie

Joint Microseismic Event Detection and Location with a Detection Transformer

Jul 16, 2023

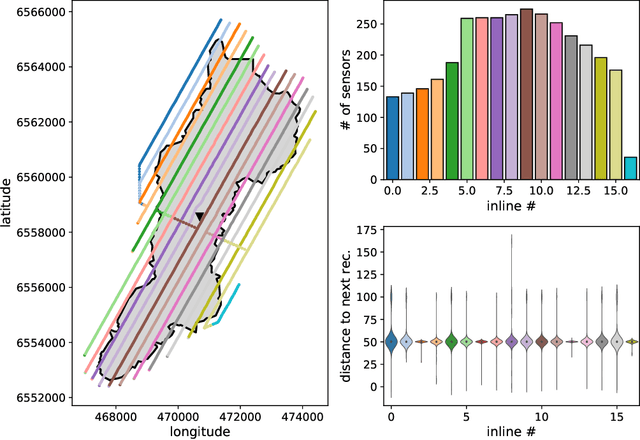

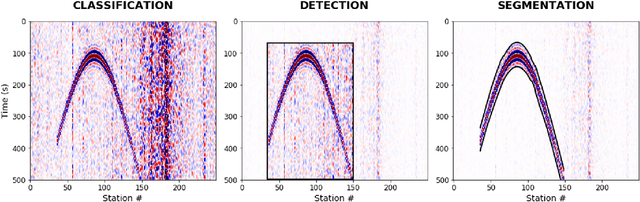

Abstract: Microseismic event detection and location are two primary components of microseismic monitoring, which offers invaluable insights into the subsurface during reservoir stimulation and evolution. Conventional approaches to event detection and location often suffer from manual intervention and/or heavy computation, while current machine-learning-assisted approaches typically address detection and location separately; such limitations hinder the potential for real-time microseismic monitoring. We propose an approach that unifies event detection and source location in a single framework by adapting a Convolutional Neural Network backbone and an encoder-decoder Transformer with a set-based Hungarian loss, applied directly to recorded waveforms. The proposed network is trained on synthetic data simulating multiple microseismic events at random source locations within the area of suspected microseismic activity. A synthetic test on a 2D profile of the SEAM Time Lapse model illustrates the capability of the proposed method to detect the events properly and locate them accurately in the subsurface, while a field test on the Arkoma Basin data further demonstrates its practicability, efficiency, and potential to pave the way for real-time monitoring of microseismic events.
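The set-based Hungarian loss lets the network output an unordered set of events. The sketch below is a DETR-style illustration under stated assumptions and is not the paper's implementation:

- Each prediction is a hypothetical pair `(p_event, (x, z))`.
- The matching cost is `-p_event` plus a weighted L1 location distance.
- Unmatched predictions are pushed towards "no event" with a binary log-loss.

For clarity, the optimal assignment is found by exhaustive search over permutations. This gives the same optimum as the Hungarian algorithm but only scales to a handful of queries.

```python
import itertools
import math

def l1(a, b):
    """L1 distance between two location tuples, e.g. (x, z)."""
    return sum(abs(ai - bi) for ai, bi in zip(a, b))

def optimal_matching(preds, targets, w_loc=1.0):
    """Return {target_index: pred_index} with minimum total matching cost.

    preds: list of (p_event, location); targets: list of locations.
    Assumes len(targets) <= len(preds). Exhaustive search finds the same
    optimum as the Hungarian algorithm but is only practical for a few queries.
    """
    best, best_cost = (), math.inf
    for perm in itertools.permutations(range(len(preds)), len(targets)):
        # Pairwise cost: confident, nearby predictions are cheap to match.
        cost = sum(-preds[p][0] + w_loc * l1(preds[p][1], targets[t])
                   for t, p in enumerate(perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return dict(enumerate(best))

def set_loss(preds, targets, w_loc=1.0):
    """Matched queries: -log p + w_loc * L1; unmatched queries: -log(1 - p)."""
    matching = optimal_matching(preds, targets, w_loc)
    loss = 0.0
    for t, p in matching.items():
        p_event, loc = preds[p]
        loss += -math.log(p_event) + w_loc * l1(loc, targets[t])
    for p, (p_event, _) in enumerate(preds):
        if p not in matching.values():
            loss += -math.log(1.0 - p_event)  # pushed towards "no event"
    return loss
```

Consider predictions `[(0.9, (1.0, 2.0)), (0.1, (5.0, 5.0)), (0.8, (3.0, 1.0))]` and targets `[(3.1, 1.0), (1.0, 2.1)]`. Here `optimal_matching` returns `{0: 2, 1: 0}`, and the low-confidence second prediction is only penalised for not predicting "no event". For realistic query counts, `scipy.optimize.linear_sum_assignment` solves the same assignment problem efficiently.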

Explainable Artificial Intelligence driven mask design for self-supervised seismic denoising

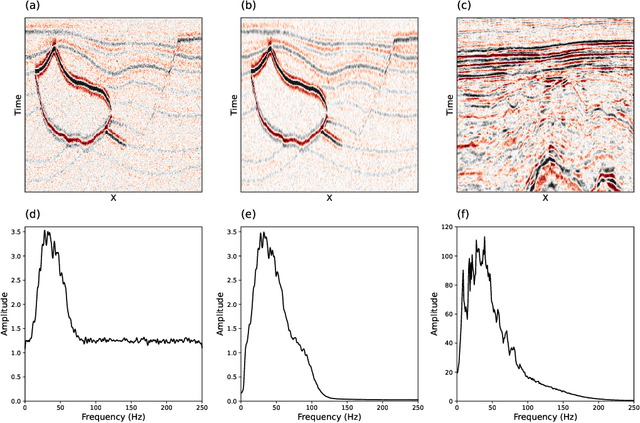

Jul 13, 2023Abstract:The presence of coherent noise in seismic data leads to errors and uncertainties, and as such it is paramount to suppress noise as early and efficiently as possible. Self-supervised denoising circumvents the common requirement of deep learning procedures of having noisy-clean training pairs. However, self-supervised coherent noise suppression methods require extensive knowledge of the noise statistics. We propose the use of explainable artificial intelligence approaches to see inside the black box that is the denoising network and use the gained knowledge to replace the need for any prior knowledge of the noise itself. This is achieved in practice by leveraging bias-free networks and the direct linear link between input and output provided by the associated Jacobian matrix; we show that a simple averaging of the Jacobian contributions over a number of randomly selected input pixels, provides an indication of the most effective mask to suppress noise present in the data. The proposed method therefore becomes a fully automated denoising procedure requiring no clean training labels or prior knowledge. Realistic synthetic examples with noise signals of varying complexities, ranging from simple time-correlated noise to complex pseudo rig noise propagating at the velocity of the ocean, are used to validate the proposed approach. Its automated nature is highlighted further by an application to two field datasets. Without any substantial pre-processing or any knowledge of the acquisition environment, the automatically identified blind-masks are shown to perform well in suppressing both trace-wise noise in common shot gathers from the Volve marine dataset and colored noise in post stack seismic images from a land seismic survey.
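For a bias-free (ReLU) network, the output is exactly the Jacobian applied to the input, f(x) = J(x) x. This is the linear link the abstract exploits. The sketch below rests on two substitutions:

- A toy linear "denoiser" (`vertical_smoother`, hypothetical) stands in for a trained network.
- A finite-difference Jacobian stands in for automatic differentiation.

The absolute Jacobian rows of randomly chosen pixels are averaged in a window centred on each pixel. Thresholding that average yields a candidate blind-mask. The window radius, number of pixels and 0.5 threshold are illustrative assumptions.

```python
import numpy as np

def vertical_smoother(x):
    """Hypothetical stand-in for a trained bias-free denoiser.

    A 3-tap average along axis 0 (time), zero-padded at the edges. It is linear
    and bias-free, so f(0) = 0 and its Jacobian is constant.
    """
    padded = np.pad(x, ((1, 1), (0, 0)))
    return (padded[:-2] + padded[1:-1] + padded[2:]) / 3.0

def jacobian(f, x, eps=1e-3):
    """Finite-difference Jacobian J[i, j, k, l] = d f(x)[i, j] / d x[k, l]."""
    h, w = x.shape
    base = f(x)
    jac = np.zeros((h, w, h, w))
    for k in range(h):
        for l in range(w):
            xp = x.copy()
            xp[k, l] += eps
            jac[:, :, k, l] = (f(xp) - base) / eps
    return jac

def averaged_jacobian_mask(f, x, n_pixels=20, radius=2, threshold=0.5, seed=0):
    """Average |Jacobian| windows centred on random pixels, then threshold to a mask."""
    rng = np.random.default_rng(seed)
    h, w = x.shape
    jac = jacobian(f, x)
    acc = np.zeros((2 * radius + 1, 2 * radius + 1))
    for _ in range(n_pixels):
        # Stay away from the borders so the whole window fits inside the image.
        i = int(rng.integers(radius, h - radius))
        j = int(rng.integers(radius, w - radius))
        acc += np.abs(jac[i, j, i - radius:i + radius + 1, j - radius:j + radius + 1])
    acc /= n_pixels
    # Inputs that contribute strongly to the output pixel belong in the blind-mask.
    return acc >= threshold * acc.max()
```

The toy smoother only mixes neighbouring time samples of the same trace. The resulting 5 × 5 mask is therefore a short vertical line through the centre of the window, the kind of mask suited to noise that is correlated along time.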

Transfer learning for self-supervised, blind-spot seismic denoising

Sep 25, 2022

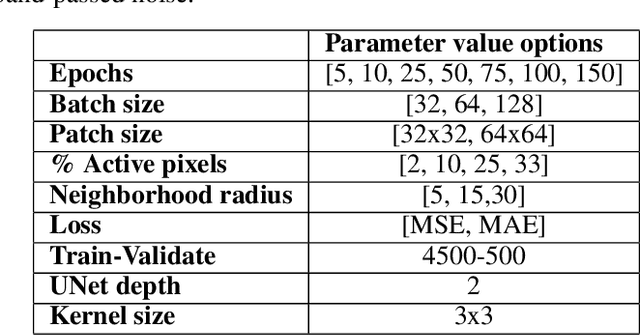

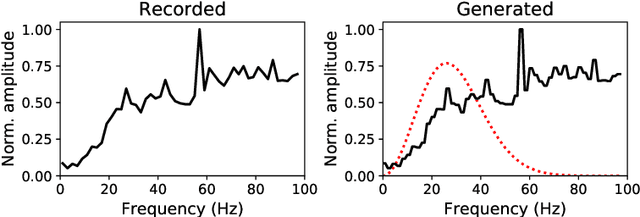

Abstract: Noise in seismic data arises from numerous sources and is continually evolving. Supervised deep learning procedures for denoising seismic datasets often perform poorly, for two reasons: there is no noise-free field data to act as training targets, and synthetic and field datasets differ greatly in their characteristics. Self-supervised, blind-spot networks typically overcome these limitations by training directly on the raw, noisy data. However, such networks often rely on a random noise assumption, and their denoising capabilities quickly decrease in the presence of even minimally correlated noise. Extending from blind-spots to blind-masks can efficiently suppress coherent noise along a specific direction, but it cannot adapt to the ever-changing properties of noise. To prime the network to predict the signal and reduce its opportunity to learn the noise properties, we propose an initial, supervised training of the network on a frugally generated synthetic dataset prior to fine-tuning in a self-supervised manner on the field dataset of interest. Considering the change in peak signal-to-noise ratio, as well as the volume of noise reduced and signal leakage observed, we illustrate the clear benefit of initialising the self-supervised network with the weights from a supervised base-training. This is further supported by a test on a field dataset where the fine-tuned network strikes the best balance between signal preservation and noise reduction. Finally, using the unrealistic, frugally generated synthetic dataset for the supervised base-training offers a number of benefits, to name a few: minimal prior geological knowledge is required, the computational cost of dataset generation is substantially reduced, and the network is less likely to need re-training should recording conditions change.
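The abstract assesses the benefit of transfer learning partly through the change in peak signal-to-noise ratio. Below is a minimal plain-Python sketch of that metric. It assumes PSNR = 10 log10(peak² / MSE), with the peak taken as the maximum absolute amplitude of the clean reference. Other peak conventions exist, so absolute values may not match the paper's.

```python
import math

def psnr(estimate, reference):
    """Peak signal-to-noise ratio in dB.

    Assumption: the peak is taken as max |reference|; conventions differ.
    """
    mse = sum((e - r) ** 2 for e, r in zip(estimate, reference)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals
    peak = max(abs(r) for r in reference)
    return 10.0 * math.log10(peak ** 2 / mse)

def psnr_change(noisy, denoised, clean):
    """Positive values mean the denoiser moved the data closer to the clean reference."""
    return psnr(denoised, clean) - psnr(noisy, clean)
```

For example, adding a constant error of 0.1 to a clean signal with peak 1 gives an MSE of 0.01 and hence 20 dB. Shrinking that error to 0.01 raises the PSNR to 40 dB, a change of +20 dB.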

The potential of self-supervised networks for random noise suppression in seismic data

Sep 15, 2021

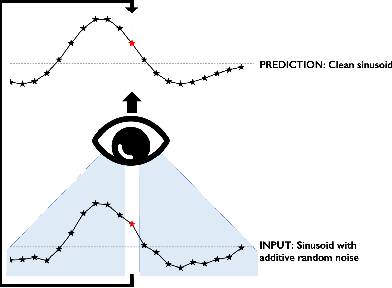

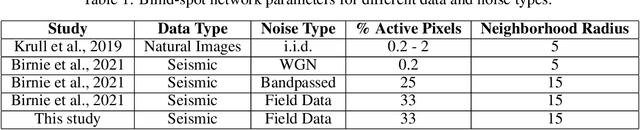

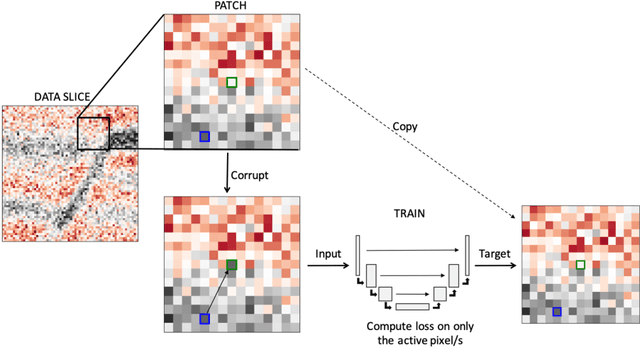

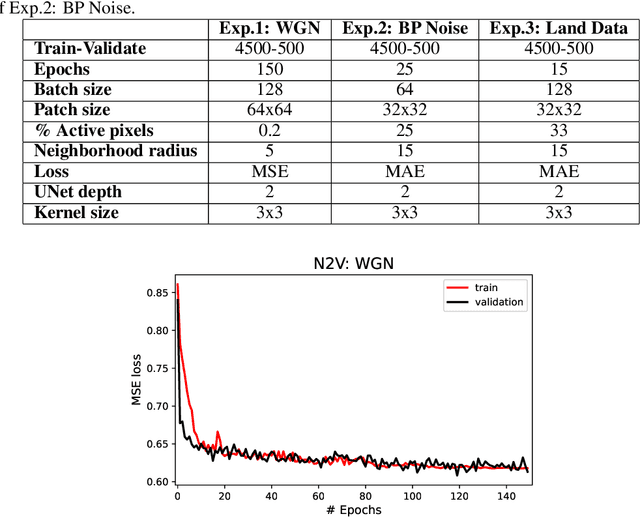

Abstract: Noise suppression is an essential step in any seismic processing workflow. A portion of this noise, particularly in land datasets, presents itself as random noise. In recent years, neural networks have been successfully used to denoise seismic data in a supervised fashion. However, supervised learning always comes with the often unachievable requirement of having noisy-clean data pairs for training. Using blind-spot networks, we redefine the denoising task as a self-supervised procedure in which the network uses the surrounding noisy samples to estimate the noise-free value of a central sample. Under the assumption that noise is statistically independent between samples, the network struggles to predict the noise component of a sample due to its randomness, whilst the signal component is accurately predicted due to its spatio-temporal coherency. On synthetic examples, the blind-spot network is shown to be an efficient denoiser of seismic data contaminated by random noise, with minimal damage to the signal. It thereby improves results both in the image domain and in down-the-line tasks, such as inversion. To conclude the study, the suggested approach is applied to field data and the results are compared with two commonly used random noise suppression techniques: FX-deconvolution and the Curvelet transform. Having demonstrated that blind-spot networks are efficient suppressors of random noise, we believe this is just the beginning of utilising self-supervised learning in seismic applications.
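The blind-spot idea can be made concrete with Noise2Void-style masking, which builds training pairs from noisy data alone. A few random samples are replaced by the values of random neighbours, and the loss is evaluated only at those samples. The number of active samples, the neighbourhood radius and the replacement strategy are illustrative assumptions, not the paper's settings.

```python
import numpy as np

def blind_spot_corrupt(patch, n_active=8, radius=2, seed=0):
    """Noise2Void-style corruption for self-supervised blind-spot training.

    Each of n_active randomly chosen samples is replaced by the value of a random
    neighbour within `radius`, so the network cannot simply copy the centre value.
    Returns the corrupted patch and a boolean mask of the replaced samples.
    """
    rng = np.random.default_rng(seed)
    h, w = patch.shape
    corrupted = patch.copy()
    mask = np.zeros(patch.shape, dtype=bool)
    for idx in rng.choice(h * w, size=n_active, replace=False):
        i, j = divmod(int(idx), w)
        while True:
            di, dj = rng.integers(-radius, radius + 1, size=2)
            ni = min(max(i + int(di), 0), h - 1)  # clip to the patch
            nj = min(max(j + int(dj), 0), w - 1)
            if (ni, nj) != (i, j):  # never reuse the sample itself
                break
        corrupted[i, j] = patch[ni, nj]  # read from the original patch
        mask[i, j] = True
    return corrupted, mask

def masked_mse(prediction, target, mask):
    """Loss evaluated only at the blind-spot samples."""
    return float(np.mean((prediction[mask] - target[mask]) ** 2))
```

In training, a network (hypothetical here) would be fed `corrupted` and optimised with `masked_mse(network(corrupted), patch, mask)`. The target is the original noisy value, which the network can only infer from neighbouring samples. Independent noise therefore cannot be reproduced, while coherent signal can.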

An introduction to distributed training of deep neural networks for segmentation tasks with large seismic datasets

Feb 25, 2021

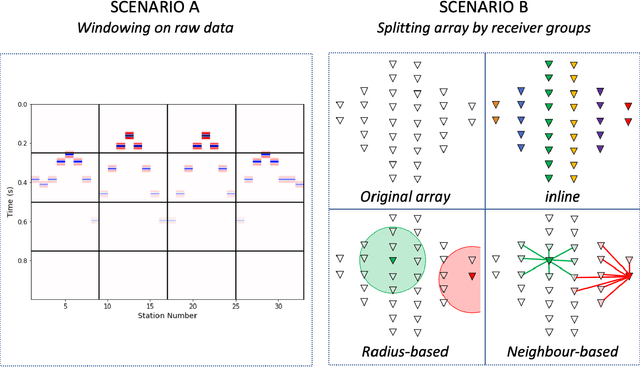

Abstract: Deep learning applications are progressing rapidly in seismic processing and interpretation tasks. However, the majority of approaches subsample data volumes and restrict model sizes to minimise computational requirements. Subsampling the data risks losing vital spatio-temporal information that could aid training, whilst restricting model sizes can impact model performance or, in some extreme cases, render more complicated tasks such as segmentation impossible. This paper illustrates how to tackle the two main issues in training large neural networks: memory limitations and impracticably long training times. Training data are typically preloaded into memory prior to training. This is a particular challenge for seismic applications, where data are typically four times larger than those used for standard image processing tasks (float32 vs. uint8). Using a microseismic use case, we illustrate how over 750 GB of data can be used to train a model with a data generator approach that only stores in memory the data required for the current training batch. Furthermore, efficient training of large models is illustrated by training a 7-layer UNet with input data dimensions of 4096 × 4096. A batch-splitting distributed training approach reduces training times by a factor of four. The combination of data generators and distributed training removes any need for data subsampling or restriction of neural network sizes. It offers the opportunity to use larger networks and higher-resolution input data, or to move from 2D to 3D problem spaces.
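The memory arithmetic and the two techniques in the abstract can be sketched in a few lines of NumPy. A float32 sample takes 4 bytes per value versus 1 byte for uint8, so a single 4096 × 4096 input occupies 64 MiB. The generator below, with a hypothetical `load_sample` callable, loads only the current batch. `data_parallel_grad` illustrates batch splitting on a toy least-squares model: each device computes the gradient on an equal shard, and the shard gradients are averaged. This is an illustration of the principle under those assumptions, not the paper's training code, which would rely on a deep learning framework's distributed tooling.

```python
import numpy as np

def batch_generator(sample_ids, batch_size, load_sample):
    """Yield one batch at a time; only batch_size samples are in memory at once.

    load_sample is a hypothetical callable, e.g. one that reads a single
    sample from disk with np.load.
    """
    for start in range(0, len(sample_ids), batch_size):
        ids = sample_ids[start:start + batch_size]
        yield np.stack([load_sample(i) for i in ids])

def bytes_per_sample(shape, dtype):
    """Memory footprint of one sample of the given shape and dtype."""
    return int(np.prod(shape)) * np.dtype(dtype).itemsize

def mse_grad(w, X, y):
    """Gradient of mean((X @ w - y) ** 2) with respect to w."""
    return 2.0 * X.T @ (X @ w - y) / len(y)

def data_parallel_grad(w, X, y, n_devices):
    """Batch splitting: each 'device' handles an equal shard; gradients are averaged.

    With equal shard sizes the average equals the full-batch gradient.
    """
    shards = zip(np.array_split(X, n_devices), np.array_split(y, n_devices))
    return np.mean([mse_grad(w, Xs, ys) for Xs, ys in shards], axis=0)
```

With equal shard sizes, the averaged gradient matches the full-batch gradient, so splitting a batch across devices does not change the optimisation step. Unequal shards would need a size-weighted average.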

Bidirectional recurrent neural networks for seismic event detection

Dec 05, 2020

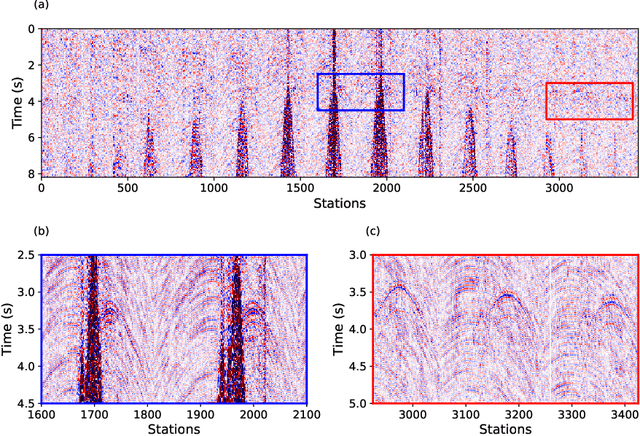

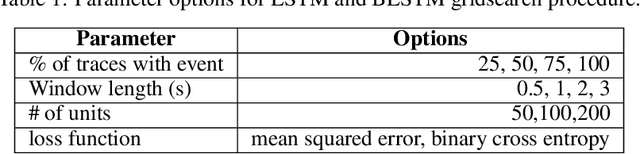

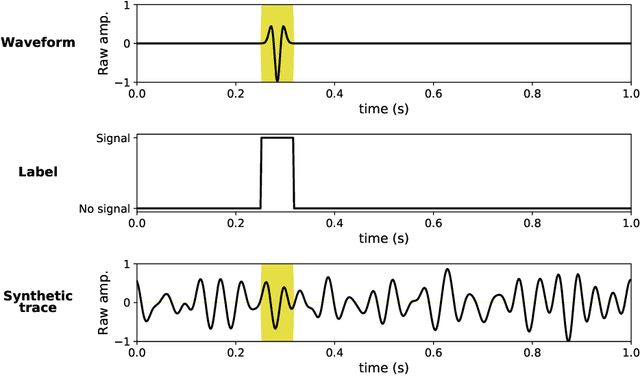

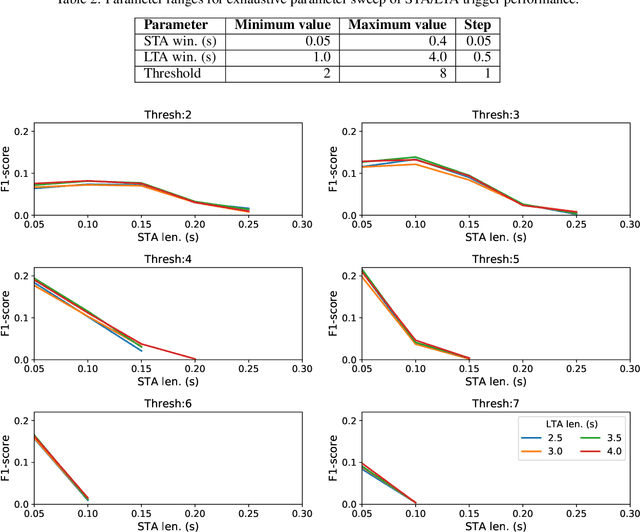

Abstract: Real-time, accurate passive seismic event detection is a critical safety measure across a range of monitoring applications, from reservoir stability to carbon storage to volcanic tremor detection. The most common detection procedure remains the Short-Term-Average to Long-Term-Average (STA/LTA) trigger, despite its common pitfalls: it requires a signal-to-noise ratio greater than one and is highly sensitive to the trigger parameters. Numerous alternatives have been proposed, but they are often tailored to a specific monitoring setting and therefore cannot be applied globally, or they are too computationally expensive to run in real time. This work introduces a deep learning approach to event detection as an alternative to the STA/LTA trigger. A bidirectional long short-term memory (LSTM) neural network is trained solely on synthetic traces. Evaluated on synthetic and field data, the neural network approach significantly outperforms the STA/LTA trigger, both in the number of correctly detected arrivals and in reducing the number of falsely detected events. Its real-time applicability is demonstrated by processing 600 traces in real time on a single processing unit.
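For reference, the STA/LTA baseline the network is compared against can be sketched as a classic energy-based variant with trailing windows. This is an assumption, since implementations differ in detail. The window lengths `n_sta` and `n_lta` and the `on`/`off` thresholds are precisely the trigger parameters to which the abstract says the method is highly sensitive.

```python
import numpy as np

def sta_lta(trace, n_sta, n_lta):
    """Classic STA/LTA ratio on signal energy, using trailing windows.

    Assumption: both windows end at the current sample (the LTA window contains
    the STA window); ratios before n_lta samples are available are left at 0.
    """
    energy = np.asarray(trace, dtype=float) ** 2
    csum = np.concatenate(([0.0], np.cumsum(energy)))  # csum[k] = sum(energy[:k])
    ratio = np.zeros(len(energy))
    for i in range(n_lta - 1, len(energy)):
        sta = (csum[i + 1] - csum[i + 1 - n_sta]) / n_sta
        lta = (csum[i + 1] - csum[i + 1 - n_lta]) / n_lta
        ratio[i] = sta / lta if lta > 0 else 0.0
    return ratio

def trigger_onsets(ratio, on, off):
    """Declare an event when the ratio exceeds `on`; re-arm once it falls below `off`."""
    onsets, active = [], False
    for i, r in enumerate(ratio):
        if not active and r > on:
            onsets.append(i)
            active = True
        elif active and r < off:
            active = False
    return onsets
```

Take a trace of random noise with a 100-sample sinusoidal arrival added at sample 600. There, `trigger_onsets(sta_lta(trace, 10, 200), on=5.0, off=1.5)` flags an onset within a few samples after sample 600. Lowering `on` towards 1 makes noise-only fluctuations of the ratio trigger as well.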