Chenmin Ba

Harbin Institute of Technology

Differentiable Self-Adaptive Learning Rate

Oct 19, 2022

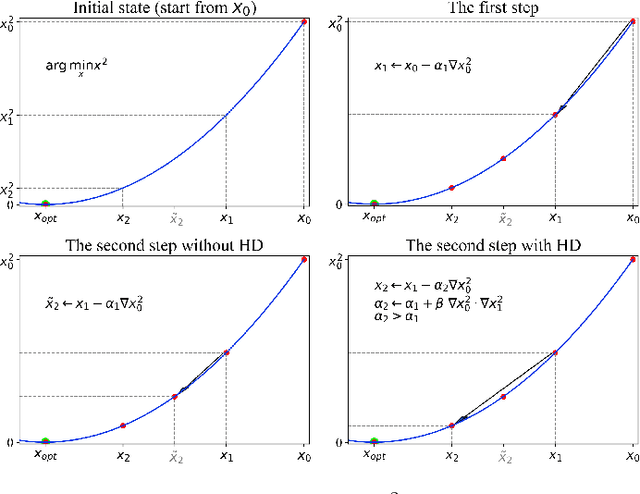

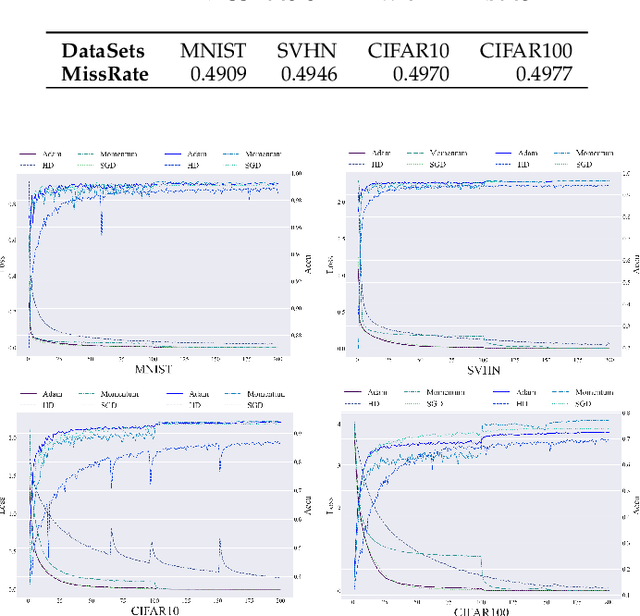

Abstract: Learning rate adaptation is a popular topic in machine learning. Gradient descent trains neural networks with a fixed learning rate. Learning rate adaptation aims to accelerate training by adjusting the step size during the training session. Well-known works include Momentum, Adam and Hypergradient. Hypergradient is the most distinctive of these: it achieves adaptation by calculating the derivative of the cost function with respect to the learning rate and applying gradient descent to the learning rate itself. However, Hypergradient is still not perfect. In practice, Hypergradient fails to decrease the training loss after learning rate adaptation with high probability. In addition, evidence has been found that Hypergradient is not suitable for large datasets trained with minibatches. Most unfortunately, Hypergradient always fails to reach good accuracy on the validation dataset, even though it can reduce the training loss to a very small value. To solve Hypergradient's problems, we propose a novel adaptation algorithm in which the learning rate is parameter-specific and internally structured. We conduct extensive experiments on multiple network models and datasets, comparing against various benchmark optimizers. The results show that our algorithm achieves faster and higher-quality convergence than those state-of-the-art optimizers.
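As a rough illustration of the Hypergradient baseline that the abstract describes (not the algorithm this paper proposes), the sketch below applies the commonly cited hypergradient rule for plain SGD to a toy quadratic loss. The loss, the function names, and the values of `alpha` and `beta` are assumptions chosen for the example. Because the previous update was `theta = theta - alpha * prev_grad`, the derivative of the current loss with respect to `alpha` is `-(g . prev_grad)`, so a descent step on `alpha` adds `beta * (g . prev_grad)`.

```python
def loss(theta, curvature):
    """Toy quadratic loss 0.5 * sum(c_i * theta_i^2); assumed for illustration."""
    return 0.5 * sum(c * t * t for c, t in zip(curvature, theta))


def grad(theta, curvature):
    """Gradient of the toy quadratic loss with respect to theta."""
    return [c * t for c, t in zip(curvature, theta)]


def hypergradient_sgd(theta, curvature, alpha=0.01, beta=1e-3, steps=100):
    """SGD whose single scalar learning rate is itself updated by gradient descent."""
    prev_grad = [0.0] * len(theta)  # no previous step yet, so alpha is unchanged at first
    for _ in range(steps):
        g = grad(theta, curvature)
        # dL/dalpha = -(g . prev_grad); descending on alpha therefore adds the dot product.
        alpha += beta * sum(a * b for a, b in zip(g, prev_grad))
        # Ordinary gradient step on the parameters with the adapted learning rate.
        theta = [t - alpha * gi for t, gi in zip(theta, g)]
        prev_grad = g
    return theta, alpha


if __name__ == "__main__":
    curvature = [1.0, 3.0]
    theta0 = [1.0, -2.0]
    theta, alpha = hypergradient_sgd(theta0, curvature)
    print("initial loss:", loss(theta0, curvature))
    print("final loss:", loss(theta, curvature))
    print("adapted learning rate:", alpha)
```

The sketch uses one global learning rate and full gradients on a deterministic problem. The issues the abstract raises concern minibatch training and validation accuracy, which this toy setting does not exercise.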

Automatic Hyper-Parameter Optimization Based on Mapping Discovery from Data to Hyper-Parameters

Mar 03, 2020

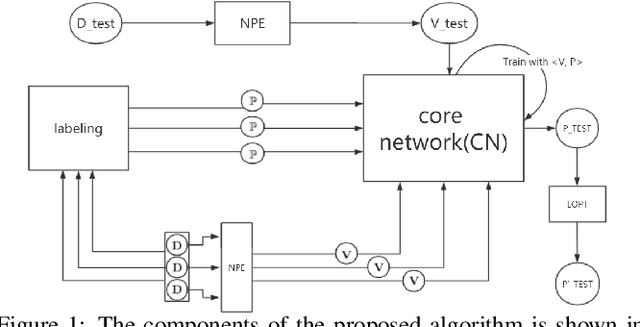

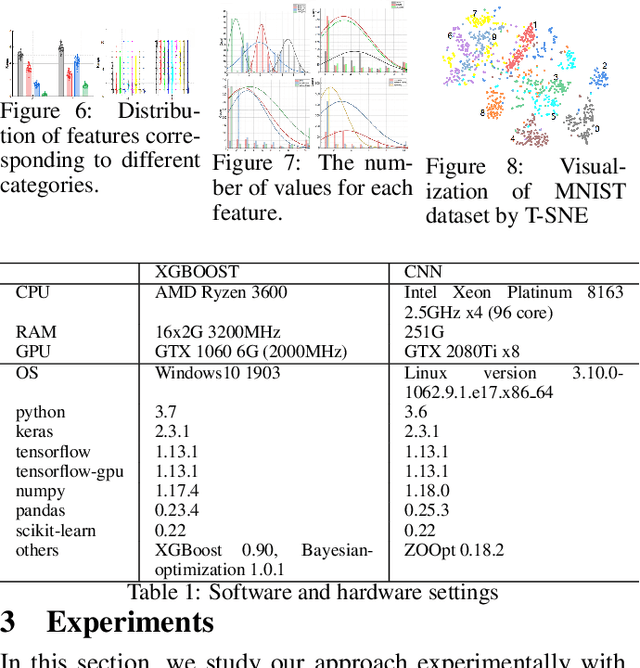

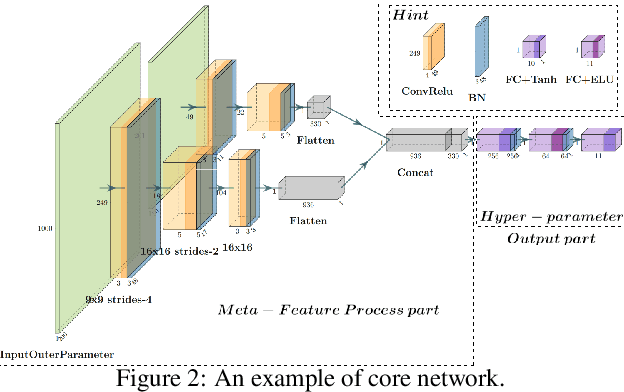

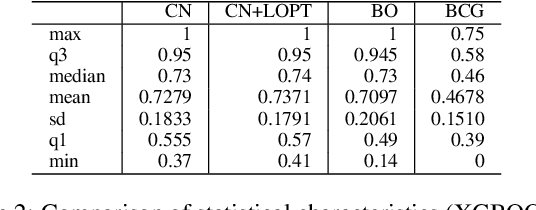

Abstract: Machine learning algorithms have made remarkable achievements in the field of artificial intelligence. However, most machine learning algorithms are sensitive to their hyper-parameters. Manual tuning is a common way to optimize hyper-parameters, but it is costly and depends on experience. Automatic hyper-parameter optimization (autoHPO) is favored due to its effectiveness. However, current autoHPO methods are usually effective only for a certain type of problem, and their time cost is high. In this paper, we propose an efficient automatic hyper-parameter optimization approach based on the mapping from data to the corresponding hyper-parameters. To describe this mapping, we propose a sophisticated network structure. To obtain this mapping, we develop effective network construction algorithms. We also design a strategy to further optimize the result while the mapping is being applied. Extensive experimental results demonstrate that the proposed approaches significantly outperform the state-of-the-art approaches.
