Chengyu Sun

Labeled Subgraph Entropy Kernel

Mar 21, 2023

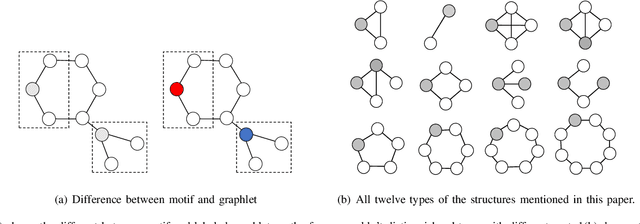

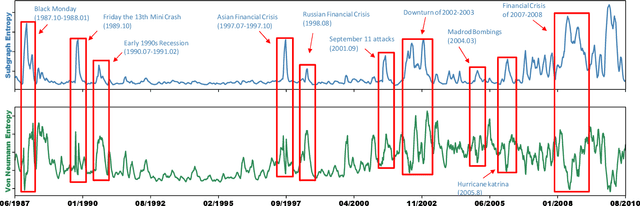

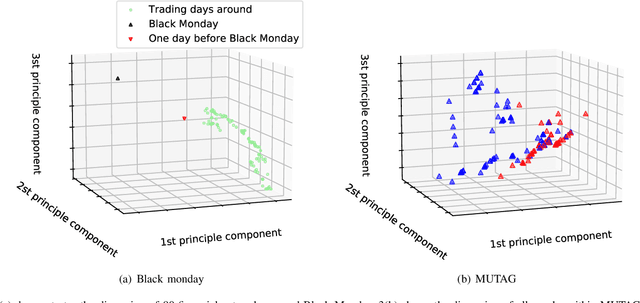

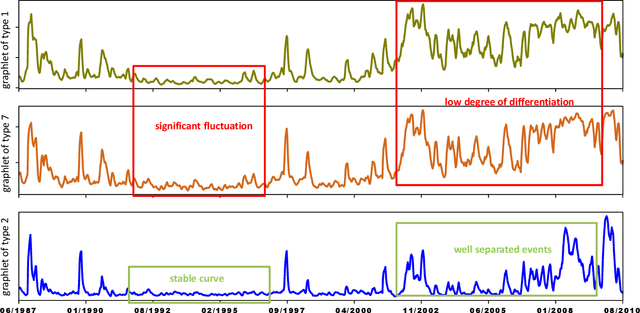

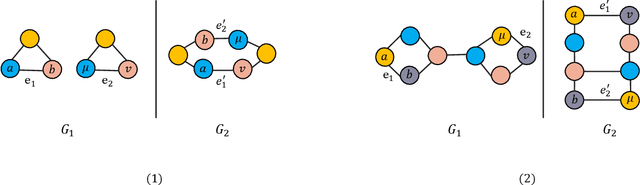

Abstract: In recent years, kernel methods have become widespread in similarity measurement tasks. In particular, graph kernels are widely used in bioinformatics, chemistry, and financial data analysis. However, existing methods, especially entropy-based graph kernels, suffer from high computational complexity and neglect node-level information. In this paper, we propose a novel labeled subgraph entropy graph kernel, which performs well in structural similarity assessment. We design a dynamic programming subgraph enumeration algorithm, which effectively reduces the time complexity. In particular, we propose the labeled subgraph, which enriches substructure topology with semantic information. By analogy with the cluster expansion of a gas in statistical mechanics, we re-derive the partition function and calculate the global graph entropy to characterize the network. To test our method, we apply it to several real-world datasets and assess its performance on different tasks. To provide further experimental detail, we quantitatively and qualitatively analyze the contribution of different topological structures. Experimental results demonstrate the effectiveness of our method, which outperforms several state-of-the-art methods.

HAT-GAE: Self-Supervised Graph Auto-encoders with Hierarchical Adaptive Masking and Trainable Corruption

Jan 28, 2023

Abstract: Self-supervised auto-encoders have emerged as a successful framework for representation learning in computer vision and natural language processing in recent years. However, their application to graph data has achieved only limited performance, owing to the non-Euclidean and complex structure of graphs compared with images or text, as well as the limitations of conventional auto-encoder architectures. In this paper, we investigate factors impacting the performance of auto-encoders on graph data and propose a novel auto-encoder model for graph representation learning. Our model incorporates a hierarchical adaptive masking mechanism that incrementally increases the difficulty of training, mimicking the process of human cognitive learning, and a trainable corruption scheme that enhances the robustness of the learned representations. Through extensive experimentation on ten benchmark datasets, we demonstrate the superiority of our proposed method over state-of-the-art graph representation learning models.

Two-level Graph Neural Network

Jan 03, 2022

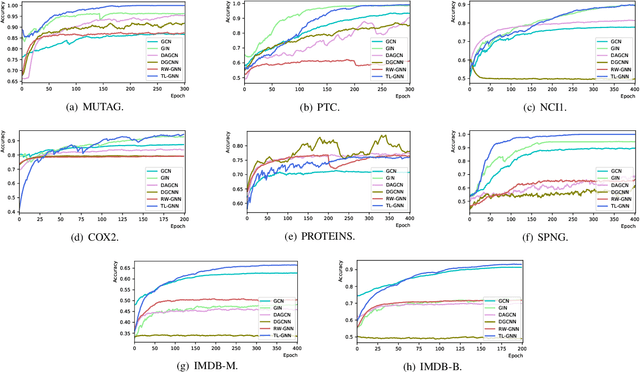

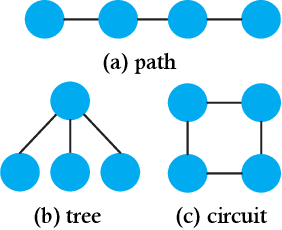

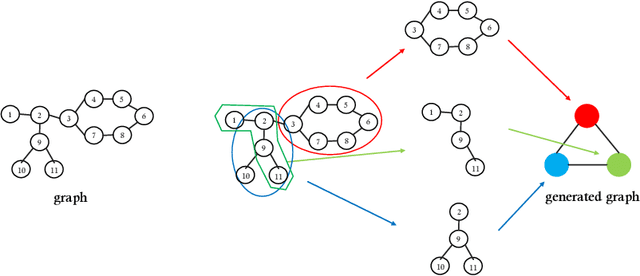

Abstract: Graph Neural Networks (GNNs) are recently proposed neural network structures for processing graph-structured data. Because they rely on a neighbor aggregation strategy, existing GNNs focus on capturing node-level information and neglect high-level information. Existing GNNs therefore suffer from representational limitations caused by the Local Permutation Invariance (LPI) problem. To overcome these limitations and enrich the features captured by GNNs, we propose a novel GNN framework, referred to as the Two-level GNN (TL-GNN), which merges subgraph-level information with node-level information. Moreover, we provide a mathematical analysis of the LPI problem, which demonstrates that subgraph-level information helps overcome the problems associated with LPI. We also propose a subgraph counting method based on dynamic programming, which has time complexity O(n^3), where n is the number of nodes in the graph. Experiments show that TL-GNN outperforms existing GNNs and achieves state-of-the-art performance.
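The abstract does not describe the dynamic programming procedure itself, so the following is only a rough illustration of what cubic-time subgraph counting can look like. It counts triangles by checking every node triple of an undirected graph given as an adjacency matrix. This is a plain brute-force stand-in, not the TL-GNN counting method, and the function name `count_triangles` is hypothetical.

```python
from typing import List


def count_triangles(adj: List[List[int]]) -> int:
    """Count triangles in an undirected simple graph.

    `adj` is a symmetric 0/1 adjacency matrix. The three nested loops
    visit each unordered node triple (i < j < k) once, so the running
    time is O(n^3) in the number of nodes n.

    Illustrative assumption: this is NOT the dynamic programming
    algorithm from the paper, only a simple cubic-time example.
    """
    n = len(adj)
    count = 0
    for i in range(n):
        for j in range(i + 1, n):
            if not adj[i][j]:
                continue  # no edge i-j, so no triangle through this pair
            for k in range(j + 1, n):
                if adj[i][k] and adj[j][k]:
                    count += 1
    return count


# Usage: the complete graph on four nodes.
k4 = [
    [0, 1, 1, 1],
    [1, 0, 1, 1],
    [1, 1, 0, 1],
    [1, 1, 1, 0],
]
print(count_triangles(k4))
```

Per-node or per-subgraph counts of this kind are one example of the subgraph-level information that the abstract says is merged with node-level features.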
