Chedy Raissi

CharNeRF: 3D Character Generation from Concept Art

Feb 27, 2024Abstract:3D modeling holds significant importance in the realms of AR/VR and gaming, allowing for both artistic creativity and practical applications. However, the process is often time-consuming and demands a high level of skill. In this paper, we present a novel approach to create volumetric representations of 3D characters from consistent turnaround concept art, which serves as the standard input in the 3D modeling industry. While Neural Radiance Field (NeRF) has been a game-changer in image-based 3D reconstruction, to the best of our knowledge, there is no known research that optimizes the pipeline for concept art. To harness the potential of concept art, with its defined body poses and specific view angles, we propose encoding it as priors for our model. We train the network to make use of these priors for various 3D points through a learnable view-direction-attended multi-head self-attention layer. Additionally, we demonstrate that a combination of ray sampling and surface sampling enhances the inference capabilities of our network. Our model is able to generate high-quality 360-degree views of characters. Subsequently, we provide a simple guideline to better leverage our model to extract the 3D mesh. It is important to note that our model's inferencing capabilities are influenced by the training data's characteristics, primarily focusing on characters with a single head, two arms, and two legs. Nevertheless, our methodology remains versatile and adaptable to concept art from diverse subject matters, without imposing any specific assumptions on the data.

DeepLSH: Deep Locality-Sensitive Hash Learning for Fast and Efficient Near-Duplicate Crash Report Detection

Oct 10, 2023Abstract:Automatic crash bucketing is a crucial phase in the software development process for efficiently triaging bug reports. It generally consists in grouping similar reports through clustering techniques. However, with real-time streaming bug collection, systems are needed to quickly answer the question: What are the most similar bugs to a new one?, that is, efficiently find near-duplicates. It is thus natural to consider nearest neighbors search to tackle this problem and especially the well-known locality-sensitive hashing (LSH) to deal with large datasets due to its sublinear performance and theoretical guarantees on the similarity search accuracy. Surprisingly, LSH has not been considered in the crash bucketing literature. It is indeed not trivial to derive hash functions that satisfy the so-called locality-sensitive property for the most advanced crash bucketing metrics. Consequently, we study in this paper how to leverage LSH for this task. To be able to consider the most relevant metrics used in the literature, we introduce DeepLSH, a Siamese DNN architecture with an original loss function, that perfectly approximates the locality-sensitivity property even for Jaccard and Cosine metrics for which exact LSH solutions exist. We support this claim with a series of experiments on an original dataset, which we make available.

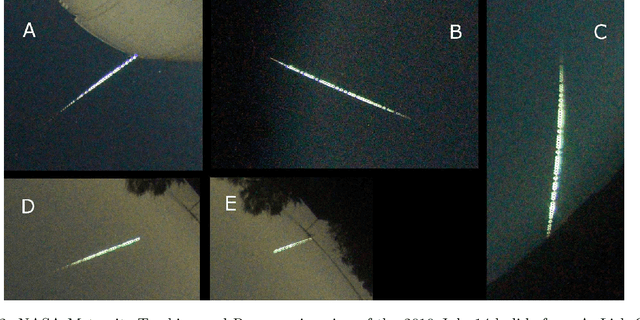

Recovery of Meteorites Using an Autonomous Drone and Machine Learning

Jun 11, 2021

Abstract:The recovery of freshly fallen meteorites from tracked and triangulated meteors is critical to determining their source asteroid families. However, locating meteorite fragments in strewn fields remains a challenge with very few meteorites being recovered from the meteors triangulated in past and ongoing meteor camera networks. We examined if locating meteorites can be automated using machine learning and an autonomous drone. Drones can be programmed to fly a grid search pattern and take systematic pictures of the ground over a large survey area. Those images can be analyzed using a machine learning classifier to identify meteorites in the field among many other features. Here, we describe a proof-of-concept meteorite classifier that deploys off-line a combination of different convolution neural networks to recognize meteorites from images taken by drones in the field. The system was implemented in a conceptual drone setup and tested in the suspected strewn field of a recent meteorite fall near Walker Lake, Nevada.

* 16 pages, 9 Figures

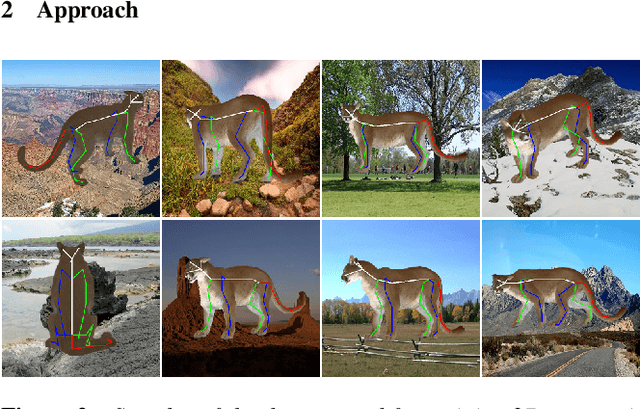

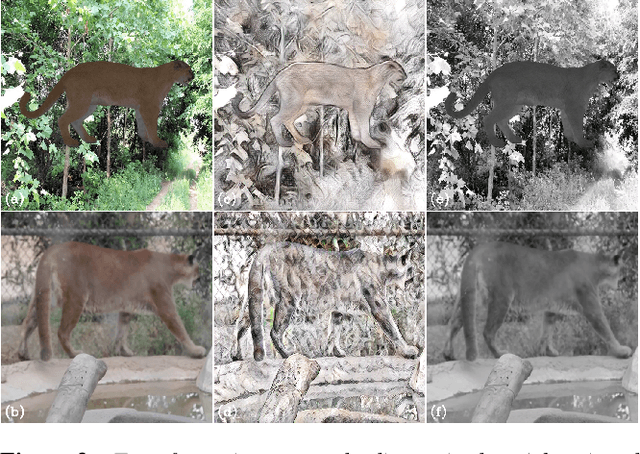

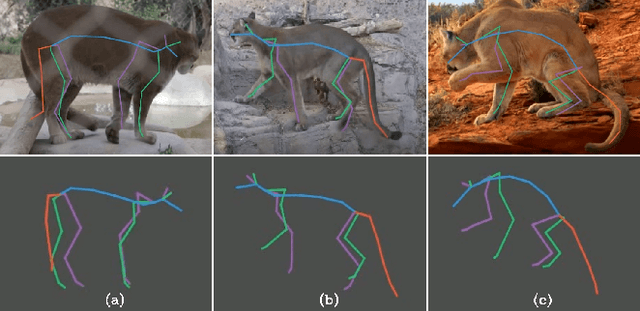

ZooBuilder: 2D and 3D Pose Estimation for Quadrupeds Using Synthetic Data

Sep 01, 2020

Abstract:This work introduces a novel strategy for generating synthetic training data for 2D and 3D pose estimation of animals using keyframe animations. With the objective to automate the process of creating animations for wildlife, we train several 2D and 3D pose estimation models with synthetic data, and put in place an end-to-end pipeline called ZooBuilder. The pipeline takes as input a video of an animal in the wild, and generates the corresponding 2D and 3D coordinates for each joint of the animal's skeleton. With this approach, we produce motion capture data that can be used to create animations for wildlife.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge