Alexis Rolland

Regularized Contrastive Learning of Semantic Search

Sep 27, 2022

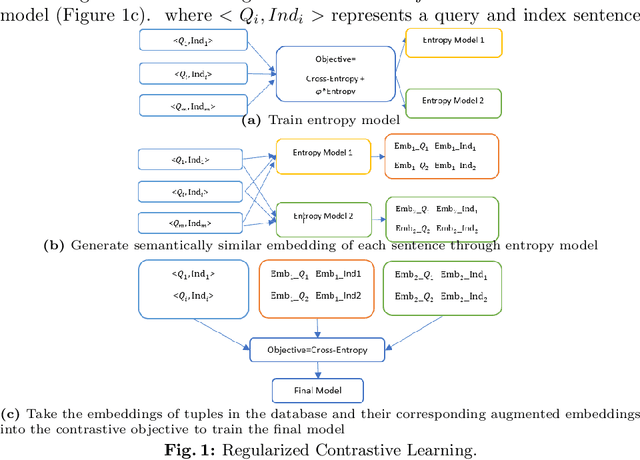

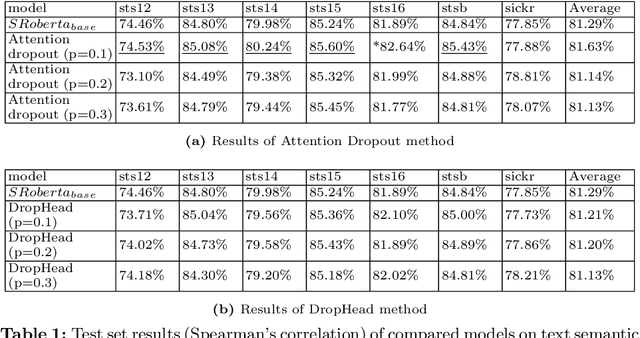

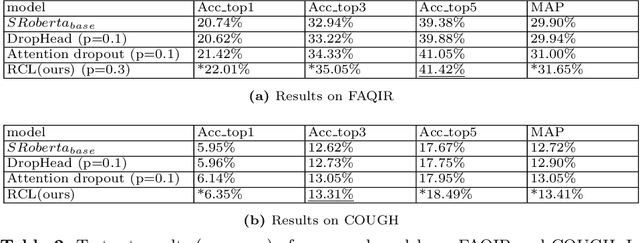

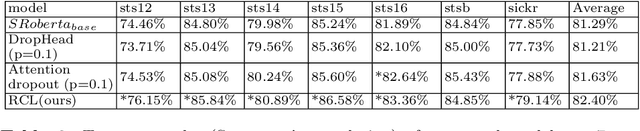

Abstract: Semantic search is an important task whose objective is to find the relevant index in a database for a given query. It requires a retrieval model that can properly learn the semantics of sentences. Transformer-based models are widely used as retrieval models due to their excellent ability to learn semantic representations. In the meantime, many regularization methods suitable for them have also been proposed. In this paper, we propose a new regularization method, Regularized Contrastive Learning, which can help transformer-based models learn better sentence representations. It first augments every sentence with several different semantic representations, then uses them in the contrastive objective as regulators. These contrastive regulators can overcome overfitting issues and alleviate the anisotropy problem. We first evaluate our approach on 7 semantic search benchmarks with the high-performing pre-trained model SRoBERTA. The results show that our method is more effective at learning a superior sentence representation. We then evaluate our approach on 2 challenging FAQ datasets, Cough and Faqir, which have long queries and indexes. The results of our experiments demonstrate that our method outperforms baseline methods.

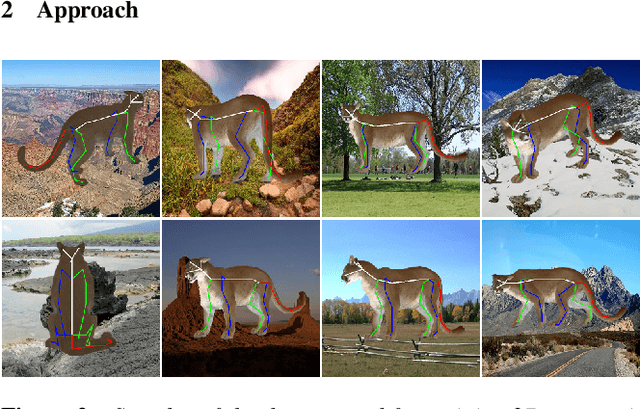

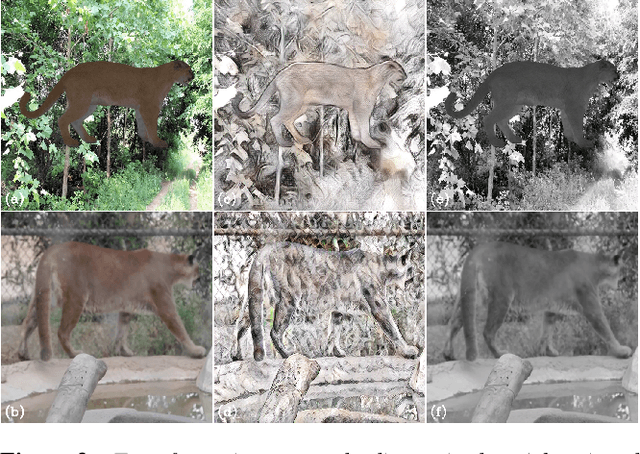

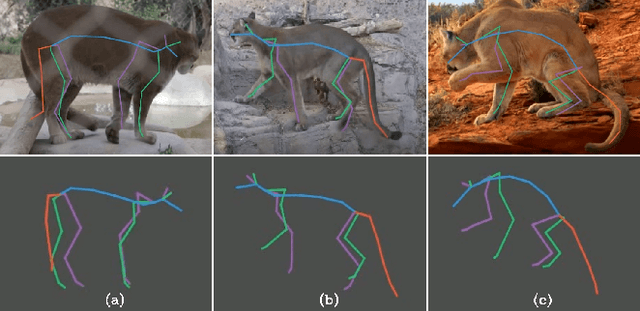

ZooBuilder: 2D and 3D Pose Estimation for Quadrupeds Using Synthetic Data

Sep 01, 2020

Abstract: This work introduces a novel strategy for generating synthetic training data for 2D and 3D pose estimation of animals using keyframe animations. With the objective of automating the creation of wildlife animations, we train several 2D and 3D pose estimation models on synthetic data and put in place an end-to-end pipeline called ZooBuilder. The pipeline takes as input a video of an animal in the wild and generates the corresponding 2D and 3D coordinates for each joint of the animal's skeleton. With this approach, we produce motion capture data that can be used to create animations for wildlife.
