Charlotte Siegmann

Black-Box Access is Insufficient for Rigorous AI Audits

Jan 25, 2024

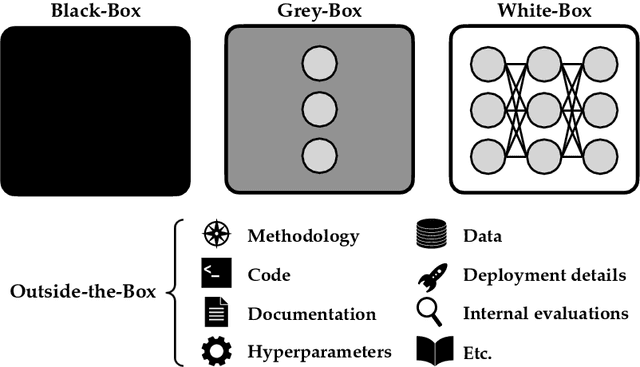

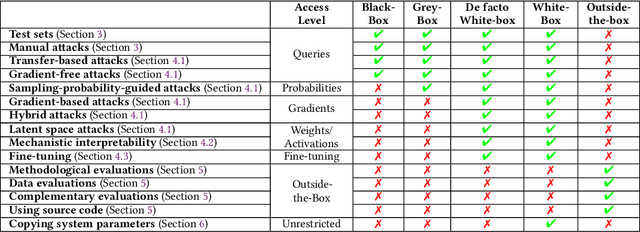

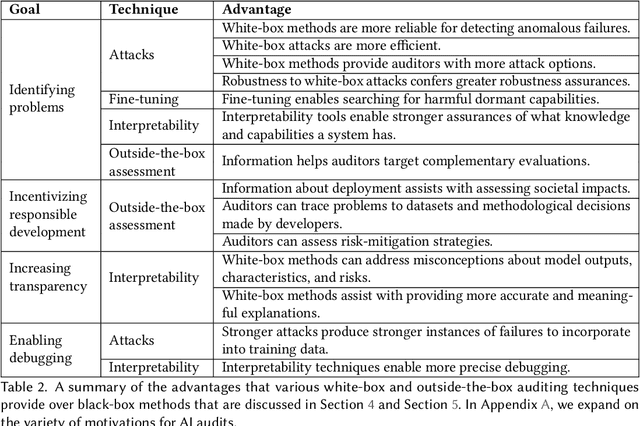

Abstract: External audits of AI systems are increasingly recognized as a key mechanism for AI governance. The effectiveness of an audit, however, depends on the degree of system access granted to auditors. Recent audits of state-of-the-art AI systems have primarily relied on black-box access, in which auditors can only query the system and observe its outputs. However, white-box access to the system's inner workings (e.g., weights, activations, gradients) allows an auditor to perform stronger attacks, more thoroughly interpret models, and conduct fine-tuning. Meanwhile, outside-the-box access to its training and deployment information (e.g., methodology, code, documentation, hyperparameters, data, deployment details, findings from internal evaluations) allows for auditors to scrutinize the development process and design more targeted evaluations. In this paper, we examine the limitations of black-box audits and the advantages of white- and outside-the-box audits. We also discuss technical, physical, and legal safeguards for performing these audits with minimal security risks. Given that different forms of access can lead to very different levels of evaluation, we conclude that (1) transparency regarding the access and methods used by auditors is necessary to properly interpret audit results, and (2) white- and outside-the-box access allow for substantially more scrutiny than black-box access alone.

The Brussels Effect and Artificial Intelligence: How EU regulation will impact the global AI market

Aug 23, 2022

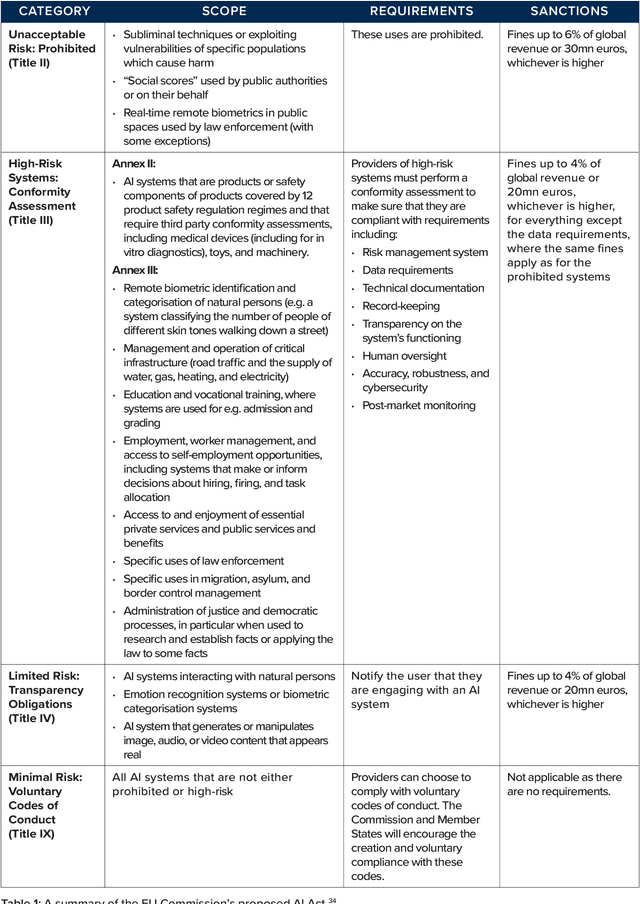

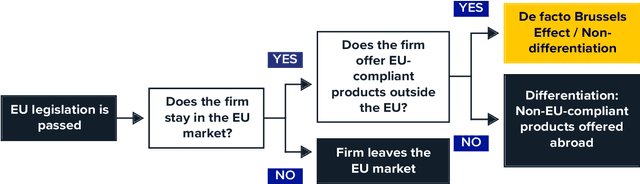

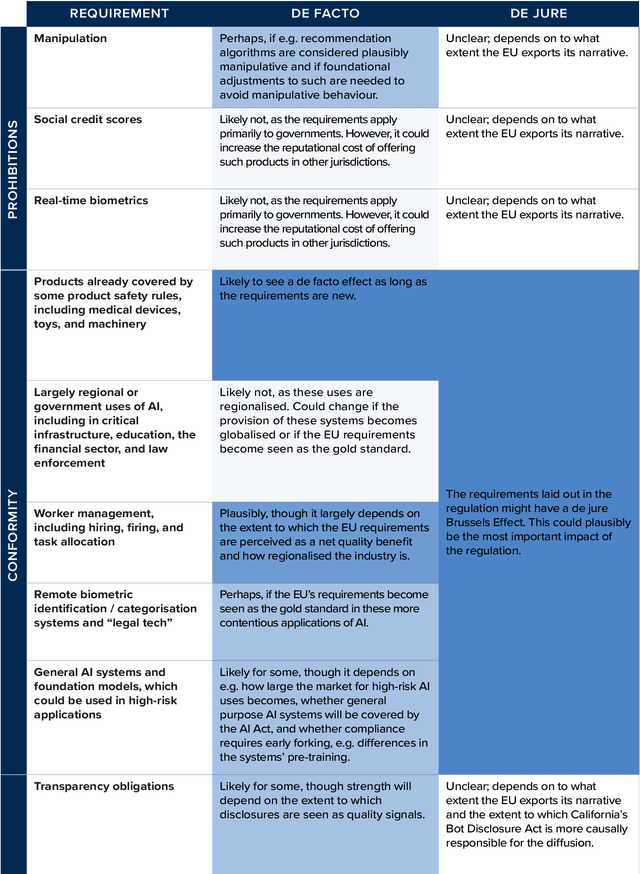

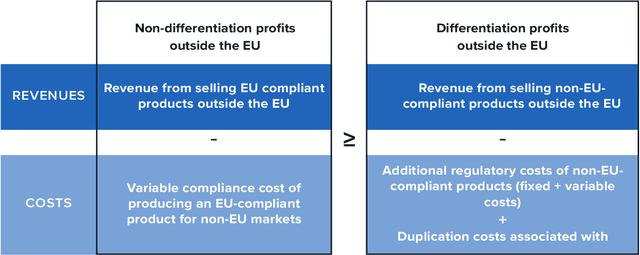

Abstract: The European Union is likely to introduce among the first, most stringent, and most comprehensive AI regulatory regimes of the world's major jurisdictions. In this report, we ask whether the EU's upcoming regulation for AI will diffuse globally, producing a so-called "Brussels Effect". Building on and extending Anu Bradford's work, we outline the mechanisms by which such regulatory diffusion may occur. We consider both the possibility that the EU's AI regulation will incentivise changes in products offered in non-EU countries (a de facto Brussels Effect) and the possibility it will influence regulation adopted by other jurisdictions (a de jure Brussels Effect). Focusing on the proposed EU AI Act, we tentatively conclude that both de facto and de jure Brussels effects are likely for parts of the EU regulatory regime. A de facto effect is particularly likely to arise in large US tech companies with AI systems that the AI Act terms "high-risk". We argue that the upcoming regulation might be particularly important in offering the first and most influential operationalisation of what it means to develop and deploy trustworthy or human-centred AI. If the EU regime is likely to see significant diffusion, ensuring it is well-designed becomes a matter of global importance.
