Brian Crafton

Hardware-aware Pruning of DNNs using LFSR-Generated Pseudo-Random Indices

Nov 09, 2019

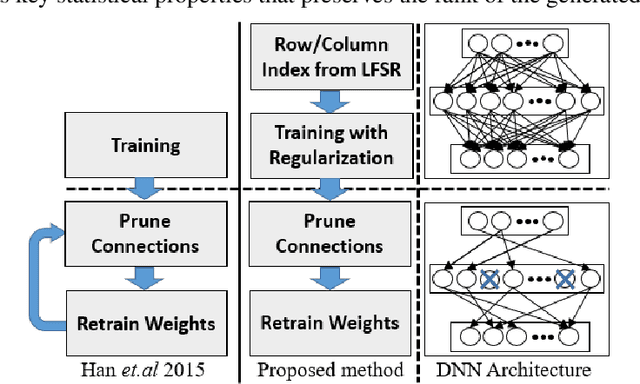

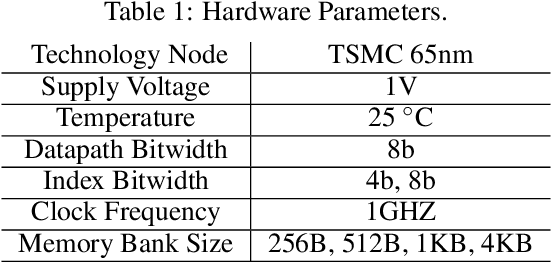

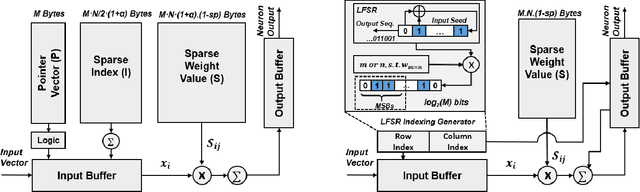

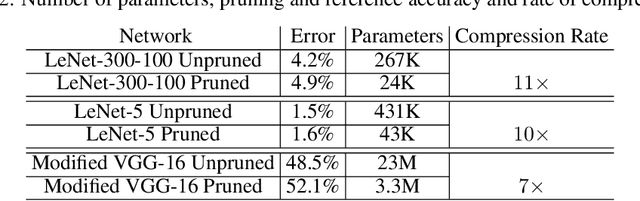

Abstract:Deep neural networks (DNNs) have been emerged as the state-of-the-art algorithms in broad range of applications. To reduce the memory foot-print of DNNs, in particular for embedded applications, sparsification techniques have been proposed. Unfortunately, these techniques come with a large hardware overhead. In this paper, we present a hardware-aware pruning method where the locations of non-zero weights are derived in real-time from a Linear Feedback Shift Registers (LFSRs). Using the proposed method, we demonstrate a total saving of energy and area up to 63.96% and 64.23% for VGG-16 network on down-sampled ImageNet, respectively for iso-compression-rate and iso-accuracy.

Direct Feedback Alignment with Sparse Connections for Local Learning

Jan 30, 2019

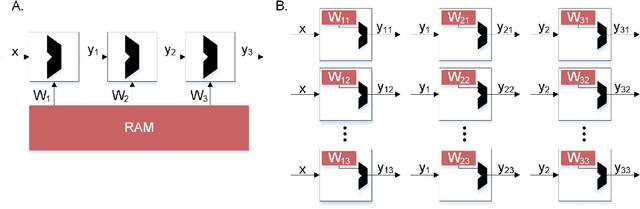

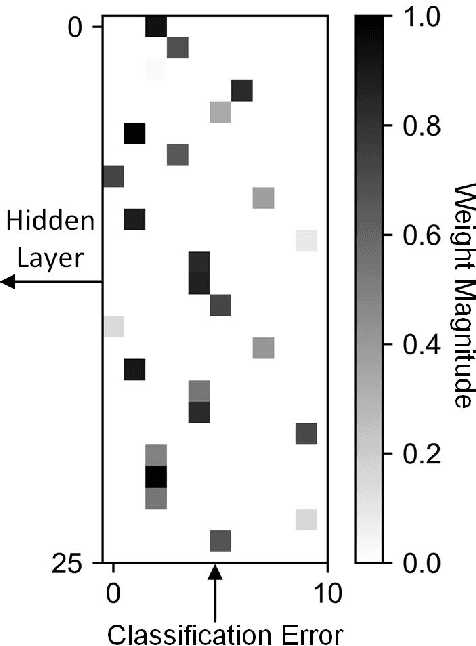

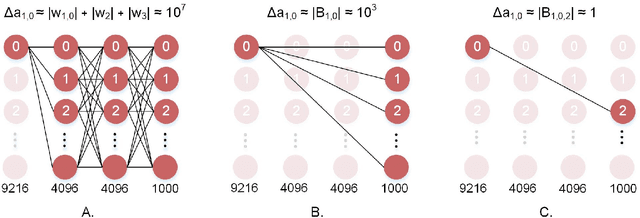

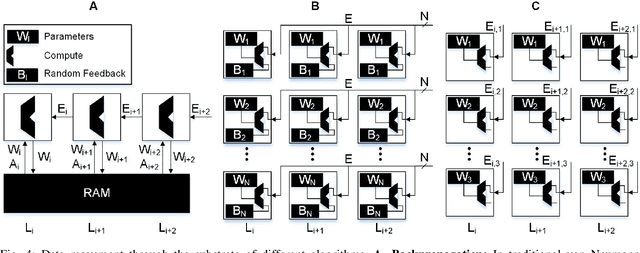

Abstract:Recent advances in deep neural networks (DNNs) owe their success to training algorithms that use backpropagation and gradient-descent. Backpropagation, while highly effective on von Neumann architectures, becomes inefficient when scaling to large networks. Commonly referred to as the weight transport problem, each neuron's dependence on the weights and errors located deeper in the network require exhaustive data movement which presents a key problem in enhancing the performance and energy-efficiency of machine-learning hardware. In this work, we propose a bio-plausible alternative to backpropagation drawing from advances in feedback alignment algorithms in which the error computation at a single synapse reduces to the product of three scalar values, satisfying the three factor rule. Using a sparse feedback matrix, we show that a neuron needs only a fraction of the information previously used by the feedback alignment algorithms to yield results which are competitive with backpropagation. Consequently, memory and compute can be partitioned and distributed whichever way produces the most efficient forward pass so long as a single error can be delivered to each neuron. We evaluate our algorithm using standard data sets, including ImageNet, to address the concern of scaling to challenging problems. Our results show orders of magnitude improvement in data movement and 2x improvement in multiply-and-accumulate operations over backpropagation. All the code and results are available under https://github.com/bcrafton/ssdfa.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge