Boyu Gao

Enhancing Deep Knowledge Tracing with Auxiliary Tasks

Feb 14, 2023

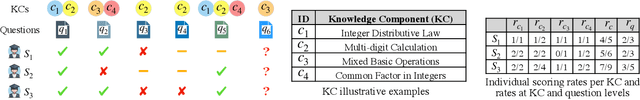

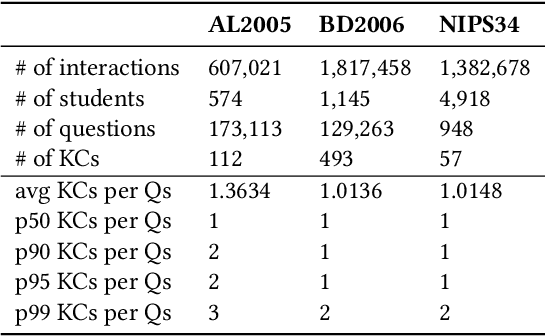

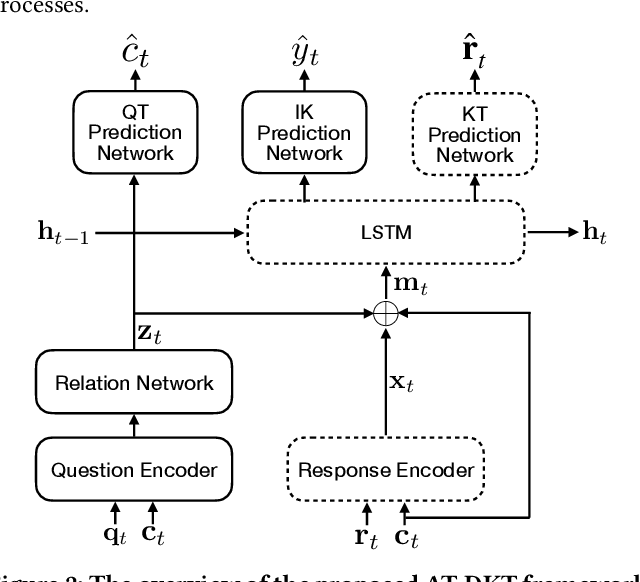

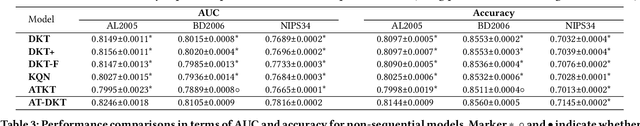

Abstract: Knowledge tracing (KT) is the problem of predicting students' future performance based on their historical interactions with intelligent tutoring systems. Recent studies have applied multiple types of deep neural networks to solve the KT problem. However, two important factors in real-world educational data are not well represented. First, most existing works augment input representations with the co-occurrence matrix of questions and knowledge components (KCs; a KC is a generalization of everyday terms like concept, principle, fact, or skill) but fail to explicitly integrate such intrinsic relations into the final response prediction task. Second, students' individualized historical performance has not been well captured. In this paper, we propose *AT-DKT*, which improves the prediction performance of the original deep knowledge tracing model with two auxiliary learning tasks: a *question tagging (QT) prediction task* and an *individualized prior knowledge (IK) prediction task*. Specifically, the QT task helps learn better question representations by predicting whether questions contain specific KCs. The IK task captures students' global historical performance by progressively predicting student-level prior knowledge that is hidden in students' historical learning interactions. We conduct comprehensive experiments on three real-world educational datasets and compare the proposed approach with both deep sequential KT models and non-sequential models. Experimental results show that *AT-DKT* outperforms all sequential models, with AUC improvements of more than 0.9% on all datasets, and is nearly the second best when compared with non-sequential models. Furthermore, we conduct both ablation studies and quantitative analysis to show the effectiveness of the auxiliary tasks and the superior prediction outcomes of *AT-DKT*.
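The abstract does not spell out how the two auxiliary tasks are trained alongside the main task. The sketch below is a minimal, hypothetical illustration of one common multi-task setup, assuming: the main response-prediction loss is binary cross-entropy; the QT task is a multi-label binary cross-entropy over a multi-hot vector of each question's KCs; the IK task regresses a per-step prior-knowledge label with mean squared error, where we *assume* that label is the student's running accuracy; and the three losses are summed with hypothetical weights `lambda_qt` and `lambda_ik`. The names `bce`, `ik_targets`, and `at_dkt_loss` are illustrative only and are not the authors' code. Model outputs are passed in as plain probabilities so the example runs without a deep learning framework.

```python
import math
from typing import List, Sequence


def bce(p: float, y: float, eps: float = 1e-7) -> float:
    """Binary cross-entropy for one predicted probability p and 0/1 label y."""
    p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
    return -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))


def ik_targets(responses: Sequence[int]) -> List[float]:
    """Assumed IK label: the student's running accuracy after each interaction."""
    targets: List[float] = []
    correct = 0
    for t, r in enumerate(responses, start=1):
        correct += r
        targets.append(correct / t)
    return targets


def at_dkt_loss(
    resp_probs: Sequence[float],            # main KT task: predicted P(correct) per step
    responses: Sequence[int],               # observed 0/1 correctness per step
    kc_probs: Sequence[Sequence[float]],    # QT task: predicted P(question has KC k) per step
    kc_multi_hot: Sequence[Sequence[int]],  # QT labels: multi-hot KC tags of each question
    ik_preds: Sequence[float],              # IK task: predicted prior knowledge per step
    lambda_qt: float = 1.0,                 # hypothetical weight of the QT loss
    lambda_ik: float = 1.0,                 # hypothetical weight of the IK loss
) -> float:
    n = len(responses)
    # Main response-prediction loss, averaged over steps.
    l_kt = sum(bce(p, y) for p, y in zip(resp_probs, responses)) / n
    # QT loss: multi-label BCE averaged over every (step, KC) pair.
    qt_terms = [
        bce(p, y)
        for probs, tags in zip(kc_probs, kc_multi_hot)
        for p, y in zip(probs, tags)
    ]
    l_qt = sum(qt_terms) / len(qt_terms)
    # IK loss: mean squared error against the assumed running-accuracy labels.
    l_ik = sum((p - y) ** 2 for p, y in zip(ik_preds, ik_targets(responses))) / n
    return l_kt + lambda_qt * l_qt + lambda_ik * l_ik
```

Under these assumptions, predictions that exactly match every label give a loss close to zero, and setting both `lambda_qt` and `lambda_ik` to zero reduces the objective to the plain DKT response-prediction loss.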
